SORTING

210 likes | 402 Views

SORTING. Dan Barrish-Flood. heapsort. made file “3-Sorting-Intro-Heapsort.ppt”. Quicksort. Worst-case running time is Θ(n 2 ) on an input array of n numbers. Expected running time is Θ( n lg n ). Constants hidden by Θ are quite small. Sorts in place.

SORTING

E N D

Presentation Transcript

SORTING • Dan Barrish-Flood

heapsort • made file “3-Sorting-Intro-Heapsort.ppt”

Quicksort • Worst-case running time is Θ(n2) on an input array of n numbers. • Expected running time is Θ(nlgn). • Constants hidden by Θ are quite small. • Sorts in place. • Probably best sorting algorithm for large input arrays. Maybe.

How does Quicksort work? • based on “divide and conquer” paradigm (so is merge sort). • Divide: Partition (re-arrange) the array A[p..r] into two (possibly empty) sub-arrays A[p .. q-1] and A[q+1 .. r] such that each element of A[p .. q-1] is ≤ each element of A[q], which is, in turn, ≤ each element of A[q+1 .. r]. Compute the index q as part of this partitioning procedure. • Conquer: Sort the two sub-arrays A[p .. q-1] and A[q+1 .. r] by recursive calls to quicksort. • Combine: No combining needed; the entire array A[p .. r] is now sorted!

Quicksort Running Time, worse-case • worst-case occurs when partition yields one subproblem of size n-1 and one of size 0. Assume this “bad split” occurs at each recursive call. • partition costs Θ(n). Recursive call to QS on array of size 0 just returns, so T(0) = 1, so we get: • T(n) = T(n-1) + T(0) + Θ(n), same as... • T(n) = T(n-1) + n • just an arithmetic series! So... • T(n) = Θ(n2) (worst-case) • Under what circumstances do you suppose we get this worst-case behavior?

Quicksort, best-case • In the most even possible split, PARTITION yields two subproblems each of size no more than n/2, since one is of size floor(n/2), and one is [ceiling(n/2)]-1. We get this recurrence, with some OK sloppiness: • T(n) = 2T(n/2) + n (look familiar?) • T(n) = O(nlgn) • This is asymptotically superior to worst-case, but this ideal scenario is not likely...

Quicksort, Average-case • suppose the great and awful splits alternate levels in the tree. • the running time for QS, when levels alternate between great and awful splits, is just the same as when all levels yield great splits! (with a slightly larger constant hidden by the big-oh notation). So, average case... • T(n) = O(nlgn)

A lower bound for sorting (Sorting, part 2) • We will show that any sorting algorithm based only on comparison of the input values must run in Ω(nlgn) time.

Decision Trees • Tree of comparisons made by a sorting algorithm. • Each comparison reduces the number of possible orderings. • Eventually, only one must remain. • A decision tree is a “full” (not “complete”) binary tree; each node is a leaf or has degree 2.

Q. How many leaves does a decision tree have? • A. There is one leaf for each permutation of n elements. There are n! permuatations. • Q. What is the height of the tree? • A. # of leaves = n! ≤ 2h • Note the height is the worst-case number of comparisons that might be needed.

Show we can’t beat nlgn • recall n! ≤ 2h ... now take logs • lg(n!) ≤ lg(2h) • lg(n!) ≤ h lg2 • lg(n!) ≤ h ... just flip it over • h ≥ lg(n!) • ( lg(n!) = Θ(nlgn) ) ...Stirling, CLRS p. 55 • h = Ω(nlgn) QED • In the worst case, Ω(nlgn) comparisons are needed to sort n items.

Sorting in Linear Time !!! • The Ω(nlgn) bound does not apply if we use info other than comparisons. • Like what other info? • Use the item as an array index. • Examine the digits (or bits) of the item.

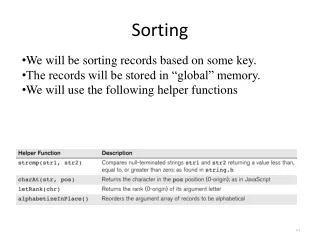

Counting Sort • Good for sorting integers in a narrow range • Assume the input numbers (keys) are in the range 0..k • Use an auxilliary array C[0..k] to hold the number of items less than i for 0 ≤ i ≤ k • if k = O(n), then the running time is Θ(n). • Counting sort is stable; it keeps records in their original order.

Radix Sort • How IBM made its money, using punch card readers for census tabulation in early 1900’s. Card sorters worked on one column at a time. • Sort each digit (or field) separately. • Start with the least-significant digit. • Must use a stable sort. RADIX-SORT(A, d) 1 fori← 1 to d 2 do use a stable sort to sort array A on digit i

Correctness of Radix Sort • induction on number of passes • base case: low-order digit is sorted correctly • inductive hypothesis: show that a stable sort on digit i leaves digits 1...i sorted • if 2 digits in position i are different, ordering by position i is correct, and positions 1 .. i-1 are irrelevant • if 2 digits in position i are equal, numbers are already in the right order (by inductive hypotheis). The stable sort on digit i leaves them in the right order. • Radix sort must invoke a stable sort.

Running Time of Radix Sort • use counting sort as the invoked stable sort, if the range of digits is not large • if digit range is 1..k, then each pass takes Θ(n+k) time • there are d passes, for a total of Θ(d(n+k)) • if k = O(n), time is Θ(dn) • when d is const, we have Θ(n), linear!