Summarization and Personal Information Management

460 likes | 480 Views

Explore the possibility of redesigning online discussions to promote summarization and brevity, and get users to write in a newsroom style. Discuss the challenges of relying on user-generated content for summarization.

Summarization and Personal Information Management

E N D

Presentation Transcript

Summarization and Personal Information Management Carolyn Penstein Rosé Language Technologies Institute/ Human-Computer Interaction Institute

Announcements • Questions? • Plan for Today • Finish Blog Analysis • Ku, Liang, and Chen, 2006 • Chat analysis • Zhou & Hovey, 2005 • Student presentation by Justin Weisz • Time permitting: Supplementary discussion about chat analysis • Arguello & Rosé, 2005

Food for thought!! • I propose that we redesign the way discussions appear on the Internet. Current discussion interfaces are terrible anyways. • And we can design them in ways that favor summarization or promote brevity. • Can we get users to write in newsroom, inverted pyramid style with all of the important info at the top? • Getting users to do work that algorithms can't do is currently in vogue. Even better if they can do some work that will assist algorithms …

More food! • While relying on "the masses" to provide free information seems to be the popular web 2.0 thing -- it is only really viable on large scales. While slashdot moderation can give good feedback about which posts are informative, the same methods wouldn't work to summarize, for instance, a class discussion board with 20 members.

Opinion Based Summarization • The high level view is that what they’re trying to do is determine what opinion is represented by a post • Where by opinion they mean whether it is positive or negative • Then they are trying to identify what sentences are responsible for expressing that positive or negative view • A summary is an extract from a blog post that expresses an opinion in a concise way

Data • English data came from a TREC blog analysis competition • They annotated positive and negative polarity at the word, sentence, and document level • They present the reliability of their coding, but don’t talk in great detail about their definitions of polarity that guided their judgments • 3 different coders worked on the annotation • They did the same with Chinese data

Algorithm • First identify which words express an opinion • Use opinion words to identify whether a sentence expresses an opinion (and whether that opinion is positive or negative) • Once you have the subset of sentences that express that opinion, you can make a decision about what opinion the post is expressing • The intuition is that what makes opinion classification hard is that a lot of comments are made in a post that are not part of the expression of the opinion, and this text acts as a distractor

Word Sentiment Algorithm for Chinese • The sentiment of a word depends on the sentiment of its characters and vice versa • Start with a list of seed words assigned to positive and negative • For a new word • Compute a sentiment for each character • Sentiment of its characters has to do with how many negative versus positive words it occurs in within the seed list • Basic formula is based on proportion of positive and negative letters, where an adjustment is made to correct for skew in distribution of positive and negative words in the language

Identifying Opinion Words • Their approach does not require cross-validation • SVM and C5 tested using 2 fold cross validation (A and B were the two folds) • Precision is higher for their approach • Statistical significance?

Now let’s see what you think… • They give examples of their summaries but no real quantitative evaluation of their quality • Look at the summaries and see whether you think they are really expressing a positive or negative opinion • Why or why not?

Discussion • If you didn’t think the summaries were expressing a positive or negative opinion, what do you think they were expressing instead? • What is the difference between an opinion and a sentiment? • What would you have done differently?

VOTE! • In class final versus take home final • Public poster session versus private poster session

Evaluation Issues! • Variety of student comments related to the evaluation • We will discuss those next week when we start the evaluation segment

Important Ideas • Topic segmentation • Topic clustering • Both are hard in chat! • Their corpus was atypical for chat: • Very long contirbutions • Email mixed with chat • Let’s take a moment to think about chat more broadly…

Definition of “Topic” in Dialogue • Discourse Segment Purpose(Passonneau and Litman, 1994),based on(Grosz and Sidner, 1984) • TOPIC SHIFT = SHIFT IN PURPOSE that is acknowledge and acted upon by both dialogue participants • Example: T: Let me know once you are done reading. T: I’ll be back in a min. T: Are you done reading? S: not yet. T: ok T: Do you know where to enter all the values? S: I think so. S: I’ll ask if I get stuck though. . . . Tutor wants to know when student is ready to start the session. Tutor checks if student knows how to setup the analysis

Cows make cheese. 110010 Hamsters eat seeds. 001101 Basic IdeaRepresent text as a vector where each position corresponds to a termThis is called the “bag of words” approach Cheese Cows Eat Hamsters Make Seeds

Student Questions • I don't know what Maximum Entropy is. • However, I don’t see how I can distinguish segment clusters (corresponding to subtopic) in terms of their degree of importance. • I vaguely understood the use of SVM was basically to classify the sentences as belonging in the summary or not. • I don't understand why SVM doesn't require "feature selection". Doesn't all machine learning require features?

Dialogue Transcript W1 W3 W2 Dialogue Transcript depth contribution # TextTiling Term Frequency (TF): accentuates frequent terms in this window Inverse Document Frequency (IDF): suppresses terms frequent in many windows W1 = 0.15 W2 = 0.20 W3 = 0.45 …. w TF.IDF W1 W3 W2

Student Comment • I think they should have reported how well TextTiling performed on chat/email data. Originally it has been designed for expository text.

Error Analysis: Lexical Cohesion LOTS OF REGIONS W/ ZERO TERM OVERLAP BUT,MAY BE USEFUL AS ONE SOURCE OF EVIDENCE • Why does Lexical Cohesion Fail?

Clustering Contributions in Chat • Lack of content produces overly skewed cluster size distribution

[initiation] • [initiation] • [response] • [initiation] • [response] • [feedback] • [initiation] Dialogue Exchanges • A more coarse-grained discourse unit • Initiation, Response, Feedback (IRF) Structure (Stubbs, 1983; Sinclair and Coulthard, 1975) • T:Hi • T:Are you connected? • S:Ya. • T:Are you done reading? • S:Yah • T: ok • T:Your objective today is to learn as much as you can about Rankin Cycles

Related Student Quote • Taking discourse structure into account • I feel it is a good strategy for the task. Beyond what they did I would like to reconstruct the relationship between sub-topics. This will increase the summary quality and capture what happened in the discussion

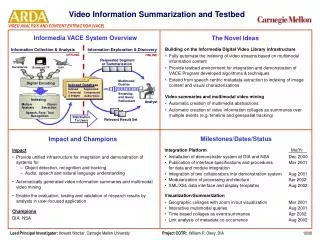

Museli:A Multi-Source Evidence Integration Approach to Topic Segmentation of Spontaneous Dialogue Jaime Arguello Carolyn Rosé Language Technologies Institute Carnegie Mellon University

Overview of Single Evidence Source Approaches • Models based on lexical cohesion • TextTiling (Hearst, 1997) • Foltz (Foltz, 1998) • Olney & Cai (Olney & Cai, 2005) • Models relying on regularities in topic sequencing • Barzilay & Lee (Barzilay & Lee, 2004)

Dialogue Transcript Latent Semantic Analysis (LSA) Approaches • Foltz (Foltz, 1998) • Similar to TextTiling, except: • LSA space constructed using contributions from corpus • Supervised: logistic regression (Olney and Cai, 2005) • Orthonormal Basis (Olney and Cai, 2005) • A more expressive model of discourse coherence than TextTiling and Foltz Imagine 2 contributions w/ LSA vectors v1 & v2 6 #’s: (1) cosine (2) informativity (3) relevance wrt to previous and next window. Logistic Regression Informativity v2 v1 Relevance

Dialogue Transcripts c1 Build Cluster- Specific LMs c3 c2 HMM Apply HMM to data. TRANSITION = TOPIC SHIFT Content Models (Barzilay and Lee, 2004) c1 Cluster Contributions c2 c3 ….. Dialogue 1 Dialogue 2 Dialogue 3 EM-like iterative Re-assignment of Contributions to States

MUSELI • Integrates multiple sources of evidence of topic shift • Features: • Lexical Cohesion (via cosine correlation) • Time lag between contributions • Unigrams (previous and current contribution) • Bigrams (previous and current cont.) • POS Bigrams (previous and current cont.) • Contribution Length • Previous/Current Speaker • Contribution of Content Words

Dialogue Transcripts (Training Set) MUSELI (cont’d) Dialogue Transcripts (Training Set) Separate Student & Tutor Role Tutor Student Segmentation (Test Set) Feature Selection (chi-squared) Feature Selection (chi-squared) Naïve Bayes Classifier Naïve Bayes Classifier If Agent = Student, use prediction from Student Model If Agent = Tutor, use prediction from Tutor Model

Experimental Corpora • Olney and Cai (Olney and Cai, 2005) • Thermo corpus: student/tutor optimization problem, unrestricted interaction, virtually co-present • Our thermo corpus: • Is more terse! • Has fewer Contributions! • Has more Topics/Dialogue! • Strict turn-taking not enforced! * P < .005

Baseline Degenerate Approaches • ALL: every contribution = NEW_TOPIC • EVEN: every nth contribution = NEW_TOPIC • NONE:no NEW_TOPIC

Two Evaluation Metrics • A metric commonly used to evaluate topic segmentation algorithms (Olney & Cai, 2005) • F-measure: Precision (P): # correct predictions / # predictions Recall (R): # correct predictions / # boundaries • An additional metric designed specifically for segmentation problems (Beeferman et al., 1999) • Pk: Pr(error|k) The probability that two contributions, separated by k contributions, are misclassified Effective if k = ½ average topic length

Experimental Results Compared to degenerates: > NO DEG. > 1 DEG. > ALL 3 DEG. P < .05

Experimental Results Museli > all approaches in BOTH corpora P < .05

Error Analysis: Barzilay & Lee • Lack of content produces overly skewed cluster size distribution

Perfect Dialogue Exchanges With Respect to Contributions Counter-part: P < .05 P < .1

Predicted Dialogue Exchanges • Museli approach for predicting exchange boundaries • F-measure: .5295 • Pk: .3358 • B&L with predicted exchanges: • F-measure: .3291 • Pk: .4154 • An improvement over B&L with contribution, P < .05