Inducing Structure for Perception

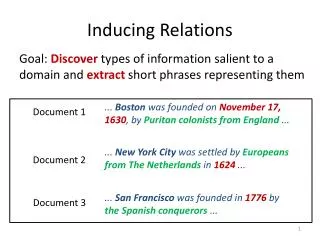

Inducing Structure for Perception. a.k.a. Slav’s split&merge Hammer. Slav Petrov Advisors: Dan Klein, Jitendra Malik Collaborators: L. Barrett, R. Thibaux, A. Faria, A. Pauls, P. Liang, A. Berg. The Main Idea. True structure. Manually specified structure. MLE structure. He was right.

Inducing Structure for Perception

E N D

Presentation Transcript

Inducing Structure for Perception a.k.a. Slav’s split&merge Hammer Slav Petrov Advisors: Dan Klein, Jitendra Malik Collaborators: L. Barrett, R. Thibaux, A. Faria, A. Pauls, P. Liang, A. Berg

The Main Idea True structure Manually specifiedstructure MLE structure He was right. Observation Complex underlying process

The Main Idea Automatically refinedstructure EM He was right. Manually specifiedstructure Observation Complex underlying process

Why Structure? the the the food cat dog ate and t e c a e h t g f a o d o o d n h e t d a

The dog ate the cat and the food. The dog and the cat ate the food. The cat ate the food and the dog. Structure is important

Syntactic Ambiguity Last night I shot an elephant in my pajamas.

Visual Ambiguity Old or young?

Machine Learning Natural Language Processing Computer Vision Three Peaks?

Machine Learning Natural Language Processing Computer Vision No, One Mountain!

Syntax Scenes Speech Three Domains

Syntax TrecVid Learning Inference Scenes Learning Synthesis Speech Decoding Bayesian Learning Inference Summer ISI Conditional Syntactic MT ‘07 ‘08 ‘09 Now Timeline

Scenes Speech Syntax Split & Merge Learning Coarse-to-Fine Inference Syntax Non- parametric Bayesian Learning Syntactic Machine Translation Generative vs. Conditional Learning Language Modeling

Learning accurate, compact and interpretable Tree Annotation Slav Petrov, Leon Barrett, Romain Thibaux, Dan Klein

Motivation (Syntax) • Task: He was right. • Why? • Information Extraction • Syntactic Machine Translation

S NP VP . 1.0 NP PRP 0.5 NP DT NN 0.5 … PRP She 1.0 DT the 1.0 … Treebank Grammar Treebank Parsing

NPs under S NPs under VP Non-Independence Independence assumptions are often too strong. All NPs

The Game of Designing a Grammar • Annotation refines base treebank symbols to improve statistical fit of the grammar • Parent annotation [Johnson ’98]

The Game of Designing a Grammar • Annotation refines base treebank symbols to improve statistical fit of the grammar • Parent annotation [Johnson ’98] • Head lexicalization[Collins ’99, Charniak ’00]

The Game of Designing a Grammar • Annotation refines base treebank symbols to improve statistical fit of the grammar • Parent annotation [Johnson ’98] • Head lexicalization[Collins ’99, Charniak ’00] • Automatic clustering?

Forward X1 X7 X2 X4 X3 X5 X6 . He was right Backward Learning Latent Annotations EM algorithm: • Brackets are known • Base categories are known • Only induce subcategories Just like Forward-Backward for HMMs.

Ax By Cz By Cz Inside/Outside Scores Inside: Outside: Ax

Ax By Cz Learning Latent Annotations (Details) • E-Step: • M-Step:

Limit of computational resources Overview - Hierarchical Training - Adaptive Splitting - Parameter Smoothing

DT-2 DT-3 DT-1 DT-4 Refinement of the DT tag DT

Refinement of the , tag • Splitting all categories the same amount is wasteful:

Oversplit? The DT tag revisited

Adaptive Splitting • Want to split complex categories more • Idea: split everything, roll back splits which were least useful

Adaptive Splitting • Want to split complex categories more • Idea: split everything, roll back splits which were least useful

Adaptive Splitting • Evaluate loss in likelihood from removing each split = Data likelihood with split reversed Data likelihood with split • No loss in accuracy when 50% of the splits are reversed.

Adaptive Splitting (Details) • True data likelihood: • Approximate likelihood with split at n reversed: • Approximate loss in likelihood:

Number of Phrasal Subcategories NP VP PP

Number of Lexical Subcategories POS TO ,

Number of Lexical Subcategories RB VBx IN DT

Number of Lexical Subcategories NNP JJ NNS NN

Smoothing • Heavy splitting can lead to overfitting • Idea: Smoothing allows us to pool statistics

Linguistic Candy • Proper Nouns (NNP): • Personal pronouns (PRP):

Linguistic Candy • Relative adverbs (RBR): • Cardinal Numbers (CD):

Nonparametric PCFGs using Dirichlet Processes Percy Liang, Slav Petrov, Dan Klein and Michael Jordan

Improved Inference for Unlexicalized Parsing Slav Petrov and Dan Klein

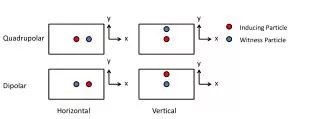

Treebank Coarse grammar Prune Parse Parse NP … VP NP-apple NP-1 VP-6 VP-run NP-17 NP-dog … … VP-31 NP-eat NP-12 NP-cat … … Refined grammar Refined grammar [Goodman ‘97, Charniak&Johnson ‘05] Coarse-to-Fine Parsing

Prune? For each chart item X[i,j], compute posterior probability: < threshold E.g. consider the span 5 to 12: coarse: refined: