Video Compression


Video Compression Fall 2011 Hongli Luo

Video Compression • Image compression • Reduces spatial redundancy • Video compression • Spatial redundancy exists in each frame, as in images • Temporal redundancy exists between frames and can also be used for compression • Video compression reduces spatial redundancy within a frame and temporal redundancy between frames • Each video frame can be encoded differently, depending on whether spatial or temporal redundancy is exploited • Intraframe • Interframe

Intraframe and Interframe • Intraframe • Each frame is encoded as an individual image • Uses an image compression technique, e.g., DCT • Interframe • Predictive encoding between frames in the temporal domain • Instead of coding the current frame directly, code the difference between the current frame and a prediction based on previous frames • Uses motion compensation

Intraframe Coding • The frames are compressed using • Lossy compression, e.g., DCT or subsampling and quantization • Lossless entropy compression, e.g., Huffman or arithmetic coding • The MPEG/ITU standards compress intraframes in a way similar to the JPEG image standard • Get 8 x 8 blocks • DCT transformation on each block • Quantization of the coefficients • Zigzag scan of the AC coefficients • DPCM on DC coefficients • Run-length coding on AC coefficients • Huffman or arithmetic coding
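The JPEG-like steps listed above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the single quantization step size `step=16`, the `(0, 0)` end-of-block marker, and all function names are hypothetical choices made here, not part of any standard, and the final Huffman or arithmetic coding stage is omitted.

```python
import math

N = 8  # block size used by JPEG-style intraframe coding

def dct_2d(block):
    """Naive 2D DCT-II of an N x N block (O(N^4), for illustration only)."""
    def c(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)
    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            s = 0.0
            for i in range(N):
                for j in range(N):
                    s += (block[i][j]
                          * math.cos((2 * i + 1) * u * math.pi / (2 * N))
                          * math.cos((2 * j + 1) * v * math.pi / (2 * N)))
            out[u][v] = c(u) * c(v) * s
    return out

def quantize(coeffs, step=16):
    """Uniform quantization with one step size (assumption: real codecs use tables)."""
    return [[int(round(x / step)) for x in row] for row in coeffs]

def zigzag(block):
    """Return the 64 entries in zigzag order, low frequencies first."""
    order = sorted(((i, j) for i in range(N) for j in range(N)),
                   key=lambda p: (p[0] + p[1],
                                  p[0] if (p[0] + p[1]) % 2 else p[1]))
    return [block[i][j] for i, j in order]

def run_length_ac(zz):
    """Encode the AC coefficients (all but the first) as (zero_run, value) pairs."""
    pairs, run = [], 0
    for v in zz[1:]:
        if v == 0:
            run += 1
        else:
            pairs.append((run, v))
            run = 0
    pairs.append((0, 0))  # hypothetical end-of-block marker
    return pairs

def dpcm(dc_values):
    """Code each DC coefficient as the difference from the previous block's DC."""
    prev, out = 0, []
    for dc in dc_values:
        out.append(dc - prev)
        prev = dc
    return out

# A flat gray block has only a DC coefficient after the DCT.
flat = [[128] * N for _ in range(N)]
zz = zigzag(quantize(dct_2d(flat)))
print(zz[0], run_length_ac(zz), dpcm([64, 66, 65]))
```

For a flat block all AC coefficients quantize to zero, so the run-length stage emits only the end-of-block marker, which is why smooth image regions compress so well.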

Interframe Coding • How does a pixel value change from one frame to the next frame? • No change, e.g., background • Slight changes due to quantization • Changes due to motion of the object • Changes due to motion of the camera • Changes due to environment and lighting • No changes: no need to code • Changes due to motion of object or camera • Predict how the pixel has moved • Encode the displacement (motion) vector

Video Compression with Motion Compensation • Consecutive frames in a video are similar: temporal redundancy exists. • Temporal redundancy is exploited so that not every frame of the video needs to be coded independently as a new image. • The difference between the current frame and other frame(s) in the sequence is coded instead; it has small values and low entropy, which is good for compression. • Steps of video compression based on Motion Compensation (MC): • Motion Estimation (motion vector search). • Motion Compensation based Prediction. • Derivation of the prediction error, i.e., the difference.

Video Compression Based on Motion Compensation • Each image is divided into macroblocks of size N x N. By default: • For luminance images, N = 16 • For chrominance images, N = 8 if 4:2:0 chroma subsampling is adopted • Motion compensation is performed at the macroblock level • The current image frame is referred to as the Target frame. • A match is sought between the macroblock in the Target frame and the most similar macroblock in previous and/or future frame(s) (referred to as Reference frame(s)). • The displacement between the reference macroblock and the target macroblock is called a motion vector (MV).

Assume the color at $(x, y)$ is the same as or very similar to the color at $(x_0, y_0)$ • Displacement or motion vector $d = (d_x, d_y)$ • $(x, y) = (x_0 + d_x,\ y_0 + d_y)$ • $d = (d_x, d_y) = (x, y) - (x_0, y_0)$, i.e., $d_x = x - x_0$ and $d_y = y - y_0$

Motion Estimation and Compensation • Motion Estimation • For a given macroblock of pixels in the current frame (referred to as the target frame), find the most similar macroblock in a reference frame (a previous or future frame) within a specified search area. • Search for the motion vector: the MV search is usually limited to a small immediate neighborhood, with both horizontal and vertical displacements in the range [−p, p] • Motion Compensation • The target macroblock is predicted from the reference macroblock • Use the motion vectors to compensate the picture
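A minimal sketch of the motion vector search, assuming an exhaustive (full) search over [−p, p] and the sum of absolute differences (SAD) as the similarity measure. Neither choice is specified on the slide. Frames are plain lists of rows, the motion vector is taken to point from the target block position to the matching block in the reference frame, and all names are hypothetical.

```python
def sad(target, ref, tx, ty, rx, ry, n):
    """Sum of absolute differences between two n x n blocks."""
    return sum(abs(target[ty + j][tx + i] - ref[ry + j][rx + i])
               for j in range(n) for i in range(n))

def full_search(target, ref, x0, y0, n=16, p=7):
    """Try every displacement (dx, dy) in [-p, p] and keep the lowest SAD."""
    h, w = len(ref), len(ref[0])
    best = None
    for dy in range(-p, p + 1):
        for dx in range(-p, p + 1):
            rx, ry = x0 + dx, y0 + dy
            if rx < 0 or ry < 0 or rx + n > w or ry + n > h:
                continue  # candidate block falls outside the reference frame
            cost = sad(target, ref, x0, y0, rx, ry, n)
            if best is None or cost < best[0]:
                best = (cost, dx, dy)
    return (best[1], best[2]), best[0]

def prediction_error(target, ref, x0, y0, mv, n=16):
    """Difference macroblock: target block minus its motion-compensated prediction."""
    dx, dy = mv
    return [[target[y0 + j][x0 + i] - ref[y0 + dy + j][x0 + dx + i]
             for i in range(n)] for j in range(n)]

# Synthetic 12 x 12 frames: the target is the reference content shifted by (2, -1).
ref_frame = [[x * x + 3 * y * y + x * y for x in range(12)] for y in range(12)]
target_frame = [[ref_frame[y - 1][x + 2] if 1 <= y and x + 2 < 12 else 0
                 for x in range(12)] for y in range(12)]

mv, cost = full_search(target_frame, ref_frame, x0=4, y0=4, n=4, p=2)
residual = prediction_error(target_frame, ref_frame, 4, 4, mv, n=4)
print(mv, cost)
```

With a perfect match the residual is all zeros, so only the motion vector carries information for this block.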

Simple Motion Example • Consider a simple block of a moving circle. Instead of coding the current frame, code the difference between 2 frames. The difference needs fewer bits to encode. From Multimedia CM0340 David Marshall

Estimate Motion of Blocks • Estimate the motion of the object, encode the motion vectors and difference picture. From Multimedia CM0340 David Marshall

Decode Motion of Blocks • Use the motion vector and difference picture for decoding. From Multimedia CM0340 David Marshall
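The encode and decode steps can be illustrated with a tiny round trip. This is a sketch under assumptions: frames are lists of rows, the motion vector points from the target block to its match in the reference frame, and the difference block is stored without quantization, so the reconstruction is exact here (a real codec quantizes the difference and reconstructs only approximately). Function names are hypothetical.

```python
def encode_block(target, ref, x0, y0, mv, n):
    """Encoder side: difference = target block minus motion-compensated prediction."""
    dx, dy = mv
    return [[target[y0 + j][x0 + i] - ref[y0 + dy + j][x0 + dx + i]
             for i in range(n)] for j in range(n)]

def decode_block(ref, x0, y0, mv, residual, n):
    """Decoder side: prediction from the reference frame plus the difference."""
    dx, dy = mv
    return [[ref[y0 + dy + j][x0 + dx + i] + residual[j][i]
             for i in range(n)] for j in range(n)]

# 4 x 4 frames: a 2 x 2 object moves one pixel to the right, and one of its
# pixels also changes value (6 -> 7).
ref = [[0, 0, 0, 0],
       [9, 8, 0, 0],
       [7, 6, 0, 0],
       [0, 0, 0, 0]]
target = [[0, 0, 0, 0],
          [0, 9, 8, 0],
          [0, 7, 7, 0],
          [0, 0, 0, 0]]

mv = (-1, 0)  # the block at (1, 1) in the target matches the block at (0, 1) in ref
residual = encode_block(target, ref, 1, 1, mv, 2)
rebuilt = decode_block(ref, 1, 1, mv, residual, 2)
print(residual, rebuilt)
```

The decoder never sees the target frame: it needs only the reference frame, the motion vector, and the difference block.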

Motion Estimation and Compensation • Advantages • Motion estimation and compensation reduce the video bitrate significantly • After the first frame, only the motion vectors and difference macroblocks need to be coded • Disadvantages • They introduce extra computational complexity • Motion estimation is the most computationally expensive part of a video encoder • Reference pictures (previous or future frames) must be buffered
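To give a rough feel for the cost of motion estimation, the sketch below counts pixel comparisons for an exhaustive search. The search range p = 15, the 352 x 288 frame size, and the 30 fps frame rate are illustrative assumptions, not values from the slide, and practical encoders use faster search strategies.

```python
def full_search_cost(p, n):
    """Pixel comparisons for one macroblock: (2p + 1)^2 candidates, n^2 pixels each."""
    return (2 * p + 1) ** 2 * n * n

def macroblocks_per_frame(width, height, n=16):
    """Number of n x n macroblocks in a frame whose sides are multiples of n."""
    return (width // n) * (height // n)

per_mb = full_search_cost(p=15, n=16)
per_frame = per_mb * macroblocks_per_frame(352, 288)
per_second = per_frame * 30  # assumed frame rate
print(per_mb, per_frame, per_second)
```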

Video Compression Standards • Image, video and audio compression standards have been specified by two major groups since 1985 • ISO (International Organization for Standardization) • JPEG • MPEG • MPEG-1, MPEG-2, MPEG-4, MPEG-7, MPEG-21 • ITU (International Telecommunication Union) • H.261 • H.263 • H.264, by the Joint Video Team (JVT) of ISO/IEC MPEG and ITU-T VCEG.

H.261 • H.261: An earlier digital video compression standard; its principle of MC-based compression is retained in all later video compression standards. • The standard was designed for videophone, video conferencing and other audiovisual services over ISDN. • The video codec supports bit-rates of p x 64 kbps, where p ranges from 1 to 30 (hence also known as p x 64). • It requires that the delay of the video encoder be less than 150 msec so that the video can be used for real-time bidirectional video conferencing.
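As a quick check of the p x 64 rule, the following sketch lists the bit-rates it allows. The function name is hypothetical.

```python
def h261_bitrate_kbps(p):
    """Bit-rate in kbps for a given multiplier p, valid for 1 <= p <= 30."""
    if not 1 <= p <= 30:
        raise ValueError("p must be in the range 1..30")
    return p * 64

rates = [h261_bitrate_kbps(p) for p in range(1, 31)]
print(rates[0], rates[-1])  # lowest and highest supported rates
```

The resulting range, 64 kbps to 1,920 kbps, corresponds to between 1 and 30 ISDN channels of 64 kbps each.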

ITU Recommendations & H.261 Video Formats • H.261 belongs to the following set of ITU recommendations for visual telephony systems: • H.221 - Frame structure for an audiovisual channel supporting 64 to 1,920 kbps. • H.230 - Frame control signals for audiovisual systems. • H.242 - Audiovisual communication protocols. • H.261 - Video encoder/decoder for audiovisual services at p x 64 kbps. • H.320 - Narrow-band audiovisual terminal equipment for p x 64 kbps transmission.

H.261 Frame Sequence • Two types of image frames are defined: Intra-frames (I-frames) and Inter-frames (P-frames): • I-frames are treated as independent images. Transform coding method similar to JPEG is applied within each I-frame, hence “Intra”. • P-frames are not independent: coded by a forward predictive coding method (prediction from a previous P-frame is allowed - not just from a previous I-frame). • Temporal redundancy removal is included in P-frame coding, whereas I-frame coding performs only spatial redundancy removal. • To avoid propagation of coding errors, an I-frame is usually sent a couple of times in each second of the video.

Intra-frame (I-frame) Coding • Macroblocks are of size 16 x 16 pixels for the Y frame, and 8 x 8 for the Cb and Cr frames, since 4:2:0 chroma subsampling is employed. • A macroblock consists of four Y, one Cb, and one Cr 8 x 8 blocks. • For each 8 x 8 block a DCT transform is applied; the DCT coefficients then go through quantization, zigzag scan and entropy coding.
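The macroblock layout described above can be sketched as a function that returns the six 8 x 8 blocks. It assumes the chroma planes have already been subsampled to 8 x 8, and the block ordering (Y top-left, top-right, bottom-left, bottom-right, then Cb, Cr) is a choice made for this illustration.

```python
def split_macroblock(y_mb, cb_blk, cr_blk):
    """Return the six 8 x 8 blocks of a 4:2:0 macroblock: Y0..Y3, Cb, Cr."""
    if len(y_mb) != 16 or any(len(row) != 16 for row in y_mb):
        raise ValueError("luminance macroblock must be 16 x 16")
    blocks = []
    for by in (0, 8):          # top half, then bottom half
        for bx in (0, 8):      # left half, then right half
            blocks.append([row[bx:bx + 8] for row in y_mb[by:by + 8]])
    blocks.append(cb_blk)
    blocks.append(cr_blk)
    return blocks

y_mb = [[16 * r + c for c in range(16)] for r in range(16)]
cb = [[128] * 8 for _ in range(8)]
cr = [[128] * 8 for _ in range(8)]
blocks = split_macroblock(y_mb, cb, cr)
print(len(blocks), blocks[1][0][0], blocks[3][7][7])
```

Each of the six blocks would then go through the DCT, quantization, zigzag scan and entropy coding independently.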

Inter-frame (P-frame) Predictive Coding • Figure 10.6 shows the H.261 P-frame coding scheme based on motion compensation: • For each macroblock in the Target frame, a motion vector is allocated by one of the search methods discussed earlier. • After the prediction, a difference macroblock is derived to measure the prediction error. • Each 8 x 8 block of the difference macroblock goes through DCT, quantization, zigzag scan and entropy coding.

Inter-frame (P-frame) Predictive Coding • The P-frame coding encodes the difference macroblock (not the Target macroblock itself). • Sometimes, a good match cannot be found, i.e., the prediction error exceeds a certain acceptable level. • The MB itself is then encoded (treated as an Intra MB) and in this case it is termed a non-motion compensated MB. • In fact, even the motion vector is not directly coded. • The difference, MVD, between the motion vectors of the preceding macroblock and current macroblock is sent for entropy coding: $\mathrm{MVD} = \mathrm{MV}_{\mathrm{Preceding}} - \mathrm{MV}_{\mathrm{Current}}$ (10.3)
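The differential coding of motion vectors can be sketched as follows, using the sign convention of the equation above (preceding minus current). The predictor `(0, 0)` for the first macroblock is an assumption made for this illustration, and the function names are hypothetical.

```python
def encode_mvd(mvs, first_predictor=(0, 0)):
    """Turn a list of motion vectors into differences MVD = MV_prev - MV_cur."""
    prev, out = first_predictor, []
    for mv in mvs:
        out.append((prev[0] - mv[0], prev[1] - mv[1]))
        prev = mv
    return out

def decode_mvd(mvds, first_predictor=(0, 0)):
    """Invert encode_mvd: MV_cur = MV_prev - MVD."""
    prev, out = first_predictor, []
    for d in mvds:
        mv = (prev[0] - d[0], prev[1] - d[1])
        out.append(mv)
        prev = mv
    return out

# Neighboring macroblocks often move together, so most differences are near zero.
mvs = [(3, -1), (3, -1), (4, -1), (4, 0)]
mvds = encode_mvd(mvs)
print(mvds, decode_mvd(mvds) == mvs)
```

Small differences clustered around zero are cheaper to entropy code than the raw vectors.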

H.263 • H.263 is an improved video coding standard for video conferencing and other audiovisual services transmitted on Public Switched Telephone Networks (PSTN). • Aims at low bit-rate communications at bit-rates of less than 64 kbps. • Uses predictive coding for inter-frames to reduce temporal redundancy and transform coding for the remaining signal to reduce spatial redundancy (for both Intra-frames and inter-frame prediction).

MPEG-1 • MPEG: Moving Picture Experts Group, established in 1988 for the development of digital video. • MPEG-1 adopts the CCIR601 digital TV format also known as SIF (Source Input Format). • MPEG-1 supports only non-interlaced video. Normally, its picture resolution is: • 352 x 240 for NTSC video at 30 fps • 352 x 288 for PAL video at 25 fps • It uses 4:2:0 chroma subsampling
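To see why compression is needed at these resolutions, the sketch below estimates the uncompressed bit-rate. It assumes 8 bits per sample, which the slide does not state. With 4:2:0 subsampling each pixel carries on average 1.5 samples (one Y plus a quarter each of Cb and Cr).

```python
def raw_bitrate_bps(width, height, fps, bits_per_sample=8):
    """Uncompressed bit-rate for 4:2:0 video: 1.5 samples per pixel on average."""
    samples_per_frame = width * height * 3 // 2
    return samples_per_frame * bits_per_sample * fps

ntsc = raw_bitrate_bps(352, 240, 30)
pal = raw_bitrate_bps(352, 288, 25)
print(ntsc / 1e6, pal / 1e6)  # in Mbps
```

Both formats come out at the same raw rate of about 30.4 Mbps, because the PAL format trades a lower frame rate for more lines per frame.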

Motion Compensation in MPEG-1 • Motion Compensation (MC) based video encoding in H.261 works as follows: • In Motion Estimation (ME), each macroblock (MB) of the Target P-frame is assigned a best matching MB from the previously coded I or P frame - prediction. • prediction error: The difference between the MB and its matching MB, sent to DCT and its subsequent encoding steps. • The prediction is from a previous frame - forward prediction.

The MB containing part of a ball in the Target frame cannot find a good matching MB in the previous frame because half of the ball was occluded by another object. • A match however can readily be obtained from the next frame.

Motion Compensation in MPEG-1 (Cont'd) • MPEG introduces a third frame type - B-frames, and its accompanying bi-directional motion compensation. • The MC-based B-frame coding idea is illustrated in Fig. 11.2: • Each MB from a B-frame will have up to two motion vectors (MVs) (one from the forward and one from the backward prediction).
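A minimal sketch of how a B-frame macroblock might be predicted when both motion vectors are available: the forward and backward matching blocks are averaged. The rounding rule (add 1 before halving) and the function names are assumptions for this illustration. When only one match is usable, that block alone serves as the prediction.

```python
def bidirectional_prediction(fwd_block=None, bwd_block=None):
    """Predict a B-frame block from the forward match, the backward match, or both."""
    if fwd_block is None and bwd_block is None:
        raise ValueError("at least one matching block is required")
    if bwd_block is None:
        return [row[:] for row in fwd_block]
    if fwd_block is None:
        return [row[:] for row in bwd_block]
    # Both matches available: average them pixel by pixel (rounded).
    return [[(f + b + 1) // 2 for f, b in zip(frow, brow)]
            for frow, brow in zip(fwd_block, bwd_block)]

def block_difference(target_block, prediction):
    """Prediction error that would be sent on to the DCT stage."""
    return [[t - p for t, p in zip(trow, prow)]
            for trow, prow in zip(target_block, prediction)]

fwd = [[10, 20], [30, 40]]   # matching block from the previous I- or P-frame
bwd = [[12, 20], [31, 44]]   # matching block from the next I- or P-frame
pred = bidirectional_prediction(fwd, bwd)
err = block_difference([[11, 21], [31, 41]], pred)
print(pred, err)
```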

Group of Pictures (GOP): starts with an I-frame, followed by B- and P-frames. This GOP has 10 frames, with the structure: IBBPBBPBB
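Because a B-frame depends on a later I- or P-frame, frames cannot be coded in display order. The sketch below reorders a GOP pattern into one possible coding order by holding each B-frame back until the anchor frame that follows it has been emitted. The frame labels and the handling of trailing B-frames (returned separately, since they wait for the next GOP's I-frame) are choices made for this illustration.

```python
def display_to_coding_order(gop):
    """Reorder a display-order pattern such as 'IBBPBB' into coding order.

    Returns (coded, pending): 'pending' holds trailing B-frames that still
    need an anchor frame from the next GOP before they can be coded.
    """
    frames = [f"{ftype}{index}" for index, ftype in enumerate(gop)]
    coded, held = [], []
    for frame in frames:
        if frame[0] == "B":
            held.append(frame)      # needs a future anchor first
        else:
            coded.append(frame)     # I- or P-frame: code it now
            coded.extend(held)      # then the B-frames that precede it in display
            held = []
    return coded, held

coded, pending = display_to_coding_order("IBBPBBPBB")
print(coded, pending)
```

The decoder performs the inverse reordering, which is one reason B-frames add buffering and delay.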

MPEG-1 Frames • Coding mechanism similar to H.261 • Three types of frames: • I-frames, coded in intra-frame mode • P-frames, coded with motion compensation using a previous I- or P-frame as reference • B-frames, coded with bidirectional motion compensation based on a previous and/or a future I- or P-frame

B-frames • Advantages: • Coding efficiency: most B-frames use fewer bits. • Limited error propagation: B-frames are not used to predict other frames, so errors in them are not propagated further within the sequence. • Disadvantage: • Frame reconstruction memory buffers within the encoder and decoder must be doubled in size to accommodate the 2 anchor frames.

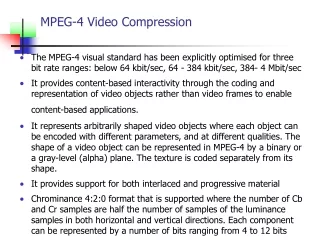

Other MPEG Standards • MPEG-2: For higher quality video at a bit-rate of more than 4 Mbps. • Originally designed as a standard for digital broadcast TV • Also adopted for DVDs • MPEG-3: Originally for HDTV (1920 x 1080), got folded into MPEG-2 • MPEG-4: Originally for very low bit-rate communication • The bit-rate for MPEG-4 video now covers a large range, from 5 kbps to 10 Mbps. • MPEG-7: Main objective is to serve the need of audiovisual content-based retrieval (or audiovisual object retrieval) in applications such as digital libraries. • MPEG-21: New standard • The vision for MPEG-21 is to define a multimedia framework to enable transparent and augmented use of multimedia resources across a wide range of networks and devices used by different communities.

MPEG-4 Part 10/H.264 • The H.264 video compression standard, formerly known as “H.26L”, was developed by the Joint Video Team (JVT) of ISO/IEC MPEG and ITU-T VCEG. • Preliminary studies using software based on this standard suggest that H.264 offers up to 30-50% better compression than MPEG-2, and up to 30% over H.263+ and MPEG-4 Advanced Simple Profile. • The outcome of this work is actually two identical standards: ISO MPEG-4 Part 10 and ITU-T H.264.

H.264 • H.264 is currently one of the leading candidates to carry High Definition TV (HDTV) video content in many potential applications. • H.264 has been adopted by Apple QuickTime 7 • Delivers high quality at remarkably low data rates • Generates bit streams across a broad range of bandwidths: • 3G mobile devices, iPod • Video on demand, video streaming (MPEG-4 Part 2) • Video conferencing (H.263) • HD for broadcast (MPEG-2) • DVD (MPEG-2)