Advanced Methods for Speech Processing: Proposal for a Network of Excellence in FP6

This document outlines a proposal for establishing a Network of Excellence (NoE) in Automatic Speech Processing (ASP), emphasizing the integration of various areas such as recognition, synthesis, coding, language identification, and speaker verification. Key objectives include developing better models of speech production and perception, exploring nonlinear speech processing, and enhancing robustness to environmental conditions. The presentation covers methodologies including statistical models, speech coding innovations, and synthesis techniques aimed at improving the accuracy and quality of speech processing systems.

Advanced Methods for Speech Processing: Proposal for a Network of Excellence in FP6

E N D

Presentation Transcript

AMSP : Advanced Methods for Speech Processing An expression of Interest to set up a Network of Excellence in FP6 Prepared by members of COST-277 and colleagues Submitted by Marcos FAUNDEZ-ZANUY Presented here by Gérard CHOLLETchollet@tsi.enst.fr GET-ENST/CNRS-LTCIhttp://www.tsi.enst.fr/~chollet

Outline • Rationale of the proposition • Objectives • Approaches • Modeling • Recognition by synthesis • Robustness to environmental conditions • Evaluation paradigm • Excellence • Integration and structuring effect

Rationale for the NoE-AMSP • The areas of Automatic Speech Processing (recognition, synthesis, coding, language identification, speaker verification) should be better integrated • Better models of Speech Production and Perception • Investigate Nonlinear Speech Processing • Understanding, Semantic interpretation

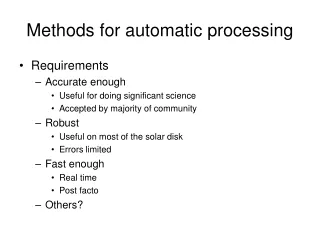

Features of Speech Models • Reflect auditory properties of human perception • Explain articulatory movements • Surpass the limitations of the source-filter model • Capture the dynamics of speech • Capable of natural speech restitution • Be discriminant for segmental information • Robust to noise and channel distortions • Adaptable to new speakers and new environments

Time – Frequency distributions • Short Time Fourier Transform • Non-linear frequency scale (PLP, WLP), mel-cepstrum • Wavelets, FAMlets • Bilinear distributions (Wigner-Ville, Choi-Williams,...) • Instantaneous frequency, Teager operator • Time – dependent representations (parametric and non parametric) • Vector quantisation • Matrix quantisation, non linear prediction

Time-dependent Spectral Models • Temporal Decomposition (B. Atal, 1983) • Vectorial Autoregressive models with detection of model ruptures (A. DeLima, Y. Grenier) • Segmental parameterisation using a time-dependent polynomial expansion (Y. Grenier)

Modeling of segmental units • Hidden Markov Model • Markov Fields • Bayesian Networks, Graphical Models OR • Production models • Synthesis (concatenative or rule based) with voice transformation AND / OR • Non linear predictor

Expected achievements in Speech Coding and Synthesis • Modeling the non-linearities in Speech Production and Perception will lead to more accurate and/or compact parametric representations. • Integrate segmental recognition and synthesis techniques in the coding loop to achieve bit rates as low as a few 100's bps with natural quality • Develop voice transformation techniques in order to : • Adapt segmental coders to new speakers, • Modify the characteristics of synthetic voices

Expected achievements inSpeech Synthesis • Self-excited nonlinear feedback oscillators will allow to better match synthetic and human voices. • Current concatenative techniques should be supplemented (or replaced) by (nonlinear) model based generative techniques to improve quality, naturalness, flexibility, training and adaptation. • Model-based voice mimicry controled by textual, phonetic and/or parametric input should not only improve synthesis but also coding, recognition and speaker characterisation.

Automatic Speech Recognition • Limitations of the HMM and hybrid HMM-ANN approaches • Keyword spotting (detection with SVM), noise robustness, adaptation • Large Vocabulary Speech Recognition (SIROCCO) http://perso.enst.fr/~sirocco/index-en.html • Markov Random Fields, Bayesian Networks and Graphical Models

Markov Random Fields Bayesian Networks and Graphical Models • Speech modelling with state constrained • Markov Random Field over Frequency bands • (Guillaume Gravier and Marc Sigelle) • http://perso.enst.fr/~ggravier/recherche.html#these • Comparative framework to study MRF, • Bayesian Networks and Graphical Models. • http://www.cs.berkeley.edu/~murphyk/Bayes/bayes.html

Recognition by Synthesis • If we could drive a synthesizer with meaningful units (phone sequences, words,...) to produce a speech signal that mimics the one to recognize, we may come close to transcription. • Analysis by Synthesis (which is in fact modeling) is a powerful tool in recognition and coding. • A trivial implementation is indexing a labelled speech memory

A L I S P Automatic Language Independent Speech Processing Automatic discovery of segmental units for speech coding, synthesis, recognition, language identification and speaker verification.

The robustness issue : • Mismatch between training and testing conditions • High Order Statistics are less sensitive to environment and transmission noise than autocorrelation • CMS, RASTA filtering • Independent Component Analysis • From Speaker Independent to Speaker Dependent recognition (Personalisation)

Expected achievements inAutomatic Speech Recognition • Dynamic nonlinear models should allow to merge feature extraction and classification under a common paradigm • Such models should be more robust to noise, channel distortions and missing data (transmission errors and packet losses) • Indexing a speech memory may help in the verification of hypotheses (a technique shared with Very Low Bit Rate Coders) • Statistical language models should be supplemented with adapted semantic information (conceptual graphs)

Voice technology in Majordome • Server side background tasks: continuous speech recognition applied to voice messages upon reception • Detection of sender’s name and subject • User interaction: • Speaker identification and verification • Speech recognition (receiving user commands through voice interaction) • Text-to-speech synthesis (reading text summaries, E-mails or faxes)

Collaboration with COST-278 • COST-278: Vocal Dialogue is a continuation of COST-249 • High interest in Robust Speech Recognition, Word spotting, Speech to actions, Speaker adaptation,... • Some members contribute to the Eureka-MAJORDOME project • Could be the seed for a Network of Excellence in FP6

Evaluation paradigm • DARPA • NIST • http://www.nist.gov/speech/tests/spk/index.htm Could we organize evaluation campaigns in Europe ? The 6th program of the EU is trying to promote Networks of Excellence. How should excellence be evaluated ? Should financial support be correlated with evaluation results ?

![[Advanced] Speech & Audio Signal Processing](https://cdn2.slideserve.com/3915760/advanced-speech-audio-signal-processing-dt.jpg)