Multi-Abstraction Retrieval

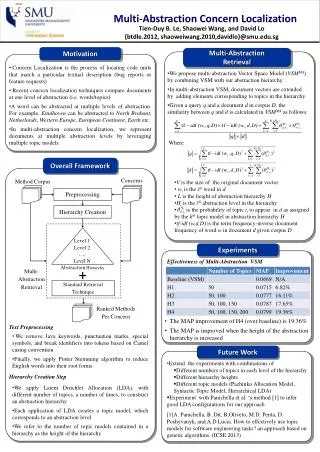

Concerns. Method Corpus. Preprocessing. Multi-Abstraction Concern Localization. Hierarchy Creation. Tien-Duy B. Le, Shaowei Wang, and David Lo {btdle.2012, shaoweiwang.2010,davidlo}@smu.edu.sg. Level 1. Level 2. …. Motivation. Level N. Multi-Abstraction Retrieval.

Multi-Abstraction Retrieval

E N D

Presentation Transcript

Concerns Method Corpus Preprocessing Multi-Abstraction Concern Localization Hierarchy Creation Tien-Duy B. Le, Shaowei Wang, and David Lo {btdle.2012, shaoweiwang.2010,davidlo}@smu.edu.sg Level 1 Level 2 …. Motivation Level N Multi-Abstraction Retrieval Abstraction Hierarchy + Multi-Abstraction Retrieval Standard Retrieval Technique • Concern Localization is the process of locating code units that match a particular textual description (bug reports or feature requests) • Recent concern localization techniques compare documents at one level of abstraction (i.e. words/topics) • A word can be abstracted at multiple levels of abstraction. For example, Eindhoven can be abstracted to North Brabant, Netherlands, Western Europe, European Continent, Earth etc. • In multi-abstraction concern localization, we represent documents at multiple abstraction levels by leveraging multiple topic models. • We propose multi-abstraction Vector Space Model (VSMMA) by combining VSM with our abstraction hierarchy. • In multi-abstraction VSM, document vectors are extended by adding elements corresponding to topics in the hierarchy. • Given a query q and a document d in corpus D, the similarity between q and d is calculated in VSMMA as follows: Ranked Methods Per Concern Where Overall Framework • V is the size of the original document vector • wi is the ith word in d • L is the height of abstraction hierarchy H • Hiis the ith abstraction level in the hierarchy • is the probability of topic ti to appear in d as assigned by the kth topic model in abstraction hierarchy H • tf-idf (w,d,D) is the term frequency-inverse document frequency of word w in document d given corpus D Experiments Effectiveness of Multi-Abstraction VSM • The MAP improvement of H4 (over baseline) is 19.36% • The MAP is improved when the height of the abstraction hierarchy is increased Text Preprocessing • We remove Java keywords, punctuation marks, special symbols, and break identifiers into tokens based on Camel casing convention • Finally, we apply Porter Stemming algorithm to reduce English words into their root forms. Future Work • Extend the experiments with combinations of • Different numbers of topics in each level of the hierarchy • Different hierarchy heights • Different topic models (Pachinko Allocation Model, Syntactic Topic Model, Hierarchical LDA) • Experiment with Panichella et al. ‘s method [1] to infer good LDA configurations for our approach • [1]A. Panichella, B. Dit, R.Oliveto, M.D. Penta, D. Poshyvanyk, and A.D Lucia. How to effectively use topic models for software engineering tasks? an approach based on genetic algorithms. (ICSE 2013) Hierarchy Creation Step • We apply Latent Dirichlet Allocation (LDA), with different number of topics, a number of times, to construct an abstraction hierarchy • Each application of LDA creates a topic model, which corresponds to an abstraction level. • We refer to the number of topic models contained in a hierarchy as the height of the hierarchy