Missing Data in Randomized Control Trials

John W. Graham, The Prevention Research Center and Department of Biobehavioral Health, Penn State University. IES/NCER Summer Research Training Institute, August 2, 2010. jgraham@psu.edu

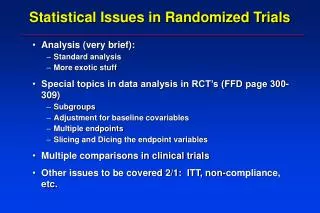

Sessions in Three Parts • (1) Introduction: Missing Data Theory • (2) Attrition: Bias and Lost Power • After the break ... • (3) Hands-on with Multiple Imputation • Multiple Imputation with NORM • SPSS Automation Utility (New!) • SPSS Regression • HLM Automation Utility (New!) • 2-Level Regression with HLM 6

Recent Papers • Graham, J. W. (2009). Missing data analysis: making it work in the real world. Annual Review of Psychology, 60, 549-576. • Graham, J. W., Cumsille, P. E., & Elek-Fisk, E. (2003). Methods for handling missing data. In J. A. Schinka & W. F. Velicer (Eds.), Research Methods in Psychology (pp. 87-114). Volume 2 of Handbook of Psychology (I. B. Weiner, Editor-in-Chief). New York: John Wiley & Sons. • Graham, J. W. (2010, forthcoming). Missing Data: Analysis and Design. New York: Springer. • Chapter 4: Multiple Imputation with Norm 2.03 • Chapter 6: Multiple Imputation and Analysis with SPSS 17/18 • Chapter 7: Multiple Imputation and Analysis with Multilevel (Cluster) Data

Recent Papers • Collins, L. M., Schafer, J. L., & Kam, C. M. (2001). A comparison of inclusive and restrictive strategies in modern missing data procedures. Psychological Methods, 6, 330-351. • Schafer, J. L., & Graham, J. W. (2002). Missing data: our view of the state of the art. Psychological Methods, 7, 147-177. • Graham, J. W., Taylor, B. J., Olchowski, A. E., & Cumsille, P. E. (2006). Planned missing data designs in psychological research. Psychological Methods, 11, 323-343.

Problem with Missing Data • Analysis procedures were designed for complete data. . .

Solution 1 • Design new model-based procedures • Missing Data + Parameter Estimation in One Step • Full Information Maximum Likelihood (FIML) • SEM and Other Latent Variable Programs (Amos, LISREL, Mplus, Mx, LTA)

Solution 2 • Data-based procedures • e.g., Multiple Imputation (MI) • Two Steps • Step 1: Deal with the missing data • (e.g., replace missing values with plausible values) • Produce a product • Step 2: Analyze the product as if there were no missing data

FAQ • Aren't you somehow helping yourself with imputation?. . .

NO. Missing data imputation . . . • does NOT give you something for nothing • DOES let you make use of all data you have . . .

FAQ • Is the imputed value what the person would have given?

NO. When we impute a value . . . • We do not impute for the sake of the value itself • We impute to preserve important characteristics of the whole data set . . .

We want . . . • unbiased parameter estimation • e.g., b-weights • Good estimate of variability • e.g., standard errors • best statistical power

Causes of Missingness • Ignorable • MCAR: Missing Completely At Random • MAR: Missing At Random • Non-Ignorable • MNAR: Missing Not At Random

MCAR (Missing Completely At Random) • MCAR 1: Cause of missingness is a completely random process (like a coin flip) • MCAR 2: (essentially MCAR) • Cause uncorrelated with variables of interest • Example: parents move • No bias if cause omitted

MAR (Missing At Random) • Missingness may be related to measured variables • But no residual relationship with unmeasured variables • Example: reading speed • No bias if you control for measured variables

MNAR (Missing Not At Random) • Even after controlling for measured variables ... • Residual relationship with unmeasured variables • Example: drug use reason for absence
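
The three mechanisms can be made concrete with a small simulation. The sketch below is an illustration only, not part of the original slides: the variable names, the logistic missingness model, and all coefficients are assumptions chosen for the demo. It generates a measured covariate `x` and an outcome `y` with true mean 0, deletes `y` values under each mechanism, and compares the complete-case mean of `y` with a mean that controls for `x` through a regression fitted to the observed cases.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100_000

# x: a measured covariate; y: the outcome of interest (true mean 0, variance 1).
x = rng.normal(size=n)
y = 0.6 * x + rng.normal(scale=0.8, size=n)


def logistic(z):
    return 1.0 / (1.0 + np.exp(-z))


# Boolean masks, True = y is missing. All coefficients are illustrative assumptions.
miss = {
    "MCAR": rng.random(n) < 0.3,                        # pure coin flip
    "MAR": rng.random(n) < logistic(-1.0 + 1.5 * x),    # depends only on measured x
    "MNAR": rng.random(n) < logistic(-1.0 + 1.5 * y),   # depends on the unseen y itself
}


def complete_case_mean(y, m):
    """Mean of y using only the cases where y is observed."""
    return y[~m].mean()


def adjusted_mean(x, y, m):
    """Fit y ~ x on observed cases, predict y for everyone, then average."""
    slope, intercept = np.polyfit(x[~m], y[~m], 1)
    return (intercept + slope * x).mean()


results = {
    name: (complete_case_mean(y, m), adjusted_mean(x, y, m))
    for name, m in miss.items()
}
for name, (cc, adj) in results.items():
    print(f"{name}: complete-case mean = {cc:+.3f}, adjusted mean = {adj:+.3f}")
```

Under these assumptions the complete-case mean should be close to 0 only for MCAR, controlling for `x` should remove the bias for MAR, and some bias should remain for MNAR even after the adjustment.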

MNAR Causes • The recommended methods assume missingness is MAR • But what if the cause of missingness is not MAR? • Should these methods be used when MAR assumptions not met? . . .

YES! These Methods Work! • Suggested methods work better than “old” methods • Multiple causes of missingness • Only small part of missingness may be MNAR • Suggested methods usually work very well

Methods: "Old" vs MAR vs MNAR • MAR methods (MI and ML) • are ALWAYS at least as good as, • usually better than "old" methods (e.g., listwise deletion) • Methods designed to handle MNAR missingness are NOT always better than MAR methods

Old Procedures: Analyze Complete Cases (listwise deletion) • may produce bias • you always lose some power • (because you are throwing away data) • reasonable if you lose only 5% of cases • often lose substantial power

Analyze Complete Cases (listwise deletion) • Response patterns for 5 cases on 4 variables (1 = observed, 0 = missing): • 1 1 1 1 • 0 1 1 1 • 1 0 1 1 • 1 1 0 1 • 1 1 1 0 • very common situation • only 20% (4 of 20) data points missing • but discard 80% of the cases
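
The arithmetic on this slide can be checked directly. The short sketch below encodes the five response patterns as a 0/1 matrix (reading 1 as observed and 0 as missing, which is an assumption consistent with the counts given) and computes both percentages.

```python
import numpy as np

# Rows are cases, columns are variables; 1 = observed, 0 = missing (assumed coding).
pattern = np.array([
    [1, 1, 1, 1],
    [0, 1, 1, 1],
    [1, 0, 1, 1],
    [1, 1, 0, 1],
    [1, 1, 1, 0],
])

# Share of individual data points that are missing: 4 of 20.
frac_points_missing = (pattern == 0).sum() / pattern.size

# Listwise deletion keeps only rows with no missing value: 1 of 5.
complete_cases = pattern.all(axis=1)
frac_cases_discarded = 1 - complete_cases.mean()

print(f"data points missing: {frac_points_missing:.0%}")
print(f"cases discarded:     {frac_cases_discarded:.0%}")
```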

Other "Old" Procedures • Pairwise deletion • May be of occasional use for preliminary analyses • Mean substitution • Never use it • Regression-based single imputation • generally not recommended ... except ...

Recommended Model-Based Procedures • Multiple Group SEM (Structural Equation Modeling) • Latent Transition Analysis (Collins et al.) • A latent class procedure

Recommended Model-Based Procedures • Raw Data Maximum Likelihood SEM, aka Full Information Maximum Likelihood (FIML) • Amos (James Arbuckle) • LISREL 8.5+ (Jöreskog & Sörbom) • Mplus (Bengt Muthén) • Mx (Michael Neale)

Amos, Mx, Mplus, LISREL 8.8 • Structural Equation Modeling (SEM) Programs • In Single Analysis ... • Good Estimation • Reasonable standard errors • Windows Graphical Interface

Limitation with Model-Based Procedures • That particular model must be what you want

Recommended Data-Based Procedures • EM Algorithm (ML parameter estimation) • Norm-Cat-Mix, EMcov, SAS, SPSS • Multiple Imputation • NORM, Cat, Mix, Pan (Joe Schafer) • SAS Proc MI • SPSS 17/18 (not quite yet) • LISREL 8.5+ • Amos

EM Algorithm • Expectation-Maximization • Alternate between • E-step: predict missing data • M-step: estimate parameters • Excellent (ML) parameter estimates • But no standard errors • must use bootstrap • or multiple imputation
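
To make the E-step and M-step concrete, here is a minimal sketch for the simplest possible case: two normally distributed variables, `x` fully observed and `y` partly missing. This is an illustration under those assumptions, not the implementation used by NORM, EMcov, SAS, or SPSS, and the function name `em_bivariate` is hypothetical. Note that the E-step fills in expected sufficient statistics (the expected `y` and expected `y**2`), not just predicted values, and that the function returns point estimates only, with no standard errors.

```python
import numpy as np


def em_bivariate(x, y, n_iter=200, tol=1e-10):
    """EM estimates of the mean vector and covariance matrix of (x, y).

    x is fully observed; missing y values are coded as NaN.
    Returns (mu, cov) as ML point estimates.
    """
    miss = np.isnan(y)
    # Starting values from the complete cases.
    mu = np.array([x.mean(), y[~miss].mean()])
    cov = np.cov(x[~miss], y[~miss], bias=True)

    for _ in range(n_iter):
        # E-step: expected y and y^2 for missing cases, given x and current parameters.
        slope = cov[0, 1] / cov[0, 0]
        resid_var = cov[1, 1] - slope * cov[0, 1]
        ey = np.where(miss, mu[1] + slope * (x - mu[0]), y)
        ey2 = np.where(miss, ey**2 + resid_var, y**2)

        # M-step: re-estimate parameters from the expected sufficient statistics.
        new_mu = np.array([x.mean(), ey.mean()])
        sxx = np.mean(x**2) - new_mu[0] ** 2
        sxy = np.mean(x * ey) - new_mu[0] * new_mu[1]
        syy = np.mean(ey2) - new_mu[1] ** 2
        new_cov = np.array([[sxx, sxy], [sxy, syy]])

        change = max(np.abs(new_mu - mu).max(), np.abs(new_cov - cov).max())
        mu, cov = new_mu, new_cov
        if change < tol:
            break
    return mu, cov
```

Calling `mu, cov = em_bivariate(x, y_with_nans)` on data where missingness in `y` depends only on `x` should recover the mean and variance of `y` more closely than the complete cases do.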

Multiple Imputation • Problem with Single Imputation: Too Little Variability • Because of Error Variance • Because covariance matrix is only one estimate

Too Little Error Variance • Imputed value lies on regression line

Restore Error . . . • Add random normal residual
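
The effect of the missing error variance, and of restoring it, can be seen in a small simulation. The sketch below is illustrative only; the data-generating values and the MCAR missingness rate are assumptions. It imputes missing `y` values twice: once with the regression prediction alone (values lie exactly on the regression line), and once with a random normal residual added to each prediction.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 50_000

# y has variance 1 by construction: 0.25 explained by x, 0.75 residual.
x = rng.normal(size=n)
y = 0.5 * x + rng.normal(scale=np.sqrt(0.75), size=n)

# Half of the y values go missing completely at random (illustrative assumption).
miss = rng.random(n) < 0.5

# Regression of y on x, fitted to the observed cases.
slope, intercept = np.polyfit(x[~miss], y[~miss], 1)
pred = intercept + slope * x
resid_sd = np.std(y[~miss] - pred[~miss], ddof=2)

# Single regression imputation: imputed values sit on the regression line.
y_reg = np.where(miss, pred, y)

# Stochastic regression imputation: add a random normal residual to each prediction.
y_stoch = np.where(miss, pred + rng.normal(scale=resid_sd, size=n), y)

var_reg = y_reg.var()
var_stoch = y_stoch.var()
print(f"true variance of y:            {y.var():.3f}")
print(f"regression imputation:         {var_reg:.3f}")
print(f"with random residual restored: {var_stoch:.3f}")
```

With these settings the regression-only version should understate the variance of `y` noticeably, while the version with the added residual should come out close to the true value. This addresses only the first source of lost variability; the uncertainty in the regression line itself is the subject of the next slide.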

Covariance Matrix (Regression Line) only One Estimate • Obtain multiple plausible estimates of the covariance matrix • ideally draw multiple covariance matrices from population • Approximate this with • Bootstrap • Data Augmentation (Norm) • MCMC (SAS)

Data Augmentation • stochastic version of EM • EM • E (expectation) step: predict missing data • M (maximization) step: estimate parameters • Data Augmentation • I (imputation) step: simulate missing data • P (posterior) step: simulate parameters

Data Augmentation • Parameters from consecutive steps ... • too related • i.e., not enough variability • after 50 or 100 steps of DA ... covariance matrices are like random draws from the population
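
The I-step and P-step alternation can be sketched for a deliberately simple model: `y` regressed on a fully observed `x`, with a noninformative prior on the regression parameters. This is a simplified illustration, not the multivariate normal algorithm that NORM runs; the function name `data_augmentation` and its arguments are hypothetical. Imputed data sets are saved only every `keep_every` steps so that the saved sets are far enough apart to behave like independent draws.

```python
import numpy as np


def data_augmentation(x, y, n_steps=1000, keep_every=100, seed=0):
    """Simplified data augmentation for y ~ x with missing y coded as NaN.

    Returns a list of completed copies of y, one per `keep_every` steps.
    """
    rng = np.random.default_rng(seed)
    miss = np.isnan(y)
    n = len(x)
    X = np.column_stack([np.ones(n), x])
    XtX_inv = np.linalg.inv(X.T @ X)

    # Starting parameter values from the complete cases.
    beta = np.linalg.lstsq(X[~miss], y[~miss], rcond=None)[0]
    sigma2 = np.var(y[~miss] - X[~miss] @ beta)

    y_work = y.copy()
    imputations = []
    for step in range(1, n_steps + 1):
        # I-step: simulate the missing data given the current parameters.
        noise = rng.normal(scale=np.sqrt(sigma2), size=miss.sum())
        y_work[miss] = X[miss] @ beta + noise

        # P-step: simulate parameters from their posterior given the completed data
        # (noninformative prior: scaled inverse chi-square for sigma2, normal for beta).
        beta_hat = XtX_inv @ X.T @ y_work
        sse = np.sum((y_work - X @ beta_hat) ** 2)
        sigma2 = sse / rng.chisquare(n - 2)
        beta = rng.multivariate_normal(beta_hat, sigma2 * XtX_inv)

        # Consecutive steps are too alike, so keep only widely spaced data sets.
        if step % keep_every == 0:
            imputations.append(y_work.copy())
    return imputations
```

For example, `data_augmentation(x, y, n_steps=500, keep_every=100)` would return five imputed data sets, each separated by 100 steps of DA.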

Multiple Imputation Allows: • Unbiased Estimation • Good standard errors • provided number of imputations (m) is large enough • too few imputations: reduced power with small effect sizes
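
The slides do not spell out how results from the m imputed data sets are combined. The standard approach is Rubin's rules: average the m estimates, and build the standard error from the average within-imputation variance plus the between-imputation variance inflated by (1 + 1/m). The sketch below shows that pooling step; the function name `pool_rubin` is hypothetical, and the numbers in the example are made up for illustration.

```python
import numpy as np


def pool_rubin(estimates, std_errors):
    """Pool one parameter across m imputed data sets using Rubin's rules.

    estimates:  the m point estimates (e.g., a b-weight from each data set)
    std_errors: the m corresponding standard errors
    Returns (pooled_estimate, pooled_standard_error).
    """
    q = np.asarray(estimates, dtype=float)
    se = np.asarray(std_errors, dtype=float)
    m = len(q)

    pooled = q.mean()                 # average of the m estimates
    within = np.mean(se**2)           # average sampling variance
    between = q.var(ddof=1)           # variance of the estimates across imputations
    total = within + (1 + 1 / m) * between
    return pooled, np.sqrt(total)


# Made-up example: three imputations of the same regression weight.
est, se = pool_rubin([1.0, 1.2, 0.8], [0.1, 0.1, 0.1])
print(f"pooled estimate = {est:.3f}, pooled SE = {se:.3f}")
```

The (1 + 1/m) factor is one place where the number of imputations enters directly: with small m, the between-imputation variance is both inflated and itself poorly estimated.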

From Graham, J. W., Olchowski, A. E., & Gilreath, T. D. (2007). How many imputations are really needed? Some practical clarifications of multiple imputation theory. Prevention Science, 8, 206-213.