Unsupervised Machine Learning


Presentation Transcript

Unsupervised Machine Learning
Claudio Duran, Cannistraci lab, BIOTEC
Robert Haase, Myers lab, MPI CBG
Mahmood Nazari, ABX-Cro / Schroeder lab, BIOTEC
Martin Weigert, Myers lab, MPI CBG
https://www.biotec.tu-dresden.de/research/cannistraci/lecture.html

Python RECAP How to read a .csv file?
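
The slide's own answer was not preserved in the transcript. One common way to read a .csv file is `pandas.read_csv`; the sketch below assumes pandas is installed and uses a small in-memory CSV with made-up column names so that it runs without a file on disk.

```python
import io

import pandas as pd

# Hypothetical CSV content, used only for illustration.
# With a real file you would pass its path instead, e.g. pd.read_csv("data.csv").
csv_text = "label,pixel0,pixel1\n5,0,12\n0,3,255\n"

# read_csv parses the first line as the header and returns a DataFrame
df = pd.read_csv(io.StringIO(csv_text))

print(df.shape)   # (number of rows, number of columns)
print(df.head())  # first rows of the table
```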

Python RECAP
List slicing (positions 0 1 2 3 4 5 6 7 8 9):
myList = [2,7,3,9,8,1,5,6,0,4]
myList[1:7] = ? [7,3,9,8,1,5]
myList[1:7:2] = ? [7,9,1]
myList[5:] = ? [1,5,6,0,4]
Manipulating pandas dataframes: in X[i,j], i selects the rows and j selects the columns.
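
The slicing answers can be checked directly in Python. The second half of this sketch is an assumption about what the slide meant by X[i,j]: a NumPy array accepts that notation as written, while a pandas dataframe needs `.iloc[i, j]` for position-based access. The array contents and column names are made up.

```python
import numpy as np
import pandas as pd

myList = [2, 7, 3, 9, 8, 1, 5, 6, 0, 4]

first = myList[1:7]     # positions 1 to 6 (the stop index 7 is excluded)
second = myList[1:7:2]  # positions 1, 3 and 5 (step of 2)
third = myList[5:]      # position 5 to the end

# Rows and columns: i picks the row, j picks the column
arr = np.array([[1, 2, 3],
                [4, 5, 6]])
entry = arr[1, 2]             # row 1, column 2

df = pd.DataFrame(arr, columns=["a", "b", "c"])
same_entry = df.iloc[1, 2]    # same position in a dataframe
```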

Today... • What is machine learning? • Where do we find it? • Unsupervised machine learning • Clustering • Dimensionality Reduction • Measurements for performance (Classification) • Exercises • MNIST

Machine learning Use of AI to give systems the ability to learn from experience. • Search engines • Anti-spam software • Credit card transactions • Digital cameras (face recognition)

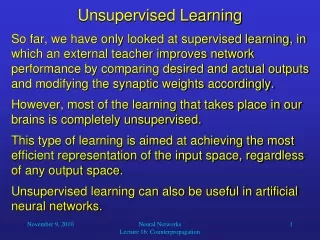

Machine learning
• Supervised (labels/response known): Regression, Classification
• Unsupervised (labels/response unknown): Dimensionality reduction, Clustering
• Reinforced (learning by reward): e.g. the game of Go

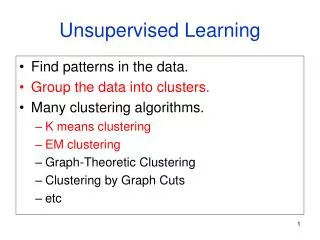

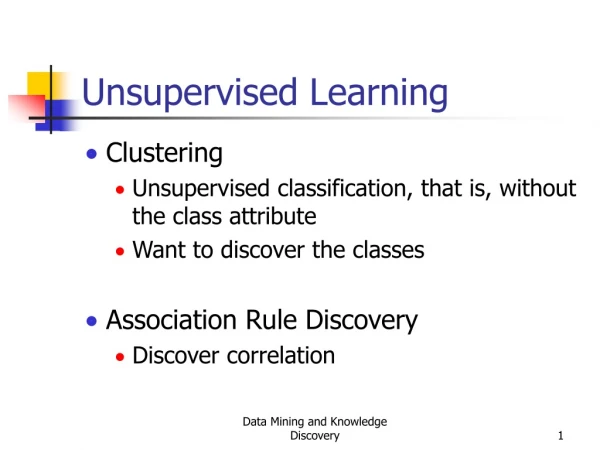

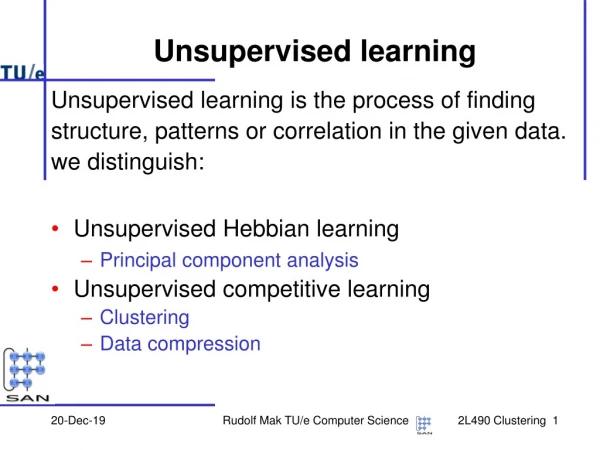

Unsupervised machine learning Informative way to visualize the data? Detect subgroups or patterns among the variables or observations?

Dimensionality Reduction Objective: to reduce the number of variables under consideration. How? The most common dimensionality reduction technique is Principal Component Analysis (PCA).

PCA Finds a sequence of linear combinations of the variables that have maximal variance and are mutually uncorrelated.

PCA in Python
• Never forget to import the library.
• Create a pca variable with the desired number of components.
• fit_transform is the function to call to apply the PCA transformation.
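
The code shown on this slide is not part of the transcript. A minimal sketch of the three steps, assuming the library is scikit-learn (the one named in the exercise hints) and using synthetic random data in place of a real dataset:

```python
import numpy as np
from sklearn.decomposition import PCA  # step 1: import the library

# Synthetic data for illustration only: 100 samples, 5 variables
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))

# Step 2: create the pca variable with the desired number of components
pca = PCA(n_components=2)

# Step 3: fit the model and project the data onto the components
X_pca = pca.fit_transform(X)

print(X_pca.shape)                    # one row per sample, one column per component
print(pca.explained_variance_ratio_)  # share of the variance captured by each component
```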

ISOMAP Non-linear dimensionality reduction

ISOMAP in Python: used like PCA (create the object with the desired number of components, then call fit_transform).
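
A sketch of the same pattern with scikit-learn's `Isomap` class. The swiss-roll dataset and the value of `n_neighbors` are illustrative assumptions, not taken from the slides.

```python
from sklearn.datasets import make_swiss_roll
from sklearn.manifold import Isomap

# Synthetic non-linear data: points lying on a rolled-up 2D sheet in 3D
X, t = make_swiss_roll(n_samples=300, random_state=0)

# n_neighbors controls the neighbourhood graph used to estimate geodesic
# distances; 10 is an arbitrary choice for this example
iso = Isomap(n_neighbors=10, n_components=2)
X_iso = iso.fit_transform(X)

print(X_iso.shape)
```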

Clustering Process of grouping a set of similar objects into the same classes. How many clusters?

K-Means An algorithm that groups objects, based on their attributes, into K groups.
1.- Place K centroids in random positions.
2.- Assign each point X to the closest centroid (minimum distance D).
3.- Each new centroid is the average of the points currently in its cluster C.
4.- Repeat steps 2 and 3 until convergence (no cluster assignment changes).
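
The four steps can be sketched in plain NumPy. This is an illustration under stated assumptions, not the lecture's code: the function name `kmeans` is made up, the initial centroids are drawn from the data points themselves (one common way to realise "random positions"), and distances are Euclidean.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Minimal K-Means sketch. Returns (labels, centroids)."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)

    # Step 1: start from k distinct data points chosen at random (assumption)
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    labels = None

    for _ in range(n_iter):
        # Step 2: assign every point to its closest centroid
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        new_labels = dists.argmin(axis=1)

        # Step 4: stop as soon as no assignment changes
        if labels is not None and np.array_equal(new_labels, labels):
            break
        labels = new_labels

        # Step 3: move each centroid to the mean of its current members
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # leave an empty cluster's centroid in place
                centroids[j] = members.mean(axis=0)

    return labels, centroids

# Two obvious groups on a line, for illustration
points = np.array([[0.0], [0.1], [0.2], [10.0], [10.1], [10.2]])
labels, centroids = kmeans(points, k=2)
print(labels, centroids.ravel())
```

Because the starting centroids are random, different seeds can give different cluster numbering, and on harder data different clusterings.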

K-Means in Python
• Pass the number of clusters we are looking for, "K", when creating the object.
• fit is the function that applies the clustering method.
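
A sketch with scikit-learn's `KMeans`, assuming that is the library behind the slide. The blob data, `n_init` and `random_state` are illustrative choices.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Synthetic data: 150 points around 3 centres
X, _ = make_blobs(n_samples=150, centers=3, cluster_std=0.5, random_state=0)

# n_clusters is "K", the number of clusters we are looking for
kmeans = KMeans(n_clusters=3, n_init=10, random_state=0)
kmeans.fit(X)  # fit applies the clustering method

labels = kmeans.labels_  # cluster index assigned to each sample
print(kmeans.cluster_centers_)
```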

DBSCAN Stands for Density-Based Spatial Clustering of Applications with Noise. Density-based clustering locates regions of high density that are separated from one another by regions of low density. Density = number of points within a specified radius (Eps).

DBSCAN Concepts:
• Core point: a point that has more than a specified number of points (MinPts) within Eps.
• Border point: has fewer than MinPts within Eps, but is in the neighbourhood of a core point.
• Noise point: any point that is neither a core point nor a border point.
If two core points are in each other's neighbourhood (within a distance Eps of one another), they are put in the same cluster.
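
The three point types can be computed directly from pairwise distances. In this sketch the function name `classify_points` is made up, and the counting convention is an assumption: a point counts itself as a neighbour and is a core point when it has at least `min_pts` neighbours, which matches scikit-learn's `min_samples`. Reading the slide's "more than MinPts" literally would shift the threshold by one.

```python
import numpy as np

def classify_points(X, eps, min_pts):
    """Return boolean masks (is_core, is_border, is_noise)."""
    X = np.asarray(X, dtype=float)

    # Pairwise Euclidean distances; neighbours[i, j] is True when j lies within eps of i
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbours = dists <= eps  # every point is its own neighbour

    # Core: enough points inside the Eps radius
    is_core = neighbours.sum(axis=1) >= min_pts

    # Border: not core, but at least one neighbour is a core point
    near_core = (neighbours & is_core[None, :]).any(axis=1)
    is_border = ~is_core & near_core

    # Noise: everything else
    is_noise = ~is_core & ~is_border
    return is_core, is_border, is_noise

# Four points on a unit square, one point hanging off it, one far away
pts = [[0, 0], [0, 1], [1, 0], [1, 1], [2, 1], [10, 10]]
is_core, is_border, is_noise = classify_points(pts, eps=1.0, min_pts=3)
print(is_core, is_border, is_noise)
```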

DBSCAN in Python
• Eps and MinPts are the input arguments.
• The clustering is applied with the same function as for K-Means (fit).
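
A sketch with scikit-learn's `DBSCAN`, assuming that is the library used on the slide. There Eps is the `eps` argument and MinPts is `min_samples`; the toy points and parameter values are made up.

```python
import numpy as np
from sklearn.cluster import DBSCAN

# Toy data: five points close together and one isolated point
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1], [2, 1], [10, 10]], dtype=float)

# eps is the radius (Eps); min_samples is MinPts and counts the point itself
db = DBSCAN(eps=1.1, min_samples=3)
db.fit(X)  # same call as for K-Means

labels = db.labels_  # cluster index per sample; -1 marks noise points
print(labels)
```

Unlike K-Means, the number of clusters is not an input: it follows from the density parameters.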

Performance measurements Usually applied in binary classification tasks (supervised learning):
- Accuracy
- Sensitivity (or recall, or true positive rate [TPR])
- Specificity (or true negative rate [TNR])
- Precision (or positive predictive value)
- F-score

Performance measurements [Figure: the set of all positive samples and the set of samples predicted as positive]

Performance measurements
- Accuracy
- Sensitivity (recall)
- Precision
- Specificity
- F-score
How to check clustering performance?
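
The formulas for these measures did not survive in the transcript. The sketch below uses their standard definitions in terms of true/false positives and negatives, with the F-score taken as the harmonic mean of precision and recall. The function name and the example counts are made up, and the code does not guard against division by zero.

```python
def binary_metrics(tp, fp, tn, fn):
    """Standard binary classification measures from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)  # correct predictions / all samples
    sensitivity = tp / (tp + fn)                # recall, TPR: found positives / all positives
    specificity = tn / (tn + fp)                # TNR: found negatives / all negatives
    precision = tp / (tp + fp)                  # true positives / predicted positives
    f_score = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f_score": f_score,
    }

# Hypothetical counts, for illustration only
metrics = binary_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```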

Exercises MNIST data: handwritten digits, 28x28 pixels, grey-value scale. Each image is transformed into an array of 1x784.

Exercises
1.- Load the MNIST data into a dataframe with pandas.
2.- Apply dimensionality reduction to the data (PCA and ISOMAP). EXTRA: find the MDS dimensionality reduction technique and apply it [Hint: sklearn library, google].
3.- Show both results in a scatter plot and colour the points according to the label (number) [do not forget to add a legend to the plot].
4.- Check if the clustering methods are able to retrieve the number of classes present in the data (10 classes). Try both K-Means and DBSCAN.
5.- Check the performance of the clustering methods in retrieving the classes by measuring the accuracy (HINT: correctly predicted samples / total number of samples).
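
For exercise 5, cluster indices are arbitrary, so they cannot be compared with the digit labels directly. One possible approach, which is an assumption here and not necessarily the intended solution, is to give each cluster the most frequent true label among its members and then count the correctly predicted samples. The function name `clustering_accuracy` is made up.

```python
import numpy as np

def clustering_accuracy(true_labels, cluster_labels):
    """Accuracy after mapping each cluster to its majority true label."""
    true_labels = np.asarray(true_labels)
    cluster_labels = np.asarray(cluster_labels)

    correct = 0
    for c in np.unique(cluster_labels):
        # True labels of the samples that ended up in cluster c
        members = true_labels[cluster_labels == c]
        # Samples carrying the majority label count as correctly predicted
        _, counts = np.unique(members, return_counts=True)
        correct += counts.max()

    # correctly predicted samples / total number of samples
    return correct / len(true_labels)

# Tiny made-up example: six samples, two classes, two clusters
acc = clustering_accuracy([0, 0, 0, 1, 1, 1], [1, 1, 0, 0, 0, 0])
print(acc)
```

Note that this treats the DBSCAN noise label (-1) as one more cluster, and that the score rises as the number of clusters grows, so it should be read together with the number of clusters found.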