Linear Classifiers

Explore linear classifiers for image classification, understanding distance projection, separating hyperplanes, Perceptron algorithm, and two-layer neural networks. Learn the basics and try an interactive demo.

Linear Classifiers

E N D

Presentation Transcript

Linear Classifiers Based on slides by William Cohen, Andrej Karpathy, Piyush Rai

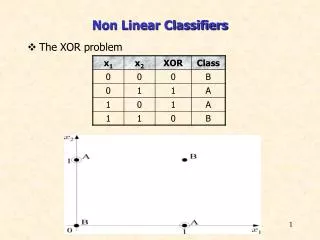

Linear Classifiers • Let’s simplify life by assuming: • Every instance is a vector of real numbers, x=(x1,…,xn). (Notation: boldface x is a vector.) • First we consider only two classes, y=(+1) and y=(-1) • A linear classifier is vector w of the same dimension as x that is used to make this prediction:

x2 Visually, x · w is the distance you get if you “project x onto w” w X1 . w X1 In 3d: lineplane In 4d: planehyperplane … X2 . w The line perpendicular to w divides the vectors classified as positive from the vectors classified as negative. -W

w -W Wolfram MathWorld Mediaboost.com

w -W Notice that the separating hyperplane goes through the origin…if we don’t want this we can preprocess our examples: or where b=w0 is called bias

Interactive Web Demo: http://vision.stanford.edu/teaching/cs231n/linear-classify-demo/

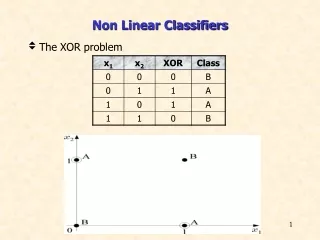

^ ^ Compute: yi = sign(wk . xi ) If mistake: wk+1 = wk + yixi yi yi Perceptron learning instancexi B A • 1957: The perceptron algorithm by Frank Rosenblatt • 1960: Perceptron Mark 1 Computer – hardware implementation • 1969: Minksky & Papert book shows perceptrons limited to linearly separable data • 1970’s: learning methods for two-layer neural networks