Classification with Decision Trees

Instructor: Qiang Yang, Hong Kong University of Science and Technology, Qyang@cs.ust.hk. Thanks: Eibe Frank and Jiawei Han.


DECISION TREE [Quinlan93] • An internal node represents a test on an attribute. • A branch represents an outcome of the test, e.g., Color=red. • A leaf node represents a class label or class label distribution. • At each node, one attribute is chosen to split training examples into distinct classes as much as possible • A new case is classified by following a matching path to a leaf node.

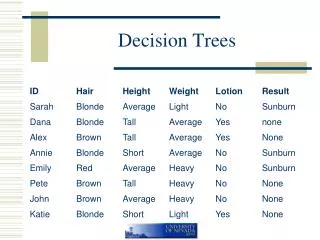

Example • Root test: Outlook • Outlook = sunny: test humidity (high → N, normal → P) • Outlook = overcast: P • Outlook = rain: test windy (true → N, false → P)

Building Decision Tree [Q93] • Top-down tree construction • At start, all training examples are at the root. • Partition the examples recursively by choosing one attribute each time. • Bottom-up tree pruning • Remove subtrees or branches, in a bottom-up manner, to improve the estimated accuracy on new cases.

Choosing the Splitting Attribute • At each node, available attributes are evaluated on the basis of separating the classes of the training examples. A Goodness function is used for this purpose. • Typical goodness functions: • information gain (ID3/C4.5) • information gain ratio • gini index

A criterion for attribute selection • Which is the best attribute? • The one which will result in the smallest tree • Heuristic: choose the attribute that produces the “purest” nodes • Popular impurity criterion: information gain • Information gain increases with the average purity of the subsets that an attribute produces • Strategy: choose attribute that results in greatest information gain

Computing information • Information is measured in bits • Given a probability distribution, the info required to predict an event is the distribution’s entropy • Entropy gives the information required in bits (this can involve fractions of bits!) • Suppose a set S has n values: $V_1, V_2, \ldots, V_n$, where $V_i$ has proportion $p_i$ • Formula for computing the entropy: $\mathrm{entropy}(p_1, p_2, \ldots, p_n) = -\sum_{i=1}^{n} p_i \log_2 p_i$ • E.g., the weather data has 2 values: Play=P and Play=N. Thus, $p_1 = 9/14$, $p_2 = 5/14$, and $\mathrm{entropy}(9/14, 5/14) \approx 0.940$ bits.
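As a minimal sketch, the entropy of a class distribution can be computed as follows. The function name `entropy` is an illustrative choice; terms with zero proportion are skipped, which corresponds to treating 0·log 0 as 0.

```python
import math


def entropy(proportions):
    """Entropy in bits of a distribution given as a list of proportions."""
    # Zero proportions are skipped: 0 * log2(0) is treated as 0.
    return -sum(p * math.log2(p) for p in proportions if p > 0)


# Weather data: 9 of 14 instances are Play=P, 5 of 14 are Play=N.
play_entropy = entropy([9 / 14, 5 / 14])
print(round(play_entropy, 3))
```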

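Example: attribute “Outlook” • “Outlook” = “Sunny”: $\mathrm{info}([2,3]) = \mathrm{entropy}(2/5, 3/5) = 0.971$ bits • “Outlook” = “Overcast”: $\mathrm{info}([4,0]) = \mathrm{entropy}(1, 0) = -1 \log_2 1 - 0 \log_2 0 = 0$ bits. Note: $0 \log_2 0$ is normally not defined; it is taken to be 0 here. • “Outlook” = “Rainy”: $\mathrm{info}([3,2]) = \mathrm{entropy}(3/5, 2/5) = 0.971$ bits • Expected information for attribute: $\mathrm{info}([2,3],[4,0],[3,2]) = \frac{5}{14} \times 0.971 + \frac{4}{14} \times 0 + \frac{5}{14} \times 0.971 \approx 0.693$ bits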

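Computing the information gain • Information gain: information before splitting – information after splitting • $\mathrm{gain}(\text{Outlook}) = \mathrm{info}([9,5]) - \mathrm{info}([2,3],[4,0],[3,2]) = 0.940 - 0.693 = 0.247$ bits • Information gain for attributes from weather data: gain(Outlook) = 0.247 bits, gain(Temperature) = 0.029 bits, gain(Humidity) = 0.152 bits, gain(Windy) = 0.048 bits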
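The gain computation can be reproduced with a short sketch. It assumes the slides use the standard 14-instance nominal weather data set, which is consistent with the 9/14 and 5/14 proportions quoted earlier; the table `WEATHER` and the helper names are illustrative.

```python
import math
from collections import Counter, defaultdict

# Assumed data: (outlook, temperature, humidity, windy, play).
WEATHER = [
    ("sunny", "hot", "high", "false", "no"),
    ("sunny", "hot", "high", "true", "no"),
    ("overcast", "hot", "high", "false", "yes"),
    ("rainy", "mild", "high", "false", "yes"),
    ("rainy", "cool", "normal", "false", "yes"),
    ("rainy", "cool", "normal", "true", "no"),
    ("overcast", "cool", "normal", "true", "yes"),
    ("sunny", "mild", "high", "false", "no"),
    ("sunny", "cool", "normal", "false", "yes"),
    ("rainy", "mild", "normal", "false", "yes"),
    ("sunny", "mild", "normal", "true", "yes"),
    ("overcast", "mild", "high", "true", "yes"),
    ("overcast", "hot", "normal", "false", "yes"),
    ("rainy", "mild", "high", "true", "no"),
]
ATTRIBUTES = ["outlook", "temperature", "humidity", "windy"]


def info(labels):
    """Entropy in bits of a list of class labels."""
    n = len(labels)
    return -sum(c / n * math.log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attr_index):
    """Information before splitting minus expected information after splitting."""
    labels = [row[-1] for row in rows]
    subsets = defaultdict(list)
    for row in rows:
        subsets[row[attr_index]].append(row[-1])
    after = sum(len(s) / len(rows) * info(s) for s in subsets.values())
    return info(labels) - after


gains = {name: information_gain(WEATHER, i) for i, name in enumerate(ATTRIBUTES)}
best = max(gains, key=gains.get)
for name, value in gains.items():
    print(f"{name}: {value:.3f} bits")
```

Under this assumption the attribute with the greatest gain is `outlook`, so it would be chosen at the root.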

The final decision tree • Note: not all leaves need to be pure; sometimes identical instances have different classes • Splitting stops when the data can’t be split any further

Highly-branching attributes • Problematic: attributes with a large number of values (extreme case: ID code) • Subsets are more likely to be pure if there is a large number of values • Information gain is biased towards choosing attributes with a large number of values • This may result in overfitting (selection of an attribute that is non-optimal for prediction) • Another problem: fragmentation

The gain ratio • Gain ratio: a modification of the information gain that reduces its bias on high-branch attributes • Gain ratio takes number and size of branches into account when choosing an attribute • It corrects the information gain by taking the intrinsic information of a split into account • Also called split ratio • Intrinsic information: entropy of distribution of instances into branches • (i.e. how much info do we need to tell which branch an instance belongs to)

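Gain Ratio • IntrinsicInfo should be • Large when data is evenly spread over all branches • Small when all data belong to one branch • For an attribute A that splits S into subsets $S_i$: $\mathrm{IntrinsicInfo}(S, A) = -\sum_i \frac{|S_i|}{|S|} \log_2 \frac{|S_i|}{|S|}$ • Gain ratio (Quinlan’86) normalizes info gain by IntrinsicInfo: $\mathrm{GainRatio}(S, A) = \frac{\mathrm{Gain}(S, A)}{\mathrm{IntrinsicInfo}(S, A)}$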

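Computing the gain ratio • Example: intrinsic information for ID code: $\mathrm{info}([1,1,\ldots,1]) = 14 \times \left(-\frac{1}{14} \log_2 \frac{1}{14}\right) = 3.807$ bits • Importance of attribute decreases as intrinsic information gets larger • Example of gain ratio: $\mathrm{GainRatio}(\text{Attribute}) = \frac{\mathrm{Gain}(\text{Attribute})}{\mathrm{IntrinsicInfo}(\text{Attribute})}$ • Example: every ID code subset is pure, so its gain is the full 0.940 bits and $\mathrm{GainRatio}(\text{ID code}) = \frac{0.940}{3.807} \approx 0.247$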
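A minimal sketch of the gain ratio, working directly from class counts per branch. The Outlook counts ([2,3], [4,0], [3,2]) assume the standard weather data; the ID code attribute is modeled as 14 single-instance branches. Function names are illustrative.

```python
import math


def info(counts):
    """Entropy in bits of a list of counts (zero counts contribute nothing)."""
    total = sum(counts)
    return -sum(c / total * math.log2(c / total) for c in counts if c > 0)


def gain_ratio(class_counts, branches):
    """class_counts: counts per class at the node.
    branches: one list of per-class counts for each branch of the split."""
    total = sum(class_counts)
    after = sum(sum(b) / total * info(b) for b in branches)
    gain = info(class_counts) - after
    # Intrinsic information: entropy of the branch sizes.
    # It is 0 when everything falls into one branch, so guard that case in real use.
    intrinsic = info([sum(b) for b in branches])
    return gain / intrinsic


outlook_ratio = gain_ratio([9, 5], [[2, 3], [4, 0], [3, 2]])
id_code_ratio = gain_ratio([9, 5], [[1, 0]] * 9 + [[0, 1]] * 5)
print(round(outlook_ratio, 3), round(id_code_ratio, 3))
```

Even after normalization the ID code attribute scores higher than Outlook here, which is why an additional ad hoc test is needed to exclude such attributes.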

More on the gain ratio • “Outlook” still comes out top • However: “ID code” has greater gain ratio • Standard fix: ad hoc test to prevent splitting on that type of attribute • Problem with gain ratio: it may overcompensate • May choose an attribute just because its intrinsic information is very low • Standard fix: • First, only consider attributes with greater than average information gain • Then, compare them on gain ratio

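Gini Index • If a data set T contains examples from n classes, the gini index gini(T) is defined as $\mathrm{gini}(T) = 1 - \sum_{j=1}^{n} p_j^2$, where $p_j$ is the relative frequency of class j in T. gini(T) is minimized if the classes in T are skewed. • After splitting T into two subsets $T_1$ and $T_2$ with sizes $N_1$ and $N_2$, the gini index of the split data is defined as $\mathrm{gini}_{split}(T) = \frac{N_1}{N} \mathrm{gini}(T_1) + \frac{N_2}{N} \mathrm{gini}(T_2)$, where $N = N_1 + N_2$ • The attribute providing the smallest $\mathrm{gini}_{split}(T)$ is chosen to split the node.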
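The two gini definitions translate directly into code. This is a sketch with illustrative function names; the example counts (a 9/5 node split into a 3/4 subset and a 6/1 subset) are assumed for demonstration.

```python
def gini(counts):
    """Gini index of a node given its per-class counts."""
    total = sum(counts)
    return 1.0 - sum((c / total) ** 2 for c in counts)


def gini_split(left, right):
    """Size-weighted gini index of a binary split (per-class counts for each side)."""
    n1, n2 = sum(left), sum(right)
    n = n1 + n2
    return n1 / n * gini(left) + n2 / n * gini(right)


before = gini([9, 5])
after = gini_split([3, 4], [6, 1])
print(round(before, 3), round(after, 3))
```

A pure node such as `gini([7, 0])` gives 0, the minimum, matching the remark that the index is minimized when the classes are skewed.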

Stopping Criteria • When all cases have the same class. The leaf node is labeled by this class. • When there is no available attribute. The leaf node is labeled by the majority class. • When the number of cases is less than a specified threshold. The leaf node is labeled by the majority class. • You can make a decision at every node in a decision tree! • How?
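Top-down construction with the three stopping criteria can be sketched as a short recursive procedure. This is an ID3-style illustration using information gain, not C4.5 itself; the row format (dicts), the `min_cases` parameter name, and the toy data are assumptions.

```python
import math
from collections import Counter


def info(labels):
    """Entropy in bits of a list of class labels."""
    n = len(labels)
    return -sum(c / n * math.log2(c / n) for c in Counter(labels).values())


def build_tree(rows, attributes, target, min_cases=2):
    labels = [r[target] for r in rows]
    majority = Counter(labels).most_common(1)[0][0]
    # Stopping criteria: one class left, no attribute left, or too few cases.
    if len(set(labels)) == 1 or not attributes or len(rows) < min_cases:
        return majority

    def gain(attr):
        after = 0.0
        for value in {r[attr] for r in rows}:
            subset = [r[target] for r in rows if r[attr] == value]
            after += len(subset) / len(rows) * info(subset)
        return info(labels) - after

    best = max(attributes, key=gain)
    remaining = [a for a in attributes if a != best]
    branches = {}
    for value in sorted({r[best] for r in rows}):
        subset = [r for r in rows if r[best] == value]
        branches[value] = build_tree(subset, remaining, target, min_cases)
    return {"attribute": best, "branches": branches}


# Toy training set (hypothetical).
ROWS = [
    {"outlook": "sunny", "windy": "false", "play": "N"},
    {"outlook": "sunny", "windy": "true", "play": "N"},
    {"outlook": "overcast", "windy": "false", "play": "P"},
    {"outlook": "overcast", "windy": "true", "play": "P"},
    {"outlook": "rain", "windy": "false", "play": "P"},
    {"outlook": "rain", "windy": "true", "play": "N"},
]
tree = build_tree(ROWS, ["outlook", "windy"], "play")
print(tree)
```

Because every call computes a majority class before deciding whether to split, a label is available at every node, not only at the leaves.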

Pruning • Pruning simplifies a decision tree to prevent overfitting to noise in the data • Two main pruning strategies: • Postpruning: takes a fully-grown decision tree and discards unreliable parts • Prepruning: stops growing a branch when information becomes unreliable • Postpruning is preferred in practice because prepruning can stop too early

Prepruning • Usually based on statistical significance test • Stops growing the tree when there is no statistically significant association between any attribute and the class at a particular node • Most popular test: chi-squared test • ID3 used chi-squared test in addition to information gain • Only statistically significant attributes were allowed to be selected by information gain procedure
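A chi-squared prepruning check can be sketched as below. It computes the Pearson statistic for an attribute-versus-class contingency table and compares it with a critical value. The table (Outlook against Play, assuming the standard weather data) and the 5% significance level are illustrative assumptions; 5.991 is the chi-squared critical value for 2 degrees of freedom at that level.

```python
def chi_squared(table):
    """Pearson chi-squared statistic; rows are attribute values, columns are classes."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence; assumes no empty row or column.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    return stat


# Degrees of freedom: (3 attribute values - 1) * (2 classes - 1) = 2.
CRITICAL_5PCT_DF2 = 5.991
outlook_table = [[2, 3], [4, 0], [3, 2]]  # (yes, no) counts per outlook value
stat = chi_squared(outlook_table)
allow_split = stat > CRITICAL_5PCT_DF2
print(round(stat, 3), allow_split)
```

With only 14 instances the association does not reach significance at this level, which illustrates how prepruning can stop early on small samples.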

Postpruning • Builds full tree first and prunes it afterwards • Attribute interactions are visible in fully-grown tree • Problem: identification of subtrees and nodes that are due to chance effects • Two main pruning operations: • Subtree replacement • Subtree raising • Possible strategies: error estimation, significance testing, MDL principle

Subtree replacement • Bottom-up: tree is considered for replacement once all its subtrees have been considered

Estimating error rates • Pruning operation is performed if this does not increase the estimated error • Of course, error on the training data is not a useful estimator (would result in almost no pruning) • One possibility: using hold-out set for pruning (reduced-error pruning) • C4.5’s method: using upper limit of 25% confidence interval derived from the training data • Standard Bernoulli-process-based method

Post-pruning in C4.5 • Bottom-up pruning: at each non-leaf node v, if merging the subtree at v into a leaf node improves accuracy, perform the merging. • Method 1: compute accuracy using examples not seen by the algorithm. • Method 2: estimate accuracy using the training examples: • Consider classifying E examples incorrectly out of N examples as observing E events in N trials in the binomial distribution. • For a given confidence level CF, the upper limit on the error rate over the whole population is $U_{CF}(N, E)$ with CF% confidence.
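One way to obtain such an upper limit is to solve the binomial tail equation numerically: find the error rate p at which observing at most E errors in N trials has probability CF. The sketch below does this by bisection. The function names are illustrative, and this exact binomial form is an assumption about how the limit is defined; the worked numbers later in these slides use a normal approximation with z = 0.69 instead, so the values differ.

```python
import math


def binomial_cdf(e, n, p):
    """Probability of at most e errors in n independent trials with error rate p."""
    return sum(math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(e + 1))


def upper_error_limit(n, e, cf=0.25):
    """Error rate p such that P(at most e errors in n trials) equals cf."""
    lo, hi = 0.0, 1.0
    for _ in range(60):  # bisection; the CDF decreases as p grows
        mid = (lo + hi) / 2
        if binomial_cdf(e, n, mid) > cf:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


print(round(upper_error_limit(6, 0), 3))
print(round(upper_error_limit(100, 6), 3))
```

For E = 0 the equation reduces to (1 - p)^N = CF, which gives a closed form to check the bisection against.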

Pessimistic Estimate • Usage in Statistics: Sampling error estimation • Example: • population: 1,000,000 people • population mean: percentage of left-handed people • sample: 100 people • sample mean: 6 left-handed • How to estimate the REAL population mean? • [Figure: probability (%) plotted against the percentage of left-handed people, with the 25% confidence interval around the sample value 6 running from $L_{0.25}(100, 6)$ to $U_{0.25}(100, 6)$; axis ticks at 2, 6 and 10]

C4.5’s method • Error estimate for subtree is weighted sum of error estimates for all its leaves • Error estimate for a node: $e = \dfrac{f + \frac{z^2}{2N} + z \sqrt{\frac{f}{N} - \frac{f^2}{N} + \frac{z^2}{4N^2}}}{1 + \frac{z^2}{N}}$ • If c = 25% then z = 0.69 (from normal distribution) • f is the error on the training data • N is the number of instances covered by the leaf

Example • [Figure: subtree rooted at Outlook with branches sunny → yes, overcast → yes, cloudy → no; the candidate replacement is a single leaf “yes”]

Example cont. • Consider a subtree rooted at Outlook with 3 leaf nodes: • Sunny: Play = yes (0 errors, 6 instances) • Overcast: Play = yes (0 errors, 9 instances) • Cloudy: Play = no (0 errors, 1 instance) • The estimated error for this subtree is • 6*0.074+9*0.050+1*0.323=1.217 • If the subtree is replaced with the leaf “yes”, the leaf covers 16 instances with 1 error, and the estimated error is 16*0.118 ≈ 1.89 • This is larger than 1.217, so no pruning is performed
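The numbers in this example can be checked with a short sketch of the normal-approximation error estimate with z = 0.69. The function name `pessimistic_error` is illustrative, and the formula used is the standard upper confidence bound for a Bernoulli proportion, which is assumed here because it reproduces the per-leaf values 0.074, 0.050 and 0.323.

```python
import math


def pessimistic_error(f, n, z=0.69):
    """Upper confidence bound on the error rate, given training error f on n instances."""
    numerator = (
        f
        + z * z / (2 * n)
        + z * math.sqrt(f / n - f * f / n + z * z / (4 * n * n))
    )
    return numerator / (1 + z * z / n)


# (errors, instances) for the leaves Sunny, Overcast, Cloudy.
leaves = [(0, 6), (0, 9), (0, 1)]
subtree_error = sum(n * pessimistic_error(e / n, n) for e, n in leaves)

# Replacing the subtree by the leaf "yes" misclassifies the single "no" instance.
leaf_error = 16 * pessimistic_error(1 / 16, 16)

prune = leaf_error <= subtree_error
print(round(subtree_error, 3), round(leaf_error, 3), prune)
```

The unrounded subtree total comes out slightly below 1.217 because the slide sums per-leaf values that were already rounded to three decimals.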

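Another Example • If we have a single leaf node: f = 5/14, e = 0.46 • If we split, the three leaves have: f = 0.33, e = 0.47; f = 0.5, e = 0.72; f = 0.33, e = 0.47 • We can compute the average error of the leaves using the ratios 6:2:6; this gives 0.51 • Since 0.46 is smaller than 0.51, the single leaf is preferred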

Other Trees • Classification Trees • Current node • Children nodes (L, R): • Decision Trees • Current node • Children nodes (L, R): • GINI index used in CART (STD= ) • Current node • Children nodes (L, R):

Efforts on Scalability • Most algorithms assume data can fit in memory. • Recent efforts focus on disk-resident implementation for decision trees. • Random sampling • Partitioning • Examples • SLIQ (EDBT’96 -- [MAR96]) • SPRINT (VLDB’96 -- [SAM96]) • PUBLIC (VLDB’98 -- [RS98]) • RainForest (VLDB’98 -- [GRG98])

Questions • Consider the following variations of decision trees

1. Apply KNN to each leaf node • Instead of choosing a class label as the majority class label, use KNN to choose a class label

2. Apply Naïve Bayesian at each leaf node • For each leaf node, use all the available information we know about the test case to make decisions • Instead of using the majority rule, use probability/likelihood to make decisions

3. Use error rates instead of entropy • If a node has N1 positive class labels P, and N2 negative class labels N, • If N1> N2, then choose P • The error rate = N2/(N1+N2) at this node • The expected error at a parent node can be calculated as weighted sum of the error rates at each child node • The weights are the proportion of training data in each child
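The error-rate criterion described here can be sketched in a few lines. Function names are illustrative; `min` is used so the same function covers both the N1 > N2 case and its mirror image. The example counts assume the Outlook split of the standard weather data.

```python
def node_error(n_pos, n_neg):
    """Error rate when the node is labeled with its majority class."""
    return min(n_pos, n_neg) / (n_pos + n_neg)


def expected_error(children):
    """Weighted sum of child error rates; weights are each child's share of the data.

    children: list of (n_pos, n_neg) pairs, one per child node."""
    total = sum(p + n for p, n in children)
    return sum((p + n) / total * node_error(p, n) for p, n in children)


parent = node_error(9, 5)
after_split = expected_error([(2, 3), (4, 0), (3, 2)])
print(round(parent, 3), round(after_split, 3))
```

An attribute would then be preferred when it gives the lowest expected error at the parent, in place of the lowest expected entropy.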

4. Cost Sensitive Decision Trees • When FP and FN have different costs, the leaf node label depends on the costs: • If growing a tree has a smaller total cost, • then choose an attribute with minimal total cost. • Otherwise, stop and form a leaf. • Label leaf according to minimal total cost: • Suppose the leaf has P positive examples and N negative examples • FP denotes the cost of a false positive example and FN the cost of a false negative • If (P×FN ≥ N×FP) THEN label = positive ELSE label = negative
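The leaf-labeling rule can be sketched as follows. Labeling the leaf negative turns all P positive examples into false negatives (cost P×FN), while labeling it positive turns all N negative examples into false positives (cost N×FP). The function name is illustrative, and breaking ties in favor of the positive label is an assumption.

```python
def label_leaf(p, n, fp_cost, fn_cost):
    """Return (label, total misclassification cost) for a leaf
    with p positive and n negative training examples."""
    cost_if_negative = p * fn_cost  # every positive becomes a false negative
    cost_if_positive = n * fp_cost  # every negative becomes a false positive
    if cost_if_negative >= cost_if_positive:
        return "positive", cost_if_positive
    return "negative", cost_if_negative


# With equal costs the majority class wins; with expensive false negatives it may not.
print(label_leaf(2, 8, fp_cost=1, fn_cost=1))
print(label_leaf(2, 8, fp_cost=1, fn_cost=10))
```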

5. When there are missing values in the test data, we allow tests to be performed to obtain them • Attribute selection criterion: minimal total cost ($C_{total} = C_{mc} + C_{test}$) instead of minimal entropy as in C4.5 • Typically, obtaining a value for a missing attribute (say Temperature) incurs a new cost • But it may increase the accuracy of prediction, thus reducing the misclassification cost • In general, there is a balance between the two costs • We care about the total cost

Stream Data • Suppose that you have built a decision tree from a set of training data • Now suppose that additional training data arrives at regular intervals and at high speed • How do you adapt the existing tree to fit the new data? • Suggest an ‘online’ algorithm. • Hint: find a paper online on this topic