
Lecture 1: MATHEMATICS OF THE BRAIN, with an emphasis on the problem of a universal learning computer (ULC) and a universal learning robot (ULR)
Victor Eliashberg
Consulting professor, Stanford University, Department of Electrical Engineering
Slide 0


WHAT DOES IT MEAN TO UNDERSTAND THE BRAIN?
1. User understanding.
2. Repairman understanding.
3. Programmer (educator) understanding.
4. Systems developer understanding.
5. Salesman understanding.
Slide 1

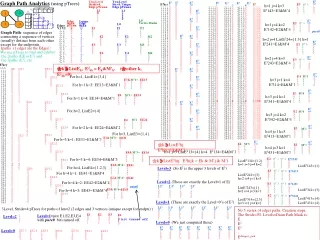

TWO MAIN APPROACHES
1. BIOLOGICALLY-INSPIRED ENGINEERING (bionics). Formulate biologically-inspired engineering or mathematical problems, and try to solve them in the most efficient engineering way. This approach has had big successes in engineering: the universal programmable computer vs. the human computer, the car vs. the horse, the airplane vs. the bird. It has not met with similar success in simulating human cognitive functions.
2. SCIENTIFIC / ENGINEERING (reverse engineering = hacking). Formulate biologically-inspired engineering or mathematical hypotheses. Study the implications of these hypotheses and try to falsify them, that is, try to eliminate biologically impossible ideas.
We believe the second approach has a better chance than the first to succeed in the area of brain-like computers and intelligent robots. Why? So far, attempts to define the concepts of learning and intelligence per se as engineering/mathematical concepts have led to less interesting problems than the original biological problems. Slide 2

HUMAN ROBOT Slide 3

CONTROL SYSTEM Slide 4

OUR MOST IMPORTANT PERSONAL COMPUTER
[Figure labels: 12 cranial nerves; ~10^10 neurons in each hemisphere; ~10^11 neurons; 31 pairs of spinal nerves (8, 12, 5, and 6 pairs); ~10^7 neurons.] Slide 5

The brain has very large but topologically simple circuitry. The cerebellar network shown has ~10^11 granule (Gr) cells and ~2.5×10^7 Purkinje (Pr) cells. There are around 10^5 synapses between the T-shaped axons of Gr cells and the dendrites of a single Pr cell. Memory is stored in such matrices.
LTM size (counting one byte per synapse):
Cerebellum: N = 2.5×10^7 × 10^5 = 2.5×10^12 B = 2.5 TB.
Neocortex: N = 10^10 × 10^4 = 10^14 B = 100 TB.
Slide 6
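The LTM estimates above are a single multiplication: number of cells times synapses per cell times bytes per synapse. A small Python check of that arithmetic, assuming (as the slide's "B" implies) one byte per synapse and decimal terabytes (10^12 bytes):

```python
# Back-of-envelope LTM capacity from the slide's figures.
# Assumption: each synapse stores about 1 byte; 1 TB = 10**12 bytes.
BYTES_PER_SYNAPSE = 1
TB = 1e12

def ltm_bytes(n_cells: float, synapses_per_cell: float) -> float:
    """LTM size in bytes = cells x synapses per cell x bytes per synapse."""
    return n_cells * synapses_per_cell * BYTES_PER_SYNAPSE

cerebellum = ltm_bytes(2.5e7, 1e5)  # Purkinje cells x Gr->Pr synapses per Pr cell
neocortex = ltm_bytes(1e10, 1e4)    # neocortical neurons x synapses per neuron

print(f"Cerebellum: {cerebellum:.1e} B = {cerebellum / TB:g} TB")
print(f"Neocortex:  {neocortex:.1e} B = {neocortex / TB:g} TB")
```

This prints 2.5 TB for the cerebellum and 100 TB for the neocortex, matching the slide.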

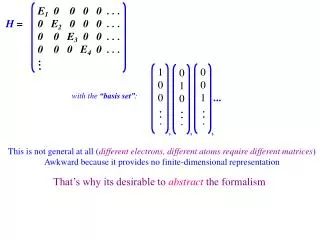

Big picture: cognitive system (Robot, World)
[Figure labels: external system (W, D); computing system B, simulating the work of the human nervous system; sensorimotor devices D; human-like robot (D, B); external world W.]
B(t) is a formal representation of B at time t, where t = 0 is the beginning of learning. B(0) is an untrained brain: B(0) = (H(0), g(0)), where H(0) = H is the representation of the brain hardware and g(0) is the representation of initial knowledge (the state of LTM). Slide 7
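A minimal Python sketch of the decomposition B(t) = (H, g(t)): the hardware H stays fixed for all t, and learning changes only the LTM state g. The lookup-table hardware, the dictionary encoding of g, and the method names `respond` and `learn` are hypothetical choices for illustration, and g(0) is taken to be empty for simplicity.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict

# Hypothetical encoding: the LTM state g is a table of learned associations.
Knowledge = Dict[str, str]

@dataclass(frozen=True)
class Brain:
    """B(t) = (H, g(t)): fixed hardware H plus a time-varying LTM state g(t)."""
    H: Callable[[Knowledge, str], str]  # hardware: computes a response from (g, input)
    g: Knowledge                         # knowledge: current state of LTM

    def respond(self, x: str) -> str:
        return self.H(self.g, x)

    def learn(self, x: str, y: str) -> "Brain":
        # Learning produces B(t+1) with a new g; H is carried over unchanged.
        return replace(self, g={**self.g, x: y})

def lookup_hardware(g: Knowledge, x: str) -> str:
    # Hypothetical hardware: return the stored association, or "?" if none.
    return g.get(x, "?")

B0 = Brain(H=lookup_hardware, g={})  # B(0): untrained brain, empty g(0)
B1 = B0.learn("a", "1")               # B(1): after one training experience
print(B0.respond("a"), B1.respond("a"))  # ? 1
print(B1.H is B0.H)                      # True
```

Making `Brain` immutable keeps each B(t) as a separate snapshot, so B(0) is still available after training.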

CONCEPT OF FORCED MOTOR TRAINING
[Figure labels: external system (W, D); brain (NS, NM, AM); motor control: SM, M associations; Teacher.]
During training, motor signals (M) can be controlled by the Teacher or by the learner (AM). Sensory signals (S) are received from the external system (W, D). Slide 8
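A toy Python sketch of forced motor training, assuming discrete S and M symbols and an exact lookup table standing in for AM (the lecture's model is not a plain table; `MotorLearner` and its methods are hypothetical). When the Teacher supplies M, it overrides the learner; either way, the learner records the M that actually occurred with S.

```python
from typing import Dict, Optional

class MotorLearner:
    """Hypothetical stand-in for AM: an exact table of S -> M associations."""

    def __init__(self) -> None:
        self.ltm: Dict[str, str] = {}

    def act(self, s: str) -> Optional[str]:
        # Learner's own motor output; None if nothing has been learned for s.
        return self.ltm.get(s)

    def step(self, s: str, teacher_m: Optional[str] = None) -> Optional[str]:
        # Forced training: when the Teacher supplies M, it overrides the learner.
        m = teacher_m if teacher_m is not None else self.act(s)
        if m is not None:
            # Record the M that actually occurred together with S.
            self.ltm[s] = m
        return m

am = MotorLearner()
for s, m in [("red", "stop"), ("green", "go")]:  # hypothetical training lessons
    am.step(s, teacher_m=m)                        # Teacher controls M
print(am.step("red"), am.step("green"), am.step("blue"))  # stop go None
```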

Working memory and mental imagery
[Figure labels: AS, MS, S associations; D, W, NS; motor control: AM, SM, M associations, NM; Teacher.]
TWO TYPES OF LEARNING Slide 10

Mental computations (thinking) as an interaction between motor control and working memory (EROBOT.EXE) Slide 11
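A toy Python sketch of "thinking" as an interaction between motor control and working memory. It assumes exact tables: AM maps S to M, and AS maps (S, M) to the next S, so after training AS can stand in for the external world. The one-dimensional world and the Teacher's policy are hypothetical; this is not EROBOT.EXE.

```python
from typing import Dict, List, Tuple

def world(s: int, m: str) -> int:
    """Hypothetical external system (W, D): a position on a line 0..3."""
    return min(s + 1, 3) if m == "right" else s

am_table: Dict[int, str] = {}              # AM: S -> M associations
as_table: Dict[Tuple[int, str], int] = {}  # AS: (S, M) -> next S associations

# Training in the real world; the Teacher moves right until position 3.
s = 0
for _ in range(4):
    m = "right" if s < 3 else "stay"  # Teacher controls M
    s_next = world(s, m)
    am_table[s] = m
    as_table[(s, m)] = s_next
    s = s_next

def think(s: int, steps: int) -> List[int]:
    """Mental computation: AM proposes M, AS imagines the next S; W is not used."""
    trace = [s]
    for _ in range(steps):
        m = am_table[s]
        s = as_table[(s, m)]
        trace.append(s)
    return trace

print(think(0, 4))  # [0, 1, 2, 3, 3]
```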

Motor and sensory areas of the neocortex
[Figure labels: motor control (AM); working memory, episodic memory, and mental imagery (AS).] Slide 12

Association fibers (neural busses) Slide 14

SYSTEM-THEORETICAL BACKGROUND Slide 15

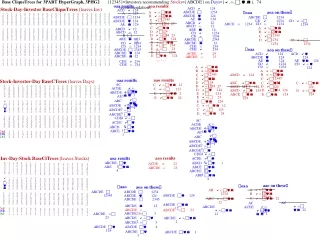

Fundamental constraint associated with the general levels of computing power:
Type 0: Turing machines (the highest computing power).
Type 1: Context-sensitive grammars.
Type 2: Context-free grammars (push-down automata).
Type 3: Finite-state machines.
Type 4: Combinatorial machines (the lowest computing power).
Traditional ANN models are below the red line. Symbolic systems go above the red line, but they require a read/write memory buffer. The brain doesn't have such a buffer.
Fundamental problem: How can the human brain achieve the highest level of computing power without a memory buffer? Slide 16
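To make the role of the read/write buffer concrete, here is a standard textbook example (not taken from the lecture): the language {a^n b^n : n ≥ 0} is context-free (Type 2) but not regular (Type 3). A finite-state machine with k states must end in the same state after a^i and a^j for some i ≠ j ≤ k, so it cannot check the match for every n; a push-down automaton can, because its stack is a memory buffer that grows with the input.

```python
def accepts_anbn(word: str) -> bool:
    """Recognize {a^n b^n : n >= 0} with an unbounded stack (a push-down automaton)."""
    stack = []
    seen_b = False
    for ch in word:
        if ch == "a":
            if seen_b:         # an 'a' after a 'b' is never allowed
                return False
            stack.append("A")  # write to the memory buffer
        elif ch == "b":
            seen_b = True
            if not stack:      # more b's than a's so far
                return False
            stack.pop()        # read and erase from the memory buffer
        else:
            return False       # symbol outside the alphabet {a, b}
    return not stack           # every 'a' must be matched by a 'b'

print([accepts_anbn(w) for w in ["", "ab", "aabb", "aab", "abab"]])
```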

General structure of universal programmable systems of different types
Type 4: Combinatorial machines
[Figure labels: X = {a, b, c}; Y = {0, 1}; PROM G with entries a b c b a c and 0 1 0 0 1 1; f: X × G → Y maps input x to output y.]
PROM stands for Programmable Read-Only Memory. In psychological terms, PROM can be thought of as Long-Term Memory (LTM). The letter G refers to the notion of synaptic gain. Slide 17
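A minimal Python sketch of a Type 4 machine: the "hardware" f is one fixed table lookup, and all behavior comes from the PROM contents G. The two programs `G1` and `G2` are hypothetical examples, not the slide's table. Because X is finite, the 2^3 = 8 possible programs cover every function X → Y, which is what makes this structure universal at its level.

```python
from itertools import product
from typing import Dict

X = ("a", "b", "c")
Y = (0, 1)

def f(x: str, G: Dict[str, int]) -> int:
    """Fixed hardware f: X x G -> Y. The PROM G alone determines the behavior."""
    if x not in X:
        raise ValueError(f"input {x!r} is not in X")
    return G[x]

# Two hypothetical programs for the same hardware.
G1 = {"a": 0, "b": 1, "c": 0}
G2 = {"a": 1, "b": 1, "c": 0}
print([f(x, G1) for x in X], [f(x, G2) for x in X])  # [0, 1, 0] [1, 1, 0]

# Every function X -> Y corresponds to exactly one PROM program.
all_programs = [dict(zip(X, bits)) for bits in product(Y, repeat=len(X))]
print(len(all_programs))  # 8
```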

Type 3: Finite-state machines
f: X × S × G → S × Y
[Figure: input x ∈ X = {a, b, c}; state s ∈ S = Y = {0, 1}; the PROM G holds a table mapping (x, s) to (snext, y); a register stores snext as the next s.] Slide 18
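The same idea for a Type 3 machine, as a Python sketch: f is still one table lookup, but now it reads the state register s and writes snext back into it. The program G (tracking the parity of the number of a's, with y equal to the new state) is a hypothetical example; the slide's own table is not reproduced.

```python
from typing import Dict, List, Tuple

# Hypothetical PROM program G: (x, s) -> (snext, y), with S = Y = {0, 1}.
G: Dict[Tuple[str, int], Tuple[int, int]] = {}
for x in "abc":
    for s in (0, 1):
        snext = 1 - s if x == "a" else s  # flip the parity bit on each 'a'
        G[(x, s)] = (snext, snext)        # output y copies the new state

def f(x: str, s: int, G: Dict[Tuple[str, int], Tuple[int, int]]) -> Tuple[int, int]:
    """Fixed hardware f: X x S x G -> S x Y, one table lookup per step."""
    return G[(x, s)]

def run(word: str, G: Dict[Tuple[str, int], Tuple[int, int]], s0: int = 0) -> List[int]:
    s = s0  # contents of the state register
    ys = []
    for x in word:
        s, y = f(x, s, G)  # snext is written back into the register
        ys.append(y)
    return ys

print(run("abacaa", G))  # [1, 1, 0, 0, 1, 0]
```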

Type 0: Turing machines (state machines coupled with a read/write external memory)
f: X × S × G × M → S × M × Y
[Figure labels: PROM G; input x; output y; state s and snext register; memory buffer M, e.g., a tape.] Slide 19
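A minimal Turing-machine sketch in the same style: the fixed function f is one lookup in a PROM program G, and the tape plays the role of the memory buffer M. The program (binary increment, head starting on the rightmost digit) is a hypothetical illustration, and the external input x and output y are omitted.

```python
from collections import defaultdict
from typing import Dict, Tuple

BLANK = "_"
# Hypothetical PROM program G: (state, read symbol) -> (next state, write symbol, head move).
G: Dict[Tuple[str, str], Tuple[str, str, int]] = {
    ("carry", "1"): ("carry", "0", -1),  # 1 + carry = 0, propagate the carry left
    ("carry", "0"): ("halt", "1", 0),    # 0 + carry = 1, done
    ("carry", BLANK): ("halt", "1", 0),  # ran off the left end: new leading 1
}

def run_tm(bits: str, G: Dict[Tuple[str, str], Tuple[str, str, int]], max_steps: int = 1000) -> str:
    tape = defaultdict(lambda: BLANK, enumerate(bits))  # read/write memory buffer M
    head, state = len(bits) - 1, "carry"
    for _ in range(max_steps):
        if state == "halt":
            break
        # One step of f: read the tape, then write the new state, symbol, and move.
        state, tape[head], move = G[(state, tape[head])]
        head += move
    cells = range(min(tape), max(tape) + 1)
    return "".join(tape[i] for i in cells).strip(BLANK)

print(run_tm("1011", G), run_tm("111", G))  # 1100 1000
```

Unlike the Type 3 sketch, the tape can grow without bound (note the new leading 1 written at position -1 for "111"), which is exactly the read/write buffer the earlier slide says the brain lacks.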

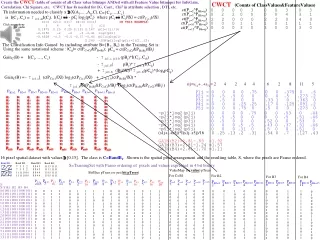

Basic architecture of a primitive E-machine
[Block diagram, top to bottom:]
Inputs: association inputs; data inputs to ILTM.
INPUT LONG-TERM MEMORY (ILTM): decoding, input learning → similarity function.
E-STATES (dynamic STM and ITM), with control inputs: modulation, next E-state procedure → modulated (biased) similarity function.
CHOICE → selected subset of active locations of OLTM; control outputs.
OUTPUT LONG-TERM MEMORY (OLTM), with data inputs to OLTM: encoding, output learning.
Outputs: association outputs; data outputs from OLTM.
Slide 20
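A toy Python sketch of the data flow through these blocks. The slide names the blocks but not their formulas, so every concrete choice here is an assumption made for illustration: dot-product similarity in ILTM, E-states as per-location biases added with a gain, winner-take-all CHOICE, and exponential decay plus excitation of the winner as the next E-state procedure. `PrimitiveEMachine` and its parameters are hypothetical names.

```python
from typing import List

class PrimitiveEMachine:
    """Toy sketch: ILTM/OLTM as paired lists, E-states as decaying per-location biases."""

    def __init__(self, decay: float = 0.5, gain: float = 0.3) -> None:
        self.iltm: List[List[float]] = []  # input LTM: stored input vectors
        self.oltm: List[str] = []          # output LTM: stored outputs
        self.e: List[float] = []           # E-states: one dynamic bias per location
        self.decay, self.gain = decay, gain

    def learn(self, x: List[float], y: str) -> None:
        # Input/output learning: write one new location into ILTM and OLTM.
        self.iltm.append(list(x))
        self.oltm.append(y)
        self.e.append(0.0)

    def step(self, x: List[float]) -> str:
        # Decoding: similarity of x to every ILTM location (dot product, assumed).
        sim = [sum(a * b for a, b in zip(row, x)) for row in self.iltm]
        # Modulation: bias the similarity by the E-states.
        biased = [s + self.gain * e for s, e in zip(sim, self.e)]
        # Choice: winner-take-all (ties go to the earliest location).
        k = max(range(len(biased)), key=biased.__getitem__)
        # Next E-state procedure: decay all biases, then excite the winner.
        self.e = [self.decay * e for e in self.e]
        self.e[k] += 1.0
        # Encoding: read the output stored at the selected OLTM location.
        return self.oltm[k]

m = PrimitiveEMachine()
m.learn([1.0, 0.0], "A")
m.learn([0.0, 1.0], "B")
print(m.step([1.0, 0.0]), m.step([0.5, 0.5]), m.step([0.0, 1.0]))  # A A B
```

The middle call shows the E-states at work: the ambiguous input matches both locations equally, and the residual bias from the previous step tips the choice toward "A".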

The brain as a complex E-machine
[Figure labels: W, D; subcortical systems; sensory cortex: S1, AS1, …, ASk; motor cortex: M1, AM1, …, AMm.] Slide 21

A GLANCE AT THE SENSORIMOTOR DEVICES Slide 21

VISION Slide 22

EYE Slide 23

EYE MOVEMENT CONTROL Slide 24

AUDITORY AND VESTIBULAR SENSORS Slide 25

AUDITORY PREPROCESSING
[Figure labels: ~100,000,000 cells; ~580,000 cells; ~4,000 inner hair cells; ~12,000 outer hair cells; ~390,000 cells; ~90,000 cells; ~30,000 fibers.] Slide 26

OTHER STUFF Slide 27

EMOTIONS (1) Slide 28

EMOTIONS (2) Slide 29

SPINAL MOTOR CONTROL
[Figure labels: sensory fibers; motor fibers.] Slide 30