Possible scenarios for LHC data analysis

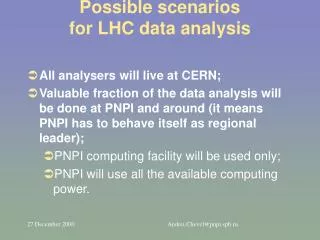

Possible scenarios for LHC data analysis
• All analysts will be based at CERN;
• A significant fraction of the data analysis will be done at PNPI and nearby institutes (this means PNPI has to act as a regional leader);
• Only the PNPI computing facility will be used;
• PNPI will use all the available computing power.
Andrei.Chevel@pnpi.spb.ru

LHC data analysis at PNPI and nearby institutes
• In total, by 2005 we should have:
  • 50K-90K SPECint95 of processor power;
  • 2-5 TB of disk cache;
  • a 10-50 TB tape library (tape robot);
• The equipment could be deployed in different ways:
  • in one place (for example, at PNPI);
  • in several places in SPb and Gatchina.

HEPD's most urgent computing needs
• We should have as soon as possible:
  • a small tape library (10 cartridges) with a DLT 8000 drive (about $10K);
  • 0.5 TB of disk cache (about $10K);
  • 1K SPECint95 of processor power (about 10 dual-processor 1 GHz machines) (about $20K);
  • network equipment and accessories (about $2K).
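The figures on this slide and on the 2005 target slide can be put side by side with a little arithmetic. The following is a minimal sketch, not part of the original talk: the dictionary keys and function names are illustrative, the costs are the approximate values quoted above, and the ratios only compare raw capacity without accounting for any change in hardware prices or performance.

```python
# Approximate costs quoted on the slide, in thousands of US dollars.
urgent_items_kusd = {
    "tape library (10 cartridges, DLT 8000 drive)": 10,
    "0.5 TB disk cache": 10,
    "1K SPECint95 of CPU (about 10 dual-processor 1 GHz machines)": 20,
    "network equipment and accessories": 2,
}


def total_cost_kusd(items):
    """Sum the approximate item costs (thousands of US dollars)."""
    return sum(items.values())


def scale_factor_range(target_range, current):
    """Return (low, high) ratios of a 2005 target range to the urgent request."""
    low, high = target_range
    return low / current, high / current


total = total_cost_kusd(urgent_items_kusd)

# 2005 targets from the earlier slide versus the urgent request on this one.
cpu_scale = scale_factor_range((50_000, 90_000), 1_000)  # SPECint95
disk_scale = scale_factor_range((2.0, 5.0), 0.5)         # TB of disk cache

print(f"Urgent request total: about ${total}K")
print(f"CPU growth needed by 2005: {cpu_scale[0]:.0f}x to {cpu_scale[1]:.0f}x")
print(f"Disk growth needed by 2005: {disk_scale[0]:.0f}x to {disk_scale[1]:.0f}x")
```

Under these assumptions the urgent request comes to roughly $42K, and the 2005 targets are 50 to 90 times larger in processor power and 4 to 10 times larger in disk cache.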

Existing and planned channels in the St. Petersburg region

HEPD urgently needs a link of at least 2 Mbit/s to SPb
• The currently available link from PNPI to SPb is well below an acceptable level;
• That means PNPI needs to upgrade the link to SPb (not the link to the Internet);
• I would like to emphasize that the partner universities in SPb need the same (a good channel between the universities and PNPI).
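To give a feel for what a 2 Mbit/s link means for data analysis, the sketch below estimates how long it would take to move a data set over it. This is an illustration under stated assumptions, not a measurement: it reads "2 Mbit" as 2 Mbit/s, uses decimal units, assumes the link is fully available with no protocol overhead, and takes the 0.5 TB disk cache figure from the urgent-needs slide as the data volume. The function name and the `efficiency` parameter are hypothetical.

```python
TB = 10**12      # bytes, decimal terabyte (assumption)
MBIT = 10**6     # bits, decimal megabit (assumption)
SECONDS_PER_DAY = 86_400


def transfer_time_days(data_bytes, link_bits_per_second, efficiency=1.0):
    """Estimate the transfer time in days.

    efficiency is the usable fraction of the nominal link rate
    (1.0 means an ideal, fully dedicated link).
    """
    seconds = data_bytes * 8 / (link_bits_per_second * efficiency)
    return seconds / SECONDS_PER_DAY


# Moving 0.5 TB over an ideal 2 Mbit/s link.
days = transfer_time_days(0.5 * TB, 2 * MBIT)
print(f"0.5 TB over 2 Mbit/s: about {days:.1f} days")
```

Even in this idealized case the transfer takes about 23 days, which suggests why 2 Mbit/s is stated as a minimum rather than a comfortable target.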

BCFpc hardware (picture)

Projects for distributed computing
• Main goal of all the projects:
  • to provide more or less uniform access to distributed data, computing power, and other resources
• GriPhyN: Grid Physics Network (www.griphyn.org)
• DataGrid (grid.web.cern.ch/grid)
• RRCF (www.pnpi.spb.ru/RRCF)

Tasks completed in 2000 (1)
• Seminar at SPb University (A. Chevel, March 2000);
• CERNlib and related software were installed at IHPC&DB (A. Chevel, T. Serebrova);
• A paper on LHC computing was published in the Russian journal 'Byte Russia', number 2, 2000;
• Meetings at PNPI on LHC computing (see 'www.pnpi.spb.ru/RRCF'; all people from CSD);
• Upgrade of the existing computing farm (A. Lodkin, A. Oreshkin).

Tasks completed in 2000 (2)
• A testbed setup for the Globus system was created:
  • Globus was installed on 'pcfarm' and on a Linux station;
  • The staff of HPC&DB were asked to install Globus on 'spp.csa.ru' (the installation is in progress now).
• The AFS client was installed on 'pcfarm'.

Conclusion
• HEPD needs to upgrade the link to SPb in the near future (2001?).
• HEPD needs to build a 10-20 processor computing farm in the near future (2001?).
The End