Optimistic Intra-Transaction Parallelism using Thread Level Speculation

Chris Colohan1, Anastassia Ailamaki1, J. Gregory Steffan2 and Todd C. Mowry1,3
1 Carnegie Mellon University, 2 University of Toronto, 3 Intel Research Pittsburgh



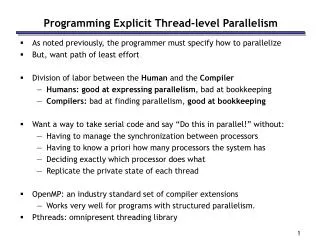

Chip Multiprocessors are Here!
• 2 cores now, soon will have 4, 8, 16, or 32
• Multiple threads per core
• How do we best use them?
[Pictured: AMD Opteron, IBM Power 5, Intel Yonah]

Multi-Core Enhances Throughput
[Diagram labels: Users, Transactions, Database Server, DBMS, Database]
Cores can run concurrent transactions and improve throughput.

Using Multiple Cores
[Diagram labels: Users, Transactions, Database Server, DBMS, Database]
Can multiple cores improve transaction latency?

Parallelizing transactions
Transaction running in the DBMS:
    SELECT cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    foreach(item) {
        GET quantity FROM stock;
        quantity--;
        UPDATE stock WITH quantity;
        INSERT item INTO order_line;
    }
• Intra-query parallelism
• Used for long-running queries (decision support)
• Does not work for short queries
• Short queries dominate in commercial workloads

Parallelizing transactions
Transaction running in the DBMS:
    SELECT cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    foreach(item) {
        GET quantity FROM stock;
        quantity--;
        UPDATE stock WITH quantity;
        INSERT item INTO order_line;
    }
• Intra-transaction parallelism
• Each thread spans multiple queries
• Hard to add to existing systems!
• Need to change interface, add latches and locks, worry about correctness of parallel execution…

Parallelizing transactions
Transaction running in the DBMS:
    SELECT cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    foreach(item) {
        GET quantity FROM stock;
        quantity--;
        UPDATE stock WITH quantity;
        INSERT item INTO order_line;
    }
• Intra-transaction parallelism
• Breaks transaction into threads
• Hard to add to existing systems!
• Need to change interface, add latches and locks, worry about correctness of parallel execution…
Thread Level Speculation (TLS) makes parallelization easier.

Thread Level Speculation (TLS)
[Figure: the same loads and stores through *p and *q (=*p, *p=, =*q, *q=) drawn along a Time axis twice: once Sequential, once Parallel and split into Epoch 1 and Epoch 2]

Thread Level Speculation (TLS)
• Use epochs
• Detect violations
• Restart to recover
• Buffer state
• Oldest epoch:
  • Never restarts
  • No buffering
• Worst case:
  • Sequential
• Best case:
  • Fully parallel
[Figure: Sequential vs. Parallel execution over Time with Epoch 1 and Epoch 2; a load of *p that conflicts with a store to *p is marked "Violation!"]
Data dependences limit performance.
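The epoch rules on this slide can be imitated in software. The sketch below is a toy model only, not the hardware mechanism from the talk: all names (`Epoch`, `run_speculatively`, `decrement`) are hypothetical, epochs run one after another against a snapshot to mimic parallel execution, and violations are checked at commit time rather than at the moment of the conflicting store.

```python
from dataclasses import dataclass, field


@dataclass
class Epoch:
    """One speculative epoch: buffers its stores and records its loads."""
    order: int                                  # position in sequential order
    reads: set = field(default_factory=set)
    writes: dict = field(default_factory=dict)

    def load(self, memory, addr):
        # A load sees this epoch's own buffered store first, else memory.
        self.reads.add(addr)
        return self.writes.get(addr, memory.get(addr, 0))

    def store(self, addr, value):
        # Stores stay buffered until the epoch commits.
        self.writes[addr] = value


def run_speculatively(memory, bodies):
    """Run every body as an epoch, commit in order, restart on violation.

    Returns the number of restarts. `memory` is updated in place.
    """
    snapshot = dict(memory)
    epochs = []
    for order, body in enumerate(bodies):
        epoch = Epoch(order)
        body(epoch, snapshot)                   # mimics parallel execution
        epochs.append(epoch)

    written_earlier = set()
    restarts = 0
    for epoch, body in zip(epochs, bodies):
        if epoch.reads & written_earlier:
            # Violation: this epoch read a location that a logically
            # earlier epoch wrote. Discard its buffer and re-run it; it is
            # now the oldest epoch, so it cannot be violated again.
            restarts += 1
            epoch.reads.clear()
            epoch.writes.clear()
            body(epoch, memory)
        memory.update(epoch.writes)             # commit buffered stores
        written_earlier.update(epoch.writes)
    return restarts


def decrement(item):
    """Loop body in the style of the stock update: quantity--."""
    def body(epoch, memory):
        epoch.store(item, epoch.load(memory, item) - 1)
    return body
```

With a stock of `{"a": 10, "b": 10}` and bodies `decrement("a")`, `decrement("b")`, `decrement("a")`, the third epoch conflicts with the first, is restarted once, and the final state matches sequential execution.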

A Coordinated Effort
• Transaction Programmer: choose epoch boundaries
• DBMS Programmer: remove performance bottlenecks
• Hardware Developer: add TLS support to architecture

So what’s new?
Intra-transaction parallelism
• Without changing the transactions
• With minor changes to the DBMS
• Without having to worry about locking
• Without introducing concurrency bugs
• With good performance
  • Halve transaction latency on four cores

Related Work
• Optimistic Concurrency Control (Kung82)
• Sagas (Molina&Salem87)
• Transaction chopping (Shasha95)

Outline
• Introduction
• Related work
• Dividing transactions into epochs
• Removing bottlenecks in the DBMS
• Results
• Conclusions

Case Study: New Order (TPC-C)
    GET cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    foreach(item) {
        GET quantity FROM stock WHERE i_id=item;
        UPDATE stock WITH quantity-1 WHERE i_id=item;
        INSERT item INTO order_line;
    }
[Annotation on the code: 78% of transaction execution time]
• Only dependence is the quantity field
• Very unlikely to occur (1/100,000)
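To get a feel for how rare the quantity dependence is across a whole order, here is a back-of-the-envelope calculation. It is a sketch under stated assumptions: it reads the slide's 1/100,000 as the chance that two given loop iterations touch the same stock item, assumes items are picked uniformly and independently (real TPC-C item selection is skewed), and uses 10 items per order as a hypothetical figure. The function name is made up for this example.

```python
def collision_probability(items_per_order, distinct_items=100_000):
    """Chance that at least two iterations of one order hit the same item.

    Assumes uniform, independent item choice (birthday-problem model).
    """
    p_all_distinct = 1.0
    for k in range(items_per_order):
        # The (k+1)-th item must avoid the k items already chosen.
        p_all_distinct *= 1 - k / distinct_items
    return 1 - p_all_distinct


if __name__ == "__main__":
    print(f"{collision_probability(10):.6f}")
```

Under these assumptions a 10-item order sees a conflicting pair of iterations in fewer than one in two thousand transactions, so restarts caused by this dependence should be rare.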

Case Study: New Order (TPC-C)
Original transaction:
    GET cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    foreach(item) {
        GET quantity FROM stock WHERE i_id=item;
        UPDATE stock WITH quantity-1 WHERE i_id=item;
        INSERT item INTO order_line;
    }
With the loop marked for TLS:
    GET cust_info FROM customer;
    UPDATE district WITH order_id;
    INSERT order_id INTO new_order;
    TLS_foreach(item) {
        GET quantity FROM stock WHERE i_id=item;
        UPDATE stock WITH quantity-1 WHERE i_id=item;
        INSERT item INTO order_line;
    }



Dependences in DBMS
[Figure: epochs along a Time axis]
Dependences serialize execution!
Example: statistics gathering
• pages_pinned++
• TLS maintains serial ordering of increments
• To remove, use per-CPU counters
Performance tuning:
• Profile execution
• Remove bottleneck dependence
• Repeat
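The per-CPU counter fix can be sketched as follows. This is an illustration, not BerkeleyDB code: `PerCpuCounter` and `run_workers` are hypothetical names, and Python threads stand in for CPUs. Each worker increments only its own slot, so no increment depends on another worker's value; the slots are summed only when the statistic is read.

```python
import threading


class PerCpuCounter:
    """A statistics counter split into one private slot per CPU."""

    def __init__(self, n_cpus):
        self._counts = [0] * n_cpus

    def increment(self, cpu):
        # Touches only this CPU's slot, so there is no shared
        # read-modify-write for speculation to serialize.
        self._counts[cpu] += 1

    def total(self):
        # The cross-CPU sum is computed only when someone reads the stat.
        return sum(self._counts)


def run_workers(n_cpus, increments_each):
    """Run one worker per slot and return the combined count."""
    pages_pinned = PerCpuCounter(n_cpus)

    def worker(cpu):
        for _ in range(increments_each):
            pages_pinned.increment(cpu)

    threads = [threading.Thread(target=worker, args=(cpu,))
               for cpu in range(n_cpus)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return pages_pinned.total()
```

The trade-off is that reading the statistic becomes slightly more expensive, which is usually acceptable for counters that are updated far more often than they are read.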

Buffer Pool Management
[Figure: the CPU calls get_page(5) and then put_page(5) on the Buffer Pool; the page's reference count is shown as ref: 1 and ref: 0]

Buffer Pool Management
• TLS maintains original load/store order
• Sometimes this is not needed
[Figure: several epochs over Time, each calling get_page(5) and put_page(5) on the Buffer Pool (ref: 0)]
TLS ensures first epoch gets page first. Who cares?

Buffer Pool Management
• Escape speculation
  • Invoke operation
  • Store undo function
  • Resume speculation
[Figure: epochs over Time calling get_page(5) and put_page(5) on the Buffer Pool (ref: 0); get_page calls are marked as escaping speculation]
Isolated: undoing get_page will not affect other transactions
Undoable: have an operation (put_page) which returns the system to its initial state
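The escape-speculation steps (invoke the operation, store an undo function, resume) resemble a compensation log. The sketch below is a software analogy under that assumption; `BufferPool` and `SpeculativeEpoch` are hypothetical classes, not the DBMS or hardware interfaces from the talk.

```python
class BufferPool:
    """Minimal stand-in for a buffer pool that tracks reference counts."""

    def __init__(self):
        self.ref = {}

    def get_page(self, page):
        self.ref[page] = self.ref.get(page, 0) + 1

    def put_page(self, page):
        self.ref[page] -= 1


class SpeculativeEpoch:
    """An epoch that performs get_page for real and remembers how to undo it."""

    def __init__(self, pool):
        self.pool = pool
        self.undo_log = []

    def get_page(self, page):
        # Escape speculation: the pin happens non-speculatively, then the
        # compensating put_page is recorded before speculation resumes.
        self.pool.get_page(page)
        self.undo_log.append(lambda: self.pool.put_page(page))

    def restart(self):
        # On a violation, run the undo functions in reverse order.
        while self.undo_log:
            self.undo_log.pop()()

    def commit(self):
        # The epoch succeeded, so the pins stand and no undo is needed.
        self.undo_log.clear()
```

This only works because get_page meets both conditions on the slide: undoing it does not disturb other transactions, and put_page restores the reference count to its earlier value.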

Buffer Pool Management
[Figure: epochs over Time calling get_page(5) and put_page(5) on the Buffer Pool (ref: 0), with calls marked as escaping speculation. Annotation: Not undoable!]

Buffer Pool Management
• Delay put_page until end of epoch
• Avoid dependence
[Figure: epochs over Time; get_page(5) calls escape speculation, while put_page(5) calls are moved to the end of each epoch. Buffer Pool ref: 0]
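Delaying put_page can be pictured as a per-epoch queue of deferred operations. The sketch below is an analogy only, with hypothetical names (`BufferPool`, `DeferringEpoch`, `end_of_epoch`); it shows that a restart before the end of the epoch leaves the shared reference count untouched.

```python
class BufferPool:
    """Minimal stand-in for a buffer pool that tracks reference counts."""

    def __init__(self, ref=None):
        self.ref = dict(ref or {})

    def put_page(self, page):
        self.ref[page] -= 1


class DeferringEpoch:
    """An epoch that queues put_page calls instead of issuing them."""

    def __init__(self, pool):
        self.pool = pool
        self.deferred = []

    def put_page(self, page):
        # Do not touch the shared reference count while speculative.
        self.deferred.append(page)

    def restart(self):
        # Nothing reached the pool, so there is nothing to undo.
        self.deferred.clear()

    def end_of_epoch(self):
        # Once the epoch can no longer be violated, release for real.
        for page in self.deferred:
            self.pool.put_page(page)
        self.deferred.clear()
```

Because the release is never visible to other epochs until it is final, no undo function is needed, which sidesteps the "Not undoable!" problem.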

Removing Bottleneck Dependences
We introduce three techniques:
• Delay operations until non-speculative
  • Mutex and lock acquire and release
  • Buffer pool, memory, and cursor release
  • Log sequence number assignment
• Escape speculation
  • Buffer pool, memory, and cursor allocation
• Traditional parallelization
  • Memory allocation, cursor pool, error checks, false sharing


Experimental Setup
[Diagram: four CPUs, each with a 32KB 4-way L1 $, sharing a 2MB 4-way L2 $ in front of the rest of the memory system]
• Detailed simulation
• Superscalar, out-of-order, 128 entry reorder buffer
• Memory hierarchy modeled in detail
• TPC-C transactions on BerkeleyDB
• In-core database
• Single user
• Single warehouse
• Measure interval of 100 transactions
• Measuring latency not throughput

Optimizing the DBMS: New Order
[Chart: stacked bars of Time (normalized, 0 to 1.25), broken down into Idle CPU, Violated, Cache Miss, and Busy. Bars: Sequential, No Optimizations, Locks, B-Tree, Logging, Latches, Buffer Pool, Malloc/Free, Error Checks, False Sharing, Cursor Queue. Annotations: "26% improvement", "Other CPUs not helping", "Cache misses increase", "Can’t optimize much more".]

Optimizing the DBMS: New Order
[Chart: same bars and breakdown as the previous slide]
This process took me 30 days and <1200 lines of code.

Other TPC-C Transactions
[Chart: stacked bars of Time (normalized, 0 to 1), broken down into Idle CPU, Failed, Cache Miss, and Busy. Bars: New Order, Delivery, Stock Level, Payment, Order Status.]
3/5 transactions speed up by 46-66%

Conclusions
• TLS makes intra-transaction parallelism practical
• Reasonable changes to transaction, DBMS, and hardware
• Halve transaction latency

Needed backup slides (not done yet)
• 2 proc. results
• Shared caches may change how you want to extract parallelism!
  • Just have lots of transactions: no sharing
  • TLS may have more sharing

Any questions? For more information, see: www.colohan.com

Latches
• Mutual exclusion between transactions
• Cause violations between epochs
  • Read-test-write cycle (RAW)
• Not needed between epochs
  • TLS already provides mutual exclusion!

Latches: Aggressive Acquire
[Figure: three epochs over Time. Labels in the first epoch: Acquire, latch_cnt++, …work…, latch_cnt--. Labels in each later epoch: latch_cnt++, …work…, (enqueue release), then after "Homefree": Commit work, latch_cnt--. Final label: Release. Annotation: Large critical section.]

Latches: Lazy Acquire
[Figure: three epochs over Time. Labels in the first epoch: Acquire, …work…, Release. Labels in each later epoch: (enqueue acquire), …work…, (enqueue release), then after "Homefree": Acquire, Commit work, Release. Annotation: Small critical sections.]

TLS in Database Systems
• Large epochs:
  • More dependences
    • Must tolerate
  • More state
    • Bigger buffers
[Figure: epochs over Time for Non-Database TLS compared with TLS in Database Systems, alongside concurrent transactions]

Feedback Loop
[Figure: a programmer thinks "I know this is parallel!" and turns for() { do_work(); } into par_for() { do_work(); }. A loop of "feed back" arrows is labeled "Must…Make…Faster".]

Violations == Feedback
[Figure: Sequential vs. Parallel execution over Time; a conflicting load of *p is marked "Violation!". Addresses shown: 0x0FD8, 0xFD20, 0x0FC0, 0xFC18. Caption: Must…Make…Faster]

Eliminating Violations
[Figure: Parallel execution over Time, before and after "Eliminate *p Dep.". Violations are marked on *p and on *q. Addresses shown: 0x0FD8, 0xFD20, 0x0FC0, 0xFC18.]
Optimization may make slower?

Tolerating Violations: Sub-epochs
[Figure: execution over Time after "Eliminate *p Dep.", shown without and with Sub-epochs; a store to *q triggers "Violation!" in both cases]

Sub-epochs
• Started periodically by hardware
  • How many?
  • When to start?
• Hardware implementation
  • Just like epochs
  • Use more epoch contexts
  • No need to check violations between sub-epochs within an epoch
[Figure: a store to *q triggers "Violation!" in an epoch divided into Sub-epochs]
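A toy model of why sub-epochs help: on a violation, the epoch resumes from the start of the sub-epoch containing the offending load rather than from the start of the whole epoch. Everything here is an assumption made for illustration: work is measured in abstract "steps", sub-epochs are evenly spaced, and the function names are hypothetical.

```python
import bisect


def even_starts(epoch_length, n_sub_epochs):
    """Starting step of each sub-epoch when they are spaced evenly."""
    return [k * epoch_length // n_sub_epochs for k in range(n_sub_epochs)]


def redone_work(starts, load_step, detect_step):
    """Steps re-executed when the load at load_step is violated.

    The violation is noticed at detect_step; execution rewinds to the
    latest sub-epoch start at or before the offending load.
    """
    resume = starts[bisect.bisect_right(starts, load_step) - 1]
    return detect_step - resume


if __name__ == "__main__":
    for n in (1, 4, 8):
        print(n, redone_work(even_starts(1000, n), 700, 900))
```

In this model a 1000-step epoch with a violated load at step 700, detected at step 900, re-executes 900 steps with no sub-epochs, 400 with four, and 275 with eight.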

Old TLS Design
[Diagram: four CPUs, each with an L1 $, above a shared L2 $ and the rest of the memory system]
• Buffer speculative state in write-back L1 cache
• Restart by invalidating speculative lines
• Detect violations through invalidations
• Rest of system only sees committed data
• Problems:
  • L1 cache not large enough
  • Later epochs only get values on commit

New Cache Design
[Diagram: four CPUs, each with an L1 $, above L2 $ caches and the rest of the memory system]
• Speculative writes immediately visible to L2 (and later epochs)
• Restart by invalidating speculative lines
• Buffer speculative and non-speculative state for all epochs in L2
• Detect violations at lookup time
• Invalidation coherence between L2 caches

New Features
[Diagram: four CPUs, each with an L1 $, above L2 $ caches and the rest of the memory system; new features marked "New!"]
• Speculative state in L1 and L2 cache
• Cache line replication (versions)
• Data dependence tracking within cache
• Speculative victim cache

Scaling
[Charts: two panels of Time (normalized, 0 to 1) for Sequential, 2 CPUs, 4 CPUs, and 8 CPUs, broken down into Idle CPU, Failed Speculation, IUO Mutex Stall, Cache Miss, and Instruction Execution. One panel is labeled "Modified with 50-150 items/transaction".]

Evaluating a 4-CPU system
[Chart: stacked bars of Time (normalized, 0 to 1), broken down into Idle CPU, Failed Speculation, IUO Mutex Stall, Cache Miss, and Instruction Execution.]
• Sequential: original benchmark run on 1 CPU
• TLS Seq: parallelized benchmark run on 1 CPU
• No Sub-epoch: without sub-epoch support
• Baseline: parallel execution
• No Speculation: ignore violations (Amdahl’s Law limit)
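For a sense of scale, Amdahl's Law gives a simple upper bound on speedup. The sketch below is an estimate under assumptions, not a result from the talk: it takes the "78% of transaction execution time" annotation from the New Order case study to be the parallelized fraction, treats the rest as strictly sequential, and ignores all speculation overheads. The function name is hypothetical.

```python
def amdahl_speedup(parallel_fraction, n_cpus):
    """Upper bound on speedup when only part of the work is parallel."""
    sequential_fraction = 1.0 - parallel_fraction
    return 1.0 / (sequential_fraction + parallel_fraction / n_cpus)


if __name__ == "__main__":
    bound = amdahl_speedup(0.78, 4)
    print(f"speedup bound: {bound:.2f}, normalized time: {1 / bound:.3f}")
```

Under these assumptions the bound on four CPUs is a speedup of about 2.4, or a normalized time of about 0.415, which is in line with the stated goal of halving transaction latency on four cores.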

Sub-epochs: How many/How big?
• Supporting more sub-epochs is better
• Spacing depends on location of violations
• Even spacing is good enough