Parallel Inversion of Polynomial Matrices

Parallel Inversion of Polynomial Matrices • Alina Solovyova-Vincent • Frederick C. Harris, Jr. • M. Sami Fadali

Overview • Introduction • Existing algorithms • Busłowicz’s algorithm • Parallel algorithm • Results • Conclusions and future work

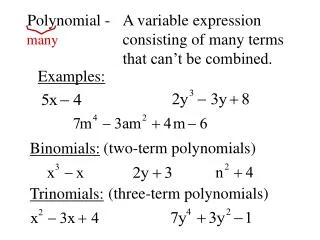

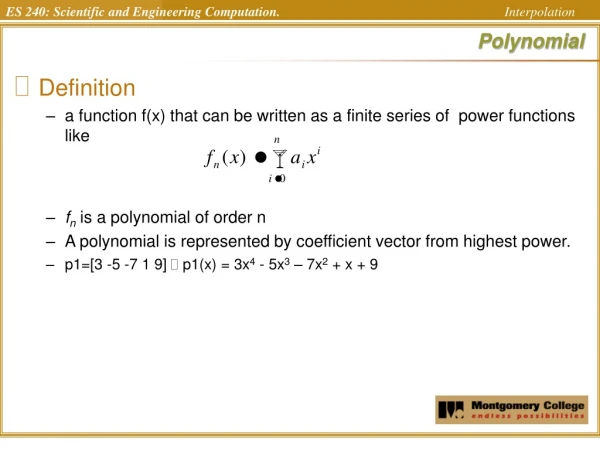

Definitions • A polynomial matrix is a matrix whose entries are all polynomials: $H(s) = H_n s^n + H_{n-1} s^{n-1} + H_{n-2} s^{n-2} + \dots + H_0$, where the $H_i$ are constant $r \times r$ matrices, $i = 0, \dots, n$.

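Definitions • Example: $H(s) = \begin{bmatrix} s+2 & s^3+3s^2+s \\ s^3 & s^2+1 \end{bmatrix}$ • $n = 3$ – degree of the polynomial matrix • $r = 2$ – the size of the matrix $H$ • $H_0 = \begin{bmatrix} 2 & 0 \\ 0 & 1 \end{bmatrix}$, $H_1 = \begin{bmatrix} 1 & 1 \\ 0 & 0 \end{bmatrix}$, …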
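The coefficient form above maps directly onto an array of constant matrices. The following is a minimal sketch, not the authors' code: the names `RSIZE`, `DEG`, `H_EXAMPLE`, and `polymat_eval` are hypothetical, and the entries of `H2` and `H3` are read off the example matrix rather than taken from the slide. It stores the example as $H_0, \dots, H_3$ and evaluates $H(s)$ at a numeric point with Horner's rule.

```c
#include <assert.h>

#define RSIZE 2   /* r: size of the example matrix (hypothetical macro name) */
#define DEG   3   /* n: degree of the example matrix (hypothetical macro name) */

/* H_EXAMPLE[i] is the constant matrix multiplying s^i in
 * H(s) = [ s+2, s^3+3s^2+s ; s^3, s^2+1 ]. */
static const double H_EXAMPLE[DEG + 1][RSIZE][RSIZE] = {
    {{2, 0}, {0, 1}},   /* H0: constant terms   */
    {{1, 1}, {0, 0}},   /* H1: coefficients of s   */
    {{0, 3}, {0, 1}},   /* H2: coefficients of s^2 */
    {{0, 1}, {1, 0}},   /* H3: coefficients of s^3 */
};

/* Evaluate H(s) entry by entry with Horner's rule:
 * (((H_n) s + H_{n-1}) s + ...) s + H_0. */
void polymat_eval(const double coeff[][RSIZE][RSIZE], int n, double s,
                  double out[RSIZE][RSIZE])
{
    assert(n >= 0);
    for (int a = 0; a < RSIZE; a++) {
        for (int b = 0; b < RSIZE; b++) {
            double acc = coeff[n][a][b];
            for (int i = n - 1; i >= 0; i--)
                acc = acc * s + coeff[i][a][b];
            out[a][b] = acc;
        }
    }
}
```

Calling `polymat_eval(H_EXAMPLE, DEG, 2.0, out)` fills `out` with the four entries of $H(2)$. Storing one constant matrix per power of $s$ is the layout that lets an algorithm work on constant matrices only.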

Definitions • $H^{-1}(s)$ – inverse of the matrix $H(s)$ • One of the ways to calculate it: $H^{-1}(s) = \operatorname{adj} H(s) / \det H(s)$
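As a worked illustration of the adjugate formula, take the $2 \times 2$ example $H(s)$ with rows $(s+2,\ s^3+3s^2+s)$ and $(s^3,\ s^2+1)$. This is a hand calculation added for clarity, not a result from the slides:

$$
\operatorname{adj} H(s) = \begin{bmatrix} s^2+1 & -(s^3+3s^2+s) \\ -s^3 & s+2 \end{bmatrix}
$$

$$
\begin{aligned}
\det H(s) &= (s+2)(s^2+1) - s^3\,(s^3+3s^2+s) \\
          &= (s^3 + 2s^2 + s + 2) - (s^6 + 3s^5 + s^4) \\
          &= -s^6 - 3s^5 - s^4 + s^3 + 2s^2 + s + 2
\end{aligned}
$$

So $H^{-1}(s)$ is a polynomial matrix (the adjugate) divided by a scalar polynomial (the determinant). Note that the determinant has degree 6, twice the degree of $H(s)$, which hints at how quickly the polynomial degrees grow with $r$.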

Definitions • A rational matrix can be expressed as a ratio of a numerator polynomial matrix and a denominator scalar polynomial.

Who Needs It??? • Multivariable control systems • Analysis of power systems • Robust stability analysis • Design of linear decoupling controllers • … and many more areas.

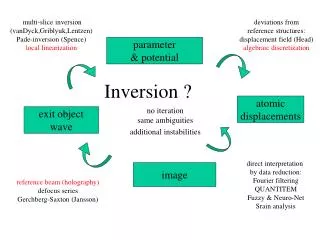

Existing Algorithms • Leverrier’s algorithm (1840) • $[sI - H]$ – resolvent matrix • Exact algorithms • Approximation methods
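For readers unfamiliar with the resolvent case, the sketch below shows the classical Leverrier recursion in its Faddeev-LeVerrier form for a constant matrix $H$. It is not Busłowicz's algorithm and not the authors' implementation; the function name `leverrier`, the bound `MAXR`, and the storage layout are assumptions made for illustration. It computes matrices $M_1, \dots, M_r$ and coefficients $c_0, \dots, c_r$ such that $\operatorname{adj}(sI - H) = \sum_{k=1}^{r} M_k s^{r-k}$ and $\det(sI - H) = s^r + c_{r-1} s^{r-1} + \dots + c_0$.

```c
#include <assert.h>
#include <string.h>

#define MAXR 8  /* assumed upper bound on the matrix size r */

/* Faddeev-LeVerrier recursion for a constant r x r matrix H.
 * On return, M[k-1] holds M_k (the coefficient of s^(r-k) in adj(sI - H))
 * and c[j] holds the coefficient of s^j in det(sI - H). */
void leverrier(int r, double H[MAXR][MAXR],
               double M[MAXR][MAXR][MAXR], double c[MAXR + 1])
{
    assert(r >= 1 && r <= MAXR);
    memset(M, 0, (size_t)r * sizeof M[0]);
    for (int i = 0; i < r; i++)
        M[0][i][i] = 1.0;                 /* M_1 = I */
    c[r] = 1.0;                           /* det(sI - H) is monic */

    for (int k = 1; k <= r; k++) {
        double HM[MAXR][MAXR];            /* HM = H * M_k */
        double trace = 0.0;
        for (int i = 0; i < r; i++)
            for (int j = 0; j < r; j++) {
                double sum = 0.0;
                for (int l = 0; l < r; l++)
                    sum += H[i][l] * M[k - 1][l][j];
                HM[i][j] = sum;
            }
        for (int i = 0; i < r; i++)
            trace += HM[i][i];
        c[r - k] = -trace / k;            /* c_{r-k} = -tr(H M_k) / k */

        if (k < r)                        /* M_{k+1} = H M_k + c_{r-k} I */
            for (int i = 0; i < r; i++)
                for (int j = 0; j < r; j++)
                    M[k][i][j] = HM[i][j] + (i == j ? c[r - k] : 0.0);
    }
}
```

For $H$ with rows $(1, 2)$ and $(3, 4)$ this yields $\det(sI - H) = s^2 - 5s - 2$ and $\operatorname{adj}(sI - H) = sI + M_2$, where $M_2$ has rows $(-4, 2)$ and $(3, -1)$. The recursion only handles the first-degree case $sI - H$, which is why more general methods are needed for arbitrary polynomial matrices.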

The Selection of the Algorithm • Before Busłowicz’s algorithm (1980): • Large degree of polynomial operations • Lengthy calculations • Not very general • After: • Some improvements at the cost of increased computational complexity

Busłowicz’s Algorithm • Benefits: • More general than methods proposed earlier • Only requires operations on constant matrices • Suitable for computer programming • Drawback: • The irreducible form cannot be ensured in general

Details of the Algorithm • Available upon request

Challenges Encountered (sequential) • Several inconsistencies in the original paper:

Challenges Encountered (parallel) • Dependent loops: for (i=2; i<r+1; i++) { for (k=0; k<n*i+1; k++) { calculations requiring R[i-1][k] } } • $O(n^2 r^4)$
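The dependency pattern on this slide can be sketched as follows. Because level `i` reads results from level `i-1`, the outer loop has to stay sequential, while the iterations over `k` within one level are independent and can be shared among processes. The loop body here is a hypothetical placeholder (a prefix sum over the previous level), not the actual calculation from Busłowicz's algorithm, and the names `fill_levels`, `MAXR`, `MAXN`, and `MAXK` are assumptions. The OpenMP pragma is guarded so the code also compiles without OpenMP.

```c
#include <assert.h>

#define MAXR 8                      /* assumed bound on r */
#define MAXN 8                      /* assumed bound on n */
#define MAXK (MAXN * MAXR + 1)      /* longest level: k = 0 .. n*r */

/* Fill R[i][k] for i = 1..r, k = 0..n*i, where level i depends on level i-1. */
void fill_levels(int n, int r, double R[MAXR + 1][MAXK])
{
    assert(n >= 1 && n <= MAXN && r >= 1 && r <= MAXR);

    for (int k = 0; k < n + 1; k++)
        R[1][k] = 1.0;              /* placeholder seed for level 1 */

    for (int i = 2; i < r + 1; i++) {       /* sequential: needs level i-1 */
        int prev_len = n * (i - 1) + 1;     /* number of entries in level i-1 */
#ifdef _OPENMP
#pragma omp parallel for
#endif
        for (int k = 0; k < n * i + 1; k++) {   /* independent across k */
            double sum = 0.0;
            /* Placeholder for "calculations requiring R[i-1][k]". */
            for (int j = 0; j <= k && j < prev_len; j++)
                sum += R[i - 1][j];
            R[i][k] = sum;
        }
        /* The parallel loop ends with an implicit barrier, so level i is
         * complete before level i+1 starts reading it. */
    }
}
```

In a shared memory setting the barrier at the end of each level is the only synchronization needed; a distributed memory version would instead have to exchange the freshly computed level between processes before the next outer iteration.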

Challenges Encountered (parallel) • Loops of variable length: for (k=0; k<n*i+1; k++) { for (ll=0; ll<min+1; ll++) { main calculations } } • The inner bound min varies with k
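When the inner loop length changes with `k`, giving every process the same number of `k` iterations does not give every process the same amount of work. The sketch below illustrates this under a purely hypothetical cost model in which iteration `k` costs `k + 1` inner steps (the slides do not state how `min` depends on `k`). It compares the heaviest per-process load for a contiguous block split against a cyclic (round-robin) split; the function names are assumptions.

```c
#include <assert.h>

/* Hypothetical cost model: iteration k performs k + 1 inner steps. */
static long work(int k) { return (long)k + 1; }

/* Heaviest load when iterations 0..count-1 are split into contiguous blocks. */
long max_load_block(int count, int p)
{
    assert(count > 0 && p > 0);
    int chunk = (count + p - 1) / p;        /* block size, rounded up */
    long worst = 0;
    for (int proc = 0; proc < p; proc++) {
        long load = 0;
        for (int k = proc * chunk; k < (proc + 1) * chunk && k < count; k++)
            load += work(k);
        if (load > worst)
            worst = load;
    }
    return worst;
}

/* Heaviest load when process proc takes iterations proc, proc+p, proc+2p, ... */
long max_load_cyclic(int count, int p)
{
    assert(count > 0 && p > 0);
    long worst = 0;
    for (int proc = 0; proc < p; proc++) {
        long load = 0;
        for (int k = proc; k < count; k += p)
            load += work(k);
        if (load > worst)
            worst = load;
    }
    return worst;
}
```

With 8 iterations on 4 processes, the block split leaves the last process with 15 of the 36 steps, while the cyclic split lowers the maximum to 12 (the ideal would be 9). Which distribution works best for the real algorithm depends on the actual profile of `min`, so this should be read as an illustration of the problem rather than a recommendation.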

Shared and Distributed Memory • Main differences • Synchronization of the processes • Shared Memory (barrier) • Distributed memory (data exchange)

Platforms • Distributed memory platforms: • SGI O2 NOW, MIPS R5000, 180 MHz • P IV NOW, 1.8 GHz • P III Cluster, 1 GHz • P IV Cluster, Xeon, 2.2 GHz

Platforms • Shared memory platforms: • SGI Power Challenge 10000, 8 MIPS R10000 • SGI Origin 2000, 16 MIPS R12000, 300 MHz

Understanding the Results • $n$ – degree of polynomial ($\le 25$) • $r$ – size of a matrix ($\le 25$) • Sequential algorithm – $O(n^2 r^5)$ • Average of multiple runs • Unloaded platforms

Results – Distributed Memory • Speedup • SGI O2 NOW - slowdown • P IV NOW - minimal speedup

Results – Shared Memory • Excellent results!!!

Speedup (SGI Origin 2000) Superlinear speedup!
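For reference, the terms used in these results can be written out as follows, assuming the standard definitions (the slides do not spell them out). With $T_1$ the run time of the sequential program and $T_p$ the run time on $p$ processors:

$$
S_p = \frac{T_1}{T_p}, \qquad E_p = \frac{S_p}{p}
$$

Linear speedup means $S_p = p$ (efficiency $E_p = 1$), a slowdown means $S_p < 1$, and superlinear speedup means $S_p > p$. Superlinear speedup is commonly attributed to memory effects, for example each processor's share of the data fitting into its cache, though the slides do not state the cause here.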

Run times (SGI Power Challenge) 8 processors

Conclusions • We have performed an exhaustive search of all available algorithms; • We have implemented the sequential version of Busłowicz’s algorithm; • We have implemented two versions of the parallel algorithm; • We have tested the parallel algorithm on 6 different platforms; • We have obtained excellent speedup and efficiency in a shared memory environment.

Future Work • Study the behavior of the algorithm for larger problem sizes (distributed memory). • Re-evaluate message passing in the distributed memory implementation. • Extend Busłowicz’s algorithm to inverting multivariable polynomial matrices $H(s_1, s_2, \dots, s_k)$.