Maximizing Classifier Utility when Training Data is Costly

Maximizing Classifier Utility when Training Data is Costly
Gary M. Weiss, Ye Tian
Fordham University

Outline
• Introduction
  • Motivation, cost model
• Experimental Methodology
• Results
  • Adult data set
  • Progressive Sampling
• Related Work
• Future Work/Conclusion
UBDM 2006 Workshop

Motivation
• Utility-Based Data Mining
  • Concerned with the utility of the overall data mining process
  • A key cost is the cost of training data
  • These costs are often ignored (except in active learning)
  • We are the first to analyze the impact of a very simple cost model
  • In doing so we fill a hole in existing research
• Our cost model
  • A fixed cost for acquiring labeled training examples
  • No separate cost for class labels, missing features, etc.
  • Turney [1] called this the “cost of cases”
  • No control over which training examples are chosen
  • No active learning

Motivation (cont.)
• Efficient progressive sampling [2]
  • Determines the “optimal” training set size
  • Optimal is where the learning curve reaches a plateau
  • Assumes data acquisition costs are essentially zero
• What if the acquisition costs are significant?

Motivating Examples
• Predicting customer behavior/buying potential
  • Training data from D&B and Ziff-Davis
  • These and other “information vendors” make money by selling information
• Poker playing
  • Learn about an opponent by playing him

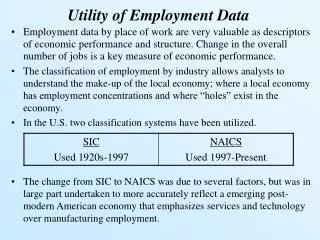

Experiments
• Use C4.5 to determine the relationship between accuracy and training set size
  • 20 runs used to increase the reliability of results
  • Random sampling to reduce training set size
• For this talk we focus on the adult data set
  • ~21,000 examples
• We utilize a predetermined sampling schedule
• CPU times recorded, mainly for future work
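The methodology above (repeated random sampling at each training set size, averaged over 20 runs) can be sketched roughly as follows. This is a minimal illustration, not the authors' setup: `train_and_score` is a hypothetical callable standing in for "train C4.5 and measure the error rate", the majority-class learner is a toy substitute, and the data is synthetic.

```python
import random
from statistics import mean


def learning_curve(pool, sizes, train_and_score, runs=20, seed=0):
    """Average error rate at each training set size over repeated random samples.

    train_and_score is a hypothetical callable: it takes a list of training
    examples and returns an error rate measured on held-out data.
    """
    rng = random.Random(seed)
    curve = []
    for n in sizes:
        errors = [train_and_score(rng.sample(pool, n)) for _ in range(runs)]
        curve.append((n, mean(errors)))
    return curve


def majority_class_error(train, test):
    """Toy stand-in learner (not C4.5): always predict the most common training label."""
    labels = [label for _, label in train]
    guess = max(set(labels), key=labels.count)
    return sum(label != guess for _, label in test) / len(test)


# Synthetic data, for illustration only: one in four examples is positive.
data = [(i, i % 4 == 0) for i in range(400)]
pool, test = data[:300], data[300:]
curve = learning_curve(pool, [10, 50, 100], lambda s: majority_class_error(s, test))
for n, err in curve:
    print(n, round(err, 3))
```

Swapping in a real learner only requires replacing the callable; the sampling and averaging loop stays the same.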

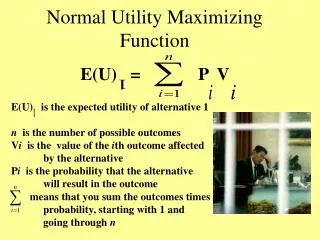

Measuring Total Utility
• Total cost = Data Cost + Error Cost = n ∙ Ctr + e ∙ |S| ∙ Cerr
    n = number of training examples
    e = error rate
    |S| = number of examples in the score set
    Ctr = cost of a training example
    Cerr = cost of an error
• We will know n and e for any experiment
• With domain knowledge one can estimate Ctr, Cerr, and |S|
  • But we don’t have this knowledge
  • Treat Ctr and Cerr as parameters and vary them
  • Assume |S| = 100 with no loss of generality
  • If |S| is 100,000 then look at results for Cerr/1,000
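As a small sketch of the cost formula on this slide, the function below computes total cost from the five quantities defined there. The function and parameter names are illustrative choices, and the numbers in the call are hypothetical, picked only to show the arithmetic.

```python
def total_cost(n, error_rate, score_set_size=100, c_tr=1.0, c_err=1.0):
    """Total cost = n * Ctr + e * |S| * Cerr, following the slide's definition."""
    data_cost = n * c_tr                                # cost of acquiring n training examples
    error_cost = error_rate * score_set_size * c_err    # expected cost of errors on the score set
    return data_cost + error_cost


# Hypothetical values: 1000 training examples, 25% error rate, cost ratio 1:1000.
print(total_cost(n=1000, error_rate=0.25, c_tr=1.0, c_err=1000.0))
```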

Measuring Total Utility (cont.)
• Now only look at the cost ratio, Ctr:Cerr
  • Typical values evaluated: 1:1, 1:1000, etc.
  • Relative cost ratio is Cerr/Ctr
• Example
  • If the cost ratio is 1:1000, then it is an even trade-off if buying 1000 training examples eliminates 1 error
  • Alternatively: buying 1000 examples is worth a 1% reduction in error rate (then can ignore |S| = 100)
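The break-even example above can be checked with a few lines of arithmetic. This sketch assumes Ctr is normalized to 1, so that Cerr equals the relative cost ratio; the function name is hypothetical.

```python
def net_benefit_of_more_data(extra_examples, error_rate_drop,
                             relative_cost_ratio, score_set_size=100):
    """Error cost saved minus data cost added, in units of Ctr (Ctr assumed to be 1)."""
    errors_avoided = error_rate_drop * score_set_size
    return errors_avoided * relative_cost_ratio - extra_examples


# Cost ratio 1:1000, |S| = 100: a 1% drop in error rate avoids 1 error,
# which is worth 1000 units and offsets the 1000 purchased examples.
print(net_benefit_of_more_data(1000, 0.01, 1000))
```

A positive result means the extra examples pay for themselves; a negative one means they cost more than the errors they remove.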

Learning Curve

Utility Curves

Utility Curves (Normalized Cost)

Optimal Training Set Size Curve

Value of Optimal Curve
• Even without specific cost information, this chart could be useful for a practitioner
  • Can put bounds on the appropriate training set size
• Analogous to Drummond and Holte’s cost curves [3]
  • They looked at the cost ratio of false positives and negatives
  • We look at the cost ratio of errors vs. cost of data
  • Both types of curves allow the practitioner to understand the impact of the various costs
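One way to picture how an optimal training set size curve could be derived: for each relative cost ratio, pick the training set size with the lowest total cost along the learning curve. The sketch below assumes Ctr = 1 and |S| = 100, and the learning-curve points are made up for illustration; they are not the measured Adult results.

```python
# Hypothetical learning-curve points (training set size, error rate).
LEARNING_CURVE = [(10, 0.30), (100, 0.22), (1000, 0.17), (5000, 0.15), (20000, 0.14)]


def optimal_size(curve, relative_cost_ratio, score_set_size=100):
    """Return the training set size on the curve that minimizes total cost (Ctr = 1)."""
    def cost(point):
        n, error_rate = point
        return n + error_rate * score_set_size * relative_cost_ratio
    return min(curve, key=cost)[0]


# As errors become more expensive relative to data, the optimal size grows.
for ratio in (1, 100, 1000, 10000, 100000):
    print(f"1:{ratio} -> n = {optimal_size(LEARNING_CURVE, ratio)}")
```

Reading such a table from both ends gives the kind of bounds the slide mentions: a practitioner who only knows the cost ratio lies in some range can still bracket the appropriate training set size.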

Idealized learning curve

Progressive Sampling
• We want to find the optimal training set size
  • Need to determine when to stop acquiring data before acquiring all of it!
• Strategy: use a progressive sampling strategy
• Key issues:
  • When do we stop?
  • What sampling schedule should we use?

Our Progressive Sampling Strategy
• We stop after the first increase in total cost
  • Results are therefore never optimal, but near-optimal if the learning curve is non-decreasing
• We evaluate 2 simple sampling schedules
  • S1: 10, 50, 100, 500, 1000, 2000, …, 9000, 10,000, 12,000, 14,000, …
  • S2: 50, 100, 200, 400, 800, 1600, …
  • S2 & S1 are similar given modest-sized data sets
• Could use an adaptive strategy
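The stopping rule on this slide can be sketched as a short loop. Several things here are assumptions rather than details from the talk: S2 is taken to keep doubling up to a hypothetical limit, Ctr is normalized to 1, `toy_error_rate` is an invented learning curve standing in for training a classifier at each size, and the function names are illustrative.

```python
def s2_schedule(start=50, limit=21000):
    """Geometric schedule in the style of S2 (50, 100, 200, ...), assumed to keep doubling."""
    n = start
    while n <= limit:
        yield n
        n *= 2


def toy_error_rate(n):
    """Hypothetical learning curve: error falls toward 0.14 as n grows."""
    return 0.14 + 0.5 / n ** 0.5


def progressive_sample(schedule, error_rate_at, relative_cost_ratio, score_set_size=100):
    """Stop after the first increase in total cost.

    Returns (best_size, best_cost, acquired), where acquired is the largest
    sample size actually tried before stopping.
    """
    best_n, best_cost, acquired = None, float("inf"), 0
    for n in schedule:
        acquired = n
        cost = n + error_rate_at(n) * score_set_size * relative_cost_ratio
        if cost > best_cost:
            break  # first increase in total cost: stop acquiring data
        best_n, best_cost = n, cost
    return best_n, best_cost, acquired


best_n, best_cost, acquired = progressive_sample(s2_schedule(), toy_error_rate, 1000)
print(best_n, round(best_cost, 1), acquired)
```

In this toy run the lowest cost is seen at one size, but the loop only notices after trying the next, larger size. One reading of "never optimal" is exactly this: the final batch has already been acquired by the time the increase is detected.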

Adult Data Set: S1 vs. Straw Man

Progressive Sampling Conclusions
• We can use progressive sampling to determine a near-optimal training set size
• Effectiveness depends mainly on how well behaved the learning curve is (i.e., non-decreasing)
• Sampling schedule/batch size is also important
  • Finer granularity requires more CPU time
  • But if data is costly, CPU time is most likely less expensive
  • In our experiments, cumulative CPU time < 1 minute

Related Work
• Efficient progressive sampling [2]
  • It tries to efficiently find the asymptote
  • That work has a data cost of ε
  • Stop only when added data has no benefit
• Active learning
  • Similar in that data cost is factored in, but the setting is different
  • User has control over which examples are selected or features measured
  • Does not address the simple “cost of cases” scenario
• Find the best class distribution when training data is costly [4]
  • Assumes training set size is limited but the size is pre-specified
  • Finds the best class distribution to maximize performance

Limitations/Future Work
• Improvements:
  • Bigger data sets where the learning curve plateaus
  • More sophisticated sampling schemes
  • Incorporate cost-sensitive learning (cost FP ≠ FN)
  • Generate better behaved learning curves
  • Include CPU time in utility metric
• Analyze other cost models
• Study the learning curves
• Real world motivating examples
  • Perhaps with cost information

Conclusion
• We analyze the impact of training data cost on the classification process
• Introduce new ways of visualizing the impact of data cost
  • Utility curves
  • Optimal training set size curves
• Show that we can use progressive sampling to help learn a near-optimal classifier

We Want Feedback
• We are continuing this work
  • Clearly many minor enhancements are possible
  • Feel free to suggest some more
  • Any major new directions/extensions?
• What, if anything, is most interesting?
• Any really good motivating examples that you are familiar with?

Questions?
• If I have run out of time, please find me during the break!!

References
[1] P. Turney (2000). Types of cost in inductive concept learning. Workshop on Cost-Sensitive Learning at the 17th International Conference on Machine Learning.
[2] F. Provost, D. Jensen & T. Oates (1999). Efficient progressive sampling. Proceedings of the 5th International Conference on Knowledge Discovery and Data Mining.
[3] C. Drummond & R. Holte (2000). Explicitly Representing Expected Cost: An Alternative to ROC Representation. Proceedings of the 6th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 198-207.
[4] G. Weiss & F. Provost (2003). Learning when Training Data are Costly: The Effect of Class Distribution on Tree Induction. Journal of Artificial Intelligence Research, 19:315-354.

Learning Curves for Large Data Sets

Optimal Curves for Large Data Sets

Learning Curves for Small Data Sets

Optimal Curves for Small Data Sets

Results for Adult Data Set

Optimal vs. S1 for Large Data Sets