Iterative Source- and Channel Decoding



Iterative Source- and Channel Decoding
Speaker: Inga Trusova
Advisor: Joachim Hagenauer

Content
1. Introduction
2. System Model
3. Joint Source-Channel Decoding (JSCD)
4. Iterative Source-Channel Decoding (ISCD)
5. Simulation Results
6. Conclusions

Introduction
PROBLEMS EXIST
• Only limited block lengths are available for source and channel coding
• Data bits issued by a source encoder contain residual redundancies
• “Perfect” channel codes would require an infinite block length
• Output bits of a practical channel decoder are not error-free
Applying the separation theorem of information theory is therefore not justified in practice!

Introduction
GOAL: To improve the performance of communication systems without sacrificing resources
SOLUTION: Joint source-channel coding & decoding (JSCCD)
• Several autocorrelated source signals are considered
• Processing at the transmitter:
  1. The source samples are quantized
  2. The quantizer indexes are appropriately mapped into bit vectors
  3. The bits are interleaved & channel-encoded

Introduction
AREA OF INTEREST: Joint source-channel decoding (JSCD)
Key idea of JSCD: To exploit the residual redundancies in the data bits in order to improve the overall quality of the transmission
The turbo principle (iterative decoding between components) is a general scheme, which we apply to JSCD

System Model
Initial data
• An AWGN channel is assumed for transmission
• A set of input source signals has to be transmitted at each time index k
• Only one of the inputs, the samples $x_k$, is considered
• The samples $x_k$ are quantized by the bit vector
  $I_k = (I_{k,1}, \dots, I_{k,N})$ with $I_{k,n} \in \{0,1\}$ and $I_k \in \mathcal{I}$,   (1)
  $\mathcal{I}$ denoting the set of all possible N-bit vectors
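As a minimal sketch of the quantization step, the following Python snippet maps a sample to a quantizer index and then to an N-bit vector. The uniform reproduction levels, the natural binary mapping, and the names `quantize` and `index_to_bits` are illustrative assumptions, not taken from the slides.

```python
from itertools import product

def quantize(x, levels):
    """Return the index of the reproduction level closest to the sample x."""
    return min(range(len(levels)), key=lambda j: (x - levels[j]) ** 2)

def index_to_bits(index, n_bits):
    """Natural binary mapping (assumed): index -> tuple of bits, MSB first."""
    return tuple((index >> (n_bits - 1 - n)) & 1 for n in range(n_bits))

N = 3                                               # bits per sample (example value)
levels = [-1.75 + 0.5 * j for j in range(2 ** N)]   # hypothetical uniform levels
all_bitvectors = list(product((0, 1), repeat=N))    # set of all possible N-bit vectors

index = quantize(0.3, levels)          # closest level is 0.25, i.e. index 4
bitvector = index_to_bits(index, N)    # (1, 0, 0)
```

Any one-to-one assignment of indexes to bit vectors could replace `index_to_bits`; the choice of mapping is revisited in the Quantizer Bit Mapping slides.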

System Model
Figure 1: System Model

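System Model
Coherently detected binary modulation (phase shift keying) is assumed.
Then the conditional pdf of the received value $\tilde v$ at the channel output, given that the code bit with transmitted amplitude $v \in \{-1,+1\}$ has been sent, is given by
  $p(\tilde v \mid v) = \frac{1}{\sqrt{2\pi}\,\sigma_n} \exp\!\left(-\frac{(\tilde v - v)^2}{2\sigma_n^2}\right)$   (3)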

System Model
where the variance is $\sigma_n^2 = \frac{N_0}{2E_s}$, with
• $E_s$: energy that is used to transmit each channel-code bit
• $N_0$: one-sided power spectral density of the channel noise
Note: The joint conditional pdf for a channel word to be received, given that a codeword is transmitted, is the product of (3) over all code bits, since the channel noise is statistically independent
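The channel model above can be sketched in a few lines of Python. The mapping of bit 0 to amplitude +1 and bit 1 to amplitude -1, the unit signal amplitude, and all function names are assumptions made for this illustration.

```python
import math

def noise_variance(es_over_n0):
    """Noise variance N0 / (2 Es) for unit-amplitude BPSK (Es/N0 as a linear ratio)."""
    return 1.0 / (2.0 * es_over_n0)

def channel_pdf(y, bit, es_over_n0):
    """Gaussian pdf of the received value y given the code bit (assumed: 0 -> +1, 1 -> -1)."""
    sigma2 = noise_variance(es_over_n0)
    amplitude = 1.0 - 2.0 * bit
    return math.exp(-(y - amplitude) ** 2 / (2.0 * sigma2)) / math.sqrt(2.0 * math.pi * sigma2)

def word_pdf(ys, bits, es_over_n0):
    """Joint pdf of a channel word: product over all code bits (independent noise)."""
    p = 1.0
    for y, b in zip(ys, bits):
        p *= channel_pdf(y, b, es_over_n0)
    return p

def channel_llr(y, es_over_n0):
    """Channel L-value ln(p(y|bit=0) / p(y|bit=1)) = 4 * Es/N0 * y."""
    return 4.0 * es_over_n0 * y
```

The closed form in `channel_llr` follows from taking the logarithm of the ratio of the two Gaussian densities; the quadratic terms cancel and only the term linear in `y` remains.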

System Model
IF the samples $x_k$ are autocorrelated
THEN the bitvectors $I_k$ show dependencies
AND are modeled by a first-order stationary Markov process, which is described by the transition probabilities $P(I_k \mid I_{k-1})$
ASSUMPTIONS
• The transition probabilities and the probability distributions of the bitvectors are known
• The bitvectors $I_k$ are independent of all other data, which is transmitted in parallel in a separate bitvector
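The slides assume that the transition probabilities are known. One plausible way to obtain them, shown here only as a sketch, is to count index transitions in a training sequence; the function name `estimate_transitions` and the tiny example sequence are hypothetical.

```python
def estimate_transitions(indices, n_states):
    """Estimate P(current index | previous index) by counting transitions.

    Returns a row-stochastic matrix trans[prev][cur]; rows that were never
    visited fall back to a uniform distribution.
    """
    counts = [[0] * n_states for _ in range(n_states)]
    for prev, cur in zip(indices, indices[1:]):
        counts[prev][cur] += 1
    trans = []
    for row in counts:
        total = sum(row)
        if total == 0:
            trans.append([1.0 / n_states] * n_states)
        else:
            trans.append([c / total for c in row])
    return trans

# Toy training sequence of quantizer indexes for a 1-bit quantizer
trans = estimate_transitions([0, 0, 1, 0, 1, 1], n_states=2)
```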

Joint Source-Channel Decoding
GOAL: Minimize the distortion of the decoder output signal
JSCD is considered for a fixed transmitter
The optimization criterion is given by the conditional expectation of the mean square error:
  $D = E\{ (x_q(I_k) - \hat x_k)^2 \mid \tilde V_0^k \}$   (4)

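Joint Source-Channel Decoding
In (4),
• $x_q(I_k)$ is the quantizer reproduction value corresponding to the bitvector $I_k$, which is used by the source encoder to quantize $x_k$
• $\hat x_k$ is the decoder output
• $\tilde V_0^k = \{\tilde V_0, \dots, \tilde V_k\}$ is the set of channel output words which were received up to the current time k
Minimizing D results in the minimum mean-square estimator
  $D = \sum_{I_k \in \mathcal{I}} (x_q(I_k) - \hat x_k)^2 \, P(I_k \mid \tilde V_0^k)$   (5)
  $\hat x_k = \sum_{I_k \in \mathcal{I}} x_q(I_k) \, P(I_k \mid \tilde V_0^k)$   (6)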
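A short numerical sketch of the minimum mean-square estimator: the output is the reproduction values weighted by their a-posteriori probabilities. The two-level quantizer and the APP values are made-up example numbers.

```python
def mmse_estimate(levels, app):
    """Weighted mean of the reproduction values under the a-posteriori probabilities."""
    return sum(x * p for x, p in zip(levels, app))

def expected_distortion(x_hat, levels, app):
    """Conditional expectation of the squared error for a given estimate x_hat."""
    return sum((x - x_hat) ** 2 * p for x, p in zip(levels, app))

levels = [-1.0, 1.0]      # hypothetical reproduction values
app = [0.25, 0.75]        # hypothetical a-posteriori probabilities

x_mmse = mmse_estimate(levels, app)        # 0.5
x_map = levels[app.index(max(app))]        # 1.0, the most probable level
d_mmse = expected_distortion(x_mmse, levels, app)   # 0.75
d_map = expected_distortion(x_map, levels, app)     # 1.0
```

In this example the weighted mean gives a lower expected squared error than simply picking the most probable reproduction value.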

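Joint Source-Channel Decoding
Using Bayes' rule, the bitvector a-posteriori probabilities (APPs) are given by
  $P(I_k \mid \tilde V_0^k) = B_k \cdot p(\tilde V_k \mid I_k, \tilde V_0^{k-1}) \cdot P(I_k \mid \tilde V_0^{k-1})$   (7)
where
• $P(I_k \mid \tilde V_0^{k-1})$ is the bitvector a-priori probability
• $B_k$ is a normalizing constant, fixed by the requirement that $\sum_{I_k \in \mathcal{I}} P(I_k \mid \tilde V_0^k) = 1$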

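Joint Source-Channel Decoding
The a-priori probabilities are given by
  $P(I_k \mid \tilde V_0^{k-1}) = \sum_{I_{k-1} \in \mathcal{I}} P(I_k \mid I_{k-1}) \, P(I_{k-1} \mid \tilde V_0^{k-1})$   (8)
At k=0 the unconditional probability distribution $P(I_0)$ is used instead of the “old” APPs
Drawback: The term $p(\tilde V_k \mid I_k, \tilde V_0^{k-1})$ in (7) is very hard to compute analytically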
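The recursion formed by (8) and (7) can be sketched as a prediction step followed by a Bayes update. The likelihood values below merely stand in for the channel term that is hard to compute; they, the transition matrix, and the function names are assumptions for illustration.

```python
def predict(trans, old_app):
    """Step (8): a-priori probabilities from the Markov model and the old APPs.

    trans[i][j] is the probability of bitvector index j at time k given index i at time k-1.
    """
    n = len(old_app)
    return [sum(trans[i][j] * old_app[i] for i in range(n)) for j in range(n)]

def update(prior, likelihood):
    """Step (7): Bayes update, normalized so that the APPs sum to one."""
    weights = [p * l for p, l in zip(prior, likelihood)]
    total = sum(weights)
    return [w / total for w in weights]

trans = [[0.9, 0.1], [0.2, 0.8]]     # hypothetical transition probabilities
old_app = [0.5, 0.5]                 # APPs from time k-1 (or the unconditional distribution at k=0)
prior = predict(trans, old_app)      # [0.55, 0.45]
app = update(prior, [0.2, 0.6])      # hypothetical channel-term values
```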

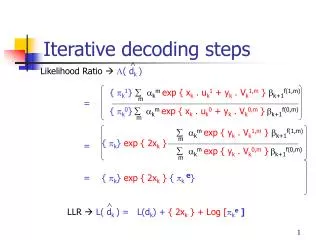

Iterative Source-Channel Decoding
Goal: To find a more feasible, less complex way to compute at least a good approximation of the term in (7) that is hard to compute
Solution: Iterative Source-Channel Decoding (ISCD)
We write: (9)

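Iterative Source-Channel Decoding
Now, the bitvector probability densities are approximated by the product over the corresponding bit probability densities
  $p(\tilde V_k \mid I_k, \tilde V_0^{k-1}) \approx \prod_{n=1}^{N} p(\tilde V_k \mid I_{k,n}, \tilde V_0^{k-1})$   (10)
with the bits $I_{k,n} \in \{0,1\}$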

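Iterative Source-Channel Decoding
If we insert (10) into formula (7), which defines the bitvector a-posteriori probabilities, and apply Bayes' rule to each bit density, we obtain:
  $P(I_k \mid \tilde V_0^k) \approx B'_k \cdot \left[ \prod_{n=1}^{N} \frac{P(I_{k,n} \mid \tilde V_0^k)}{P(I_{k,n} \mid \tilde V_0^{k-1})} \right] \cdot P(I_k \mid \tilde V_0^{k-1})$   (11)
where $B'_k$ is a normalizing constant.
The bit a-posteriori probabilities $P(I_{k,n} \mid \tilde V_0^k)$ can be efficiently computed by the symbol-by-symbol APP algorithm for a binary convolutional channel code with a small number of states.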

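Iterative Source-Channel Decoding
Note: ALL the received channel words up to the current time are used for the computation of the bit APPs, because the bit-based a-priori information
  $P(I_{k,n} = \zeta \mid \tilde V_0^{k-1}) = \sum_{I_k \in \mathcal{I}:\, I_{k,n} = \zeta} P(I_k \mid \tilde V_0^{k-1})$   (12)
for a specific bit $I_{k,n} = \zeta \in \{0,1\}$ is derived from the bitvector a-priori probabilities (8), which depend on the previously received channel words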

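Iterative Source-Channel Decoding
Let us interpret the fraction in (11) as the extrinsic information $P_e^{(C)}(I_{k,n}) = P(I_{k,n} \mid \tilde V_0^k) / P(I_{k,n} \mid \tilde V_0^{k-1})$ that we get from the channel decoder:
  $P(I_k \mid \tilde V_0^k) \approx B'_k \cdot \left[ \prod_{n=1}^{N} P_e^{(C)}(I_{k,n}) \right] \cdot P(I_k \mid \tilde V_0^{k-1})$   (13)
Note: The superscript “(C)” is used to indicate that $P_e^{(C)}(I_{k,n})$ is the extrinsic information produced by the channel decoder.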

Iterative Source-Channel Decoding
As a result we have: a modified channel term (between the brackets in (13)) that includes the reliabilities of the received bits and, additionally, the information derived by the APP algorithm from the channel code.
Drawback: The bitvector APPs are only approximations of the optimal values, since the bit a-priori information does not contain the mutual dependencies of the bits within the bitvectors
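The drawback can be made concrete with a toy example: once a bitvector distribution is reduced to per-bit probabilities, the dependencies between the bits are gone. The 2-bit distribution and the helper names below are hypothetical.

```python
from itertools import product

def bit_marginals(dist, n_bits):
    """Per-bit probabilities (P(bit=0), P(bit=1)) from a bitvector distribution."""
    return [tuple(sum(p for v, p in dist.items() if v[n] == b) for b in (0, 1))
            for n in range(n_bits)]

def product_of_marginals(marginals):
    """Bitvector distribution rebuilt as the product of the per-bit probabilities."""
    n_bits = len(marginals)
    approx = {}
    for v in product((0, 1), repeat=n_bits):
        p = 1.0
        for n, b in enumerate(v):
            p *= marginals[n][b]
        approx[v] = p
    return approx

# Strongly dependent bits: both bits tend to be equal
joint = {(0, 0): 0.45, (0, 1): 0.05, (1, 0): 0.05, (1, 1): 0.45}
marginals = bit_marginals(joint, 2)        # each bit is 0 or 1 with probability 0.5
approx = product_of_marginals(marginals)   # every bitvector gets 0.25
```

The bit-level view assigns probability 0.25 to every bitvector, although the true distribution strongly favours (0, 0) and (1, 1). This lost information is what the iterations try to recover.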

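Iterative Source-Channel Decoding
How to improve the accuracy of the bitvector APPs?
Idea: Iterative decoding, as for turbo codes. From the intermediate results for the bitvector APPs (13), new bit APPs are computed by
  $P^{(S)}(I_{k,n} = \zeta \mid \tilde V_0^k) = \sum_{I_k \in \mathcal{I}:\, I_{k,n} = \zeta} P(I_k \mid \tilde V_0^k)$   (14)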

Iterative Source-Channel Decoding
Bit extrinsic information from the source decoder:
  $P_e^{(S)}(I_{k,n}) = \frac{P^{(S)}(I_{k,n} \mid \tilde V_0^k)}{P_e^{(C)}(I_{k,n})}$   (15)
Note: The computed extrinsic information is used as the new a-priori information for the second and further runs of the channel decoder.

Iterative Source-Channel Decoding
SUMMARY OF ISCD:
Step 1: At each time k, compute the initial bitvector a-priori probabilities by (8).
Step 2: Use the results from Step 1 in (12) to compute the initial bit a-priori information for the APP channel decoder.
Step 3: Perform APP channel decoding.
Step 4: Perform source decoding by inserting the extrinsic bit information from APP channel decoding into (13) to compute new (temporary) bitvector APPs.
Step 5: If this is the last iteration, proceed with Step 8; otherwise continue with Step 6.
Step 6: Use the bitvector APPs of Step 4 in (14) and (15) to compute extrinsic bit information from the source redundancies.
Step 7: Set the extrinsic bit information from Step 6 equal to the new bit a-priori information for the APP channel decoder in the next iteration; proceed with Step 3.
Step 8: Estimate the receiver output signals by (6), using the bitvector APPs from Step 4.
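The steps above can be sketched as a loop in the probability domain. This is a structural sketch only: no APP (BCJR) channel decoder is implemented, and `channel_decode` is a hypothetical caller-supplied function that takes bit a-priori probabilities and returns extrinsic bit probabilities. The stub used in the example returns fixed values, so the iterations do not change the result there.

```python
from itertools import product

def iscd_time_step(prior, channel_decode, levels, n_bits, iterations=2):
    """Steps 2 to 8 for one time index; `prior` is the result of Step 1.

    prior, levels  : dicts keyed by bitvector tuples
    channel_decode : hypothetical callable, list of (P0, P1) -> list of (P0, P1)
    """
    vectors = list(product((0, 1), repeat=n_bits))

    def marginals(dist):
        return [tuple(sum(p for v, p in dist.items() if v[n] == b) for b in (0, 1))
                for n in range(n_bits)]

    bit_apriori = marginals(prior)                     # Step 2
    for it in range(iterations):
        ext_c = channel_decode(bit_apriori)            # Step 3
        app = {}
        for v in vectors:                              # Step 4: extrinsic product times prior
            w = prior[v]
            for n in range(n_bits):
                w *= ext_c[n][v[n]]
            app[v] = w
        total = sum(app.values())
        app = {v: w / total for v, w in app.items()}
        if it == iterations - 1:                       # Step 5
            break
        bit_app = marginals(app)                       # Step 6: marginalize, then divide
        ext_s = []
        for n in range(n_bits):
            e0 = bit_app[n][0] / ext_c[n][0]           # assumes nonzero extrinsic values
            e1 = bit_app[n][1] / ext_c[n][1]
            ext_s.append((e0 / (e0 + e1), e1 / (e0 + e1)))
        bit_apriori = ext_s                            # Step 7
    x_hat = sum(levels[v] * app[v] for v in vectors)   # Step 8
    return x_hat, app

def stub_decoder(bit_apriori):
    """Placeholder for an APP channel decoder: ignores its input, returns fixed values."""
    return [(0.9, 0.1), (0.2, 0.8)]

prior = {v: 0.25 for v in product((0, 1), repeat=2)}
levels = {(0, 0): -1.5, (0, 1): -0.5, (1, 0): 0.5, (1, 1): 1.5}   # hypothetical values
x_hat, app = iscd_time_step(prior, stub_decoder, levels, n_bits=2)
```

With a real channel decoder, the extrinsic output would change from one iteration to the next as the a-priori input is refined by the source decoder.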

Iterative Source-Channel Decoding
Figure 2: Iterative Source-Channel Decoding according to the Turbo Principle

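Iterative Source-Channel Decoding
The computation of the bitvector APPs by (13) requires the bit probabilities $P_e^{(C)}(I_{k,n})$, which can be computed from the output L-values of the APP channel decoder
  $L_e^{(C)}(I_{k,n}) = \ln \frac{P_e^{(C)}(I_{k,n} = 0)}{P_e^{(C)}(I_{k,n} = 1)}$   (16)
with the inversion
  $P_e^{(C)}(I_{k,n}) = \frac{\exp(L_e^{(C)}(I_{k,n}))}{1 + \exp(L_e^{(C)}(I_{k,n}))} \cdot \exp(-L_e^{(C)}(I_{k,n}) \cdot I_{k,n})$   (17)
where the bits $I_{k,n} \in \{0,1\}$ are treated as fixed real numbers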
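A small sketch of the conversion between bit probabilities and L-values, assuming the convention L = ln(P(bit=0) / P(bit=1)). The function names are illustrative, and the simple form shown here can overflow for L-values of very large negative magnitude.

```python
import math

def prob_to_llr(p0):
    """L-value of a bit from the probability p0 that the bit is 0 (0 < p0 < 1)."""
    return math.log(p0 / (1.0 - p0))

def llr_to_prob(llr, bit):
    """Inversion: probability that the bit takes the value `bit` (0 or 1).

    Uses exp(L) / (1 + exp(L)) * exp(-L * bit), rewritten as
    exp(-L * bit) / (1 + exp(-L)).
    """
    return math.exp(-llr * bit) / (1.0 + math.exp(-llr))

l_value = prob_to_llr(0.8)
p0 = llr_to_prob(l_value, 0)   # recovers 0.8
p1 = llr_to_prob(l_value, 1)   # recovers 0.2
```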

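Iterative Source-Channel Decoding
SIMPLIFICATION 1:
Reminder: formula (13) computes the bitvector APPs.
Let's insert (17) into (13) and turn the product over the exponential functions into a summation in the exponent:
  $P(I_k \mid \tilde V_0^k) = A_k \cdot \exp\!\left(-\sum_{n=1}^{N} L_e^{(C)}(I_{k,n}) \cdot I_{k,n}\right) \cdot P(I_k \mid \tilde V_0^{k-1})$   (18)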

Iterative Source-Channel Decoding
Benefits of using (18) instead of (13):
• The normalizing constant $A_k$ does not depend on the variable $I_{k,n}$
• The L-values from the APP channel decoder can be integrated into the optimal-estimation algorithm for APP source decoding without converting the individual L-values back to bit probabilities
• Strong numerical advantages
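The equivalence of the two forms can be checked numerically. The sketch below assumes the convention L = ln(P(bit=0) / P(bit=1)) and computes the bitvector APPs once directly from the L-values (sum in the exponent, evaluated in the log domain) and once via explicit bit probabilities. The prior, the L-values, and the function names are made-up examples.

```python
import math

def app_from_llrs(prior, llrs):
    """Bitvector APPs with weight prior * exp(-sum_n L_n * bit_n), normalized."""
    log_w = {v: math.log(p) - sum(l * b for l, b in zip(llrs, v))
             for v, p in prior.items() if p > 0.0}
    shift = max(log_w.values())                  # avoids overflow before exponentiating
    w = {v: math.exp(lw - shift) for v, lw in log_w.items()}
    total = sum(w.values())
    return {v: w.get(v, 0.0) / total for v in prior}

def app_from_probs(prior, llrs):
    """Reference: the same APPs via the product of explicit bit probabilities."""
    w = {}
    for v, p in prior.items():
        for l, b in zip(llrs, v):
            p *= math.exp(-l * b) / (1.0 + math.exp(-l))
        w[v] = p
    total = sum(w.values())
    return {v: x / total for v, x in w.items()}

prior = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}   # hypothetical a-priori values
llrs = [2.0, -1.0]                                             # hypothetical extrinsic L-values
app_a = app_from_llrs(prior, llrs)
app_b = app_from_probs(prior, llrs)
```

The factors that the product form carries for every bit are identical for all bitvectors, so they disappear in the normalization; only the sum in the exponent matters.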

Iterative Source-Channel Decoding
SIMPLIFICATION 2:
The computation of the new bit APPs within the iteration is still carried out by (14). But instead of (15), extrinsic L-values are used for the new bit extrinsic information:
  $L_e^{(S)}(I_{k,n}) = L^{(S)}(I_{k,n}) - L_e^{(C)}(I_{k,n})$   (19)
where $L^{(S)}(I_{k,n})$ is the L-value of the new bit APP from (14).
Benefits of using (19) instead of (15):
• Division is turned into a simple subtraction in the L-value domain
THUS, in ISCD the L-values from the APP channel decoder are used and the probabilities are not required
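That a division of probabilities corresponds to a subtraction of L-values can be verified with a few lines of Python. The probability values and function names are illustrative assumptions; L-values follow the convention L = ln(P(bit=0) / P(bit=1)).

```python
import math

def llr(p0):
    """L-value of a bit from the probability p0 that the bit is 0."""
    return math.log(p0 / (1.0 - p0))

def extrinsic_llr(app_llr, channel_ext_llr):
    """L-value domain: extrinsic information by subtraction."""
    return app_llr - channel_ext_llr

def extrinsic_prob(app_p0, channel_ext_p0):
    """Probability domain: extrinsic information by division, then normalization."""
    e0 = app_p0 / channel_ext_p0
    e1 = (1.0 - app_p0) / (1.0 - channel_ext_p0)
    return e0 / (e0 + e1)

l_sub = extrinsic_llr(llr(0.9), llr(0.7))   # subtraction of L-values
l_div = llr(extrinsic_prob(0.9, 0.7))       # division of probabilities, converted afterwards
```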

Quantizer Bit Mapping
Assumption: The input is a low-pass correlated signal, so the value of the sample $x_k$ will be close to $x_{k-1}$
If the channel code is strong enough:
• The L-values at the APP channel decoder output have large magnitudes
• The a-priori information for the source decoder is perfect
ISCD: The APP source decoder tries to generate extrinsic information for a particular data bit, while it exactly knows all other bits

Quantizer Bit Mapping
Figure 3: Bit Mappings for a 3-bit Quantizer to be used in ISCD (panels: natural, optimized, Gray)
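Two of the three mappings named in Figure 3 have standard definitions and can be generated programmatically, as sketched below. The optimized mapping cannot be recovered from the transcript and is therefore not reproduced; the function names are illustrative.

```python
def to_bits(value, n_bits):
    """Integer -> tuple of bits, most significant bit first."""
    return tuple((value >> (n_bits - 1 - n)) & 1 for n in range(n_bits))

def natural_mapping(n_bits):
    """Quantizer index j is mapped to the binary representation of j."""
    return [to_bits(j, n_bits) for j in range(2 ** n_bits)]

def gray_mapping(n_bits):
    """Binary-reflected Gray code: neighbouring indexes differ in exactly one bit."""
    return [to_bits(j ^ (j >> 1), n_bits) for j in range(2 ** n_bits)]

def hamming_distance(a, b):
    """Number of bit positions in which two bit vectors differ."""
    return sum(x != y for x, y in zip(a, b))

natural = natural_mapping(3)
gray = gray_mapping(3)
gray_steps = [hamming_distance(gray[j], gray[j + 1]) for j in range(len(gray) - 1)]
```

For a source whose consecutive samples are close to each other, the mapping decides how many bits change between neighbouring quantizer indexes, which is why it affects how much extrinsic information the source decoder can provide.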

Simulation Results
Simulation process:
• Independent Gaussian random samples were correlated by a first-order recursive filter (coefficient )
• Source encoders: 5-bit Lloyd-Max scalar quantizers
• 50 mutually independent bitvectors were generated, all transmitted at time index k
• The bits were scrambled by a random interleaver
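The source generation and the interleaving can be sketched as follows. The filter coefficient is not given in the transcript, so `a=0.8` is only an example value; the unit-variance noise, the seed, and the function names are assumptions as well. The block length of 250 bits corresponds to 50 bitvectors of 5 bits.

```python
import random

def ar1_source(n, a, rng):
    """First-order recursive filter: x_k = a * x_{k-1} + w_k, w_k i.i.d. Gaussian."""
    samples, prev = [], 0.0
    for _ in range(n):
        prev = a * prev + rng.gauss(0.0, 1.0)
        samples.append(prev)
    return samples

def make_interleaver(length, rng):
    """Random permutation of the positions 0 .. length-1."""
    perm = list(range(length))
    rng.shuffle(perm)
    return perm

def interleave(bits, perm):
    """Output position pos carries the input bit at position perm[pos]."""
    return [bits[p] for p in perm]

def deinterleave(bits, perm):
    """Inverse of interleave for the same permutation."""
    out = [0] * len(bits)
    for pos, p in enumerate(perm):
        out[p] = bits[pos]
    return out

rng = random.Random(1)
samples = ar1_source(20000, a=0.8, rng=rng)   # example coefficient, not from the slides
perm = make_interleaver(250, rng)
bits = [rng.randint(0, 1) for _ in range(250)]
restored = deinterleave(interleave(bits, perm), perm)
```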

Simulation Results
Simulation process (contd.):
• The bits were channel-encoded by a rate-1/2 recursive systematic convolutional code (RSC code) with memory 4, which was terminated after each block of 50 bitvectors (250 bits)
• An AWGN channel was used for transmission
• ISCD was performed at the decoder

Simulation Results
Figure 4: Performance of ISCD for various 5-Bit Mappings

Conclusions
• Strong quality gains are achievable by applying the turbo principle in joint source-channel decoding
• The bit mapping of the quantizers is important for the performance
• An optimized bit mapping of the quantizers in ISCD allows strong quality improvements