Solving Laplacian Systems: Some Contributions from Theoretical Computer Science

320 likes | 491 Views

Solving Laplacian Systems: Some Contributions from Theoretical Computer Science . Nick Harvey UBC Department of Computer Science. Today’s Problem. Solve the system of linear equations L x = b where L is the Laplacian matrix of a graph. Assumptions:

Solving Laplacian Systems: Some Contributions from Theoretical Computer Science

E N D

Presentation Transcript

Solving Laplacian Systems:Some Contributions fromTheoretical Computer Science Nick HarveyUBC Department of Computer Science

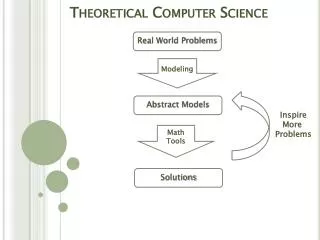

Today’s Problem • Solve the system of linear equations L x = b where L is the Laplacian matrix of a graph. • Assumptions: • Want fast algorithms for sparse matrices:running time ¼ # non-zero entries of L • Want provable,asymptotic running time bounds • Computational model: single CPU, random access memory, infinite-precision arithmetic • Ignore numerical stability, etc. Think: Symmetric, Diagonally Dominant

What is the Laplacian of a Graph? a c d -1 indicates an edgefrom a to c • L is symmetric, diagonally dominant) positive semidefinite) all eigenvalues real, non-negative • Kernel(L) = span( [1,1,…,1] ) (assuming G connected) b c d b a degree of node c = # edges that hit c a b L = c d

Why is L useful? Replace edges with 1-ohm resistors d a c • What is effective resistance between a and d? • Highschool Calculation: 1 + 1/(1+1/(1+1)) = 5/3 • Linear Algebra: xTL+ x, where x = [1, 0, 0, -1] b “Pseudoinverse” L = L+ = /48

PDEs ¡ Laplace’s Equation Given a function f : ¡!R,find a function g : !R such that Image from Wikipedia.

Discretization a b c q d e f L = g h i q2 r2g(e) ¼(g(f)-g(e))-(g(e)-g(d))+(g(b)-g(e))-(g(e)-g(h)) = g(b)+g(d)+g(f)+g(h)-4g(e) So q2r2g(v) ¼ -Lg(v) for all vertices v in grid interior Conclusion: can compute approximate solution toLaplace’s equation by solving a linear system involving L.

Aren’t existing algorithms good enough? • Preconditioned Conjugate Gradient • Exact solution in O(nm) time(L has size nxn, m non-zeros) • Caveat: this bound fails with inexact arithmetic • Better bounds with a good preconditioner? • Multigrid • Provable performance for specific types of PDEs inlow-dimensional spaces • Not intended for general “Lx = b” Laplacian systems • Algebraic Multigrid • This is close to what we want • I am unaware of an existing theorem of the form:For any matrix from class X we can efficiently compute coarsenings such that convergence rate is Y

Some Contributions fromTheoretical Computer Science , max degree ¢ • Let L be Laplacian of a graph with n vertices • Vaidya[unpublished ‘91],Bern-Gilbert-Hendrickson-Nguyen-Toledo [SIAM J. Matrix Anal. ’06]: • Key Idea: Use Graph Theory to design preconditioners for L • Can find a preconditionerP in O((¢n)1.5) time such that: • relative condition number κ(L,P) = O((¢n)1.5) • solving systems in P takes O(¢n) time • Using conjugate gradient method, get a solutionfor “Lx=b” with relative error ² in O((¢n)1.75 log(1/²)) time • i.e., letx := L+ b and let y be the algorithm’s outputThen ky-xkL·²kxkL • xTLx (y-x)TL(y-x)

Some Contributions fromTheoretical Computer Science • Let L be Laplacian of a graph with n vertices, m edges • Spielman-Teng[STOC ‘04]: • Can find a solution for “Lx = b” with relative error ²in O(m1+o(1) log(1/²)) time. • Can view as a rigorous implementation of Algebraic Multigrid • Highly technical: journal version is 116 pages • Koutis-Miller-Peng[FOCS ‘11]: • Can find a solution for “Lx = b” with relative error ²in O(m log n (log log n)2 log(1/²)) time. • Significantly simpler: only 16 pages • Ingredients:low-stretch trees, random matrix theory

Iterative methods & Preconditioning • Suppose you want to solve Lx = b (m non-zeros) • Instead choose a preconditionermatrix Pand solve P-1/2 LP-1/2y = P-1/2b • Setting x = P-1/2y gives a solution to Lx = b • The relative condition number is • Iterative methods (e.g., conjugate gradient) give • a solution with relative error ² • after iterations • each iteration takes time O(m + (time to solve a linear system in P) )

Tool #1: Low-Stretch Trees Image from D. Spielman. “Algorithms, Graph Theory, and Linear Equations in Laplacians”. Talk at ICM 2010.

Laplacians of Subgraphs • Suppose you want to solve Lx = b, whereL is the Laplacian of graph G=(V,E) • For any FµE, we can write L = LF + LE\F, where • LF is the Laplacian of (V,F) • LE\F is the Laplacian of (V,E\F) • Easy Fact: λmin(LF+ L)¸1 )κ(L,LF)·λmax(LF+ L) F c d a F b a b c d a b c d a b c d a a a b b b L= LF= LE\F= c c c d d d

SubtreePreconditioners • Let L be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • Let T=(V,F) be a subtree of G • Consider using P=LF as a preconditioner • Useful Property: Solving linear systems in P takesO(n) time, essentially by back-substitution G T

SubtreePreconditioners • Let L be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • Let T=(V,F) be a subtree of G • Consider using P=LF as a preconditioner • Useful Property: Solving linear systems in P takesO(n) time, essentially by back-substitution • Easy Fact: κ(L,P)·λmax(P+ L) • CG gives a solution with relative error ² in time

Low-Stretch Trees • Let L be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • Let T=(V,F) be a subtree of G and P=LF • Lemma[Boman-Hendrickson ‘01]: λmax(P-1 L) · stretch(G,T),where • Theorem [Alon-Karp-Peleg-West ‘91]:Every graph G has a tree T with stretch(G,T) = m1+o(1). G T u u distance fromu to v is 5 v v

Low-Stretch Trees • Let L be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • Let T=(V,F) be a subtree of G and P=LF • Lemma[Boman-Hendrickson ‘01]: λmax(P-1 L) · stretch(G,T),where • Theorem [Alon-Karp-Peleg-West ‘91]:Every graph G has a tree T with stretch(G,T) = m1+o(1). • Theorem [Abraham-Bartal-Neiman ‘08]:Every G has a tree T with stretch(G,T) · m log n (log log n)2.Moreover, T can be found in O(m log2 n) time.

Low-Stretch Trees • Let L be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • Let T=(V,F) be a subtree of G and P=LF • Lemma[Boman-Hendrickson ‘01]: λmax(P-1 L) · stretch(G,T),where • Theorem [Alon-Karp-Peleg-West ‘91]:Every graph G has a tree T with stretch(G,T) = m1+o(1)and T can be found in O(m log2 n) time. • Corollary: Every Laplacian L has a preconditionerPs.t. • κ(L,P)·λmax(P-1 L) · m1+o(1) • CG gives ²-approx solution in time • Tighter analysis gives [Spielman-Woo ‘09]

Tool #2: Random Sampling Data from L. Adamic, N. Glance. “The Political Blogosphere and the 2004 U.S. Election: Divided They Blog”, 2005.

Spectral Sparsifiers • Let LG be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • A spectral sparsifierof G is a (weighted) graph H=(V,F) s.t. • |F| is small • 1-²·λmin(LH+ L) and λmax(LH+ L)·1+² • Useful notation: (1-²) LG¹LH¹ (1+²) LG • Consider the linear system LG x = b.Actual solution is x := LG+ b.Instead, computey := LH+b. • Theny has low multiplicative error: ky-xkLG· 2²kxkLG • Computing y is fast since H is sparse:conjugate gradient method takes O(n|F|) time

Spectral Sparsifiers • Let LG be the Laplacian of G=(V,E) (n = #vertices, m = #edges) • A spectral sparsifierof G is a (weighted) graph H=(V,F) s.t. • |F| is small • (1-²) LG¹LH¹ (1+²) LG • Theorem [Spielman-Srivastava ‘08]: Every G has aspectral sparsifierH with |F| = O(n log n/²2).Moreover, H can be constructed in O(m log3 n) time. • Algorithm: Using CG, we get an ²-approx solutionto “Lx = b” in O(m log3 n + n2 log n/²2) time • Caveat: this algorithm uses circular logic. To construct the sparsifierH, we need to solve several linear systems “Lx = b”.

Decomposing Laplacian into Edges • Let LG be the Laplacian of G=(V,E) • For every e2E, let Le be the Laplacian of (V,{e}) • Then LG = e Le LG = c d a b Lab Lac Lbc Lcd

Decomposing Laplacian into Edges • Let LG be the Laplacian of G=(V,E) • For every e2E, let Le be the Laplacian of (V,{e}) • Then LG = e Le • Sparsification: • Find coefficients we for every e2E • Let H be the weighted graph where e has weight we • So LH = eweLe • Goals: • Most of the we are zero • (1-²) LG¹LH¹ (1+²) LG

The General Problem:Sparsifying Sums of PSD Matrices • General Problem: Given PSD matrices Les.t. eLe=L, find coefficients we, mostly zero, such that (1-²) L¹eweLe¹ (1+²) L • Theorem:[Ahlswede-Winter ’02]Random sampling gives w with O( n log n/²2 ) non-zeros. • Theorem:[de Carli Silva-Harvey-Sato ‘11],building on [Batson-Spielman-Srivastava ‘09]There exists w with O( n/²2 ) non-zeros.

Concentration Inequalities • Theorem: [Chernoff ‘52, Hoeffding ‘63]Let Y1,…,Yk be i.i.d. randomnon-negative real numberss.t. E[ Yi ] = Z andYi·uZ. Then • Theorem: [Ahlswede-Winter ‘02]Let Y1,…,Yk be i.i.d. randomPSD nxn matricess.t. E[ Yi ] = Z andYi¹uZ. Then The only difference

Solving the General Problem • General Problem: Given PSD matrices Les.t. eLe = L, find coefficients we, mostly zero, such that (1-²) L¹eweLe¹ (1+²) L • AW Theorem: Let Y1,…,Yk be i.i.d. random PSD matricessuch that E[ Yi ] = Z andYi¹uZ. Then • To solve General Problem with O(nlogn/²2) non-zeros • Repeat k:=£(nlogn/²2) times • Pick an edge e with probability pe := Tr(LeL+)/n • Increment we by 1/k¢pe Main Caveat: Samplingprobabilities are hard to compute.

Low-Stretch Trees + Random Sampling + Recursion = Nearly-Optimal Algorithm

Obstacles encountered so far • Low-stretch trees are easy to compute but only give preconditioners with κ ¼ m • Low-stretch tree: fast, low-quality preconditioner • Sparsifiers give preconditioners with κ ¼ 1+², but they are harder to compute • Sparsifier: slow, high-quality preconditioner • Using a sparsifier H as a preconditioner is not very efficient because solving LH+ x = b is slow • Sparsifier: slow to construct and slow to use

Bypassing the Obstacles • Idea #1: Get a “medium-quality” preconditioner by combining the low- and high-quality preconditioners. • Specifically, do random sampling according to stretch • Intuition: The path is a bad approximation to the cycle Κ(LG,LT) = n G High condition number caused by missing edge,which has high stretch. T

Bypassing the Obstacles • Idea #1: Get a “medium-quality” preconditioner by combining the low- and high-quality preconditioners. • Specifically, do random sampling according to stretch • Compute a low-stretch tree T. For every edge uv, set • Construct sparsifier H by making O(m / log2 n) samples, where e is sampled with probability pe.Then add log4 n copies of T to H. • Theorem [Koutis, Miller, Peng FOCS’10]: • H has at most O(m / log2 n) edges • LG¹LH¹ log4 n LG

Idea #2 [Joshi ‘97, Spielman-Teng ‘04]:To solve linear systems in the sparsifier “LHx = b”, use recursion. Solving LGx = b - Construct sparsifierH1 - Recursively solve LH1x = b - Use Chebyshev iterations to improve x - Output ²-approx solution to LGx = b G • LG¹ LH1¹ log4 n LG Solving LH1x = b - Construct sparsifierH2 - Recursively solve LH2x = b - Use Chebyshev iterations to improve x - Output ²-approx solution to LH1x = b H1 • LH1¹ LH2¹ log4 n LH1 Solving LH2x = b - Output ²-approx solution to LH2x = b H2 • LH2¹ LH3¹ log4 n LH2 …

Solving LGx = b - Construct sparsifierH1 - Recursively solve LH1x = b - Use Chebyshev iterations to improve x - Output ²-approx solution to LGx = b G • Idea #2 [Joshi ‘97, Spielman-Teng ‘04]:To solve linear systems in the sparsifier “LHx = b”, use recursion. • LG¹ LH1¹ log4 n LG Solving LH1x = b - Construct sparsifierH2 - Recursively solve LH2x = b - Use Chebyshev iterations to improve x - Output ²-approx solution to LH1x = b H1 • LH1¹ LH2¹ log4 n LH1 Sketch of Analysis: - Few Chebyshev iterations because Hi+1isa good approximation of Hi. - Few levels of recursion because Hi+1isa constant factor smaller than Hi. H2 • LH2¹ LH3¹ log4 n LH2 …

Conclusion • Let A be a symmetric, diagonally dominant matrixof size nxn with m non-zero entries. • There is an algorithm to solve “Ax = b” with relative error ² in O(m log n (log log n)2 log(1/²)) time. • Ingredients: Low-stretch trees, concentration of random matrices Open Questions • Parallelization? • Practical implementation? • Numerical stability? • Arbitrary PSD matrices?