Model Comparison in Linear Regression Analysis



BMS 617 Lecture 10: Comparing Models

Recap of Models • Last time we saw: • A statistical model is a mathematical function that predicts the value of a dependent variable from the values of independent variables • Depends on parameters • Unknown values that are properties of the population • “Fitting a model to data” means finding the values of the parameters which make the observed values most likely

Linear Regression • One example of a model is Simple Linear Regression • Predict a dependent variable as a linear function of an independent variable • Has parameters intercept and slope: $Y_i = \beta_0 + \beta_1 X_i + \varepsilon_i$
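As a minimal sketch of what "fitting" this model involves, the function below computes the least-squares estimates of β0 and β1 in plain Python. It assumes normally distributed errors ε, under which the least-squares estimates are also the maximum-likelihood ones. The function name `fit_line` and the data are hypothetical, for illustration only.

```python
def fit_line(xs, ys):
    """Return least-squares estimates (b0, b1) for Y = b0 + b1*X + error."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Slope: sum of cross-deviations divided by sum of squared X deviations
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b1 = sxy / sxx
    # The fitted line always passes through the point (x_bar, y_bar)
    b0 = y_bar - b1 * x_bar
    return b0, b1


# Hypothetical data (not from the lecture)
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]
b0, b1 = fit_line(xs, ys)
print(f"intercept = {b0:.2f}, slope = {b1:.2f}")  # intercept = 0.09, slope = 1.97
```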

Comparing Models • In the linear regression example, we also computed a p-value • The null hypothesis was that the slope was zero • I.e., we compared the model $Y = \beta_0 + \beta_1 X + \varepsilon$ to $Y = \beta_0 + \varepsilon$ • So we can think of this statistical test as a comparison between two models • In fact, we can think of most (perhaps all) statistical tests as comparisons between two models Marshall University School of Medicine
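The two models being compared can be fitted side by side. In this hedged sketch on hypothetical data, the least-squares estimate of β0 in the horizontal-line model $Y = \beta_0 + \varepsilon$ is simply the mean of Y, so that model's residual sum of squares is the total sum of squares. The helper name `rss` is illustrative.

```python
def rss(ys, preds):
    """Residual sum of squares: squared distances between data and model predictions."""
    return sum((y - p) ** 2 for y, p in zip(ys, preds))


# Hypothetical data (not from the lecture)
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]
n = len(xs)
x_bar, y_bar = sum(xs) / n, sum(ys) / n

# Null model Y = b0 + error: the best horizontal line sits at the mean of Y
rss_null = rss(ys, [y_bar] * n)

# Linear model Y = b0 + b1*X + error, fitted by least squares
b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
      / sum((x - x_bar) ** 2 for x in xs))
b0 = y_bar - b1 * x_bar
rss_linear = rss(ys, [b0 + b1 * x for x in xs])

print(f"{rss_null:.3f} {rss_linear:.3f}")  # 38.900 0.091
```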

Hypothesis test of linear regression as a comparison of models

Why model comparison is not straightforward • It is not enough just to compare the residuals of the two models • Remember the residuals are the differences between the observed values and the model's predictions (our estimates of the error terms in the model) • A model with more parameters will always fit the data at least as closely: its residual sum of squares can only stay the same or decrease • However, the confidence intervals for its parameters and predictions will tend to be wider • So the model may be less useful for predicting future values
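The claim that an extra parameter never makes the fit worse can be checked numerically. The sketch below uses hypothetical, randomly generated data in which X has no relationship at all to Y, fits a slope anyway, and confirms that the linear model's residual sum of squares never exceeds that of the horizontal-line model. This holds because the horizontal line is the special case β1 = 0 of the linear model, so least squares can always do at least as well. The helper name is illustrative.

```python
import random


def rss_null_and_linear(xs, ys):
    """Return (RSS of horizontal-line model, RSS of least-squares line)."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    rss_null = sum((y - y_bar) ** 2 for y in ys)
    rss_lin = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))
    return rss_null, rss_lin


random.seed(1)
always_closer = True
for _ in range(1000):
    # X and Y are generated independently, so the true slope is zero
    xs = [random.gauss(0, 1) for _ in range(13)]
    ys = [random.gauss(0, 1) for _ in range(13)]
    rss_null, rss_lin = rss_null_and_linear(xs, ys)
    # Small tolerance for floating-point rounding
    if rss_lin > rss_null + 1e-12:
        always_closer = False
print(always_closer)  # True
```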

Comparing the models and R² • The total sum of squares of the distances of the points from the mean (a measure of the total variation in the data) is 155,642.3 • The sum of squares of the residuals from the regression line is 63,361.37 • The difference between these is 92,280.93, which is 59.3% of the total sum of squares • So the linear model reduces the sum of squares by 59.3% of the total: this is the definition of R², so R² = 0.593
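The arithmetic on this slide can be reproduced directly from the two sums of squares quoted above. This is only a sketch of the calculation; the variable names are illustrative. Equivalently, R² = 1 − (residual SS / total SS).

```python
ss_total = 155_642.3     # squared distances of points from the mean
ss_residual = 63_361.37  # squared distances of points from the regression line
ss_regression = ss_total - ss_residual  # improvement gained by adding the slope
r_squared = ss_regression / ss_total

print(f"{ss_regression:,.2f}")  # 92,280.93
print(f"{r_squared:.3f}")       # 0.593
```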

Interpreting the difference in variance • With a little algebra, you can show that the difference between the total sum of squares and the sum of the squares of the residuals equals the sum of the squares of the distances between the regression line and the mean (one distance for each data point) • So the regression line “accounts for 59.3% of the variance”
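The identity can be checked numerically: for a least-squares line that includes an intercept, total SS = regression SS + residual SS. The data below are hypothetical and the variable names illustrative.

```python
# Hypothetical data (not from the lecture)
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]
n = len(xs)
x_bar, y_bar = sum(xs) / n, sum(ys) / n
b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
      / sum((x - x_bar) ** 2 for x in xs))
b0 = y_bar - b1 * x_bar
fitted = [b0 + b1 * x for x in xs]

ss_total = sum((y - y_bar) ** 2 for y in ys)                 # data vs mean
ss_residual = sum((y - f) ** 2 for y, f in zip(ys, fitted))  # data vs line
ss_regression = sum((f - y_bar) ** 2 for f in fitted)        # line vs mean

print(f"{ss_total:.3f} = {ss_regression:.3f} + {ss_residual:.3f}")
# 38.900 = 38.809 + 0.091
```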

Computing a p-value for model comparison • To compute a p-value for the comparison of models, we look at both the sum of squares and the degrees of freedom for each model • The number of degrees of freedom is the number of data points minus the number of parameters in the model • We had 13 data points, so there are 12 degrees of freedom for the null hypothesis model (one parameter) and 11 degrees of freedom for the linear model (two parameters)

Mean squares and F-ratio • The same data presented in the format of an ANOVA table (we will see this later) • “Total” represents the total variation in the data • “Random” is the variation in the data around the regression line • “Regression” is the difference between them: the sum of squares of the distances from the regression line to the mean • The “mean square” for each row is its sum of squares divided by its degrees of freedom • The F-ratio is the ratio of the mean squares: MS(Regression) / MS(Random)
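Using the sums of squares and degrees of freedom from the previous slides, the mean squares and F-ratio work out as follows. This sketches the arithmetic only, assuming the standard layout in which the regression row's degrees of freedom are the difference between the two models' degrees of freedom (12 − 11 = 1); the variable names are illustrative.

```python
# Sums of squares and sample size quoted in the lecture
n = 13
ss_total = 155_642.3
ss_random = 63_361.37
ss_regression = ss_total - ss_random

# Degrees of freedom: data points minus parameters in each model
df_total = n - 1                       # horizontal-line model (1 parameter)
df_random = n - 2                      # linear model (2 parameters)
df_regression = df_total - df_random   # the one extra parameter (the slope)

# Mean square = sum of squares / degrees of freedom
ms_regression = ss_regression / df_regression
ms_random = ss_random / df_random
f_ratio = ms_regression / ms_random

print(f"MS regression = {ms_regression:.2f}")  # MS regression = 92280.93
print(f"MS random = {ms_random:.2f}")          # MS random = 5760.12
print(f"F = {f_ratio:.2f}")                    # F = 16.02
```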

Computing a p-value • The null hypothesis is that the “horizontal line model” is the correct model • i.e. the slope in the regression model is zero • If the null hypothesis were true, the F-ratio would be expected to be close to 1 (this is not obvious!) • Assuming the null hypothesis is true, the distribution of values of the F-ratio is a known distribution • Called the F-distribution • It depends on two different degrees of freedom: one for the numerator (here 12 - 11 = 1) and one for the denominator (here 11) • So a p-value can be computed • The p-value in this example is p = 0.0021
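Statistical software computes this tail probability directly (SciPy, for example, provides `scipy.stats.f.sf`). The dependency-free sketch below instead evaluates the upper tail of the F-distribution through the regularized incomplete beta function, using the continued-fraction (modified Lentz) method described in Numerical Recipes. The function names are hypothetical; the point is only to show where p = 0.0021 comes from, not to replace a statistics library.

```python
import math


def _beta_continued_fraction(a, b, x, max_iter=200, eps=3e-14):
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300  # guards against division by zero
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even step of the continued fraction
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        # Odd step of the continued fraction
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h


def regularized_incomplete_beta(a, b, x):
    """I_x(a, b) for 0 <= x <= 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    log_front = (math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                 + a * math.log(x) + b * math.log(1.0 - x))
    front = math.exp(log_front)
    # Use the symmetry I_x(a, b) = 1 - I_{1-x}(b, a) where it converges faster
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_continued_fraction(a, b, x) / a
    return 1.0 - front * _beta_continued_fraction(b, a, 1.0 - x) / b


def f_sf(f, df1, df2):
    """Upper-tail probability P(F > f) for an F(df1, df2) distribution."""
    return regularized_incomplete_beta(df2 / 2.0, df1 / 2.0, df2 / (df2 + df1 * f))


# F-ratio from the lecture's sums of squares (regression df = 1, random df = 11)
f_ratio = (92_280.93 / 1) / (63_361.37 / 11)
p_value = f_sf(f_ratio, 1, 11)
print(f"F = {f_ratio:.2f}, p = {p_value:.4f}")  # F = 16.02, p = 0.0021
```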

Recap • We re-examined the linear regression example and recast it as a comparison of statistical models • We can compute a p-value for the null hypothesis that the simpler model is “correct” • i.e. “as correct as the more complex model” • This is the same p-value we computed before • The R² value is the proportion of variance “explained by” the regression • We can do the same for other statistical tests!
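One way to see why the p-values agree: when the two models differ by a single parameter, the F-ratio equals the square of the usual t statistic for testing whether the slope is zero, and an F(1, df) variable is distributed as the square of a t(df) variable. The sketch below checks the first fact on hypothetical data, assuming the standard slope standard error $\mathrm{SE}(\beta_1) = \sqrt{\mathrm{MS}_\text{random} / S_{xx}}$.

```python
import math

# Hypothetical data (not from the lecture)
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]
n = len(xs)
x_bar, y_bar = sum(xs) / n, sum(ys) / n
sxx = sum((x - x_bar) ** 2 for x in xs)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
b0 = y_bar - b1 * x_bar
fitted = [b0 + b1 * x for x in xs]

ss_random = sum((y - f) ** 2 for y, f in zip(ys, fitted))
ss_regression = sum((f - y_bar) ** 2 for f in fitted)
ms_random = ss_random / (n - 2)

# t statistic for the null hypothesis that the slope is zero
t_stat = b1 / math.sqrt(ms_random / sxx)
# F-ratio from the model comparison (regression df = 1)
f_ratio = (ss_regression / 1) / ms_random

# The two printed values agree
print(f"t^2 = {t_stat ** 2:.1f}, F = {f_ratio:.1f}")
```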

A t-test considered as a comparison of models • Recall the GRHL2 expression in Basal A and Basal B cancer cells • We can recast this as a linear regression… • Let x = 0 for Basal A cells and x = 1 for Basal B cells • Our linear model is $\text{Expression} = \beta_0 + \beta_1 x + \varepsilon$, with the null hypothesis model $\text{Expression} = \beta_0 + \varepsilon$ • What is $\beta_1$? • Slope = increase in expression per one-unit increase in x • = difference in expression between Basal B and Basal A (x goes from 0 to 1) • = difference in means…
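A quick numerical check of this argument, using hypothetical expression values rather than the GRHL2 data: with Basal A coded as x = 0 and Basal B as x = 1, the least-squares intercept equals the Basal A mean and the slope equals the difference in means (Basal B minus Basal A).

```python
from statistics import mean

# Hypothetical expression values, for illustration only
basal_a = [2.0, 2.5, 1.5]
basal_b = [0.2, 0.0, -0.2]

xs = [0] * len(basal_a) + [1] * len(basal_b)  # indicator coding
ys = basal_a + basal_b

# Ordinary least-squares fit of Expression = b0 + b1*x + error
n = len(xs)
x_bar, y_bar = sum(xs) / n, sum(ys) / n
b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
      / sum((x - x_bar) ** 2 for x in xs))
b0 = y_bar - b1 * x_bar

print(f"intercept = {b0:.3f}, mean A = {mean(basal_a):.3f}")
# intercept = 2.000, mean A = 2.000
print(f"slope = {b1:.3f}, mean B - mean A = {mean(basal_b) - mean(basal_a):.3f}")
# slope = -2.000, mean B - mean A = -2.000
```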

t-test as a comparison of models

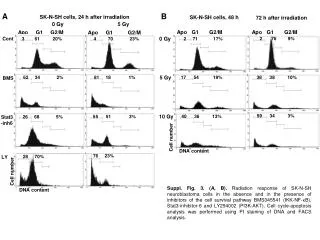

Results of running the t-test as a comparison of models • Running the linear regression gives an intercept estimate of 1.933 and a slope estimate of -1.861 • So the mean expression is 1.933 in Basal A cells and 1.933 - 1.861 = 0.072 in Basal B cells • The table of variances is:

Interpreting the table of variances • The total sum of squares (33.753) is the sum of the squared differences between each value and the overall mean • This, divided by the df (33.753/26 = 1.298), is the sample variance • The residual sum of squares is the sum of the squared differences between each expression value and its predicted value • The predicted value is just the mean for its basal type • This is the “within group” sum of squares • The regression sum of squares is the sum of the squared differences between the predicted values and the overall mean • This is the sum of squares of the differences between the group means and the overall mean • One squared difference for each data point • These interpretations will be really useful when we study ANOVA
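These interpretations can be checked on hypothetical two-group data (not the GRHL2 values). In the sketch below, the residual SS is computed around each group's own mean, the regression SS from the group means (one squared difference per data point, so each group contributes its size times the squared difference), and the total SS divided by n − 1 is compared with the sample variance from Python's `statistics` module.

```python
from statistics import mean, variance

# Hypothetical expression values, for illustration only
groups = {
    "Basal A": [2.0, 2.5, 1.5, 2.2],
    "Basal B": [0.2, 0.0, -0.2],
}
all_values = [v for vals in groups.values() for v in vals]
grand_mean = mean(all_values)

# Total SS: each value vs the overall mean
ss_total = sum((v - grand_mean) ** 2 for v in all_values)
# Residual ("within group") SS: each value vs its own group's mean
ss_within = sum((v - mean(vals)) ** 2 for vals in groups.values() for v in vals)
# Regression SS: one (group mean - overall mean)^2 term per data point
ss_between = sum(len(vals) * (mean(vals) - grand_mean) ** 2
                 for vals in groups.values())

print(abs(ss_total - (ss_within + ss_between)) < 1e-9)  # True
print(abs(ss_total / (len(all_values) - 1) - variance(all_values)) < 1e-9)  # True
```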