Sum Check



Sum Check: Where Quadratic-Polynomials Get Slim

Introduction • Our starting point is a gap-QS instance [HPS]. • We need to decrease (to a constant) the number of variables each quadratic-polynomial depends on. • We will add variables to those of the original gap-QS instance, to check consistency, and replace each polynomial with some new ones.

Introduction • We utilize the efficient consistent-reader above. • Our test thus assumes that the values for some preset sets of variables correspond to the point-evaluation of a low-degree polynomial (an assumption to be removed by plugging in the consistent reader).

Representing a Quadratic-Polynomial Given: a polynomial $P$. We write: $P(A) = \sum_{i,j \in [1..m]} \gamma(i,j) \cdot A(y_i) \cdot A(y_j)$, where $\gamma(i,j)$ is the coefficient of the monomial $y_i y_j$. Note that $P(A)$ means evaluating $P$ at a point $A = (a_1,\dots,a_m) \in \mathbb{F}^m$, and $A(y_i)$ means the assignment of $a_i$ to $y_i$.

Polynomials are hard, linear forms are easy Assume $A(y_{ij}) = A(y_i) \cdot A(y_j)$ for the special variables $y_{ij}$, $i,j \in [1..m]$, and also $A(y_{00}) = 1$. We can then write $P(A) = \sum_{i,j \in [1..m]} \gamma(i,j) \cdot A(y_{ij})$.
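The substitution on this slide can be checked numerically. The following is a minimal sketch, assuming a small prime field (the modulus `P_MOD = 101`), a coefficient table named `gamma`, and 0-based indices; all of these names are illustrative choices, not part of the slides.

```python
import random

P_MOD = 101  # hypothetical small prime; arithmetic is done in the field F_101


def eval_quadratic(gamma, a):
    """P(A) = sum over i,j of gamma[i][j] * a[i] * a[j], reduced mod P_MOD."""
    m = len(a)
    return sum(gamma[i][j] * a[i] * a[j] for i in range(m) for j in range(m)) % P_MOD


def product_variables(a):
    """Values of the special variables y_ij, each assigned the product a[i] * a[j]."""
    m = len(a)
    return [[a[i] * a[j] % P_MOD for j in range(m)] for i in range(m)]


def eval_linear_form(gamma, y):
    """The same polynomial, written as a linear form in the variables y_ij."""
    m = len(y)
    return sum(gamma[i][j] * y[i][j] for i in range(m) for j in range(m)) % P_MOD


# Random instance (fixed seed) on which both ways of evaluating P must agree.
rng = random.Random(0)
m = 4
gamma = [[rng.randrange(P_MOD) for _ in range(m)] for _ in range(m)]
a = [rng.randrange(P_MOD) for _ in range(m)]
forms_agree = eval_quadratic(gamma, a) == eval_linear_form(gamma, product_variables(a))
```

The linear form is only as good as the assumption $A(y_{ij}) = A(y_i) \cdot A(y_j)$, which is what the consistency checks in the rest of the deck are for.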

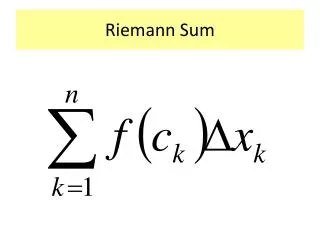

Checking Sum over an LDE Next, we associate each pair $ij$ with some $x \in H^d$: $P(A) = \sum_{i_1,\dots,i_d \in H} \gamma(i_1,\dots,i_d) \cdot A(i_1,\dots,i_d)$. Let $f: \mathbb{F}^d \to \mathbb{F}$ be the low-degree-extension of $\gamma \cdot A$: $f = \mathrm{LDE}(\gamma) \cdot \mathrm{LDE}(A)$. The LDE of both $\gamma$ and $A$ is of degree $|H|-1$ in each variable, hence of total degree $r = d(|H|-1)$, which makes $f$ of degree $2r$. We can therefore write: $P(A) = \sum_{i_1,\dots,i_d \in H} f(i_1,\dots,i_d)$.
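To make the low-degree extension concrete, here is a minimal sketch using Lagrange interpolation in each coordinate. The field size, the set `H`, the dimension `D`, and the two tables `gamma` and `a_tab` are hypothetical toy values; `pow(x, -1, p)` for the modular inverse assumes Python 3.8 or later.

```python
from itertools import product

P_MOD = 101      # hypothetical prime field F_101
H = [0, 1, 2]    # hypothetical subset H of the field
D = 2            # dimension d


def lagrange_basis(h, x):
    """Value at x of the degree-(|H|-1) polynomial that is 1 at h and 0 elsewhere on H."""
    num, den = 1, 1
    for g in H:
        if g != h:
            num = num * (x - g) % P_MOD
            den = den * (h - g) % P_MOD
    return num * pow(den, -1, P_MOD) % P_MOD


def lde(table, point):
    """Low-degree extension of table: H^D -> F, evaluated at any point of F^D."""
    total = 0
    for idx in product(H, repeat=D):
        weight = 1
        for h, x in zip(idx, point):
            weight = weight * lagrange_basis(h, x) % P_MOD
        total = (total + table[idx] * weight) % P_MOD
    return total


# Toy coefficient table and toy assignment, both indexed by H^D.
gamma = {idx: (idx[0] + 2 * idx[1] + 1) % P_MOD for idx in product(H, repeat=D)}
a_tab = {idx: (3 * idx[0] + idx[1] ** 2 + 2) % P_MOD for idx in product(H, repeat=D)}


def f(point):
    """f = LDE(gamma) * LDE(A), a function on all of F^D."""
    return lde(gamma, point) * lde(a_tab, point) % P_MOD


direct = sum(gamma[idx] * a_tab[idx] for idx in product(H, repeat=D)) % P_MOD
via_f = sum(f(idx) for idx in product(H, repeat=D)) % P_MOD
```

On points of $H^d$ each extension agrees with its table, so summing `f` over $H^d$ reproduces the original sum; off $H^d$ the function `f` is still defined, which is what the test later exploits.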

We show next a test that, for any assignment in which some variables correspond to a function $f: \mathbb{F}^d \to \mathbb{F}$ of degree $2r$, verifies that the sum of the values of $f$ over $H^d$ equals a given value. Each local-test accesses a much smaller number than $|H^d|$ of representation variables, and a single value of $f$. We will then replace the assumption that $f$ is a low-degree-function by evaluating that single accessed point with an efficient consistent-reader for $f$.

Partial Sums For any $j \in [0..d]$ let $\mathrm{Sum}_f(j, a_1,\dots,a_d) = \sum_{i_{j+1},\dots,i_d \in H} f(a_1,\dots,a_j,i_{j+1},\dots,i_d)$. That is, $\mathrm{Sum}_f$ is the function that ignores all coordinates with index larger than $j$, and instead sums over all points for which these coordinates are all in $H$. Proposition: Since $f$ is of degree $2r$, $\mathrm{Sum}_f$ is of degree $2rd$ (being the combination of $d$ degree-$r$ functions).

Properties of $\mathrm{Sum}_f$ Proposition: For every $a_1,\dots,a_d \in \mathbb{F}$ and any $j \in [0..d]$, • $\mathrm{Sum}_f(d, a_1,\dots,a_d) = f(a_1,\dots,a_d)$. • $\mathrm{Sum}_f(0, a_1,\dots,a_d) = \sum_{i_1,\dots,i_d \in H} f(i_1,\dots,i_d)$. • $\mathrm{Sum}_f(j, a_1,\dots,a_d) = \sum_{i \in H} \mathrm{Sum}_f(j+1, a_1,\dots,a_j,i,a_{j+2},\dots,a_d)$ (for $j < d$). Now we can assume $\mathrm{Sum}_f$ to be of degree $2r$ (and later plug in a consistent-reader) and verify property 2, namely that for $j=0$, $\mathrm{Sum}_f$ gives the appropriate sum of values of $f$.
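The three properties can be checked directly on a toy instance. This is a sketch under stated assumptions: the field size, the set `H`, and the polynomial `f` are hypothetical stand-ins chosen only so the example is small.

```python
from itertools import product

P_MOD = 101      # hypothetical prime field F_101
H = [0, 1, 2]    # hypothetical subset H of the field
D = 3            # dimension d


def f(x):
    """Hypothetical low-degree polynomial standing in for f."""
    x1, x2, x3 = x
    return (3 * x1 * x2 + x2 * x3 * x3 + 7 * x1 + 5) % P_MOD


def sum_f(j, a):
    """Keep the first j coordinates of a; sum f over all completions of the rest in H."""
    return sum(f(tuple(a[:j]) + tail) for tail in product(H, repeat=D - j)) % P_MOD


def check_properties(a):
    """Return True iff the three properties of Sum_f hold at the point a."""
    full_sum = sum(f(idx) for idx in product(H, repeat=D)) % P_MOD
    prop1 = sum_f(D, a) == f(a)
    prop2 = sum_f(0, a) == full_sum
    prop3 = all(
        sum_f(j, a) == sum(sum_f(j + 1, a[:j] + (i,) + a[j + 1:]) for i in H) % P_MOD
        for j in range(D)
    )
    return prop1 and prop2 and prop3


properties_hold = all(check_properties(a) for a in [(4, 5, 6), (0, 0, 0), (100, 37, 2)])
```

Note that the point `a` need not lie in $H^d$: the first $j$ coordinates range over the whole field, while only the summed-over coordinates are restricted to $H$.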

The Sum-Check Test Representation: One variable $[j, a_1,\dots,a_d]$ for every $a_1,\dots,a_d \in \mathbb{F}$ and $j \in [0..d]$, supposedly assigned $\mathrm{Sum}_f(j, a_1,\dots,a_d)$ (hence ranging over $\mathbb{F}$). Test: One local-test for every $a_1,\dots,a_d \in \mathbb{F}$, which accepts an assignment $A$ if for every $j \in [0..d-1]$, $A([j,a_1,\dots,a_d]) = \sum_{i \in H} A([j+1,a_1,\dots,a_j,i,a_{j+2},\dots,a_d])$.
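A minimal sketch of this local test on a toy instance follows. It assumes a tiny field (so the whole table of variables fits in memory), a hypothetical polynomial `f`, and that the index $j$ runs over $[0..d-1]$ so that $[j+1,\dots]$ is always a defined variable. It only illustrates the consistency condition; the low-degree assumption and the consistent reader are not modelled.

```python
from itertools import product

P_MOD = 5        # hypothetical tiny prime field F_5
H = [0, 1]       # hypothetical subset H of the field
D = 2            # dimension d


def f(x):
    """Hypothetical low-degree polynomial standing in for f."""
    x1, x2 = x
    return (2 * x1 * x2 + 3 * x1 + x2 + 4) % P_MOD


def sum_f(j, a):
    """Partial sum: keep the first j coordinates of a, sum over completions in H."""
    return sum(f(tuple(a[:j]) + tail) for tail in product(H, repeat=D - j)) % P_MOD


def honest_assignment():
    """Assign Sum_f(j, a) to the variable [j, a], for every a in F^D and j in [0..D]."""
    points = product(range(P_MOD), repeat=D)
    return {(j, a): sum_f(j, a) for a in points for j in range(D + 1)}


def local_test(assignment, a):
    """Accept iff, for every j in [0..D-1], the variable [j, a] equals the sum
    over i in H of the variables [j+1, a with its (j+1)-th coordinate set to i]."""
    for j in range(D):
        rhs = sum(assignment[(j + 1, a[:j] + (i,) + a[j + 1:])] for i in H) % P_MOD
        if assignment[(j, a)] != rhs:
            return False
    return True


table = honest_assignment()
all_points = list(product(range(P_MOD), repeat=D))
honest_ok = all(local_test(table, a) for a in all_points)

# Corrupt a single variable and run the local test that reads it.
corrupted = dict(table)
corrupted[(0, (3, 4))] = (corrupted[(0, (3, 4))] + 1) % P_MOD
rejects_corruption = not local_test(corrupted, (3, 4))
```

Each call to `local_test` reads `D * (1 + len(H))` variables, which matches the $O(d|H|)$ count on the next slide.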

How good is it? • The above test already drastically reduces the number of variables each linear-sum accesses, to $O(d|H|)$. Nevertheless, we would like to decrease it to a constant.

Embedding Extension • As we've seen, representing an assignment by an LDF might not be enough. • We want a technique of lowering the degree of the LDF (even at the price of moderately increasing the dimension).

Example: $P(X) = X^{12} + X^{25}$. Set $Y_1 = X$, $Y_2 = X^3$, $Y_3 = X^9$. Then $X^{12} = Y_3 Y_2$ and $X^{25} = Y_3^2 Y_2^2 Y_1$, so $P_e(Y_1,Y_2,Y_3) = Y_3 Y_2 + Y_3^2 Y_2^2 Y_1$. [Note that the degree of $P_e$ is much smaller than that of $P$; however, it is defined over $\mathbb{F}^3$ rather than over $\mathbb{F}$.]

Embedding-Map • Def: (Embedding-Map) Let $d$, $t$ and $r$ be natural numbers, $d > t$ ($r$ is the degree of the LDF that should be encoded). The embedding-map for parameters $(t,r,d)$ is a manifold $M: \mathbb{F}^t \to \mathbb{F}^d$ of degree $b^{l-1}$, as defined shortly. Let $l = d/t$, and let $b = r^{1/l}$. Given $X = (x_1,\dots,x_t) \in \mathbb{F}^t$, $M(X)$ is a vector $Y \in \mathbb{F}^d$, structured by concatenating sub-vectors $Y_1,\dots,Y_t$, where $Y_i = (Y_{(i,0)},\dots,Y_{(i,l-1)}) = (x_i, x_i^{b}, x_i^{b^2}, \dots, x_i^{b^{l-1}})$. $M(X) = Y = (Y_1,\dots,Y_t,0,\dots,0) \in \mathbb{F}^d$ ($Y$ is padded with zeros if necessary, so that it has $d$ coordinates).
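The definition can be tried out on the example $X^{12} + X^{25}$ with $b = 3$ and $l = 3$. The sketch below assumes a small prime field (`P_MOD = 101`) and takes $b$ and $l$ as explicit arguments rather than deriving them from $r$, $t$ and $d$; the function names are illustrative.

```python
P_MOD = 101  # hypothetical prime field F_101


def embedding_map(x, b, l, d):
    """M(X): for each x_i emit (x_i, x_i^b, ..., x_i^(b^(l-1))), then zero-pad to d coordinates."""
    y = []
    for xi in x:
        y.extend(pow(xi, b ** k, P_MOD) for k in range(l))
    return y + [0] * (d - len(y))


def p(x):
    """The original polynomial P(X) = X^12 + X^25, of degree 25."""
    return (pow(x, 12, P_MOD) + pow(x, 25, P_MOD)) % P_MOD


def p_e(y1, y2, y3):
    """The extension P_e(Y1, Y2, Y3) = Y3*Y2 + Y3^2 * Y2^2 * Y1, of total degree 5."""
    return (y3 * y2 + y3 ** 2 * y2 ** 2 * y1) % P_MOD


# P_e composed with M agrees with P at every point of the field.
agrees = all(p(x) == p_e(*embedding_map([x], b=3, l=3, d=3)) for x in range(P_MOD))
```

With $t = 1$ there is a single block $(x, x^3, x^9)$ and no padding; calling `embedding_map([2], 3, 3, 4)` instead shows the zero-padding to $d = 4$ coordinates.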

Encoding an LDF • Given an $[r,t]$-LDF $P$ over $\mathbb{F}^t$ we construct its proper extension $P_e$: • Each term $(x_i)^j$ in $P$ is represented by a low-degree monomial $m_{(i,j)}: \mathbb{F}^d \to \mathbb{F}$ over the coordinates $(Y_{(i,0)},\dots,Y_{(i,l-1)})$, which correspond to powers of $x_i$: $m_{(i,j)}(Y) = (Y_{(i,0)})^{\alpha_0} (Y_{(i,1)})^{\alpha_1} \cdots (Y_{(i,l-1)})^{\alpha_{l-1}}$, where $\alpha_0 \alpha_1 \dots \alpha_{l-1}$ is the base-$b$ representation of $j$, that is, $\sum_{k=0}^{l-1} \alpha_k b^k = j$ and $0 \le \alpha_k < b$ for all $k$. • Note that $P_e$ is not just an encoding of $P$, but is actually an extension of the function that $P$ induces on $M(\mathbb{F}^t)$.
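The base-$b$ encoding of a single power $x^j$ can be sketched as follows. The digit symbol (`alpha` in the comments), the field size, and the helper names are assumptions made for this illustration.

```python
P_MOD = 101  # hypothetical prime field F_101


def base_b_digits(j, b, l):
    """Digits (alpha_0, ..., alpha_{l-1}) with sum(alpha_k * b**k) == j and 0 <= alpha_k < b."""
    digits = []
    for _ in range(l):
        digits.append(j % b)
        j //= b
    if j != 0:
        raise ValueError("j does not fit in l base-b digits")
    return digits


def eval_monomial(digits, block):
    """Evaluate the product of block[k] ** digits[k] over one block (Y_(i,0), ..., Y_(i,l-1))."""
    value = 1
    for alpha, y in zip(digits, block):
        value = value * pow(y, alpha, P_MOD) % P_MOD
    return value


def block_for(x, b, l):
    """The block of the embedding map that belongs to a single coordinate x."""
    return [pow(x, b ** k, P_MOD) for k in range(l)]


# For every exponent j below b**l, the low-degree monomial reproduces x**j.
b, l = 3, 3
matches = all(
    eval_monomial(base_b_digits(j, b, l), block_for(x, b, l)) == pow(x, j, P_MOD)
    for x in range(P_MOD)
    for j in range(b ** l)
)
```

For $j = 25$ and $b = 3$ the digits are $(1, 2, 2)$, which is exactly the monomial $Y_1 Y_2^2 Y_3^2$ from the example slide.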

The degree of the Proper-Extension • The degree of $P_e$ in each variable is no more than $b-1$. • $P_e$ has $d$ variables, therefore $\deg(P_e) \le d(b-1) < db$. • This roughly equals $d \cdot r^{t/d}$, which is much smaller than $r$ ($= \deg(P)$) as long as $d$ is not very large.
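A quick calculation shows how much the degree drops. The slide does not say how $l = d/t$ and $b = r^{1/l}$ are rounded, so this sketch assumes $l = \lfloor d/t \rfloor$ and $b = \lceil r^{1/l} \rceil$; both roundings are assumptions of the example.

```python
def integer_root_ceil(r, l):
    """Smallest natural number b with b**l >= r (an assumed rounding of r**(1/l))."""
    b = 1
    while b ** l < r:
        b += 1
    return b


def degree_bound(r, t, d):
    """The bound d*(b-1) on deg(P_e), with l = d // t and b = ceil(r**(1/l))."""
    l = d // t
    b = integer_root_ceil(r, l)
    return d * (b - 1)


small = degree_bound(25, 1, 3)         # the X^12 + X^25 example: b = 3, bound 3 * 2
large = degree_bound(10 ** 6, 2, 12)   # l = 6, b = 10, bound 12 * 9
```

In the second call a degree of $10^6$ over 2 variables is traded for a degree bound of 108 over 12 variables.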

Linearizing-Extension • If the parameters $r$ and $t$ (of an $[r,t]$-LDF) are small enough, namely $\binom{r+t}{r} < d$, it is possible to transform this $[r,t]$-LDF into a linear LDF over $\mathbb{F}^d$. • This technique, adopted from [ALM+92], is similar to the embedding-extension technique. • This is the (more) formal representation of what we saw in [Polynomials are hard, linear forms are easy].

Linearizing-Extension • Take for example the LDF: $P(x,z) = 5x^2z^3 + 2xz + 3x$. • We replace it by $P_e(Y_{x^2z^3},Y_{xz},Y_x) = 5Y_{x^2z^3} + 2Y_{xz} + 3Y_x$. We now have three coordinates (instead of two), but a linear function. $P_e$ encodes $P$ in the sense that if we assign $Y_{x^2z^3} = x^2z^3$, $Y_{xz} = xz$ and $Y_x = x$, then $P_e(Y_{x^2z^3},Y_{xz},Y_x) = P(x,z)$.

Linearizing-Map For the general case: Def: Let $d$, $t$ and $r$ be natural numbers. Let $S = \{m_1,\dots,m_s\}$ be the set of monomials of degree at most $r$ over $t$ variables. The linearizing-map for parameters $(t,r,d)$ is the function $M: \mathbb{F}^t \to \mathbb{F}^d$ defined by $M(x_1,\dots,x_t) = (Y_{m_1},\dots,Y_{m_s},0,\dots,0)$, where $Y_{m_i} = m_i(x_1,\dots,x_t)$ (padding with zeros to have $d$ coordinates). $M(\mathbb{F}^t)$ is therefore a manifold in $\mathbb{F}^d$ of degree $r$.

Show that, as $M$ has only $d$ coordinates, we must have $|S| = \binom{r+t}{r} \le d$.

Encoding and Decoding • Consider an $[r,t]$-LDF $P$ over $\mathbb{F}^t$. The proper-extension of $P$ is a linear LDF $P_e$ over $\mathbb{F}^d$ such that $P_e \circ M = P$. • To construct $P_e$, we write $P$ as a linear combination of monomials, $P = \sum_{i=1}^{s} \gamma_i m_i$. • $P_e$ is then defined by $P_e(Y_{m_1},\dots,Y_{m_s}) = \sum_{i=1}^{s} \gamma_i Y_{m_i}$. • The other direction (decoding from a linear LDF over $\mathbb{F}^d$ to an LDF over $\mathbb{F}^t$) is left to the reader.
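The encoding direction can be sketched on the earlier example $P(x,z) = 5x^2z^3 + 2xz + 3x$. The sketch assumes a small prime field, takes $S$ to be the monomials of total degree at most $r$, represents each monomial by its exponent tuple, and fixes an arbitrary ordering of $S$; these are all illustrative choices.

```python
from itertools import product
from math import comb

P_MOD = 101  # hypothetical prime field F_101


def monomials(t, r):
    """Exponent tuples (e_1, ..., e_t) with e_1 + ... + e_t <= r, in a fixed order."""
    return [e for e in product(range(r + 1), repeat=t) if sum(e) <= r]


def linearizing_map(x, r, d):
    """M(x): the value of every monomial at x, zero-padded to d coordinates."""
    ys = []
    for e in monomials(len(x), r):
        value = 1
        for xi, ei in zip(x, e):
            value = value * pow(xi, ei, P_MOD) % P_MOD
        ys.append(value)
    return ys + [0] * (d - len(ys))


def proper_extension(coeffs, t, r):
    """Coefficient vector of the linear P_e; coeffs maps exponent tuples to coefficients."""
    return [coeffs.get(e, 0) for e in monomials(t, r)]


def eval_linear(vec, y):
    """Evaluate the linear function with coefficient vector vec at y (padding is ignored)."""
    return sum(c * v for c, v in zip(vec, y)) % P_MOD


# P(x, z) = 5 x^2 z^3 + 2 x z + 3 x, so t = 2 and r = 5.
t, r, d = 2, 5, 24
p_coeffs = {(2, 3): 5, (1, 1): 2, (1, 0): 3}
p_e = proper_extension(p_coeffs, t, r)


def p(x, z):
    return (5 * x ** 2 * z ** 3 + 2 * x * z + 3 * x) % P_MOD


agrees = all(
    eval_linear(p_e, linearizing_map((x, z), r, d)) == p(x, z)
    for x in range(P_MOD)
    for z in range(P_MOD)
)
monomial_count = len(monomials(t, r))
```

Here `monomial_count` is 21, which equals `comb(r + t, r)`, so any $d \ge 21$ leaves room for all coordinates; the slide's three-coordinate version simply drops the monomials whose coefficient is zero.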

The Composition-Recursion Consistent Reader • We now have sufficient tools to construct the final consistent reader, which will access only a constant number of variables. • We do so by recursively applying the (hyper)-cube-vs.-point reader upon itself.

The Composition-Recursion Consistent Reader • The consistent-reader asks for the value of a (hyper-)cube (supposedly an LDF) $P$. It then evaluates it at a point $x$ and compares the result with the value assigned to $x$. • This will be done by “compressing” this LDF (using the embedding-extension) and sending it to another consistent reader.

[Figure: the embedding map, for $t < d$, takes the hyper-cube (an affine subspace) in $\mathbb{F}^t$ to the manifold representing the hyper-cube in the higher-dimension space $\mathbb{F}^d$.]

The Composition-Recursion Consistent Reader • Every (hyper-)cube induces a different embedding-map, followed by a different consistent-reader. We will therefore get a tree-like structure. • Of course this tree-like structure should be constructed in polynomial time, and be of polynomial size. It will follow from the height (the recursion depth) being bounded by a constant.

[Figure: a different map for every different affine-subspace (Map 1, Map 2, Map 3, Map 8785, …).]

Contraction - Expansion • For every cube (affine subspace) we apply the embedding-extension. • After several applications of this contraction-expansion procedure, we get an LDF of degree small enough for the application of the linearizing-extension. (When we say “we get an LDF”, we mean that this holds if the original function was an LDF.)

[Figure: $O(1)$ levels of embedding-extension maps, followed by linearization maps.]

The Composition-Recursion Consistent Reader • We’ll then have a representation of the original LDF by linear functions. • Which means that a (hyper)-cube variable (in the new space) can be represented by a constant number of scalars.

The Composition-Recursion Consistent Reader • Each cube-vs.-point reader adds a constant number of scalars (3). • The depth of the recursion is constant. • Hence the composition-recursion consistent reader accesses only a constant number of scalars. • Thus proving gap-QS $\in$ PCP$[O(1), \mathbb{F}, 2/|\mathbb{F}|]$.

Summary • We started with [HPS]: gap-QS$[n, \mathbb{F}, 2|\mathbb{F}|^{-1}]$ is NP-hard as long as $|\mathbb{F}| \le n^c$ for some $c > 0$. • We recursively applied the (hyper-)cube-vs.-point consistent reader with the Embedding-Extension and the Linearizing-Extension techniques to construct the CR-consistent-reader, which accesses only a constant number of variables. • Thus we proved that gap-QS$[O(1), \mathbb{F}, 2/|\mathbb{F}|]$ is NP-hard, as long as $\log|\mathbb{F}| \le \log^{\beta} n$ for any constant $\beta < 1$.