Test Worthiness


Test Worthiness Chapter 3

Test Worthiness • Four cornerstones to test worthiness: • Validity • Reliability • Practicality • Cross-cultural Fairness • But first, we must learn one statistical concept: • Correlation Coefficient

Correlation Coefficient • Correlation – • Statistical expression of the relationship between two sets of scores (or variables) • Positive correlation • Increase in one variable accompanied by increase in other • “Direct relationship” - They move in the same direction • Negative correlation • Increase in one variable accompanied by decrease in other • “Inverse relationship” – Variables move in opposite directions

Examples of Correlation Relationships • What is the relationship between: • Gasoline prices and grocery prices? • Grocery prices and good weather? • Stress and depression? • Depression and job productivity? • Partying and grades? • Study time and grades?

Correlation (Cont’d) • Correlation coefficient (r) • A number between -1 and +1 that indicates the direction and strength of the relationship • As "r" approaches +1, the strength of a direct (positive) relationship increases • As "r" approaches -1, the strength of an inverse (negative) relationship increases • As "r" approaches 0, the relationship weakens; at r = 0 there is no linear relationship

Correlation (cont) • [Figure: a number line from -1 (strong, inverse) through 0 (weak) to +1 (strong, direct)] • Correlation coefficient “r”: • 0 to +.3 = weak • +.4 to +.6 = medium • +.7 to +1.0 = strong • 0 to -.3 = weak • -.4 to -.6 = medium • -.7 to -1.0 = strong
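A minimal Python sketch of how r is computed (the Pearson product-moment formula) and labeled with the bands above. The function names `pearson_r` and `describe_strength` are hypothetical, and because the slide's bands leave gaps (between .3 and .4, and between .6 and .7), the cutoffs at .4 and .7 are an assumption. The study-time data are invented for illustration.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two score lists of equal length (n >= 2)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-variation: do the paired scores fall above/below their means together?
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("r is undefined when a list has no variation")
    return sxy / sqrt(sxx * syy)


def describe_strength(r):
    """Label r as weak/medium/strong and direct/inverse.

    Assumed cutoffs: |r| < .4 weak, .4 <= |r| < .7 medium, |r| >= .7 strong.
    """
    if r == 0:
        return "no relationship"
    size = abs(r)
    if size >= 0.7:
        strength = "strong"
    elif size >= 0.4:
        strength = "medium"
    else:
        strength = "weak"
    direction = "direct" if r > 0 else "inverse"
    return f"{strength} {direction}"


# Hypothetical data: weekly study hours and course grades for five students
study_hours = [2, 4, 6, 8, 10]
grades = [70, 65, 80, 78, 88]
r = pearson_r(study_hours, grades)
print(f"r = {r:.2f} ({describe_strength(r)})")
print(describe_strength(0.35), "|", describe_strength(-0.67))  # weak direct | medium inverse
```

The sign of r carries the direction and its absolute value carries the strength, which is why the labeling function looks at `abs(r)` and the sign separately.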

Correlation Examples • r = .35 • r = -.67

Correlation Scatterplots • How can we plot two sets of scores, such as those in the previous examples, on a graph? • Place person A’s SAT score on the x-axis and his/her GPA on the y-axis • Repeat for persons B, C, D, etc. • The resulting graph is a “scatterplot.”

Scatterplots (cont) • What correlation (r) do you think this graph has? • How about this correlation?

More Scatterplots • What might this correlation be? • This correlation?

More Scatterplots • Last one: this correlation?

Coefficient of Determination (Shared Variance) • [Figure: shared trait variance between depression and anxiety] • The square of the correlation (r = .80, r² = .64) • Reflects the underlying factors common to both variables that account for their relationship • Correlation between depression and anxiety = .85 • Shared variance = .72 (.85² ≈ .72) • What factors might underlie both depression and anxiety?
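The arithmetic on this slide is just squaring r. A short Python sketch (the function name `shared_variance` is hypothetical) reproduces both slide examples and shows that the sign of r drops out, so an inverse correlation of the same size implies the same shared variance.

```python
def shared_variance(r):
    """Coefficient of determination r**2: proportion of variance two measures share."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("r must lie between -1 and +1")
    return r ** 2


examples = [
    ("slide example", 0.80),
    ("depression and anxiety", 0.85),
    ("same size, inverse direction", -0.85),
]
for label, r in examples:
    r2 = shared_variance(r)
    # e.g. r = .85 gives r^2 = .7225, i.e. about 72% shared variance
    print(f"{label}: r = {r:+.2f}, r^2 = {r2:.2f} ({r2:.0%} shared)")
```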

Validity • What is validity? • The degree to which all accumulated evidence supports the intended interpretation of test scores for the intended purpose • Lay Def’n: Does a test measure what it is supposed to measure? • It is a unitary concept; however, there are 3 general types of validity evidence • Content Validity • Criterion-Related Validity • Construct Validity

Content Validity • Is the content of the test valid for the kind of test it is? • Developers must show evidence that the domain was systematically analyzed and concepts are covered in correct proportion • Four-step process: • Step 1 - Survey the domain • Step 2 - Content of the test matches the above domain • Step 3 - Specific test items match the content • Step 4 - Analyze relative importance of each objective (weight)

Content Validity (cont) • [Figure: the four-step process] • Step 1: Survey the domain • Step 2: Content matches the domain • Step 3: Test items reflect the content • Step 4: Items adjusted for relative importance: Item 1 × 3, Item 2 × 2, Item 3 × 1, Item 4 × 2, Item 5 × 2.5
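One way to read Step 4 is that each item's score is multiplied by its importance weight before totaling. The Python sketch below assumes that reading, takes the multipliers from the slide (×3, ×2, ×1, ×2, ×2.5), and assumes each item is scored 0 (wrong) or 1 (right). The names `ITEM_WEIGHTS`, `weighted_score`, and `weight_shares`, and the test taker's answers, are hypothetical.

```python
# Importance weights from the slide's Step 4 (assumption: "x 3" means "multiply by 3")
ITEM_WEIGHTS = {"Item 1": 3, "Item 2": 2, "Item 3": 1, "Item 4": 2, "Item 5": 2.5}


def weighted_score(item_scores, weights=ITEM_WEIGHTS):
    """Total score after multiplying each item's raw score by its weight."""
    missing = set(weights) - set(item_scores)
    if missing:
        raise KeyError(f"no score recorded for: {sorted(missing)}")
    return sum(item_scores[item] * weight for item, weight in weights.items())


def weight_shares(weights=ITEM_WEIGHTS):
    """Share of the maximum weighted total each item accounts for (0/1 scoring assumed)."""
    total = sum(weights.values())
    return {item: weight / total for item, weight in weights.items()}


# Hypothetical test taker who answers items 1, 2, and 5 correctly
answers = {"Item 1": 1, "Item 2": 1, "Item 3": 0, "Item 4": 0, "Item 5": 1}
print(weighted_score(answers), "of", sum(ITEM_WEIGHTS.values()))  # 7.5 of 10.5
for item, share in weight_shares().items():
    print(f"{item}: {share:.0%} of the maximum score")
```

Under this reading, a heavily emphasized objective (Item 1) counts three times as much toward the total as a minor one (Item 3), so the test's score mirrors the domain's relative importance.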

Content Validity (cont) • Face Validity • Not a true type of content validity • A quick look at the “face” value of the questions • Sometimes questions may not “seem” to measure the content but do (e.g., the panic disorder example in the book, p. 48) • How might you show content validity for an instrument that measures depression?

Criterion-Related Validity: Concurrent and Predictive Validity • Criterion-Related Validity • The relationship between the test and a criterion the test should be related to • Two types: • Concurrent Validity – Does the instrument relate to another criterion “now” (in the present)? • Predictive Validity – Does the instrument relate to another criterion in the future?

Criterion-Related Validity: Concurrent Validity • Example 1 • 100 clients take the BDI • Correlate their scores with clinicians’ ratings of depression for the same clients. • Example 2 • 500 people take a test of alcoholism tendency • Correlate their scores with significant others’ ratings of how much alcohol they drink.

Criterion-Related Validity: Predictive Validity • Examples: • SAT scores correlated with how well students do in college. • ASVAB scores correlated with success at jobs. • GREs correlated with success in graduate school. (See Table 3.1, p. 50) • Do Exercise 3.2, p. 49

Construct Validity • Construct Validity • Extent to which the instrument measures a theoretical or hypothetical trait • Many counseling and psychological constructs are complex, ambiguous and not easily agreed upon: • Intelligence • Self-esteem • Empathy • Other personality characteristics

Construct Validity (cont) • Four methods of gathering evidence for construct validity: • experimental design • factor analysis • convergence with other instruments • discrimination with other measures.

Construct Validity: Experimental Design • Creating hypotheses and research studies that show the instrument captures the intended concept • Example: • Hypothesis: The “Blank” depression test will discriminate between clinically depressed clients and “normals.” • Method: • Identify 100 clinically depressed clients • Identify 100 “normals” • Show through statistical analysis that the two groups’ scores differ • Second Example: Clinicians measure their depressed clients before, and then after, 6 months of treatment

Construct Validity: Factor Analysis • Factor analysis: • Examines the statistical relationships among a test’s subscales • How similar or different are the subscales? • Example: • Develop a depression test with three subscales: self-esteem, suicidal ideation, and hopelessness. • Correlate the subscales: • Self-esteem and suicidal ideation: .35 • Self-esteem and hopelessness: .25 • Hopelessness and suicidal ideation: .82 • What implications might these correlations have for this test?

Construct Validity: Convergent Validity • Convergent Evidence – • Comparing test scores to other, well-established tests • Example: • Correlate new depression test against the BDI • Is there a good correlation between the two? • Implications if correlation is extremely high? (e.g., .96) • Implications if correlation is extremely low? (e.g., .21)

Construct Validity: Discriminant Validity • Discriminant Evidence – • Correlate test scores with other tests that are different • Hope to find a meager correlation • Example: • Compare new depression test with an anxiety test. • Implications if correlation is extremely high? (e.g., .96) • Implications if correlation is extremely low? (e.g., .21)

Validity Recap • Three types of validity: • Content • Criterion-related: concurrent, predictive • Construct: experimental design, factor analysis, convergent, discriminant

Reliability • Accuracy or consistency of test scores • Would you get the same score if you took the test over, and over, and over again? • Reported as a reliability (correlation) coefficient • The closer to r = 1.0, the less error in the test

Three Ways of Determining Reliability • Test-Retest • Alternate, Parallel, or Equivalent Forms • Internal Consistency a. Coefficient Alpha b. Kuder-Richardson c. Split-half or Odd Even

Test-Retest Reliability • [Graphic: the same form (A) given twice over time] • Give the test twice to the same group of people. • E.g., take the first test in this class and, very soon after, take it again. Are the scores about the same? • Scores for persons 1 to 5 (others not shown): • 1st test: 35, 42, 43, 34, 38 • 2nd test: 36, 44, 41, 34, 37 • Problem: A person can look up answers between the first and second testing
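Test-retest reliability is the correlation between the two administrations. The Python sketch below uses only the five score pairs shown on the slide (the "others" are omitted), so the result illustrates the calculation rather than reporting the reliability of any real test; the helper name `pearson_r` is hypothetical.

```python
from math import sqrt

# Scores for persons 1-5 from the slide
first_test = [35, 42, 43, 34, 38]
second_test = [36, 44, 41, 34, 37]


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


test_retest_r = pearson_r(first_test, second_test)
print(f"test-retest reliability estimate: r = {test_retest_r:.2f}")  # r = 0.92 for these five pairs
```

An r of about .92 says these five people kept nearly the same relative standing across the two administrations, which is what a high test-retest coefficient reflects.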

Alternate, Parallel, or Equivalent Forms Reliability • [Graphic: two forms, A and B] • Have two forms of the same test • Give the same group both forms at about the same time • Correlate scores on the first form with scores on the second form • Problem: Are two “equivalent” forms ever really equivalent?

Internal Consistency Reliability • How do individual items relate to each other and to the test as a whole? • Internal consistency reliability looks “within” the test rather than using multiple administrations • High-speed computers and the need for only one test administration have made internal consistency popular • Three types: • Split-Half or Odd-Even • Cronbach’s Coefficient Alpha • Kuder-Richardson

Split-half or Odd-even Reliability • Correlate one half of the test with the other half for everyone who took the test • Example: • Person 1 scores 16 on the first half of the test and 16 on the second half • Person 2 scores 14 on the first half and 18 on the second half • Also get scores for persons 3, 4, 5, etc. • Correlate everyone’s first-half scores with their second-half scores • The correlation = the reliability estimate • Use the Spearman-Brown formula to correct for the shorter length of each half

Split-half or Odd-even Reliability (Internal Consistency) • Example continued (score on 1st half / score on 2nd half): • Person 1: 16 / 16 • Person 2: 14 / 18 • Person 3: 12 / 20 • Person 4: 15 / 17 • And so forth… • Problem: Are any two halves really equivalent?
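The slides name the Spearman-Brown formula without stating it; for doubling a test's length it is r_full = 2 × r_half / (1 + r_half). The Python sketch below applies it to an odd-even split. The 5-person, 6-item response matrix is invented for illustration, and the names `RESPONSES`, `spearman_brown`, and `odd_even_reliability` are hypothetical.

```python
from math import sqrt

# Hypothetical right (1) / wrong (0) responses: one row per person, one column per item
RESPONSES = [
    [1, 1, 1, 1, 1, 1],
    [1, 1, 1, 0, 1, 1],
    [1, 0, 1, 1, 0, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 1],
]


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def spearman_brown(r_half, length_factor=2.0):
    """Spearman-Brown prophecy: estimated reliability of a test length_factor times longer."""
    return length_factor * r_half / (1 + (length_factor - 1) * r_half)


def odd_even_reliability(responses):
    """Correlate odd-item and even-item totals, then correct for the halved length."""
    odd_totals = [sum(row[0::2]) for row in responses]   # items 1, 3, 5, ...
    even_totals = [sum(row[1::2]) for row in responses]  # items 2, 4, 6, ...
    r_half = pearson_r(odd_totals, even_totals)
    return r_half, spearman_brown(r_half)


r_half, r_full = odd_even_reliability(RESPONSES)
print(f"half-test r = {r_half:.2f}, Spearman-Brown corrected = {r_full:.2f}")
```

Because each half is only half as long as the full test, the raw half-test correlation understates reliability; with these invented data the correction raises it from about .77 to about .87. A first-half/second-half split would use `row[:k // 2]` and `row[k // 2:]` instead of the odd/even slices.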

Cronbach’s Alpha and Kuder-Richardson (Internal Consistency) • Other types of Internal Consistency: • Average correlation of all of the possible split-half reliabilities • Two popular types: • Cronbach’s Alpha • Kuder-Richardson (KR-20, KR-21)
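Cronbach's alpha is usually computed from item and total-score variances rather than by literally averaging every split-half coefficient: alpha = k / (k - 1) × (1 - sum of item variances / total-score variance), where k is the number of items. KR-20 is the same formula for right/wrong items, with each item variance written as p × q (proportion passing times proportion failing). The Python sketch below uses an invented 5-person, 6-item response matrix; the function names are hypothetical, and KR-21 (a simplified variant that assumes all items are equally difficult) is not shown.

```python
# Hypothetical right (1) / wrong (0) responses: one row per person, one column per item
RESPONSES = [
    [1, 1, 1, 1, 1, 1],
    [1, 1, 1, 0, 1, 1],
    [1, 0, 1, 1, 0, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 1],
]


def pop_variance(values):
    """Population variance (divide by n), used consistently for items and totals."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def cronbach_alpha(responses):
    """Coefficient alpha from item variances and total-score variance."""
    k = len(responses[0])  # number of items
    items = list(zip(*responses))  # transpose: one tuple of scores per item
    item_var_sum = sum(pop_variance(item) for item in items)
    total_var = pop_variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_var_sum / total_var)


def kr20(responses):
    """Kuder-Richardson 20 for dichotomous (0/1) items."""
    k = len(responses[0])
    n = len(responses)
    pq_sum = 0.0
    for item in zip(*responses):
        p = sum(item) / n  # proportion answering the item correctly
        pq_sum += p * (1 - p)
    total_var = pop_variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - pq_sum / total_var)


alpha = cronbach_alpha(RESPONSES)
kr = kr20(RESPONSES)
print(f"Cronbach's alpha = {alpha:.2f}, KR-20 = {kr:.2f}")
```

For 0/1 items the two coefficients coincide when population variances are used throughout; for items with more than two score points (e.g., rating scales) only alpha applies.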

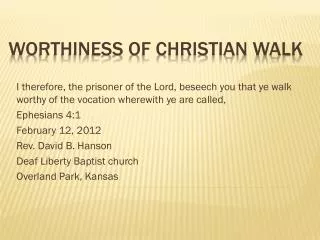

Cross-Cultural Fairness • Issues of bias in testing did not get much attention until the civil rights movement of the 1960s. • A series of court decisions established that it was unfair to use tests to track students in schools. • Black and Hispanic students were being unfairly compared to White students, who were not their norm group.

Cross-Cultural Fairness • Americans with Disabilities Act: • Accommodations must be made for individuals taking tests for employment • Tests must be shown to be relevant to the job in question. • Buckley Amendment (FERPA): • Right to access school records, including test records. • Parents have the right to their child’s records

Cross-Cultural Fairness • Carl Perkins Act: • Individuals with a disability have the right to vocational assessment, counseling and placement. • Civil Rights Acts: • Series of laws concerned with tests used in employment and promotion. • Freedom of Information Act: • Assures access to federal records, including test records. • Most states have expanded this law so that it also applies to state records.

Cross-Cultural Fairness • Griggs v. Duke Power Company • Tests used for hiring and advancement must show the ability to predict job performance. • Example: An employer cannot give an intelligence test to applicants for a road-worker job. • IDEIA and PL 94-142: • Assure the right of students (ages 2 to 21) suspected of having a learning disability to be tested at the school’s expense. • Child Study Teams and IEPs are set up when necessary

Cross-Cultural Fairness • Section 504 of the Rehabilitation Act: • Relative to assessment, any instrument used to measure appropriateness for a program or service must measure the individual’s ability, not be a reflection of his or her disability. • The Use of Intelligence Tests with Minorities: Confusion and Bedlam • (See Insert 3.1, p. 58)

Disparities in Ability • Cognitive differences between people exist; however, they are clouded by issues of SES, prejudice, stereotyping, etc. Are there real differences? • Why do differences exist, and what can be done to eliminate them? • Often seen as environmental (e.g., No Child Left Behind) • Exercise 3.4, p. 58: Why might there be differences among cultural groups in their ability scores?

Practicality • Several practical concerns: • Time • Cost • Format (clarity of print, print size, sequencing of questions, and types of questions) • Readability • Ease of Administration, Scoring, and Interpretation

Selecting & Administering Tests • Five Steps: • Determine your client’s goals • Choose instruments to reach client goals • Access information about possible instruments: • Source books: e.g., Buros Mental Measurements Yearbook and Tests in Print • Publisher resource catalogs • Journals in the field • Books on testing • Experts • The internet • Examine validity, reliability, cross-cultural fairness, and practicality • Make a wise choice