Introduction to Machine Learning

Introduction to Machine Learning Manik Varma Microsoft Research India http://research.microsoft.com/~manik manik@microsoft.com

Binary Classification • Is this person Madhubala or not? • Is this person male or female? • Is this person beautiful or not?

Multi-Class Classification • Is this person Madhubala, Lalu or Rakhi Sawant? • Is this person happy, sad, angry or bemused?

Ordinal Regression • Is this person very beautiful, beautiful, ordinary or ugly?

Regression • How beautiful is this person on a continuous scale of 1 to 10? 9.99?

Ranking • Rank these people in decreasing order of attractiveness.

Multi-Label Classification • Tag this image with the set of relevant labels from {female, Madhubala, beautiful, IITD faculty}

Are These Problems Distinct? • Can regression solve all these problems? • Binary classification – predict $p(y=1 \mid x)$ • Multi-Class classification – predict $p(y=k \mid x)$ • Ordinal regression – predict $p(y=k \mid x)$ • Ranking – predict and sort by relevance • Multi-Label Classification – predict $p(y \in \{\pm 1\}^k \mid x)$ • Learning from experience and data • In what form can the training data be obtained? • What is known a priori? • Complexity of training • Complexity of prediction

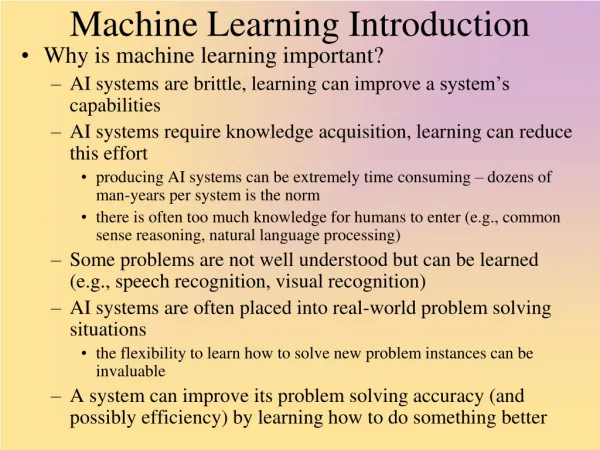

In This Course • Supervised learning • Classification • Generative methods • Nearest neighbour, Naïve Bayes • Discriminative methods • Logistic Regression • Discriminant methods • Support Vector Machines • Regression, Ranking, Feature Selection, etc. • Unsupervised learning • Semi-supervised learning • Reinforcement learning

Learning from Noisy Data • Noise and uncertainty • Unknown generative model $Y = f(X)$ • Noise in measuring input and feature extraction • Noise in labels • Nuisance variables • Missing data • Finite training set size

Probability Theory • Non-negativity and unit measure • $0 \le p(y)$, $p(\Omega) = 1$, $p(\emptyset) = 0$ • Conditional probability – $p(y \mid x)$ • $p(x, y) = p(y \mid x)\, p(x) = p(x \mid y)\, p(y)$ • Bayes’ Theorem • $p(y \mid x) = p(x \mid y)\, p(y) / p(x)$ • Marginalization • $p(x) = \int_y p(x, y)\, dy$ • Independence • $p(x_1, x_2) = p(x_1)\, p(x_2) \Leftrightarrow p(x_1 \mid x_2) = p(x_1)$ • Chris Bishop, “Pattern Recognition & Machine Learning”

The Univariate Gaussian Density • $p(x \mid \mu, \sigma) = \exp(-(x - \mu)^2 / 2\sigma^2) / (2\pi\sigma^2)^{1/2}$
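The density can be evaluated directly from its formula. The following is a minimal sketch using only the Python standard library; the function name `gaussian_pdf` and the integration range are illustrative choices, not part of the slides.

```python
import math

def gaussian_pdf(x, mu, sigma):
    """Univariate Gaussian density with mean mu and standard deviation sigma."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)

# Crude Riemann-sum check that the standard normal integrates to about 1 on [-8, 8].
step = 0.001
area = sum(gaussian_pdf(-8 + i * step, 0.0, 1.0) * step for i in range(16000))
print(gaussian_pdf(0.0, 0.0, 1.0), area)
```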

The Multivariate Gaussian Density • $p(x \mid \mu, \Sigma) = \exp(-\tfrac{1}{2}(x - \mu)^t \Sigma^{-1} (x - \mu)) / ((2\pi)^{D/2} |\Sigma|^{1/2})$

The Beta Density • $p(\theta \mid a, b) = \theta^{a-1}(1 - \theta)^{b-1}\, \Gamma(a+b) / (\Gamma(a)\Gamma(b))$

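Probability Distribution Functions • Bernoulli: Single trial with probability of success $= \theta$ • $n \in \{0, 1\}$, $\theta \in [0, 1]$ • $p(n \mid \theta) = \theta^n (1 - \theta)^{1-n}$ • Binomial: N iid Bernoulli trials with n successes • $n \in \{0, 1, \ldots, N\}$, $\theta \in [0, 1]$ • $p(n \mid N, \theta) = {}^{N}C_{n}\, \theta^n (1 - \theta)^{N-n}$ • Multinomial: N iid trials, outcome k occurs $n_k$ times • $n_k \in \{0, 1, \ldots, N\}$, $\sum_k n_k = N$, $\theta_k \in [0, 1]$, $\sum_k \theta_k = 1$ • $p(n \mid N, \theta) = N! \prod_k \theta_k^{n_k} / n_k!$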
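The three mass functions translate almost line for line into code. This is a minimal sketch with the standard library; the function names are hypothetical and chosen only for illustration.

```python
import math

def bernoulli_pmf(n, theta):
    """p(n | theta) = theta^n (1 - theta)^(1 - n) for n in {0, 1}."""
    return theta ** n * (1 - theta) ** (1 - n)

def binomial_pmf(n, N, theta):
    """Probability of n successes in N iid Bernoulli(theta) trials."""
    return math.comb(N, n) * theta ** n * (1 - theta) ** (N - n)

def multinomial_pmf(counts, thetas):
    """Probability that outcome k occurs counts[k] times in N = sum(counts) trials."""
    coeff = math.factorial(sum(counts))
    for n_k in counts:
        coeff //= math.factorial(n_k)  # each partial quotient is an exact integer
    prob = float(coeff)
    for n_k, theta_k in zip(counts, thetas):
        prob *= theta_k ** n_k
    return prob

# The binomial probabilities over n = 0..N should sum to 1.
total = sum(binomial_pmf(n, 10, 0.3) for n in range(11))
```

With two outcomes the multinomial reduces to the binomial, which gives a quick sanity check on both functions.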

A Toy Example • We don’t know whether a coin is fair or not. We are told that heads occurred n times in N coin flips. • We are asked to predict whether the next coin flip will result in a head or a tail. • Let y be a binary random variable such that y = 1 represents the event that the next coin flip will be a head and y = 0 that it will be a tail. • We should predict heads if $p(y=1 \mid n, N) > p(y=0 \mid n, N)$

The Maximum Likelihood Approach • Let $p(y=1 \mid n, N) = \theta$ and $p(y=0 \mid n, N) = 1 - \theta$ so that we should predict heads if $\theta > \tfrac{1}{2}$ • How should we estimate $\theta$? • Assuming that the observed coin flips followed a Binomial distribution, we could choose the value of $\theta$ that maximizes the likelihood of observing the data • $\theta_{ML} = \arg\max_\theta p(n \mid \theta) = \arg\max_\theta {}^{N}C_{n}\, \theta^n (1 - \theta)^{N-n}$ • $= \arg\max_\theta\; n \log(\theta) + (N - n) \log(1 - \theta)$ • $= n / N$ • We should predict heads if $n > \tfrac{1}{2} N$
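The closed form n / N can be checked against a brute-force search over the log-likelihood. The sketch below assumes example counts of 7 heads in 10 flips, which are illustrative values and not taken from the slides.

```python
import math

def log_likelihood(theta, n, N):
    """Binomial log-likelihood, dropping the constant log C(N, n)."""
    return n * math.log(theta) + (N - n) * math.log(1 - theta)

def theta_ml(n, N):
    """Closed-form maximum likelihood estimate."""
    return n / N

n, N = 7, 10  # assumed example data
grid = [i / 1000 for i in range(1, 1000)]  # candidate values of theta in (0, 1)
theta_grid = max(grid, key=lambda t: log_likelihood(t, n, N))
predict_heads = theta_ml(n, N) > 0.5
```

The grid maximizer and the closed form agree, and both lead to predicting heads because n exceeds N / 2.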

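The Maximum A Posteriori Approach • We should choose the value of $\theta$ maximizing the posterior probability of $\theta$ conditioned on the data • We assume a • Binomial likelihood: $p(n \mid \theta) = {}^{N}C_{n}\, \theta^n (1 - \theta)^{N-n}$ • Beta prior: $p(\theta \mid a, b) = \theta^{a-1}(1 - \theta)^{b-1}\, \Gamma(a+b) / (\Gamma(a)\Gamma(b))$ • $\theta_{MAP} = \arg\max_\theta p(\theta \mid n, a, b) = \arg\max_\theta p(n \mid \theta)\, p(\theta \mid a, b)$ • $= \arg\max_\theta \theta^n (1 - \theta)^{N-n}\, \theta^{a-1} (1 - \theta)^{b-1}$ • $= (n + a - 1) / (N + a + b - 2)$ as if we saw an extra a – 1 heads & b – 1 tails • We should predict heads if $n > \tfrac{1}{2}(N + b - a)$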
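A short sketch of the MAP estimate and the resulting decision rule follows. The counts and the prior settings (a = b = 1 and a = b = 5) are assumed purely for illustration, and the function names are hypothetical.

```python
def theta_map(n, N, a, b):
    """Mode of the Beta(a + n, b + N - n) posterior (assumes N + a + b > 2)."""
    return (n + a - 1) / (N + a + b - 2)

def predict_heads_map(n, N, a, b):
    """Decision rule theta_MAP > 1/2 rewritten in terms of the counts."""
    return n > 0.5 * (N + b - a)

n, N = 7, 10  # assumed example data
flat = theta_map(n, N, a=1, b=1)       # uniform prior: same as the ML estimate
skeptical = theta_map(n, N, a=5, b=5)  # prior concentrated around a fair coin
```

A uniform prior reproduces n / N, while the symmetric a = b = 5 prior pulls the estimate toward one half.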

The Bayesian Approach • We should marginalize over $\theta$ • $p(y=1 \mid n, a, b) = \int p(y=1 \mid n, \theta)\, p(\theta \mid a, b, n)\, d\theta$ • $= \int \theta\, p(\theta \mid a, b, n)\, d\theta$ • $= \int \theta\, \mathrm{Beta}(\theta \mid a + n, b + N - n)\, d\theta$ • $= (n + a) / (N + a + b)$ as if we saw an extra a heads & b tails • We should predict heads if $n > \tfrac{1}{2}(N + b - a)$ • The Bayesian and MAP prediction coincide in this case • In the very large data limit, both the Bayesian and MAP prediction coincide with the ML prediction ($n > \tfrac{1}{2} N$)
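The three estimators can be compared side by side. This sketch assumes a Beta(5, 5) prior and a coin that lands heads 70% of the time; both are illustrative choices, as are the function names.

```python
def theta_ml(n, N):
    return n / N

def theta_map(n, N, a, b):
    return (n + a - 1) / (N + a + b - 2)

def predictive_heads(n, N, a, b):
    """Bayesian predictive p(y=1 | n, N, a, b): the posterior mean of theta."""
    return (n + a) / (N + a + b)

a, b = 5, 5  # assumed prior pseudo-counts
rows = []
for N in (10, 100, 10000):
    n = (7 * N) // 10  # assumed data: 70% heads
    rows.append((N, theta_ml(n, N), theta_map(n, N, a, b), predictive_heads(n, N, a, b)))

for N, ml, mp, bayes in rows:
    print(N, round(ml, 4), round(mp, 4), round(bayes, 4))
```

As N grows the prior pseudo-counts become negligible and all three columns approach 0.7.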

Approaches to Classification • Memorization • Cannot deal with previously unseen data • Large scale annotated data acquisition cost might be very high • Rule based expert system • Dependent on the competence of the expert • Complex problems lead to a proliferation of rules, exceptions, exceptions to exceptions, etc. • Rules might not transfer to similar problems • Learning from training data and prior knowledge • Focuses on generalization to novel data

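Notation • Training Data • Set of N labeled examples of the form $(x_i, y_i)$ • Feature vector – $x \in \mathbb{R}^D$. $X = [x_1\ x_2\ \ldots\ x_N]$ • Label – $y \in \{\pm 1\}$. $y = [y_1, y_2, \ldots, y_N]^t$. $Y = \mathrm{diag}(y)$ • Example – Gender Identification: ($x_1$ = [image], $y_1 = +1$), ($x_2$ = [image], $y_2 = +1$), ($x_3$ = [image], $y_3 = +1$), ($x_4$ = [image], $y_4 = -1$)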

Binary Classification • Linear decision boundary $w^t x + b = 0$ with normal $w$ and offset $b$ • $\theta = [w; b]$

Bayes’ Decision Rule • $p(y=+1 \mid x) > p(y=-1 \mid x)$ ? y = +1 : y = -1 • $p(y=+1 \mid x) > \tfrac{1}{2}$ ? y = +1 : y = -1

Issues to Think About • Bayesian versus MAP versus ML • Should we choose just one function to explain the data? • If yes, should this be the function that explains the data the best? • What about prior knowledge? • Generative versus Discriminative • Can we learn from “positive” data alone? • Should we model the data distribution? • Are there any missing variables? • Do we just care about the final decision?

Bayesian Approach • $p(y \mid x, X, Y) = \int_f p(y, f \mid x, X, Y)\, df$ • $= \int_f p(y \mid f, x, X, Y)\, p(f \mid x, X, Y)\, df$ • $= \int_f p(y \mid f, x)\, p(f \mid X, Y)\, df$ • This integral is often intractable. • To solve it we can • Choose the distributions so that the solution is analytic (conjugate priors) • Approximate the true distribution of $p(f \mid X, Y)$ by a simpler distribution (variational methods) • Sample from $p(f \mid X, Y)$ (MCMC)
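The sampling idea can be illustrated on the coin example, where the posterior over $\theta$ is a Beta density and the exact answer $(n + a) / (N + a + b)$ is available for comparison. Note that this sketch draws independent samples directly from the known posterior with `random.betavariate`, so it is plain Monte Carlo rather than MCMC; the function name, sample count, and data values are assumptions.

```python
import random

def mc_predictive_heads(n, N, a, b, num_samples=100_000, seed=0):
    """Estimate p(y=1 | data) by averaging p(y=1 | theta) = theta over posterior samples."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        theta = rng.betavariate(a + n, b + N - n)  # sample from the Beta posterior
        total += theta
    return total / num_samples

estimate = mc_predictive_heads(n=7, N=10, a=2, b=2)
exact = (7 + 2) / (10 + 2 + 2)
print(estimate, exact)
```

When the posterior cannot be sampled directly, an MCMC method would replace the `betavariate` call while the averaging step stays the same.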

Maximum A Posteriori (MAP) • $p(y \mid x, X, Y) = \int_f p(y \mid f, x)\, p(f \mid X, Y)\, df$ • $= p(y \mid f_{MAP}, x)$ when $p(f \mid X, Y) = \delta(f - f_{MAP})$ • The more training data there is, the better $p(f \mid X, Y)$ approximates a delta function • We can make predictions using a single function, $f_{MAP}$, and our focus shifts to estimating $f_{MAP}$.

MAP & Maximum Likelihood (ML) • $f_{MAP} = \arg\max_f p(f \mid X, Y)$ • $= \arg\max_f p(X, Y \mid f)\, p(f) / p(X, Y)$ • $= \arg\max_f p(X, Y \mid f)\, p(f)$ • $f_{ML} = \arg\max_f p(X, Y \mid f)$ (Maximum Likelihood) • The ML estimate is a good substitute for the MAP estimate if • There is a lot of training data so that the likelihood dominates the prior • $p(X, Y \mid f) \gg p(f)$ • Or if there is no prior knowledge so that $p(f)$ is uniform (improper)

IID Data • $f_{ML} = \arg\max_f p(X, Y \mid f)$ • $= \arg\max_f \prod_i p(x_i, y_i \mid f)$ • The independent and identically distributed assumption holds only if we know everything about the joint distribution of the features and labels. • In particular, $p(X, Y) \ne \prod_i p(x_i, y_i)$

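Generative Methods • $\theta_{MAP} = \arg\max_\theta p(\theta) \prod_i p(x_i, y_i \mid \theta)$ • $= \arg\max_\theta p(\theta_x)\, p(\theta_y) \prod_i p(x_i, y_i \mid \theta)$ • $= \arg\max_\theta p(\theta_x)\, p(\theta_y) \prod_i p(x_i \mid y_i, \theta)\, p(y_i \mid \theta)$ • $= \arg\max_\theta p(\theta_x)\, p(\theta_y) \prod_i p(x_i \mid y_i, \theta_x)\, p(y_i \mid \theta_y)$ • $= [\arg\max_{\theta_x} p(\theta_x) \prod_i p(x_i \mid y_i, \theta_x)] * [\arg\max_{\theta_y} p(\theta_y) \prod_i p(y_i \mid \theta_y)]$ • $\theta_x$ and $\theta_y$ can be solved for independently • The parameters of each class decouple and can be solved for independently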

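Generative Methods – Naïve Bayes • $\theta_{MAP} = [\arg\max_{\theta_x} p(\theta_x) \prod_i p(x_i \mid y_i, \theta_x)] * [\arg\max_{\theta_y} p(\theta_y) \prod_i p(y_i \mid \theta_y)]$ • Naïve Bayes assumptions • Independent Gaussian features • $p(x_i \mid y_i, \theta_x) = \prod_j p(x_{ij} \mid y_i, \theta_x)$ • $p(x_{ij} \mid y_i = \pm 1, \theta_x) = N(x_{ij} \mid \mu_j^{\pm}, \sigma_j)$ • Improper uniform priors (no prior knowledge) • $p(\theta_x) = p(\theta_y) = \text{const}$ • Bernoulli labels • $p(y_i = +1 \mid \theta_y) = \theta$, $p(y_i = -1 \mid \theta_y) = 1 - \theta$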

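Generative Methods – Naïve Bayes • $\theta_{ML} = [\arg\max_{\mu, \sigma} \prod_i \prod_j N(x_{ij} \mid \mu_j^{\pm}, \sigma_j)] * [\arg\max_\theta \prod_i \theta^{(1+y_i)/2} (1 - \theta)^{(1-y_i)/2}]$ • Estimating $\theta_{ML}$ • $\theta_{ML} = \arg\max_\theta \prod_i \theta^{(1+y_i)/2} (1 - \theta)^{(1-y_i)/2}$ • $= \arg\max_\theta (N + \sum_i y_i) \log(\theta) + (N - \sum_i y_i) \log(1 - \theta)$ • $= N_+ / N$ (by differentiating and setting to zero) • Estimating $\mu_{ML}$, $\sigma_{ML}$ • $\mu^{\pm}_{ML} = (1 / N_{\pm}) \sum_{y_i = \pm 1} x_i$ • $\sigma^2_{j,ML} = [\sum_{y_i = +1} (x_{ij} - \mu^{+}_{j,ML})^2 + \sum_{y_i = -1} (x_{ij} - \mu^{-}_{j,ML})^2] / N$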
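These estimates amount to counting and averaging. The sketch below implements them with plain Python lists; the function name `fit_naive_bayes` and the four-point training set are hypothetical and serve only as an illustration.

```python
def fit_naive_bayes(X, y):
    """ML estimates for Gaussian Naive Bayes with a per-feature variance shared by both classes.

    X is a list of feature lists, y a list of labels in {+1, -1}.
    Returns (theta, mu_pos, mu_neg, var).
    """
    N, D = len(X), len(X[0])
    pos = [x for x, label in zip(X, y) if label == +1]
    neg = [x for x, label in zip(X, y) if label == -1]
    theta = len(pos) / N  # N_+ / N
    mu_pos = [sum(x[j] for x in pos) / len(pos) for j in range(D)]
    mu_neg = [sum(x[j] for x in neg) / len(neg) for j in range(D)]
    # Squared deviations from each point's own class mean, pooled over both classes.
    var = [
        (sum((x[j] - mu_pos[j]) ** 2 for x in pos)
         + sum((x[j] - mu_neg[j]) ** 2 for x in neg)) / N
        for j in range(D)
    ]
    return theta, mu_pos, mu_neg, var

X = [[1.0, 2.0], [3.0, 4.0], [-1.0, 0.0], [-3.0, -2.0]]  # assumed toy data
y = [+1, +1, -1, -1]
theta, mu_pos, mu_neg, var = fit_naive_bayes(X, y)
```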

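Naïve Bayes – Prediction • $p(y=+1 \mid x) = p(x \mid y=+1)\, p(y=+1) / p(x)$ • $= 1 / (1 + \exp(\log(p(y=-1) / p(y=+1)) + \log(p(x \mid y=-1) / p(x \mid y=+1))))$ • $= 1 / (1 + \exp(\log(1/\theta - 1) - \tfrac{1}{2} \mu_-^t \Sigma^{-1} \mu_- + \tfrac{1}{2} \mu_+^t \Sigma^{-1} \mu_+ - (\mu_+ - \mu_-)^t \Sigma^{-1} x))$ • $= 1 / (1 + \exp(-b - w^t x))$ (Logistic Regression), with $w = \Sigma^{-1}(\mu_+ - \mu_-)$, $b = \log(\theta / (1 - \theta)) + \tfrac{1}{2} \mu_-^t \Sigma^{-1} \mu_- - \tfrac{1}{2} \mu_+^t \Sigma^{-1} \mu_+$ and $\Sigma = \mathrm{diag}(\sigma_j^2)$ • $p(y=-1 \mid x) = \exp(-b - w^t x) / (1 + \exp(-b - w^t x))$ • $\log(p(y=-1 \mid x) / p(y=+1 \mid x)) = -b - w^t x$ • $y = \mathrm{sign}(b + w^t x)$ • The decision boundary will be linear!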
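The reduction to a linear classifier can be verified numerically. The sketch below builds w and b from Naïve Bayes parameters, assuming a diagonal covariance with a per-feature variance shared by both classes, and evaluates the logistic posterior. The parameter values and function names are assumed for illustration.

```python
import math

def naive_bayes_to_linear(theta, mu_pos, mu_neg, var):
    """Return (w, b) such that p(y=+1 | x) = 1 / (1 + exp(-(b + w.x)))."""
    w = [(mp - mn) / v for mp, mn, v in zip(mu_pos, mu_neg, var)]
    b = (math.log(theta / (1 - theta))
         + 0.5 * sum(mn * mn / v for mn, v in zip(mu_neg, var))
         - 0.5 * sum(mp * mp / v for mp, v in zip(mu_pos, var)))
    return w, b

def posterior_pos(x, w, b):
    """Logistic form of p(y=+1 | x)."""
    score = b + sum(wj * xj for wj, xj in zip(w, x))
    return 1.0 / (1.0 + math.exp(-score))

# Assumed parameters: equal class priors, unit variances.
w, b = naive_bayes_to_linear(0.5, [2.0, 3.0], [-2.0, -1.0], [1.0, 1.0])
p_mid = posterior_pos([0.0, 1.0], w, b)  # a point equidistant from both class means
```

A point equidistant from the two class means with equal priors gets posterior one half, which matches the decision boundary $b + w^t x = 0$.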

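Discriminative Methods • $\theta_{MAP} = \arg\max_\theta p(\theta) \prod_i p(x_i, y_i \mid \theta)$ • We assume that • $p(\theta) = p(w)\, p(w')$ • $p(x_i, y_i \mid \theta) = p(y_i \mid x_i, \theta)\, p(x_i \mid \theta)$ • $= p(y_i \mid x_i, w)\, p(x_i \mid w')$ • $\theta_{MAP} = [\arg\max_w p(w) \prod_i p(y_i \mid x_i, w)] * [\arg\max_{w'} p(w') \prod_i p(x_i \mid w')]$ • It turns out that $w'$ plays no role in determining the posterior distribution; only $w$ matters • $p(y \mid x, X, Y) = p(y \mid x, \theta_{MAP}) = p(y \mid x, w_{MAP})$ • where $w_{MAP} = \arg\max_w p(w) \prod_i p(y_i \mid x_i, w)$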

Disc. Methods – Logistic Regression • $\theta_{MAP} = \arg\max_{w,b} p(w) \prod_i p(y_i \mid x_i, w)$ • Regularized Logistic Regression • Gaussian prior – $p(w) \propto \exp(-\tfrac{1}{2} w^t w)$ • Logistic likelihood – $p(y_i \mid x_i, w) = 1 / (1 + \exp(-y_i (b + w^t x_i)))$

Regularized Logistic Regression • $\theta_{MAP} = \arg\max_{w,b} p(w) \prod_i p(y_i \mid x_i, w)$ • $= \arg\min_{w,b} \tfrac{1}{2} w^t w + \sum_i \log(1 + \exp(-y_i (b + w^t x_i)))$ • Bad news: No closed form solution for w and b • Good news: We have to minimize a convex function • We can obtain the global optimum • The function is smooth • Tom Minka, “A comparison of numerical optimizers for LR” (Matlab code) • Keerthi et al., “A Fast Dual Algorithm for Kernel Logistic Regression”, ML 05 • Andrew and Gao, “OWL-QN” ICML 07 • Krishnapuram et al., “SMLR” PAMI 05
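Because the objective is smooth and convex, even a very simple optimizer reaches the global minimum. The sketch below uses plain batch gradient descent, which is a stand-in chosen for brevity and not one of the optimizers cited on the slide; the step size, iteration count, and toy data are assumptions.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def objective(w, b, X, y):
    """0.5 * w.w + sum_i log(1 + exp(-y_i (b + w.x_i)))."""
    reg = 0.5 * dot(w, w)
    loss = sum(math.log1p(math.exp(-yi * (b + dot(w, xi)))) for xi, yi in zip(X, y))
    return reg + loss

def fit_logistic(X, y, lr=0.05, steps=500):
    """Minimize the regularized objective by batch gradient descent."""
    D = len(X[0])
    w, b = [0.0] * D, 0.0
    for _ in range(steps):
        grad_w = list(w)  # gradient of the regularizer 0.5 * w.w
        grad_b = 0.0      # the bias is not regularized
        for xi, yi in zip(X, y):
            margin = yi * (b + dot(w, xi))
            coeff = -yi / (1.0 + math.exp(margin))  # derivative of log(1 + exp(-y z)) w.r.t. z
            for j in range(D):
                grad_w[j] += coeff * xi[j]
            grad_b += coeff
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

X = [[1.0, 2.0], [3.0, 4.0], [-1.0, 0.0], [-3.0, -2.0]]  # assumed toy data
y = [+1, +1, -1, -1]
w, b = fit_logistic(X, y)
predictions = [1 if b + dot(w, xi) > 0 else -1 for xi in X]
```

For larger problems a quasi-Newton or dual method such as those referenced above would typically converge in far fewer iterations.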

Regularized Logistic Regression • Zhu & Hastie, “KLR and the Import Vector Machine”, NIPS 01

Convex Functions • Convex f: $f(\lambda x_1 + (1 - \lambda) x_2) \le \lambda f(x_1) + (1 - \lambda) f(x_2)$ • The Hessian $\nabla^2 f$ is always positive semi-definite • The tangent is always a lower bound to f
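Both properties can be spot-checked numerically for the logistic loss $f(z) = \log(1 + \exp(-z))$ used in regularized logistic regression. This is a randomized sanity check under assumed sampling ranges, not a proof of convexity.

```python
import math
import random

def f(z):
    """Logistic loss as a function of the margin z."""
    return math.log1p(math.exp(-z))

def df(z):
    """Derivative of the logistic loss."""
    return -1.0 / (1.0 + math.exp(z))

rng = random.Random(0)
jensen_ok = True
tangent_ok = True
for _ in range(1000):
    z1, z2, lam = rng.uniform(-5, 5), rng.uniform(-5, 5), rng.random()
    # Definition of convexity: the chord lies above the function.
    jensen_ok &= f(lam * z1 + (1 - lam) * z2) <= lam * f(z1) + (1 - lam) * f(z2) + 1e-12
    # First-order condition: the tangent at z1 is a lower bound everywhere.
    tangent_ok &= f(z2) >= f(z1) + df(z1) * (z2 - z1) - 1e-12
```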