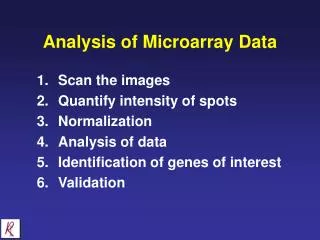

Analysis of Microarray Data

This document provides an in-depth analysis of microarray data, focusing on key statistics and computational methods. It covers foundational statistical concepts such as dot products, means, and standard deviations, and explores gene expression through red/green intensity measurements obtained via gene chips. The necessity of normalization in data analysis is debated, highlighting issues in gene expression comparisons. Advanced techniques such as Pearson correlation, hierarchical clustering, and K-means clustering are discussed, providing a robust framework for analyzing complex datasets effectively.


Presentation Transcript

Analysis of Microarray Data
Rhys Price Jones, Anne Haake
Bioinformatics Computing II
rpjavp@rit.edu

Some Basic Statistics
• dot product
• mean
• standard deviation
• log base 2
• etc.
• util.ss
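The slides do not reproduce util.ss, so the following is only a sketch of what such helpers might look like. The names match the ones used on later slides, but the bodies are assumptions; in particular, the standard deviation here is the population form (divide by n), which is the form consistent with the correlation values shown later.

```scheme
; Hypothetical stand-ins for the helpers in util.ss (not the original file).

(define dotproduct ; sum of pairwise products
  (lambda (xs ys)
    (apply + (map * xs ys))))

(define mean ; arithmetic mean of a non-empty list
  (lambda (l)
    (/ (apply + l) (length l))))

(define standarddeviation ; population form: divides by n, not n - 1
  (lambda (l)
    (let ((m (mean l)))
      (sqrt (mean (map (lambda (x) (* (- x m) (- x m))) l))))))

(define log2 ; log base 2 via natural logs
  (lambda (x)
    (/ (log x) (log 2))))

(define round2 ; round to two decimal places
  (lambda (x)
    (/ (round (* 100 x)) 100.0)))
```

For example, `(dotproduct '(1 2 3) '(4 5 6))` evaluates to 32, and `(round2 (log2 12))` gives 3.58, the value that appears in the log table later on.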

Gene chips
• Spots representing thousands of genes
• Two populations of cDNA
• different conditions to be compared
• One colored with Cy5 (red)
• One colored with Cy3 (green)
• Mixed, incubated with the chip
• Figures from Campbell-Heyer Chapter 4

Red/Green Intensity measurements

    (define redgreens
      '((2345 2467) (3589 2158) (4109 1469) (1500 3589)
        (1246 1258) (1937 2104) (2561 1562) (2962 3012)
        (3585 1209) (2796 1005) (2170 4245) (1896 2996)
        (1023 3354) (1698 2896)))

• Shows (red green) intensities for 14 (out of 6200!) genes

Should we normalize?
• Average of reds is 2386.9
• Average of greens is 2380.3
• What does John Quackenbush say? (page 420)
• Calculate standard deviations.
• Return to this issue
• For now, no normalization
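The averages quoted above, and the standard deviations the slide asks for, can be computed along these lines. This is a sketch: `mean` and `standarddeviation` are assumed versions of the util.ss helpers (population standard deviation), and `reds` and `greens` are names introduced here for illustration.

```scheme
(define redgreens
  '((2345 2467) (3589 2158) (4109 1469) (1500 3589)
    (1246 1258) (1937 2104) (2561 1562) (2962 3012)
    (3585 1209) (2796 1005) (2170 4245) (1896 2996)
    (1023 3354) (1698 2896)))

(define mean ; assumed helper: arithmetic mean
  (lambda (l)
    (/ (apply + l) (length l))))

(define standarddeviation ; assumed helper: population form (divide by n)
  (lambda (l)
    (let ((m (mean l)))
      (sqrt (mean (map (lambda (x) (* (- x m) (- x m))) l))))))

(define reds (map car redgreens))    ; first entry of each pair
(define greens (map cadr redgreens)) ; second entry of each pair

(exact->inexact (mean reds))   ; about 2386.9, as on the slide
(exact->inexact (mean greens)) ; about 2380.3, as on the slide
(standarddeviation reds)       ; spread of the red channel
(standarddeviation greens)     ; spread of the green channel
```

The two channel means differ by less than 0.3%, which is the observation behind "for now, no normalization".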

Ratios of red values to green

    (define redgreenratios
      (map (lambda (x) (round2 (/ (car x) (cadr x))))
           redgreens))

• Produces (0.95 1.66 2.8 0.42 0.99 0.92 1.64 0.98 2.97 2.78 0.51 0.63 0.31 0.59)
• Which genes are expressed more in red than green?
• Should these values be normalized?
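One way to answer "which genes are expressed more in red than green?" is to list the positions whose ratio exceeds 1. The sketch below repeats the slide's definitions so that it runs on its own; `round2` is an assumed version of the util.ss helper, and `redder` is a name introduced here.

```scheme
(define redgreens
  '((2345 2467) (3589 2158) (4109 1469) (1500 3589)
    (1246 1258) (1937 2104) (2561 1562) (2962 3012)
    (3585 1209) (2796 1005) (2170 4245) (1896 2996)
    (1023 3354) (1698 2896)))

(define round2 ; assumed helper: round to two decimal places
  (lambda (x)
    (/ (round (* 100 x)) 100.0)))

(define redgreenratios
  (map (lambda (x) (round2 (/ (car x) (cadr x))))
       redgreens))

(define redder ; 1-based positions of genes whose red/green ratio exceeds 1
  (let loop ((rs redgreenratios) (i 1))
    (cond ((null? rs) '())
          ((> (car rs) 1) (cons i (loop (cdr rs) (+ i 1))))
          (else (loop (cdr rs) (+ i 1))))))

redder ; evaluates to (2 3 7 9 10)
```

Five of the 14 genes come out redder than green; several others (0.95, 0.99, 0.98) sit so close to 1 that the answer depends on whether the channels are normalized first.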

Yet another Color scheme
• (0.95 1.66 2.8 0.42 0.99 0.92 1.64 0.98 2.97 2.78 0.51 0.63 0.31 0.59)
• Color key labels: Highly expressed, Neutral, Less expressed
• Color key thresholds: >2.0, >1.3, close to 1.0, >0.5, <0.5
• Seems arbitrary?
• Log scale??
• Why oh why did they re-use red and green?
• Clustering? Meaning?
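The "Log scale??" question can be explored with a short sketch. On a log base 2 scale a ratio of 2.0 becomes +1 and a ratio of 0.5 becomes -1, so over- and under-expression are symmetric around 0. `log2` and `round2` are assumed versions of the util.ss helpers, and `logratios` is a name introduced here.

```scheme
(define log2 ; assumed helper: log base 2 via natural logs
  (lambda (x)
    (/ (log x) (log 2))))

(define round2 ; assumed helper: round to two decimal places
  (lambda (x)
    (/ (round (* 100 x)) 100.0)))

; Ratios copied from the slide.
(define redgreenratios
  '(0.95 1.66 2.8 0.42 0.99 0.92 1.64 0.98 2.97 2.78 0.51 0.63 0.31 0.59))

; Positive entries mean more red than green, negative entries the reverse.
(define logratios
  (map (lambda (r) (round2 (log2 r))) redgreenratios))

(log2 2.0) ; a two-fold increase maps to about +1
(log2 0.5) ; a two-fold decrease maps to about -1
```

With log ratios, thresholds such as >2.0 and <0.5 become the symmetric pair >1 and <-1, which makes the color key look less arbitrary.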

Larger experiment
• 12 Genes
• Expression values at 0, 2, 4, 6, 8 and 10 hours

Table 4.2 of Campbell/Heyer

    Name  0 hrs  2 hrs  4 hrs  6 hrs  8 hrs  10 hrs
    C     1      8      12     16     12     8
    D     1      3      4      4      3      2
    E     1      4      8      8      8      8
    F     1      1      1      .25    .25    .1
    G     1      2      3      4      3      2
    H     1      .5     .33    .25    .33    .5
    I     1      4      8      4      1      .5
    J     1      2      1      2      1      2
    K     1      1      1      1      3      3
    L     1      2      3      4      3      2
    M     1      .33    .25    .25    .33    .5
    N     1      .125   .0833  .0625  .0833  .125

• Normalized how?

Take logs

    Name  0 hrs  2 hrs  4 hrs  6 hrs  8 hrs  10 hrs
    C     0      3.0    3.58   4.0    3.58   3.0
    D     0      1.58   2.0    2.0    1.58   1.0
    E     0      2.0    3.0    3.0    3.0    3.0
    F     0      0      0      -2.0   -2.0   -3.32
    G     0      1.0    1.58   2.0    1.58   1.0
    H     0      -1.0   -1.6   -2.0   -1.6   -1.0
    I     0      2.0    3.0    2.0    0      -1.0
    J     0      1.0    0      1.0    0      1.0
    K     0      0      0      0      1.58   1.58
    L     0      1.0    1.58   2.0    1.58   1.0
    M     0      -1.6   -2.0   -2.0   -1.6   -1.0
    N     0      -3.0   -3.59  -4.0   -3.59  -3.0

• Compare
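The log table can be derived from Table 4.2 roughly as follows. `logtable42` is the name a later slide uses for the result; `table42`, and the bodies of `log2` and `round2`, are assumptions made for this sketch.

```scheme
(define table42 ; Table 4.2: gene name followed by six expression values
  '((C 1 8 12 16 12 8)
    (D 1 3 4 4 3 2)
    (E 1 4 8 8 8 8)
    (F 1 1 1 0.25 0.25 0.1)
    (G 1 2 3 4 3 2)
    (H 1 0.5 0.33 0.25 0.33 0.5)
    (I 1 4 8 4 1 0.5)
    (J 1 2 1 2 1 2)
    (K 1 1 1 1 3 3)
    (L 1 2 3 4 3 2)
    (M 1 0.33 0.25 0.25 0.33 0.5)
    (N 1 0.125 0.0833 0.0625 0.0833 0.125)))

(define log2 ; assumed helper: log base 2 via natural logs
  (lambda (x)
    (/ (log x) (log 2))))

(define round2 ; assumed helper: round to two decimal places
  (lambda (x)
    (/ (round (* 100 x)) 100.0)))

(define logtable42 ; keep each name, replace each value by its rounded log2
  (map (lambda (row)
         (cons (car row)
               (map (lambda (x) (round2 (log2 x))) (cdr row))))
       table42))

(assq 'C logtable42) ; the log-transformed row for gene C
```

Every row starts at 0 because each gene's 0-hour value is 1, and a value of 1/x maps to the negative of the value for x, which is why rows such as G and H become mirror images.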

How Similar are two Rows?
• How similar are the expressions of two genes?
• First we’ll normalize each row

    (define normalize ; subtract mean and divide by sd
      (lambda (l)
        (let ((m (mean l))
              (s (standarddeviation l)))
          (map (lambda (x) (/ (- x m) s)) l))))

• What are the new mean and standard deviation?
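The closing question can be checked numerically: after normalization a row should have mean 0 and standard deviation 1. The sketch below assumes population-form versions of `mean` and `standarddeviation`, since util.ss is not shown; `g` is a name introduced here for row G.

```scheme
(define mean ; assumed helper: arithmetic mean
  (lambda (l)
    (/ (apply + l) (length l))))

(define standarddeviation ; assumed helper: population form (divide by n)
  (lambda (l)
    (let ((m (mean l)))
      (sqrt (mean (map (lambda (x) (* (- x m) (- x m))) l))))))

(define normalize ; subtract mean and divide by sd (from the slide)
  (lambda (l)
    (let ((m (mean l))
          (s (standarddeviation l)))
      (map (lambda (x) (/ (- x m) s)) l))))

(define g (normalize '(1 2 3 4 3 2))) ; row G, normalized

(mean g)              ; 0, up to floating-point rounding
(standarddeviation g) ; 1, up to floating-point rounding
```

Note that `normalize` divides by the standard deviation, so it is undefined for a row whose values are all equal.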

How Similar are two Rows?
• Calculate the Pearson Correlation between pairs of rows

    (define pc ; pearson correlation
      (lambda (xs ys)
        (/ (dotproduct (normalize xs) (normalize ys))
           (length xs))))

    > (pc '(1 2 3 4 3 2)   ; row G
          '(1 2 3 4 3 2))  ; row L
    1.0
    > (pc '(1 2 3 4 3 2)   ; row G
          '(1 3 4 4 3 2))  ; row D
    0.8971499589146109
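Written out as a formula, the `pc` procedure computes the following, where n is the row length. The symbols are introduced here for illustration, and the standard deviations are assumed to be the population form (dividing by n), which is what makes a row correlate with itself at exactly 1.0 as in the transcript above.

```latex
r(x, y) = \frac{1}{n} \sum_{i=1}^{n}
          \frac{x_i - \bar{x}}{s_x} \cdot \frac{y_i - \bar{y}}{s_y},
\qquad
\bar{x} = \frac{1}{n} \sum_{i=1}^{n} x_i,
\qquad
s_x = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (x_i - \bar{x})^2}
```

The sum is the dot product of the two normalized rows, and the factor 1/n is the division by `(length xs)`.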

Some other pairs

    Name  0 hrs  2 hrs  4 hrs  6 hrs  8 hrs  10 hrs
    C     1      8      12     16     12     8
    D     1      3      4      4      3      2
    E     1      4      8      8      8      8
    F     1      1      1      .25    .25    .1
    G     1      2      3      4      3      2
    H     1      .5     .33    .25    .33    .5
    I     1      4      8      4      1      .5
    J     1      2      1      2      1      2
    K     1      1      1      1      3      3
    L     1      2      3      4      3      2
    M     1      .33    .25    .25    .33    .5
    N     1      .125   .0833  .0625  .0833  .125

    > (pc '(1 3 4 4 3 2)            ; row D
          '(1 .33 .25 .25 .33 .5))  ; row M
    -0.9260278787295065
    > (pc '(1 2 3 4 3 2)            ; row G
          '(1 .5 .33 .25 .33 .5))   ; row H
    -0.9090853650855358

Correlation is sensitive to relative magnitudes
• pc(G,L) = 1 -- identically expressed genes
• pc(G,D) = .897 -- similarly expressed genes
• pc(D,M) = -.926 -- reciprocally expressed
• pc(G,H) = -.909 -- also reciprocally expressed
• What happens if, instead of using the expression data, we use the log transforms?
• pc(G,L) = 1.0
• pc(G,D) = 0.939
• pc(D,M) = -1.0
• pc(G,H) = -1.0
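The comparison between raw and log-transformed correlations can be reproduced with a sketch like the one below. `normalize` and `pc` are as on the earlier slides; the other helpers are assumed versions of util.ss (population standard deviation), and `logs`, `g`, `d` and `h` are names introduced here.

```scheme
(define dotproduct (lambda (xs ys) (apply + (map * xs ys))))
(define mean (lambda (l) (/ (apply + l) (length l))))
(define standarddeviation ; assumed: population form (divide by n)
  (lambda (l)
    (let ((m (mean l)))
      (sqrt (mean (map (lambda (x) (* (- x m) (- x m))) l))))))
(define log2 (lambda (x) (/ (log x) (log 2))))

(define normalize ; subtract mean and divide by sd
  (lambda (l)
    (let ((m (mean l))
          (s (standarddeviation l)))
      (map (lambda (x) (/ (- x m) s)) l))))

(define pc ; pearson correlation
  (lambda (xs ys)
    (/ (dotproduct (normalize xs) (normalize ys))
       (length xs))))

(define logs ; log2 of every entry in a row
  (lambda (row) (map log2 row)))

(define g '(1 2 3 4 3 2))            ; row G
(define d '(1 3 4 4 3 2))            ; row D
(define h '(1 0.5 0.33 0.25 0.33 0.5)) ; row H

(pc g d)               ; raw data: about 0.897
(pc g h)               ; raw data: about -0.909
(pc (logs g) (logs h)) ; log data: very close to -1
```

Rows G and H are approximately reciprocal (h is roughly 1/g), so their logs are approximately negatives of each other and the correlation approaches -1. On the raw scale the same relationship is not linear, which is why the raw correlation is weaker.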

Hierarchical Clustering
• Repeat
    • Replace the two closest objects by their combination
• Until only one object remains

What are the objects?

    (define objects
      (map (lambda (x)
             (cons (symbol->string (car x)) (cdr x)))
           logtable42))

• Initially, the objects are the genes with the log transformed expression levels
• Typical object
• ("E" 0 2.0 3.0 3.0 3.0 3.0)

Combining objects

    (define combine ; merge two objects into one
      (lambda (xs ys)
        (cons (string-append (car xs) (car ys))
              (map (lambda (x y) (/ (+ x y) 2.0))
                   (cdr xs) (cdr ys)))))

• combine names
• average the entries
• Typical combined pair:
• ("EG" 0 1.5 2.29 2.5 2.29 2.0)
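Putting the repeat/replace/until loop together with `combine` gives something like the sketch below. The slides do not say how "closest" is measured, so Euclidean distance is an assumption here (the Pearson correlation could be substituted); `distance`, `closest-pair`, `without` and `cluster` are names introduced for illustration, and only four of the twelve objects are used to keep the example small.

```scheme
(define combine ; from the slide: join names, average entries
  (lambda (xs ys)
    (cons (string-append (car xs) (car ys))
          (map (lambda (x y) (/ (+ x y) 2.0)) (cdr xs) (cdr ys)))))

(define distance ; assumed measure: Euclidean distance between value lists
  (lambda (xs ys)
    (sqrt (apply + (map (lambda (x y) (* (- x y) (- x y)))
                        (cdr xs) (cdr ys))))))

(define closest-pair ; the two objects with the smallest distance
  (lambda (objs)
    (let outer ((rest objs) (best #f) (best-d #f))
      (if (null? rest)
          best
          (let inner ((others (cdr rest)) (best best) (best-d best-d))
            (if (null? others)
                (outer (cdr rest) best best-d)
                (let ((d (distance (car rest) (car others))))
                  (if (or (not best-d) (< d best-d))
                      (inner (cdr others) (list (car rest) (car others)) d)
                      (inner (cdr others) best best-d)))))))))

(define without ; objs with the object o (compared by identity) removed
  (lambda (o objs)
    (cond ((null? objs) '())
          ((eq? o (car objs)) (cdr objs))
          (else (cons (car objs) (without o (cdr objs)))))))

(define cluster ; repeat until only one object remains
  (lambda (objs)
    (if (null? (cdr objs))
        (car objs)
        (let* ((pair (closest-pair objs))
               (a (car pair))
               (b (cadr pair)))
          (cluster (cons (combine a b)
                         (without a (without b objs))))))))

(define objects ; four log-transformed genes from the table
  '(("E" 0 2.0 3.0 3.0 3.0 3.0)
    ("G" 0 1.0 1.58 2.0 1.58 1.0)
    ("L" 0 1.0 1.58 2.0 1.58 1.0)
    ("H" 0 -1.0 -1.6 -2.0 -1.6 -1.0)))

(car (cluster objects)) ; "GLEH": G and L merge first, then E, then H
```

The name of the final object records the merge order, which is a compact (if lossy) stand-in for the dendrogram. Note that `combine` gives a merged object and a single gene equal weight when averaging, so larger clusters do not count for more.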

Manual Hierarchical Clustering
• Let’s go to emacs

K-means Clustering -- Lloyd’s Algorithm

    Partition data into k clusters
    REPEAT
        FOR each datapoint {
            Calculate its distance to the centroid of each cluster
            IF this is minimal for its own cluster
                Leave the datapoint in its current cluster
            ELSE
                Place it in its closest cluster
        }
    UNTIL no datapoint is moved

• Goal: minimize sum of distances from datapoints to centroids
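The pseudocode above might be sketched in Scheme as follows. Several choices are assumptions rather than anything stated on the slide: the initial partition is passed in as a list of cluster indices 0..k-1 with no cluster empty, distance is squared Euclidean, and centroids are recomputed after every single move. All procedure names are introduced for illustration.

```scheme
(define sqdist ; squared Euclidean distance between two points
  (lambda (p q)
    (apply + (map (lambda (a b) (* (- a b) (- a b))) p q))))

(define centroid ; coordinate-wise mean of a non-empty list of points
  (lambda (points)
    (map (lambda (s) (/ s (length points)))
         (apply map + points))))

(define members ; the points currently labelled with cluster c
  (lambda (c points labels)
    (cond ((null? points) '())
          ((= c (car labels))
           (cons (car points) (members c (cdr points) (cdr labels))))
          (else (members c (cdr points) (cdr labels))))))

(define indices ; the list (0 1 ... k-1)
  (lambda (k)
    (let loop ((i (- k 1)) (acc '()))
      (if (< i 0) acc (loop (- i 1) (cons i acc))))))

(define closest ; index of the nearest centroid (lowest index wins ties)
  (lambda (p centroids)
    (let loop ((cs (cdr centroids)) (i 1) (best 0)
               (best-d (sqdist p (car centroids))))
      (cond ((null? cs) best)
            ((< (sqdist p (car cs)) best-d)
             (loop (cdr cs) (+ i 1) i (sqdist p (car cs))))
            (else (loop (cdr cs) (+ i 1) best best-d))))))

(define set-nth ; copy of lst with element i replaced by v
  (lambda (lst i v)
    (if (= i 0)
        (cons v (cdr lst))
        (cons (car lst) (set-nth (cdr lst) (- i 1) v)))))

(define pass ; the FOR loop: visit every datapoint once
  (lambda (points labels k)
    (let loop ((i 0) (labels labels))
      (if (= i (length points))
          labels
          (let* ((p (list-ref points i))
                 (own (list-ref labels i))
                 (centroids (map (lambda (c) (centroid (members c points labels)))
                                 (indices k)))
                 (best (closest p centroids)))
            (if (<= (sqdist p (list-ref centroids own))
                    (sqdist p (list-ref centroids best)))
                (loop (+ i 1) labels)                        ; leave it
                (loop (+ i 1) (set-nth labels i best)))))))) ; move it

(define kmeans ; the REPEAT loop: stop when a pass moves nothing
  (lambda (points labels k)
    (let ((new-labels (pass points labels k)))
      (if (equal? new-labels labels)
          labels
          (kmeans points new-labels k)))))

(define points '((1 1) (1 2) (8 8) (9 8) (2 1) (8 9)))
(kmeans points '(0 1 0 1 0 1) 2) ; evaluates to (0 0 1 1 0 1)
```

Because a point stays put whenever its own centroid is at least as close as any other, the last remaining member of a cluster (which sits exactly on its centroid) never moves, so no cluster empties under this one-at-a-time scheme.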

Analysis of k-means clustering
• There are always exactly k clusters
• No cluster is empty (why?)
• The clusters are not hierarchical
• The clusters do not overlap
• Run time with n datapoints:
    • Partitioning O(n)
    • FOR loop is O(nk)
    • REPEAT loop is ???
    • Kanungo et al.

    Partition data into k clusters
    REPEAT
        FOR each datapoint {
            Calculate its distance to the centroid of each cluster
            IF this is minimal for its own cluster
                Leave the datapoint in its current cluster
            ELSE
                Place it in its closest cluster
        }
    UNTIL no datapoint is moved

Pro and Con
• Pro
    • With small k, may be faster than hierarchical
    • Clusters may be “tighter”
• Con
    • Sensitive to initial choice of k
    • Sensitive to initial partition
    • May converge to local, rather than global minimum
    • Not clear how good resulting clusters are

Other Methods for Clustering
• Self Organizing Maps
• SWARM technology
• SOM/SWARM hybrid