Automatic Indexing (Term Selection)

Automatic Text Processing by G. Salton, Chap 9, Addison-Wesley, 1989.


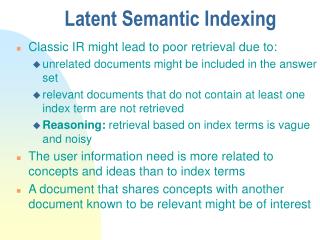

Automatic Indexing
• Indexing: assign identifiers (index terms) to text documents.
• Identifiers:
  • single-term vs. term phrase
  • controlled vs. uncontrolled vocabularies (instruction manuals, terminological schedules, …)
  • objective vs. nonobjective text identifiers (cataloging rules define, e.g., author names, publisher names, dates of publication, …)

Two Issues
• Issue 1: indexing exhaustivity
  • exhaustive: assign a large number of terms
  • nonexhaustive
• Issue 2: term specificity
  • broad terms (generic): cannot distinguish relevant from nonrelevant documents
  • narrow terms (specific): retrieve relatively fewer documents, but most of them are relevant

Term-Frequency Consideration
• Function words
  • for example, "and", "or", "of", "but", …
  • the frequencies of these words are high in all texts
• Content words
  • words that actually relate to document content
  • varying frequencies in the different texts of a collection
  • indicate term importance for content

A Frequency-Based Indexing Method
• Eliminate common function words from the document texts by consulting a special dictionary, or stop list, containing a list of high-frequency function words.
• Compute the term frequency $tf_{ij}$ for all remaining terms $T_j$ in each document $D_i$, specifying the number of occurrences of $T_j$ in $D_i$.
• Choose a threshold frequency T, and assign to each document $D_i$ all terms $T_j$ for which $tf_{ij} > T$.
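
The three steps above can be sketched in a few lines of Python. This is only an illustration: the stop list, the whitespace tokenizer, and the function name `index_terms` are assumptions made for the example, not part of the original method description.

```python
from collections import Counter

# Hypothetical stop list; a realistic one would hold a few hundred function words.
STOP_LIST = {"and", "or", "of", "but", "the", "a", "in", "to", "is"}

def index_terms(text, threshold, stop_list=STOP_LIST):
    """Return {term: tf} for terms whose frequency in `text` exceeds `threshold`."""
    # Step 1: drop function words found in the stop list.
    tokens = [t for t in text.lower().split() if t not in stop_list]
    # Step 2: count occurrences of each remaining term (tf_ij).
    tf = Counter(tokens)
    # Step 3: keep only terms with tf_ij > T.
    return {term: count for term, count in tf.items() if count > threshold}

docs = {
    "D1": "the index of terms and the index of documents",
    "D2": "retrieval of documents is retrieval of text",
}
for doc_id, text in docs.items():
    print(doc_id, index_terms(text, threshold=1))
```

Raising the threshold makes the indexing less exhaustive; lowering it to 0 keeps every non-stop word.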

How to compute $w_{ij}$?
• Inverse document frequency, $idf_j$
  • $tf_{ij} \times idf_j$ (TFxIDF)
• Term discrimination value, $dv_j$
  • $tf_{ij} \times dv_j$
• Probabilistic term weighting, $tr_j$
  • $tf_{ij} \times tr_j$
• Global properties of terms in a document collection

Inverse Document Frequency
• Inverse Document Frequency (IDF) for term $T_j$: $idf_j = \log\frac{N}{df_j}$, where $df_j$ (document frequency of term $T_j$) is the number of documents in which $T_j$ occurs and N is the total number of documents.
• Good index terms fulfil both the recall and the precision functions:
  • they occur frequently in individual documents but rarely in the remainder of the collection

TFxIDF
• Weight $w_{ij}$ of a term $T_j$ in a document $D_i$: $w_{ij} = tf_{ij} \times \log\frac{N}{df_j}$
• Eliminating common function words
• Computing the value of $w_{ij}$ for each term $T_j$ in each document $D_i$
• Assigning to the documents of a collection all terms with sufficiently high (tf x idf) factors
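
A minimal sketch of the TFxIDF computation, assuming the common form $idf_j = \log(N / df_j)$ with the natural logarithm and documents that have already had their function words removed. The function name `tfidf_weights` and the toy collection are hypothetical.

```python
import math
from collections import Counter

def tfidf_weights(docs):
    """docs: dict doc_id -> list of tokens (stop words already removed).

    Returns dict doc_id -> {term: tf_ij * log(N / df_j)}.
    """
    n_docs = len(docs)
    # df_j: number of documents in which term j occurs at least once.
    df = Counter()
    for tokens in docs.values():
        df.update(set(tokens))
    weights = {}
    for doc_id, tokens in docs.items():
        tf = Counter(tokens)
        weights[doc_id] = {t: tf[t] * math.log(n_docs / df[t]) for t in tf}
    return weights

docs = {
    "D1": ["index", "term", "index"],
    "D2": ["term", "weight"],
    "D3": ["term", "retrieval"],
}
w = tfidf_weights(docs)
print(w["D1"])
```

In this toy collection "term" occurs in every document, so its weight is zero everywhere, while "index" (frequent in D1, absent elsewhere) receives the highest weight.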

Term-discrimination Value
• Useful index terms
  • distinguish the documents of a collection from each other
• Document space
  • two documents are assigned very similar term sets when the corresponding points in the document configuration appear close together
  • when a high-frequency term without discrimination power is assigned, it increases the document space density

A Virtual Document Space
[Figure: three panels showing the document space in its original state, after assignment of a good discriminator, and after assignment of a poor discriminator]

Good Term Assignment
• When a term is assigned to the documents of a collection, the few objects to which the term is assigned will be distinguished from the rest of the collection.
• This should increase the average distance between the objects in the collection and hence produce a document space less dense than before.

Poor Term Assignment
• A high-frequency term is assigned that does not discriminate between the objects of a collection. Its assignment will render the documents more similar.
• This is reflected in an increase in document space density.

Term Discrimination Value
• Definition: $dv_j = Q - Q_j$, where $Q$ and $Q_j$ are the space densities before and after the assignment of term $T_j$.
• $dv_j > 0$: $T_j$ is a good term; $dv_j < 0$: $T_j$ is a poor term.
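
The definition can be tried out on a toy collection. The sketch below assumes that space density is the average pairwise cosine similarity and that "before assignment" means the term's column is zeroed out; both choices, and the names `density` and `discrimination_value`, are assumptions made for illustration.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity; defined as 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def density(vectors):
    """Average similarity over all unordered document pairs."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)

def discrimination_value(vectors, j):
    """dv_j = Q - Q_j: density without term j minus density with term j."""
    without_j = [[0 if k == j else x for k, x in enumerate(v)] for v in vectors]
    return density(without_j) - density(vectors)

# Rows are documents, columns are terms.
# Term 0 occurs in every document; term 1 occurs only in the first one.
docs = [
    [1, 1, 0],
    [1, 0, 1],
    [1, 0, 0],
]
print(discrimination_value(docs, 0))  # negative: poor discriminator
print(discrimination_value(docs, 1))  # positive: good discriminator
```

The term shared by all documents pulls them together (negative value), while the term confined to one document spreads them apart (positive value).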

Variations of Term-Discrimination Value with Document Frequency
[Figure: document frequency axis, up to N. Low frequency: $dv_j = 0$; medium frequency: $dv_j > 0$; high frequency: $dv_j < 0$]

$tf_{ij} \times dv_j$
• $w_{ij} = tf_{ij} \times dv_j$
• compared with $w_{ij} = tf_{ij} \times idf_j$:
  • $idf_j$: decreases steadily with increasing document frequency
  • $dv_j$: increases from zero to positive as the document frequency of the term increases, then decreases sharply as the document frequency becomes still larger

Document Centroid
• Issue: efficiency problem, N(N-1) pairwise similarities
• Document centroid: $C = (c_1, c_2, c_3, \ldots, c_t)$, with each $c_j$ derived from the weights $w_{ij}$, where $w_{ij}$ is the weight of the j-th term in document i
• Space density
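
One way to see the efficiency gain is to compare each document with a single centroid instead of with every other document, which takes N similarity computations rather than N(N-1). The sketch below assumes the centroid is the component-wise mean of the document vectors and the density is the average cosine similarity to it; the exact normalization and the function names are assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity; defined as 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def centroid(vectors):
    """Component-wise mean of the document vectors (assumed definition of c_j)."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def centroid_density(vectors):
    """Average similarity between the centroid and each document: N comparisons."""
    c = centroid(vectors)
    return sum(cosine(c, v) for v in vectors) / len(vectors)

docs = [
    [1, 1, 0],
    [1, 0, 1],
    [1, 0, 0],
]
print(centroid(docs))
print(centroid_density(docs))
```

A collection of identical documents gives a density of 1 under this definition, and the value drops as the documents spread out.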

Probabilistic Term Weighting
• Goal: explicit distinctions between occurrences of terms in relevant and nonrelevant documents of a collection
• Definition: given a user query q and the ideal answer set of the relevant documents
• From decision theory, the best ranking algorithm for a document D

Probabilistic Term Weighting
• Pr(rel), Pr(nonrel): the document’s a priori probabilities of relevance and nonrelevance
• Pr(D|rel), Pr(D|nonrel): occurrence probabilities of document D in the relevant and nonrelevant document sets
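
For reference, the decision-theoretic ranking function is usually written in terms of these four probabilities as a log-odds expression. The symbol $g(D)$ is an assumed name for the ranking score:

$$
g(D) = \log\frac{\Pr(D \mid \text{rel})}{\Pr(D \mid \text{nonrel})} + \log\frac{\Pr(\text{rel})}{\Pr(\text{nonrel})}
$$

The second term does not depend on the document's content, so the ordering of documents is driven by the first term.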

Assumptions
• Terms occur independently in documents

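Given a document $D = (d_1, d_2, \ldots, d_t)$
• Assume each $d_i$ is either 0 (absent) or 1 (present); $x_i$ below denotes the value of $d_i$.
• For a specific document D:
  • $\Pr(x_i = 1 \mid \text{rel}) = p_i$
  • $\Pr(x_i = 0 \mid \text{rel}) = 1 - p_i$
  • $\Pr(x_i = 1 \mid \text{nonrel}) = q_i$
  • $\Pr(x_i = 0 \mid \text{nonrel}) = 1 - q_i$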
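
Under the independence assumption, the binary probabilities above lead to a per-term weight. The following is a sketch of the standard derivation; the name $tr_i$ for the resulting term-relevance weight follows the probabilistic weighting notation used earlier, and the step-by-step form is a reconstruction rather than a quotation from the slides.

$$
\Pr(D \mid \text{rel}) = \prod_{i=1}^{t} p_i^{x_i}(1 - p_i)^{1 - x_i},
\qquad
\Pr(D \mid \text{nonrel}) = \prod_{i=1}^{t} q_i^{x_i}(1 - q_i)^{1 - x_i}
$$

$$
\log\frac{\Pr(D \mid \text{rel})}{\Pr(D \mid \text{nonrel})}
= \sum_{i=1}^{t} x_i \log\frac{p_i(1 - q_i)}{q_i(1 - p_i)}
+ \sum_{i=1}^{t} \log\frac{1 - p_i}{1 - q_i}
$$

The second sum is the same for every document, so ranking depends only on the terms that are present ($x_i = 1$), each contributing

$$
tr_i = \log\frac{p_i(1 - q_i)}{q_i(1 - p_i)}.
$$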

Issue
• How to compute $p_j$ and $q_j$?
  • $p_j = r_j / R$
  • $q_j = (df_j - r_j) / (N - R)$
• $r_j$: the number of relevant documents that contain term $T_j$
• R: the total number of relevant documents
• N: the total number of documents
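
When relevance counts are available, the two estimates can be plugged into the term-relevance weight. The sketch below assumes the standard form $tr_j = \log\frac{p_j(1 - q_j)}{q_j(1 - p_j)}$; the function name and the example counts are hypothetical, and no smoothing is applied, so the inputs must keep $p_j$ and $q_j$ strictly between 0 and 1.

```python
import math

def term_relevance(r_j, R, df_j, N):
    """tr_j from relevance counts.

    r_j:  relevant documents containing the term
    R:    total relevant documents
    df_j: documents containing the term
    N:    total documents
    """
    p_j = r_j / R                  # occurrence probability in relevant documents
    q_j = (df_j - r_j) / (N - R)   # occurrence probability in nonrelevant documents
    return math.log(p_j * (1 - q_j) / (q_j * (1 - p_j)))

# Hypothetical counts: the term appears in 8 of 10 relevant documents
# and in 20 of 100 documents overall.
tr = term_relevance(r_j=8, R=10, df_j=20, N=100)
print(tr)
```

A term concentrated in the relevant set gets a positive weight; a term that is equally likely in both sets gets a weight of zero.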

Estimation of Term-Relevance
• The occurrence probability of a term in the nonrelevant documents, $q_j$, is approximated by the occurrence probability of the term in the entire document collection: $q_j = df_j / N$
• The occurrence probabilities of the terms in the small number of relevant documents are assumed equal, using a constant value $p_j = 0.5$ for all j.

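Comparison
• With $p_j = 0.5$ and $q_j = df_j / N$, the term relevance becomes $tr_j = \log\frac{N - df_j}{df_j}$
• When N is sufficiently large, $N - df_j \approx N$, so $tr_j \approx \log\frac{N}{df_j} = idf_j$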
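
The approximation can be checked numerically. The sketch assumes $tr_j = \log\frac{p_j(1 - q_j)}{q_j(1 - p_j)}$ with $p_j = 0.5$ and $q_j = df_j / N$, and $idf_j = \log(N / df_j)$; the function names and the collection size are hypothetical.

```python
import math

def term_relevance_no_feedback(df_j, n_docs, p_j=0.5):
    """tr_j when q_j is estimated from the whole collection and p_j is constant."""
    q_j = df_j / n_docs
    return math.log(p_j * (1 - q_j) / (q_j * (1 - p_j)))

def idf(df_j, n_docs):
    """idf_j = log(N / df_j)."""
    return math.log(n_docs / df_j)

n_docs = 1_000_000
for df_j in (10, 1_000, 100_000):
    tr = term_relevance_no_feedback(df_j, n_docs)
    print(df_j, round(tr, 4), round(idf(df_j, n_docs), 4))
```

The two values agree closely for rare terms and drift apart as the document frequency approaches a sizeable fraction of N, where $N - df_j \approx N$ no longer holds.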