Privacy: Lessons from the Past Decade

750 likes | 936 Views

Privacy: Lessons from the Past Decade. Vitaly Shmatikov The University of Texas at Austin. Tastes Purchases. Medical and genetic data. Browsing history. Web searches. Web tracking. Aggregation. Universal data accessibility. Social aggregation. Database marketing. Medical data.

Privacy: Lessons from the Past Decade

E N D

Presentation Transcript

Privacy:Lessons from the Past Decade Vitaly Shmatikov The University of Texas at Austin

Tastes Purchases Medical and genetic data Browsing history Web searches

Aggregation Universal data accessibility Social aggregation Database marketing

Medical data • Electronic medical records (EMR) • Cerner, Practice Fusion … • Health-care datasets • Clinical studies, hospital discharge databases … • Increasingly accompanied by DNA information • PatientsLikeMe.com

High-dimensional datasets • Row = user record • Column = dimension • Example: purchased items • Thousands or millions of dimensions • Netflix movie ratings: 35,000 • Amazon purchases: 107

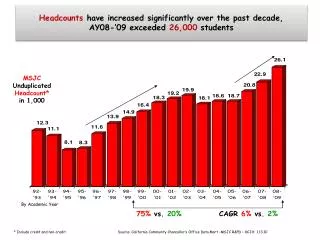

Sparsity and “Long Tail” Average record has no “similar” records Netflix Prize dataset: Considering just movie names, for 90% of records there isn’t a single other record which is more than 30% similar similarity

Graph-structured social data • Node attributes • Interests • Group membership • Sexual orientation • Edge attributes • Date of creation • Strength • Type of relationship

Whose data is it, anyway? Traditional notion: everyone owns and should control their personal data • Social networks • Information about relationships is shared • Genome • Shared with all blood relatives • Recommender systems • Complex algorithms make it impossible to trace origin of data

Famous privacy breaches Search Mini-feed Beacon Applications • Why did they happen?

Data release today • Datasets are “scrubbed” and published • Why not interactive computation? • Infrastructure cost • Overhead of online privacy enforcement • Resource allocation and competition • Client privacy • What about privacy of data subjects? • Answer: data have been ANONYMIZED

The crutch of anonymity (U.S) (U.K) Deals with ISPs to collect anonymized browsing data for highly targeted advertising. Users not notified. Court ruling over YouTube user log data causes major privacy uproar. Deal to anonymize viewing logs satisfies all objections.

Targeted advertising “… breakthrough technology that uses social graph data to dramatically improve online marketing … "Social Engagement Data" consists of anonymous information regarding the relationships between people” “The critical distinction … between the use of personal information for advertisements in personally-identifiable form, and the use, dissemination, or sharing of information with advertisers in non-personally-identifiable form.”

The myth of the PII • Data are “scrubbed” by removing personally identifying information (PII) • Name, Social Security number, phone number, email, address… what else? • Problem: PII has no technical meaning • Defined in disclosure notification laws • If certain information is lost, consumer must be notified • In privacy breaches, any information can be personally identifying

More reading Narayanan and Shmatikov. “Myths and Fallacies of ‘Personally Identifiable Information’ ” (CACM 2010)

De-identification Tries to achieve “privacy” by syntactic transformation of the data - Scrubbing of PII, k-anonymity, l-diversity… Fatally flawed! Insecure against attackers with external information Does not compose (anonymize twice reveal data) No meaningful notion of privacy No meaningful notion of utility

Latanya Sweeney’s attack (1997) Massachusetts hospital discharge dataset Public voter dataset

Closer look at two records Identifiable, no sensitive data Anonymized, contains sensitive data Age (70) ZIP code (78705) Sex (Male) Age (70) ZIP code (78705) Sex (Male) Name (Vitaly) Disease (Jetlag) Voter registration Patient record

Database join Age (70) Zip code (78705) Sex (Male) Name (Vitaly) Disease (Jetlag) Vitaly suffers from jetlag!

Observation #1: data joins • Attacker learns sensitive data by joining two datasets on common attributes • Anonymized dataset with sensitive attributes • Example: age, race, symptoms • “Harmless” dataset with individual identifiers • Example: name, address, age, race • Demographic attributes (age, ZIP code, race, etc.) are very common

Observation #2: quasi-identifiers • Sweeney’s observation: (birthdate, ZIP code, gender) uniquely identifies 87% of US population • Side note: actually, only 63% • Publishing a record with a quasi-identifier is as bad as publishing it with an explicit identity • Eliminating quasi-identifiers is not desirable • For example, users of the dataset may want to study distribution of diseases by age and ZIP code [Golle WPES ‘06]

k-anonymity • Proposed by Samarati and Sweeney • First appears in an SRI tech report (1998) • Hundreds of papers since then • Extremely popular in the database and data-mining communities (SIGMOD, ICDE, KDD, VLDB) • Many k-anonymization algorithms, most based on generalization and suppression of quasi-identifiers

Anonymization in a nutshell • Dataset is a relational table • Attributes (columns) are divided into quasi-identifiers and sensitive attributes • Generalize/suppress quasi-identifiers, but don’t touch sensitive attributes (keep them “truthful”)

k-anonymity: definition • Any (transformed) quasi-identifier must appear in at least k records in the anonymized dataset • k is chosen by the data owner (how?) • Example: any age-race combination from original DB must appear at least 10 times in anonymized DB • Guarantees that any join on quasi-identifiers with the anonymized dataset will contain at least k records for each quasi-identifier

Two (and a half) interpretations • Membership disclosure: cannot tell that a given person in the dataset • Sensitive attribute disclosure: cannot tell that a given person has a certain sensitive attribute • Identity disclosure: cannot tell which record corresponds to a given person Does not imply any privacy! Example: k clinical records, all HIV+ This interpretation is correct (assuming the attacker only knows quasi-identifiers)

Curse of dimensionality Aggarwal VLDB ‘05 • Generalization fundamentally relies on spatial locality • Each record must have k close neighbors • Real-world datasets are very sparse • Netflix Prize dataset: 17,000 dimensions • Amazon: several million dimensions • “Nearest neighbor” is very far • Projection to low dimensions loses all info k-anonymized datasets are useless

k-anonymity: definition ... or how not to define privacy • Any (transformed) quasi-identifier must appear in at least k records in the anonymized dataset Does not mention sensitive attributes at all! Does not say anything about the computations to be done on the data Assumes that attacker will be able to join only on quasi-identifiers

Sensitive attribute disclosure Intuitive reasoning: • k-anonymity prevents attacker from telling which record corresponds to which person • Therefore, attacker cannot tell that a certain person has a particular value of a sensitive attribute This reasoning is fallacious!

3-anonymization This is 3-anonymous, right?

Joining with external database Problem: sensitive attributes are not “diverse” within each quasi-identifier group

Another attempt: l-diversity Machanavajjhalaet al. ICDE ‘06 Entropy of sensitive attributes within each quasi-identifier group must be at least L

Failure of l-diversity Original database Anonymization A Anonymization B 99% cancer quasi-identifier group is not “diverse” …yet anonymized database does not leak anything 50% cancer quasi-identifier group is “diverse” This leaks a ton of information! 99% have cancer

Membership disclosure • With high probability, quasi-identifier uniquely identifies an individual in the population • Modifying quasi-identifiers in the dataset does not affect their frequency in the population! • Suppose anonymized dataset contains 10 records with a certain quasi-identifier … and there are 10 people in the population who match it • k-anonymity may not hide whether a given person is in the dataset Nergizet al. SIGMOD ‘07

What does attacker know? Bob is Caucasian and I heard he was admitted to hospital with flu… This is against the rules! “flu” is not a quasi-identifier Yes… and this is yet another problem with k-anonymity!

Other problems with k-anonymity • Multiple releases of the same dataset break anonymity • Mere knowledge of the k-anonymization algorithm is enough to reverse anonymization Gantaet al. KDD ‘08 Zhang et al. CCS ‘07

k-Anonymity considered harmful • Syntactic • Focuses on data transformation, not on what can be learned from the anonymized dataset • “k-anonymous” dataset can leak sensitive info • “Quasi-identifier” fallacy • Assumes a priori that attacker will not know certain information about his target • Relies on locality • Destroys utility of many real-world datasets

HIPAA Privacy Rule "Under the safe harbor method, covered entities must remove all of a list of 18 enumerated identifiers and have no actual knowledge that the information remaining could be used, alone or in combination, to identify a subject of the information." “The identifiers that must be removed include direct identifiers, such as name, street address, social security number, as well as other identifiers, such as birth date, admission and discharge dates, and five-digit zip code. The safe harbor requires removal of geographic subdivisions smaller than a State, except for the initial three digits of a zip code if the geographic unit formed by combining all zip codes with the same initial three digits contains more than 20,000 people. In addition, age, if less than 90, gender, ethnicity, and other demographic information not listed may remain in the information. The safe harbor is intended to provide covered entities with a simple, definitive method that does not require much judgment by the covered entity to determine if the information is adequately de-identified."

Lessons • Anonymization does not work • “Personally identifiable” is meaningless • Originally a legal term, unfortunately crept into technical language in terms such as “quasi-identifier” • Any piece of information is potentially identifying if it reduces the space of possibilities • Background info about people is easy to obtain • Linkage of information across virtual identities allows large-scale de-anonymization

How to do it right • Privacy is not a property of the data • Syntactic definitions such as k-anonymity are doomed to fail • Privacy is a property of the computation carried out on the data • Definition of privacy must be robust in the presence of auxiliary information – differential privacy Dworket al. ’06-10

Differential privacy (intuition) similar output distributions Risk for C does not increase much if her data are included in the computation Mechanism is differentially private if every output is produced with similar probability whether any given input is included or not

Computing in the year 201X • Illusion of infinite resources • Pay only for resources used • Quickly scale up or scale down … Data

Programming model in year 201X Output Map Reduce • Data mining • Genomic computation • Social networks Data • Frameworks available to ease cloud programming • MapReduce: parallel processing on clusters of machines

Programming model in year 201X • Thousands of users upload their data • Healthcare, shopping transactions, clickstream… • Multiple third parties mine the data • Example: health-care data • Incentive to contribute: Cheaper insurance, new drug research, inventory control in drugstores… • Fear: What if someone targets my personal data? • Insurance company learns something about my health and increases my premium or denies coverage

Privacy in the year 201X ? Information leak? Untrusted MapReduce program Output • Data mining • Genomic computation • Social networks Health Data

Audit untrusted code? Hard to do! Enlightenment? Also, where is the source code? Audit MapReduce programs for correctness? Aim: confine the code instead of auditing

Airavat Untrusted program Protected Data Airavat Framework for privacy-preserving MapReducecomputations with untrusted code

Airavat guarantee Untrusted program Protected Data Airavat *Differential privacy Bounded information leak* about any individual data after performing a MapReduce computation.

Background: MapReduce map(k1,v1) list(k2,v2) reduce(k2, list(v2)) list(v2) Map phase Reduce phase

MapReduce example Map(input){ if (input has iPad) print (iPad, 1) } Reduce(key, list(v)){ print (key + “,”+ SUM(v)) } Counts no. of iPads sold (ipad,1) (ipad,1) SUM Map phase Reduce phase