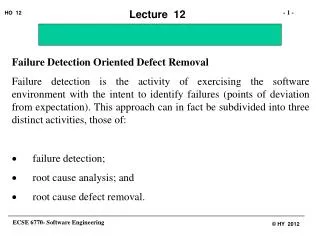

Failure Detection Oriented Defect Removal

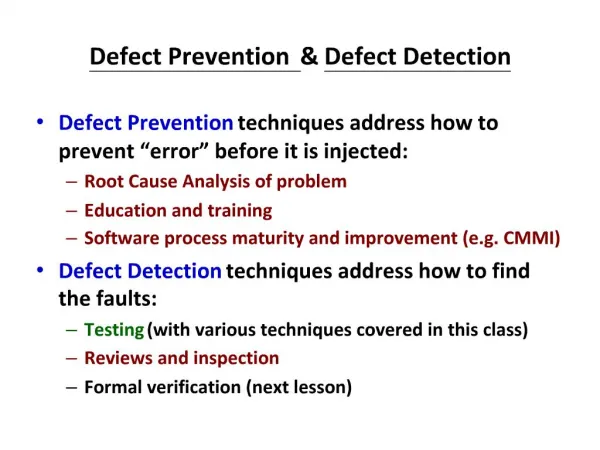

Failure detection is the activity of exercising the software environment with the intent to identify failures (points of deviation from expectation). Failure detection oriented defect removal can be subdivided into three distinct activities:

· failure detection;
· root cause analysis; and
· root cause defect removal.

In this sense, failure detection is the activity that comes closest to the definitions traditionally given for the activity of “testing” (Myers, 1979). Although differences may at times be detected between the intent and expression of these traditional definitions and those of the activity we term “failure detection” (Goodenough and Gerhart, 1975), there is sufficient proximity for the terms to be used interchangeably.

Root cause analysis is the activity of tracing the manifestation of a defect or series of defects (a failure), as discovered in the environment through failure detection or normal usage, back to the defect or defects in the source code that caused the failure. This is, as we have mentioned before, a non-trivial and time-consuming task. Root cause defect removal is the activity of correcting the defect identified in the source code. The last two activities combined are usually termed “debugging”. A large number of debugging techniques exist, the most frequently cited of which are program tracing and scaffolding (Bentley, 1985). Surveys and descriptions of effective debugging techniques are available elsewhere (e.g. Kranzlmüller et al., 1996; McDowell and Helmbold, 1989; Stewart and Gentleman, 1997), and will not be discussed further.

Failure detection (testing) can itself be divided into two categories, each of which divides further into sub-categories, as follows:

• Partitioning or sub-domain testing
  • specification based or functionally based;
  • program based or structurally based;
  • defect based or evolutionary based;
  • analytically based.
• Statistical testing
  • random testing;
  • error seeding.

Partitioning or Sub-domain Testing

A popular approach to controlling the combinatorial explosion problem in testing is to subdivide the input domain into a number of logical subsets called “sub-domains”, according to some criterion or set of criteria, and then to select a small number of representative elements from each sub-domain as potential test case candidates (Frankl et al., 1997). The criteria usually employed may be classified as listed above.

Specification-based or Functionally Based Approaches

Sometimes also referred to as functional testing, these techniques concentrate on selecting input data from partitioned sets that effectively test the functionality specified in the requirements specification for the program. The partitioning is therefore based on placing inputs that invoke a particular aspect of the program’s functionality into a given partition. In such techniques testing involves only the observation of the output states given the inputs, and as such no analysis of the structure of the program is attempted (Hayes, 1986; Luo et al., 1994; Weyuker and Jeng, 1991). Individual approaches to input domain partitioning include:

Equivalence Partitioning (Myers, 1975; Frankl et al., 1997)

Here each input condition is partitioned into sets of valid and invalid classes called equivalence classes. These are in turn used to generate test cases by selecting representative values of valid and invalid elements from each class. In this approach one can reasonably assume, but not be certain, that a test of a representative value of each class is equivalent to a test of any other value in that class. That is, if one test case in a class detects an error, all other test cases in the class would be expected to do the same. Conversely, if a test case did not detect an error, we would expect that no other test case in the class would find an error.
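As a minimal sketch of the idea, the example below partitions a single input condition into one valid and two invalid classes and tests one representative from each. The function `is_eligible`, the age limits, and the choice of the middle element as representative are all assumptions made for illustration; they do not come from the cited sources.

```python
# Hypothetical unit under test: accepts ages 18..65 inclusive.
def is_eligible(age: int) -> bool:
    return 18 <= age <= 65

# Assumed equivalence classes for the single input condition "age".
EQUIVALENCE_CLASSES = {
    "invalid_below": range(0, 18),
    "valid": range(18, 66),
    "invalid_above": range(66, 121),
}

# Expected outcome for every member of each class.
EXPECTED = {"invalid_below": False, "valid": True, "invalid_above": False}


def representative(values: range) -> int:
    """Pick the middle element of a class as its representative."""
    return values[len(values) // 2]


def run_partition_tests() -> dict:
    """Test one representative per class; return {class: (value, passed)}."""
    results = {}
    for name, values in EQUIVALENCE_CLASSES.items():
        rep = representative(values)
        results[name] = (rep, is_eligible(rep) == EXPECTED[name])
    return results


if __name__ == "__main__":
    for name, (value, passed) in run_partition_tests().items():
        print(f"{name}: input={value} passed={passed}")
```

Three test cases stand in for 121 possible inputs; the saving is only justified to the extent that each class really does behave uniformly.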

Efficacy of Equivalence Partitioning

The major deficiency of equivalence partitioning is that it is in general ineffective in testing combinations of input circumstances. There is also considerable variability in the number of resultant test cases, and in testing effectiveness, depending on how and at what granularity the equivalence classes are determined. In most typical situations, however, defects are inter-dependent, and it is hard to predict the correct level of granularity for the equivalence classes prior to testing. These two deficiencies have a significantly detrimental effect on the defect identification efficacy of testing strategies based on equivalence partitioning (Beizer, 1991; Frankl et al., 1997).

Cause Effect Graphing

Probably first proposed by Elmendorf (1974) and popularized by Myers (1979), cause effect graphing is a method of generating test cases by attempting to translate the precondition/postcondition implications of the specification into a logical notation whose satisfaction by the program may be ascertained (Myers, 1979).

Efficacy of Cause Effect Graphing

Cause effect graphing can be useful when applied to simple cases. It has the interesting side effect of assisting in the discovery of potential incompleteness or conflict in the specification. Unfortunately, it suffers from a number of weaknesses which limit its utility. The major weaknesses of cause-effect graphing are that it:

· is difficult to apply in practice, as it still suffers from combinatorial explosion problems;
· yields a graph that is difficult to convert to a useful format (usually a decision table);
· does not produce all the logically required test cases;
· is deficient with regard to boundary conditions.

Boundary Value Analysis

A potential resolution to the last stated deficiency of cause-effect graphing is boundary value analysis, which is intended to be used in conjunction with it. Boundary value analysis (Myers, 1979) is a method similar to equivalence partitioning in that it subdivides the domain into equivalence classes. The differences are that:

· test cases are derived by considering both input and output equivalence classes; and
· test element selection is made in such a way that each independent logical path of execution of the class is subjected to at least one test.

In terms of efficacy, boundary value analysis suffers from exactly the same weaknesses as does equivalence partitioning (Omar and Mohammed, 1991).
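The sketch below illustrates boundary value analysis under its usual reading, in which test values are taken at and immediately around each edge of an equivalence class rather than from its interior. The predicate, its limits, and the deliberately seeded off-by-one defect are hypothetical and exist only to show why an edge value can reveal what a mid-class representative misses.

```python
def expected_eligibility(age: int) -> bool:
    """Assumed specification: ages 18..65 inclusive are eligible."""
    return 18 <= age <= 65


def is_eligible_buggy(age: int) -> bool:
    """Hypothetical implementation with an off-by-one defect at the lower edge."""
    return 18 < age <= 65


def boundary_values(low: int, high: int) -> list[int]:
    """Values just outside, on, and just inside each edge of [low, high]."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


def failing_inputs(candidates: list[int]) -> list[int]:
    """Inputs for which the implementation deviates from the specification."""
    return [a for a in candidates if is_eligible_buggy(a) != expected_eligibility(a)]


if __name__ == "__main__":
    print("mid-class representative:", failing_inputs([42]))
    print("boundary values:", failing_inputs(boundary_values(18, 65)))
```

The mid-class value 42 passes, while the boundary set exposes the failure at 18.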

Category-Partition Method

This method (Ostrand and Balcer, 1988) decomposes the functional specification into independently testable functional units. For each functional unit, parameters that affect the execution of that unit (i.e. the explicit inputs) are identified and their characteristics determined, each forming a category. Each category is then partitioned into distinct classes, each containing sets of values that can be deemed equivalent. These sets are called “choices”. To develop test cases, the constraints between choices (e.g. choice X must precede choice Y) are determined and a formal test specification is composed for each functional unit.
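The steps above can be sketched roughly as follows: categories map to lists of choices, a constraint predicate rules out meaningless combinations, and the surviving combinations become test frames. The category names, choices, and the single constraint are invented for illustration and are not taken from Ostrand and Balcer's paper.

```python
from itertools import product

# Hypothetical categories and choices for one functional unit
# (a text-search command, assumed for the example).
CATEGORIES = {
    "pattern_length": ["empty", "single_char", "many_chars"],
    "quoting": ["quoted", "unquoted"],
    "file_state": ["exists", "missing"],
}


def satisfies_constraints(frame: dict) -> bool:
    """Assumed constraint: an empty pattern can only be supplied in quotes."""
    if frame["pattern_length"] == "empty" and frame["quoting"] == "unquoted":
        return False
    return True


def generate_frames() -> list[dict]:
    """Enumerate every combination of choices that satisfies the constraints."""
    names = list(CATEGORIES)
    frames = []
    for combo in product(*(CATEGORIES[name] for name in names)):
        frame = dict(zip(names, combo))
        if satisfies_constraints(frame):
            frames.append(frame)
    return frames


if __name__ == "__main__":
    for frame in generate_frames():
        print(frame)
```

Adding or tightening constraints is the lever that keeps the number of frames under control; without any, the count is simply the product of the choice counts.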

Efficacy of the Category-Partition Method

The method is somewhat similar to equivalence partitioning; its advantage lies in the ease with which test specifications may be modified. Additionally, the complexity and the number of test cases may be controlled. The major disadvantages of this method are those enumerated for other equivalence partitioning type methods.

The Efficacy of Specification Based Testing Approaches

The number and dates of the publications cited herein indicate that the shortcomings of specification based methods were recognized early and that they have been criticized by many authors. The main criticism is that they are generally unable to find non-functional failures (Huang, 1975; Howden, 1982; Myers, 1979; Ntafos and Hakimi, 1979). They are also often imprecise in terms of rules for identification or the level of granularity for selection of partition classes (Howden, 1982). They are usually hard to automate, as human expertise is often a requirement, at least for sub-domain class selection (Ostrand and Balcer, 1988). Another major issue is the rapid proliferation of test cases needed for adequate coverage (Huang, 1975; Myers, 1979; Ntafos and Hakimi, 1979).

Two other major shortcomings of this category of techniques relate to:

· The assumption of disjoint partitions. This is the assumption that it is sufficient for the partitions or sub-domains created to be disjoint. In reality, however, sub-domains may not all be disjoint, and hence the special case of disjoint partitions does not reflect the characteristics of the general case (Frankl and Weyuker, 1993).

· The assumption of homogeneity. This is the assumption that sub-domains are homogeneous, in that either all members of a sub-domain cause a program to fail or none do so. It follows, on this assumption, that one representative of each sub-domain is sufficient to test the program adequately. The assumption is unjustified, however, in that it is in general very impractical, if not impossible, to achieve homogeneity. Thus frequent sampling of sub-domains is still required.

Much of the recent work on the efficacy of sub-domain testing has attempted to challenge these assumptions and to provide frameworks that do not include them. Weyuker and Jeng (1994) challenge the homogeneity assumption but still assume sub-domains to be generally disjoint. Chen and Yu (1994) generalize this work and demonstrate that under certain conditions sub-domain testing is at least as efficacious as random testing (to be discussed later). Further work by Chen and Yu (1996) challenges the remaining assumption (that of sub-domains being disjoint) by including both overlapping and disjoint sub-domains in the analysis.

The work again identifies a number of cases (albeit small in practice) where sub-domain testing might be a superior technique to random testing. In general, and in the absence of a scheme by which specific conditions are built into the sub-domains, the efficacy of sub-domain testing remains very close to that of pure random testing and usually, it seems, is not worth the extra effort (Duran and Ntafos, 1984; Hamlet and Taylor, 1990; Weyuker and Jeng, 1994; Chen and Yu, 1994).

Structurally based techniques (often also called program based techniques), which concentrate on the way the program has been built, have been proposed as a complement to those described above.

Program Based Testing Approaches

When the basis for the subdivision of the domain is not the functional specification of the system (what the system should do) but what the program actually does, it becomes possible to devise sub-domain partitions that attempt to provide “coverage” by exercising necessary elements of the program. These elements may relate to the structural elements of the program, such as statements, edges, or paths, or to the data flow characteristics of the program. Often termed white box testing or glass box testing (Huang, 1975; Myers, 1979; Ntafos and Hakimi, 1979), the basic requirement is the execution of each program “element” at least once. The inputs that execute a particular element form a sub-domain.

Statement Coverage

Statement coverage is deemed useful on the basis that by observing a failure upon execution of a program statement, we can identify a defect. As such, it is necessary to execute every statement in a program at least once. White box testing methods have therefore been devised that rely on the criterion that test sets must be selected such that every statement of the program is executed at least once. The main problem with statement coverage as an approach (aside from the basic logical problem from which all program based approaches suffer) is that it is not clear what is meant by a statement, that is, whether we need to deal with blocks or with individual elementary statements.

Another problem is how to deal with recursion - that is, whether to consider a recursive block as one series of statements or several nested ones, and if the latter, how many nestings, etc.

Control Flow Coverage

If we consider a program as a graph of nodes and edges, with nodes representing decision points and edges representing the path to the next decision point, the statement coverage criterion above calls for coverage of all nodes. Control flow coverage (also known as edge coverage), on the other hand, calls for coverage of all the edges between nodes. This is reasonable in that, in such a graph, edges describe the control flow of the program: test cases make each condition generate either a true or a false value. Thus a test set that gives full edge coverage tests all independently made decisions in the program.

Hidden edges, however, exist in the form of compound logical conditions, and simple control flow coverage will miss these. Control flow coverage may be strengthened by adding the requirement that all possible compound conditions also be exercised at least once. This is called the condition coverage criterion.
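To make the difference between the criteria concrete, the sketch below instruments a small hypothetical function by hand and reports how much of its single decision and its two atomic conditions a test set exercises. The function, the logging scheme, and the coverage ratios are assumptions for illustration; the sketch records each condition's value regardless of short-circuit evaluation, which a real coverage tool may treat differently.

```python
def apply_discount(price: float, is_member: bool, has_coupon: bool, log: dict) -> float:
    """Hypothetical unit with one decision made of two atomic conditions."""
    decision = is_member or has_coupon
    log["edges"].add(decision)                        # which branch was taken
    log["conditions"].add(("is_member", is_member))   # value of each condition
    log["conditions"].add(("has_coupon", has_coupon))
    if decision:
        price = price * 0.9
    return price


def coverage(tests: list[tuple[bool, bool]]) -> tuple[float, float]:
    """Return (edge coverage, condition coverage) achieved by the test set."""
    log = {"edges": set(), "conditions": set()}
    for is_member, has_coupon in tests:
        apply_discount(100.0, is_member, has_coupon, log)
    edge_cov = len(log["edges"]) / 2        # two outcomes of the decision
    cond_cov = len(log["conditions"]) / 4   # two conditions, two values each
    return edge_cov, cond_cov


if __name__ == "__main__":
    print(coverage([(True, False)]))
    print(coverage([(True, False), (False, False)]))
    print(coverage([(True, False), (False, False), (False, True)]))
```

The single test `(True, False)` already executes every statement, yet covers only half the edges. Adding `(False, False)` completes edge coverage but never sets `has_coupon` to true, which is the kind of hidden edge the condition coverage criterion is meant to expose.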

Path Coverage

Combining the statement and control flow coverage requirements, one can define a coverage criterion stating that test sets must be selected such that all paths within the program flow graph are traversed at least once. This requirement is clearly stronger than either the statement or the control flow coverage criterion individually, or both applied separately. The problem, however, is that traversing all control paths is infeasible, as it suffers from the state-space explosion problem. It may, however, be feasible to cover all the “independent” paths within a program.
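A rough sense of the scale involved can be had from the simplest possible case. The sketch below assumes a loop-free program consisting of n binary decisions in sequence, and takes "independent" paths in the sense of McCabe's cyclomatic number, which is an interpretation on our part rather than something stated in the text.

```python
def total_paths(n_decisions: int) -> int:
    """All paths through n sequential, loop-free binary decisions."""
    return 2 ** n_decisions


def independent_paths(n_decisions: int) -> int:
    """Cyclomatic number of the same flow graph: one more than the decisions."""
    return n_decisions + 1


if __name__ == "__main__":
    for n in (5, 10, 30):
        print(n, total_paths(n), independent_paths(n))
```

For 30 decisions this gives over a billion complete paths against 31 independent ones; loops make the full path count worse still, since each additional iteration is a further path.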

Data Flow Oriented

Data flow coverage methods (Rapps and Weyuker, 1985) subject the system to data flow analysis (Weiser, 1984) and identify certain data flow patterns that might be induced. The ways in which these data flows may be induced are then used as the basis for the creation of test sub-domains and subsequently of test sets. Boundary interior testing (Howden, 1987), for example, requires selecting two test sets for each loop: one that enters the loop but does not cause iteration, and one that does. The problem with data flow oriented approaches is that “they produce too many uninteresting anomalies unless integrated with a tool to evaluate path feasibility and subsequently remove un-executable anomalies” (Clarke et al., 1989).

The Efficacy of Program Based Approaches

All program based approaches suffer from a number of logical shortcomings. As highlighted earlier, program based techniques rely on the implication that by observing a failure upon execution of a program element (statement, edge, path, ...) we can identify a defect. Put another way, if a failure is encountered when executing a particular program element, then a defect is present in that program element. This statement can be written in the form p -> q. The implication in itself is sound. The problem stems from the fact that this simple implication is frequently misrepresented in a number of generally incorrect forms, such as:

· not p -> not q, which states: if a failure is not encountered when executing a particular program element, then a defect does not exist in that program element. This is clearly not logically equivalent to our initial implication, yet it is the aspiration upon which program based testing in particular, and partition testing in general, is based.

· (p(X) -> q(x)) -> (p(Y) -> q(y)), which may be interpreted as: if a failure encountered while testing a program element with data set X yields a defect x, then using data set Y will result in a similar failure, which in turn will yield defect y. Again, this is not a logical consequence of our original implication, as confidence in the true equivalence of our “equivalence sets” must first be established.
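The first of these misreadings can be checked mechanically. The sketch below enumerates the truth table for p ("a failure is observed when executing the element") and q ("a defect is present in the element") and collects every assignment under which p -> q holds but not p -> not q does not. The helper names are ours.

```python
from itertools import product


def implies(a: bool, b: bool) -> bool:
    """Material implication: a -> b."""
    return (not a) or b


def counterexamples() -> list[tuple[bool, bool]]:
    """Assignments (p, q) where p -> q holds but (not p) -> (not q) fails."""
    return [
        (p, q)
        for p, q in product([False, True], repeat=2)
        if implies(p, q) and not implies(not p, not q)
    ]


if __name__ == "__main__":
    print(counterexamples())
```

The only counterexample is p false, q true: no failure was observed, yet a defect is present. This is exactly the situation a passing test cannot rule out.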

Aside from the logical shortcomings, there remains the combinatorial weakness of these coverage based techniques. Although it has been addressed by many authors (Clarke, 1988; Frankl and Weyuker, 1988; Goldberg et al., 1994; Hutchins, 1994) using a variety of approaches (Bicevskis et al., 1990; Chilenski and Miller, 1993; Clarke, 1976; Lindquist and Jenkins, 1988; RTCA Inc., 1992), this weakness still remains.

Defect Based Approaches

Defect based approaches were introduced and studied during the 1970s and 1980s as a potentially more effective approach to testing compared to specification based approaches (DeMillo and Offutt, 1991; DeMillo et al., 1988; Howden, 1987; Morell, 1988; Offutt, 1992; Richardson and Thompson, 1988; Weyuker, 1983; Weyuker and Ostrand, 1980). These techniques rely on the hypothesis that programs tend to contain defects of specific kinds that can be well defined, and that by testing for a restricted class of defects we can find a wide class of defects. This is in turn based on the hypotheses that:

· good programmers write programs that contain only a few defects, that is, programs that are close to being correct (Budd, 1980; DeMillo et al., 1978); and
· a test data set that detects all simple defects is capable of detecting more complex defects (the coupling effect) (DeMillo et al., 1978).

Thus if we create many different versions of a program (mutants), each by making a small change to the original that is intended to correspond to a typical program defect that might be injected, then test data that identifies such a defect in a mutant is also capable of identifying the same type of defect in the original program. Also, due to the coupling effect, a test set that identifies a mutant will also reveal more complex defects than the type just discovered. Inputs that kill a particular mutant form a sub-domain.

Mutation testing (Hamlet and Taylor, 1990; Budd, 1980) is the implementation of these ideas, and is closely related to the method of detecting defects in digital circuits. Despite the superficial resemblance between mutation testing and the testing technique known as error seeding (Mills, 1989), the two methods are strictly distinct. Error seeding is a statistical method that works on the basis of the ratio of detected to remaining defects, whereas mutation testing deals with the sensitivity of test sets to small changes.
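A toy illustration of these ideas, under assumptions of our own: the "program" is a two-argument maximum, and each mutant replaces its comparison operator with another. A mutant is killed when some test input makes its output differ from the original's. The operator names and mutant labels are hypothetical.

```python
import operator


def make_max(compare):
    """Build a two-argument 'max' whose comparison operator can be swapped."""
    def max_of(a, b):
        return a if compare(a, b) else b
    return max_of


original = make_max(operator.gt)

# Each mutant is the original with one small change to the comparison.
MUTANTS = {
    "gt->lt": make_max(operator.lt),  # behaves like min: a real defect
    "gt->ge": make_max(operator.ge),  # differs only when a == b, where both
                                      # branches return the same value
}


def killed(test_inputs) -> set:
    """Names of mutants whose output differs from the original on some input."""
    return {
        name
        for name, mutant in MUTANTS.items()
        if any(mutant(a, b) != original(a, b) for a, b in test_inputs)
    }


if __name__ == "__main__":
    print(killed([(3, 3)]))
    print(killed([(1, 2), (3, 3)]))
```

The input `(3, 3)` kills nothing, so a test set containing only it is insensitive to these changes; `(1, 2)` kills the `gt->lt` mutant. The `gt->ge` mutant can never be killed because it is functionally equivalent to the original, and recognizing such equivalence is hard in general.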

The Efficacy of Defect Based Approaches

Mutation testing is currently the only technique known to the author that falls into this category. As such, mutation testing will be taken as the single representative of this category for comparison purposes in this section. Budd (1980) makes a cursory comparison between various techniques falling under the categories of specification and program based approaches, and concludes that all testing goals stated by these approaches are also attainable through mutation testing.

On the negative side, the following criticisms may be made of this approach:

· mutation testing requires the execution of a very large number of mutants;
· it is very difficult (often impossible) to decide on the equivalence of a mutant and the original program, even for very simple systems;
· a large number (up to 80%) of all mutants die before contributing any significant information (Budd, 1980);
· the last remaining mutants (2 to 10%) take up a significant proportion of test case applications (>50%).

Statistical Testing Approaches

Statistical testing is the use of the random and statistical nature of defects in order to exercise a program with the aim of causing it to fail (Thevenod-Fosse and Waeselynck, 1991). There are basically two approaches:

· use of a probability distribution to generate test inputs;
· error seeding.

Use of a Probability Distribution to Generate Test Inputs

The effectiveness of this strategy is directly related to the distribution used to derive the input test set. Considerable variation therefore exists in the choice of distribution, ranging from a uniform distribution (Duran and Ntafos, 1984) to very sophisticated distributions derived formally from the structure or the specification of the program (Higashino and von Bochmann, 1994; Luo et al., 1994; Whittaker and Thomason, 1994).

There are essentially two directions one can take:

1. Use an informally selected "distribution" and then test each element many times. In other words, leave the composition of the input distribution to the tester's intuition. This is by far the most prevalent method of software testing and is popularly termed "basic or simple random testing" (Beizer, 1991; Humphrey, 1995; Myers, 1979). With this technique the tester generates and uses random inputs that are envisaged to uncover defects, without the formal use of probability distributions but with a knowledge of the structure or the required functionality of the program. This is a very simple and easily understandable strategy. However, its efficacy has been demonstrated to be generally too low for the production of commercially robust software, at least when utilized in isolation (Duran and Ntafos, 1984; Frankl et al., 1997; Woit, 1992). Yet its popularity persists, and it has become a standard technique practised practically everywhere, at least as one of many methods (Cobb and Mills, 1990; Humphrey, 1995).

2. Search for test distributions that are better approximations of the operational profile or the structure of the system, and then reduce the number of tests for each element (e.g. Whittaker and Thomason, 1994). This is a theoretically attractive but practically arduous approach, because the true operational profile of a software product is usually unknown prior to commissioning. Another issue is the intricacy of the relationship between the structure of a program and the test input distribution, which can become impractically complicated as the size and complexity of the program grow (Hamlet and Taylor, 1990; Frankl and Weyuker, 1993).
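The influence of the chosen distribution can be seen in a small simulation. Everything here is assumed for illustration: an input domain of 100 values, a failure region at its upper end, and an invented operational profile in which users never supply the top ten values. Inputs are drawn with the standard library's `random.choices`, seeded so the run is repeatable.

```python
import random

DOMAIN = list(range(100))
FAILURE_REGION = {97, 98, 99}  # hypothetical inputs on which the program fails


def sample_inputs(weights=None, n=1000, seed=0):
    """Draw n test inputs from DOMAIN; uniform when weights is None."""
    rng = random.Random(seed)
    return rng.choices(DOMAIN, weights=weights, k=n)


def observed_failure_rate(inputs) -> float:
    """Fraction of the drawn inputs that fall in the failure region."""
    return sum(1 for x in inputs if x in FAILURE_REGION) / len(inputs)


# Assumed operational profile: inputs 90..99 never occur in the field.
PROFILE_WEIGHTS = [1] * 90 + [0] * 10

if __name__ == "__main__":
    uniform = sample_inputs()
    profiled = sample_inputs(weights=PROFILE_WEIGHTS)
    print("uniform:", observed_failure_rate(uniform))
    print("operational profile:", observed_failure_rate(profiled))
```

Under the assumed profile the defect is never exercised, so testing reports no failures even though the defect exists. Whether that is acceptable depends on how well the profile matches real usage, which is the practical difficulty noted above.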

Error Seeding

This is a very old but interesting technique in which a known number of carefully devised defects are injected (seeded) into the program to be tested. The program is then tested, during which a number of the seeded defects and a number of previously unknown defects are identified. Assuming that the seeded defects are "typical" defects, it is reasonable to accept that the ratio of seeded defects found so far to the total number of seeded defects is the same as the corresponding ratio for the unknown defects. This allows us to estimate statistically the number of defects remaining in a program (Endres, 1975; Mills, 1972; Mills, 1989).
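The ratio argument translates directly into an estimator. In the sketch below the function and parameter names are ours, and the figures in the usage example are invented; the only assumption carried over from the text is that seeded and unknown defects are equally likely to be found.

```python
def estimate_total_unknown(seeded_total: int, seeded_found: int, unknown_found: int) -> float:
    """Estimate the total number of unknown defects.

    Assumes seeded_found / seeded_total == unknown_found / unknown_total.
    """
    if seeded_found == 0:
        raise ValueError("no seeded defects found yet; the ratio is undefined")
    return unknown_found * seeded_total / seeded_found


def estimate_remaining_unknown(seeded_total: int, seeded_found: int, unknown_found: int) -> float:
    """Estimated unknown defects still in the program after testing."""
    total = estimate_total_unknown(seeded_total, seeded_found, unknown_found)
    return total - unknown_found


if __name__ == "__main__":
    # 20 defects seeded; testing found 15 of them plus 30 unknown defects.
    print(estimate_total_unknown(20, 15, 30))
    print(estimate_remaining_unknown(20, 15, 30))
```

With three quarters of the seeded defects found, the 30 unknown defects found are taken to be three quarters of the total, giving an estimated 40 in all and 10 remaining. The estimate is only as good as the assumption that the seeded defects are typical.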

Beyond Unit Testing

Unit testing attempts to cause a failure based on the logic of a single unit of software, i.e. a class, a method, a module, etc. Thus unit testing examines the behavior of a single unit. Beyond unit testing, it is appropriate to test the interaction of units when working together. Integration testing is at least one level of granularity above unit testing. System testing assesses the system in its entirety and is at the top level of granularity.

Integration Testing

Integration testing (particularly in an OO environment) is best done in conjunction with the use case model, interaction diagrams or sequence diagrams. The basic aim of integration testing is to assess whether a module (an object, for example) which is presumed internally correct can fulfill its contract with respect to its clients. Thus in OO there is a gray area between unit testing and integration testing, in that it is hard to unit test an object without at least some of its clients, even if taken one at a time. In integration testing, however, we go beyond that and usually integrate the object with all its direct clients and servers.

As larger and larger modules are integration tested, they in turn need to be integrated together. Thus integration testing is a multi-tiered operation. The essence, however, is that for each module (irrespective of whether it is an object, a collection of objects, a package, …) we must assume the element to possess a certain contract and seek to cause that contract to be violated. In a well designed system the levels (tiers) of integration testing should roughly correspond to the levels of granularity of the use case model. Integration testing concludes when all system modules are integrated as stipulated in the System Design Document.

What if?

What if we managed to cause a failure during integration testing, sought the cause and rectified it? This would change one or a number of our lower level units (e.g. classes). What do we do now?

Regression Testing

As the name implies, regression testing is going back to test what has already been tested because there is an emergent need to do so. It is recommended that when any module is changed, its contract be re-examined through integration testing and its contents through unit testing.

System Testing

Once the results of integration testing as applied to all system modules become acceptable, it is time for system testing. Why, you might ask? Have we not already tested every module and all of the system's interactions? The answer is that whilst we may have examined every module, we have not done so exhaustively; and when it comes to interactions, we may have examined many of these but certainly not all. The problem of combinatorial explosion will never permit complete testing of any system.

A more subtle and probably more important issue is that of the nature of the examination so far. Unit testing is against the object model; integration testing is against the Design Model and according to some set Test Plan. These models and tests examine the system against a background of how the system is put together, that is, against an interpretation of the requirements and not against the actual requirements of the system. We now need to take that crucial step. This is done in potentially three forms:

· requirements testing (functional testing);
· performance testing;
· acceptance procedures.

Requirements Testing: This is done by the V&V team, directly with respect to the Requirements document and the user's manual. At this stage we must attempt to cause the system to fail to fulfill as many of the stated or implied requirements as possible.

Performance Testing: This is also done by the V&V team, with respect to the quality characteristics of reliability, usability and maintainability. We also specifically test performance under data and transaction loads.

Field Testing: There are two types, alpha testing and beta testing. This is done by the customer, the potential customer or their proxy.