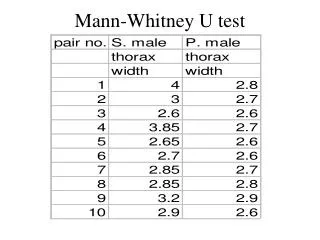

The Mann-Whitney U test


The Mann-Whitney U test • Peter Shaw

Introduction • We meet our first inferential test. • You should not get put off by the messy-looking formulae – it’s usually run on a PC anyway. • The important bit is to understand the philosophy of the test.

Imagine.. • That you have acquired a set of measurements from 2 different sites. • Maybe one is alleged to be polluted, the other clean, and you measure residues in the soil. • Maybe these are questionnaire returns from students identified as M or F. • You want to know whether these 2 sets of measurements genuinely differ. The issue here is that you need to rule out the possibility of the results being random noise.

The formal procedure: • Involves the creation of two competing explanations for the data recorded. • Idea 1: These are pattern-less random data. Any observed patterns are due to chance. This is the null hypothesis H0. • Idea 2: There is a defined pattern in the data. This is the alternative hypothesis H1. • Without the statement of the competing hypotheses, no meaningful test can be run.

Occam’s razor • If competing explanations exist, choose the simpler unless there is good reason to reject it. • Here, you must assume H0 to be true until you can reject it. • In point of fact you can never ABSOLUTELY prove that your observations are non-random. Any pattern could arise in random noise, by chance. Instead you work out how likely it is that a pattern as strong as yours would turn up if H0 were true.

Example • You conduct a questionnaire survey of homes in the Heathrow flight path, and also a control population of homes in south-west London. Responses to the question “How intrusive is plane noise in your daily life” are tabulated. • Noise complaints: 1 = no complaint, 5 = very unhappy.

| Homes near airport | Control site |
| --- | --- |
| 5 | 3 |
| 4 | 2 |
| 4 | 4 |
| 3 | 1 |
| 5 | 2 |
| 4 | 1 |
| 5 | |

Stage 1: Eyeball the data! • These data are ordinal and not normally distributed (allowable scores are 1, 2, 3, 4 or 5), so use non-parametric statistics. • It does look as though people are less happy under the flight path, but recall that we must state our hypotheses H0, H1. • H0: There is no difference in attitudes to plane noise between the two areas - any observed differences are due to chance. • H1: Responses to the question differed between the two areas.

Now we assess how likely it is that this pattern could occur by chance: • This is done by performing a calculation. Don’t worry yet about what the calculation entails. • What matters is that the calculation gives an answer (a test statistic) whose likelihood can be looked up in tables. • Thus by means of this tool - the test statistic - we can work out an estimate of the probability that the observed pattern could occur by chance in random data.

One philosophical hurdle to go: • The test statistic generates a probability - a number from 0 to 1, which is the probability of getting a pattern at least as strong as the one observed if H0 were true. • If p = 0, H0 is certainly false. (Actually this is over-simple, but a good approximation) • If p is large, say p = 0.8, H0 must be accepted (you have no grounds to reject it). • But how about p = 0.1, p = 0.01?

Significance • We have to define a threshold, a boundary, and say that if p is below this threshold H0 is rejected, otherwise H0 is accepted. • This boundary is called the significance level. By convention it is set at p = 0.05 (1:20), but you can choose any other number - as long as you specify it in the write-up of your analyses. • WARNING!! This means that if you analyse 100 sets of random data, the expectation (long-term average) is that 5 will generate a significant test.
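The warning above can be checked with a couple of lines of arithmetic. This is only a sketch, and it assumes the 100 tests are independent and that each one has exactly a 0.05 chance of a false alarm (the variable names are made up for illustration):

```python
# Sketch: how often does pure noise look "significant"?
alpha = 0.05     # significance level used in the slides
n_tests = 100    # number of independent tests run on random data (assumed independent)

# Long-term average number of "significant" results when H0 is true every time
expected_false_positives = alpha * n_tests

# Chance that at least one of the tests comes out "significant" by luck alone
p_at_least_one = 1 - (1 - alpha) ** n_tests

print(f"Expected significant results by chance: {expected_false_positives:.1f}")
print(f"Chance of at least one false alarm:     {p_at_least_one:.3f}")
```

Under those assumptions the first figure is 5, as the slide says, and a false alarm somewhere among the 100 tests is close to certain.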

The procedure: • 1: Decide significance level (p = 0.05). • 2: Set up H0, H1. • 3: Data • Homes near airport: 5, 4, 4, 3, 5, 4, 5 • Control site: 3, 2, 4, 1, 2, 1 • 4: Calculate the test statistic: U = 15.5. • 5: Look up the probability that goes with it: p = 0.03. • 6: Is p above critical level? • Yes: Accept H0. • No: Reject H0.

This particular test: • The Mann-Whitney U test is a non-parametric test which examines whether 2 columns of data could have come from the same population (i.e. “should” be the same). • It generates a test statistic called U (no idea why it’s U). By hand we look U up in tables; PCs give you an exact probability. • It requires 2 sets of data - these need not be paired, nor need they be normally distributed, nor need there be equal numbers in each set.

How to do it • 1: Rank all data into ascending order, then re-code the data set replacing raw data with ranks. • 2: Harmonize ranks where the same value occurs more than once: every tied value gets the average of the ranks the tie occupies (the four 4s sit at ranks #7 to #10, so each becomes 8.5).

| Near airport | Rank | Harmonized rank | Control site | Rank | Harmonized rank |
| --- | --- | --- | --- | --- | --- |
| 5 | #13 | 12 | 3 | #5 | 5.5 |
| 4 | #10 | 8.5 | 2 | #4 | 3.5 |
| 4 | #9 | 8.5 | 4 | #7 | 8.5 |
| 3 | #6 | 5.5 | 1 | #2 | 1.5 |
| 5 | #12 | 12 | 2 | #3 | 3.5 |
| 4 | #8 | 8.5 | 1 | #1 | 1.5 |
| 5 | #11 | 12 | | | |
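The ranking step can be sketched in a few lines of Python. This is an illustration rather than anything from the slides; the function name `midranks` and the variable names are made up here:

```python
def midranks(values):
    """Rank values in ascending order (1 = smallest), averaging ranks across ties."""
    # Indices of the values, sorted by the value they point at
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value is tied with the current one
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        # Mean of the 1-based positions start+1 .. end+1 occupied by the tie
        shared = (start + 1 + end + 1) / 2
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


airport = [5, 4, 4, 3, 5, 4, 5]
control = [3, 2, 4, 1, 2, 1]

# Both columns are ranked together, then split back into their own columns
ranks = midranks(airport + control)
print(ranks[:len(airport)])   # [12.0, 8.5, 8.5, 5.5, 12.0, 8.5, 12.0]
print(ranks[len(airport):])   # [5.5, 3.5, 8.5, 1.5, 3.5, 1.5]
```

The important detail is that the two columns are pooled before ranking; ranking each column separately would throw away exactly the comparison the test is after.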

Once data are ranked: • Add up ranks for each column; call these $R_x$ and $R_y$. • (Optional but a good check: $R_x + R_y = n^2/2 + n/2$, where $n = N_x + N_y$ is the total number of observations, or you have an error.) • Calculate • $U_x = N_x N_y + N_x(N_x+1)/2 - R_x$ • $U_y = N_x N_y + N_y(N_y+1)/2 - R_y$ • Take the SMALLER of these 2 values and look it up in tables. If U is LESS than the critical value, reject H0. • NB This test is unusual in one feature: here low values of the test statistic are significant - this is not true for most other tests.

In this case:

| Near airport | Harmonized rank | Control site | Harmonized rank |
| --- | --- | --- | --- |
| 5 | 12 | 3 | 5.5 |
| 4 | 8.5 | 2 | 3.5 |
| 4 | 8.5 | 4 | 8.5 |
| 3 | 5.5 | 1 | 1.5 |
| 5 | 12 | 2 | 3.5 |
| 4 | 8.5 | 1 | 1.5 |
| 5 | 12 | | |
| Sum | $R_x = 67$ | Sum | $R_y = 24$ |

• Check: $R_x + R_y = 67 + 24 = 91$, and $13 \times 13/2 + 13/2 = 91$. CHECK. • $U_x = 6 \times 7 + 7 \times 8/2 - 67 = 3$ • $U_y = 6 \times 7 + 6 \times 7/2 - 24 = 39$ • Lowest U value is 3. Critical value of U (7,6) = 4 at p = 0.01. Calculated U is < tabulated U so reject H0. At p = 0.01 these two sets of data differ.
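The hand calculation above can be reproduced with a short function. This is a sketch using the rank-sum formulas from the slides; the name `mann_whitney_u` and its return layout are choices made for this example, not a library API:

```python
def mann_whitney_u(x, y):
    """Return (Ux, Uy, Rx, Ry) from the rank-sum formulas in the slides."""
    pooled = list(x) + list(y)

    def rank(v):
        # Tie-averaged rank: values below v, plus the middle of the tied block
        smaller = sum(1 for w in pooled if w < v)
        equal = sum(1 for w in pooled if w == v)
        return smaller + (equal + 1) / 2

    rx = sum(rank(v) for v in x)
    ry = sum(rank(v) for v in y)
    nx, ny = len(x), len(y)
    n = nx + ny

    # The optional check from the slides: the rank sums must total n(n+1)/2
    assert rx + ry == n * (n + 1) / 2, "rank sums do not add up"

    ux = nx * ny + nx * (nx + 1) / 2 - rx
    uy = nx * ny + ny * (ny + 1) / 2 - ry
    return ux, uy, rx, ry


airport = [5, 4, 4, 3, 5, 4, 5]
control = [3, 2, 4, 1, 2, 1]

ux, uy, rx, ry = mann_whitney_u(airport, control)
print(rx, ry)        # 67.0 24.0
print(ux, uy)        # 3.0 39.0
print(min(ux, uy))   # 3.0, the value to look up in tables
```

A second useful check is that the two U values always add up to NxNy (here 3 + 39 = 42 = 6 × 7).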

Tails.. • Generally use 2-tailed tests. • 2-tailed test: These populations DIFFER. Both the upper tail and the lower tail of the distribution count as evidence against H0. • 1-tailed test: Population X is greater than Y (or less than Y). Only one tail of the distribution counts.
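With only 13 observations, the difference between the two kinds of test can be shown by brute force: try every possible way of dealing the 13 ranks into a column of 7 and a column of 6, and count how often U comes out at least as extreme as the observed value. This is a sketch of an exact permutation approach, not the lookup-table method from the slides, and printed tables (which assume no tied values) may give slightly different figures:

```python
from itertools import combinations

airport = [5, 4, 4, 3, 5, 4, 5]   # X
control = [3, 2, 4, 1, 2, 1]      # Y
pooled = airport + control
nx, ny = len(airport), len(control)


def midrank(v):
    # Tie-averaged rank of value v within the pooled data
    smaller = sum(1 for w in pooled if w < v)
    equal = sum(1 for w in pooled if w == v)
    return smaller + (equal + 1) / 2


ranks = [midrank(v) for v in pooled]


def ux_from(x_indices):
    # Ux for a given choice of which pooled observations are labelled X
    rx = sum(ranks[i] for i in x_indices)
    return nx * ny + nx * (nx + 1) / 2 - rx


observed_ux = ux_from(range(nx))
observed_min = min(observed_ux, nx * ny - observed_ux)

total = one_tailed = two_tailed = 0
for x_indices in combinations(range(nx + ny), nx):
    ux = ux_from(x_indices)
    total += 1
    if ux <= observed_ux:                       # X ranked at least as high as observed
        one_tailed += 1
    if min(ux, nx * ny - ux) <= observed_min:   # at least as extreme in either direction
        two_tailed += 1

print(f"1-tailed (X greater than Y): {one_tailed}/{total} = {one_tailed / total:.4f}")
print(f"2-tailed (X differs from Y): {two_tailed}/{total} = {two_tailed / total:.4f}")
```

For the airport data this gives 9/1716 for the 1-tailed test and 13/1716 for the 2-tailed test, so the 2-tailed probability is the larger (more cautious) of the two, and both fall below 0.01.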

Kruskal-Wallis: The U test’s big cousin • When we have 2 groups to compare (M/F, site 1/site 2, etc.) the U test is correct, applicable and safe. Example: females vs males - do males differ from females? • How to handle cases with 3 or more groups? Example: site 1, site 2, site 3 - do results differ between these sites? • The simple answer is to run the Kruskal-Wallis test. This is run on a PC, but behaves very much like the M-W U. It will give one significance value, which simply tells you whether at least one group differs from at least one other.
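The slides leave the Kruskal-Wallis calculation to the PC. For the curious, here is a sketch of the standard H statistic with the usual correction for ties. The three "sites" below are invented numbers purely for illustration, and the probability uses the chi-square approximation, which is rough for samples this small. The shortcut `exp(-H/2)` only works for exactly three groups (2 degrees of freedom):

```python
import math
from collections import Counter


def kruskal_wallis_h(*groups):
    """Kruskal-Wallis H statistic with tie correction (standard textbook formula)."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)

    def midrank(v):
        # Tie-averaged rank of v within the pooled data
        smaller = sum(1 for w in pooled if w < v)
        equal = sum(1 for w in pooled if w == v)
        return smaller + (equal + 1) / 2

    # Sum over groups of (rank sum squared / group size)
    term = sum(sum(midrank(v) for v in g) ** 2 / len(g) for g in groups)
    h = 12 / (n * (n + 1)) * term - 3 * (n + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n), t = size of each tied block
    # (undefined if every value is identical)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))


# Hypothetical data for three sites (NOT from the slides)
site1 = [1, 2, 3]
site2 = [4, 5, 6]
site3 = [7, 8, 9]

h = kruskal_wallis_h(site1, site2, site3)
p = math.exp(-h / 2)   # chi-square tail probability, valid for 3 groups only

print(f"H = {h:.2f}, approximate p = {p:.3f}")
```

A single small p here says only that the sites are not all alike; it does not say which site is the odd one out.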

Your coursework: • I will give each of you a sheet with data collected from 3 sites. (Don’t try copying - each one is different and I know who gets which dataset!) • I want you to show me your data processing skills as follows: • 1: Produce a boxplot of these data, showing how values differ between the categories. • 2: Run 3 separate Mann-Whitney U tests on them, comparing 1-2, 1-3 and 2-3. Only call the result significant if the p value is < 0.01. • 3: Run a Kruskal-Wallis ANOVA on the three groups combined, and comment on your results.