RIPPER Fast Effective Rule Induction

RIPPER: Fast Effective Rule Induction • Machine Learning 2003 • Merlin Holzapfel & Martin Schmidt • Mholzapf@uos.de, Martisch@uos.de

Rule Sets - advantages • easy to understand • usually perform better than decision tree learners • representable in first-order logic, hence easy to implement in Prolog • prior knowledge can be added

Rule Sets - disadvantages • scale poorly with training set size • problems with noisy data, and noise is likely in real-world data • goal: develop a rule learner that is efficient on noisy data and competitive with C4.5 / C4.5rules

Problem with Overfitting • an overfitted concept also fits the noisy cases • an underfitted concept is too general • solution: pruning • reduced error pruning (REP) • post-pruning • pre-pruning

Post-Pruning (C4.5) • overfit & simplify • construct a tree that overfits • convert the tree to rules • prune every rule separately • sort the rules according to accuracy • consider this order when classifying • bottom-up

Pre-Pruning • some examples are ignored during concept generation • the final concept does not classify all training data correctly • can be implemented in the form of stopping criteria

Reduced Error Pruning • separate and conquer • split the data into a training set and a validation set • construct an overfitting tree • repeat until pruning reduces accuracy: • evaluate the impact of pruning each rule on the validation set • remove the rule whose removal improves accuracy most

Time Complexity • REP has a time complexity of O(n⁴) • the initial overfitting phase alone has a complexity of O(n²) • alternative concept Grow: • faster in benchmarks • time complexity still O(n⁴) with noisy data

Incremental Reduced Error Pruning - IREP • by Fürnkranz & Widmer (1994) • competitive error rates • faster than REP and Grow

How IREP Works • iterative application of REP • random split of the data: a bad split has a negative influence (but not as bad as with REP) • each rule is pruned immediately after it is grown (top-down approach), so no overfitted rule set is built first

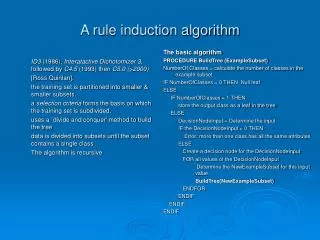

Cohen's IREP Implementation • build rules until a new rule results in too large an error rate • divide the data (randomly) into a growing set (2/3) and a pruning set (1/3) • grow a rule from the growing set • immediately prune the rule: • delete a final sequence of conditions • choose the deletion that maximizes the function v, until no deletion improves the value of v • add the pruned rule to the rule set • delete every example covered by the rule (positive and negative, p/n)
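The split and the pruning step above can be sketched as follows. This is a minimal illustration, not Cohen's code: it assumes that v is the IREP metric given in Cohen (1995), v = (p + (N - n)) / (P + N), where P and N are the numbers of positive and negative pruning examples and p and n are the numbers of those covered by the rule. The names `split_grow_prune`, `rule_value` and `prune_rule`, the representation of a rule as a list of predicate functions, and the choices to keep at least one condition and to break ties in favor of the longer rule are all assumptions made for the example.

```python
import random
from typing import Any, Callable, Dict, List, Sequence, Tuple

Instance = Dict[str, Any]
Condition = Callable[[Instance], bool]  # hypothetical representation of one test


def split_grow_prune(
    examples: Sequence[Instance], rng: random.Random
) -> Tuple[List[Instance], List[Instance]]:
    """Randomly split examples into a growing set (2/3) and a pruning set (1/3)."""
    shuffled = list(examples)
    rng.shuffle(shuffled)
    cut = (2 * len(shuffled)) // 3
    return shuffled[:cut], shuffled[cut:]


def covers(conditions: Sequence[Condition], instance: Instance) -> bool:
    """A rule covers an instance if every one of its conditions holds."""
    return all(cond(instance) for cond in conditions)


def rule_value(
    conditions: Sequence[Condition],
    prune_pos: Sequence[Instance],
    prune_neg: Sequence[Instance],
) -> float:
    """Assumed IREP metric v = (p + (N - n)) / (P + N) on the pruning set.

    Assumes the pruning set is not empty (P + N > 0).
    """
    P, N = len(prune_pos), len(prune_neg)
    p = sum(covers(conditions, x) for x in prune_pos)
    n = sum(covers(conditions, x) for x in prune_neg)
    return (p + (N - n)) / (P + N)


def prune_rule(
    conditions: Sequence[Condition],
    prune_pos: Sequence[Instance],
    prune_neg: Sequence[Instance],
) -> List[Condition]:
    """Delete the final sequence of conditions that maximizes v.

    Shorter prefixes replace the current best only if they strictly
    improve v, so a deletion is made only when it helps.
    """
    best = list(conditions)
    best_value = rule_value(best, prune_pos, prune_neg)
    for length in range(len(conditions) - 1, 0, -1):
        candidate = list(conditions[:length])
        value = rule_value(candidate, prune_pos, prune_neg)
        if value > best_value:
            best, best_value = candidate, value
    return best
```

Because every candidate is a prefix of the grown rule, taking the best prefix in one pass gives the same result as repeatedly deleting the best final sequence until v stops improving.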

IREP and Multiple Classes • order the classes by increasing prevalence (C1, ..., Ck) • find a rule set that separates C1 from the other classes: IREP(PosData=C1, NegData=C2,...,Ck) • remove all instances covered by the rule set • find a rule set that separates C2 from C3, ..., Ck, and so on • Ck remains as the default class
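The class ordering can be sketched as below. The function names and the alphabetical tie-breaking between equally frequent classes are assumptions for the example. The actual two-class learner is left out, so the code only produces the sequence of (positive class, negative classes) problems that would be handed to IREP.

```python
from collections import Counter
from typing import Hashable, List, Sequence, Tuple


def order_classes(labels: Sequence[Hashable]) -> List[Hashable]:
    """Order classes by increasing prevalence (least frequent first)."""
    counts = Counter(labels)
    # Ties are broken by class name only to make the result deterministic.
    return sorted(counts, key=lambda c: (counts[c], str(c)))


def two_class_problems(
    labels: Sequence[Hashable],
) -> Tuple[List[Tuple[Hashable, List[Hashable]]], Hashable]:
    """Return the list of (positive class, negative classes) problems
    and the default class, which is the most prevalent one."""
    ordered = order_classes(labels)
    problems = [
        (positive, ordered[i + 1:]) for i, positive in enumerate(ordered[:-1])
    ]
    return problems, ordered[-1]


if __name__ == "__main__":
    labels = ["a"] * 5 + ["b"] * 2 + ["c"] * 9
    problems, default = two_class_problems(labels)
    for positive, negatives in problems:
        print(f"separate {positive} from {negatives}")
    print(f"default class: {default}")
```

Between two consecutive problems, the instances covered by the rule set just learned would be removed, as described on the slide.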

IREP and Missing Attributes • handling missing attributes: • every test involving attribute A fails for an instance whose value of A is missing

Differences Cohen <> Original • pruning: final sequence of conditions <> single final condition • stopping condition: error rate above 50% <> accuracy(rule) < accuracy(empty rule) • application: missing attributes, numerical variables, multiple classes <> two-class problems

Time Complexity • IREP: O(m log² m), where m is the number of examples (assuming a fixed rate of classification noise)

Generalization Performance • IREP performs worse than C4.5rules on benchmark problems • won-lost-tied record: 11-23-3 • error ratio relative to C4.5rules: • 1.13 excluding mushroom • 1.52 including mushroom

Improving IREP • three modifications: • alternative metric in the pruning phase • new stopping heuristic for adding rules • post-pruning of the whole rule set (non-incremental pruning)

The Rule-Value Metric • the old metric is not intuitive: • R1: p1 = 2000, n1 = 1000 • R2: p2 = 1000, n2 = 1 • the metric prefers R1 (for fixed P, N) • this leads to occasional failure to converge • new metric (IREP*)
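The example can be checked numerically. The slide text does not reproduce the formulas, so the sketch below assumes the two metrics as given in Cohen (1995): the IREP metric v = (p + (N - n)) / (P + N) and the IREP* metric v* = (p - n) / (p + n). The pruning-set sizes P = N = 3000 and the function names are made up for the illustration; any fixed P >= 2000 and N >= 1000 gives the same ordering.

```python
def v_irep(p: int, n: int, P: int, N: int) -> float:
    """Assumed IREP metric: fraction of pruning examples handled correctly."""
    return (p + (N - n)) / (P + N)


def v_irep_star(p: int, n: int) -> float:
    """Assumed IREP* metric; depends only on the examples the rule covers."""
    if p + n == 0:  # rule covers nothing: treated as 0.0 here (an assumption)
        return 0.0
    return (p - n) / (p + n)


if __name__ == "__main__":
    P, N = 3000, 3000            # hypothetical pruning-set sizes
    r1 = (2000, 1000)            # R1: p1 = 2000, n1 = 1000
    r2 = (1000, 1)               # R2: p2 = 1000, n2 = 1
    print("old metric:", v_irep(*r1, P, N), v_irep(*r2, P, N))
    print("new metric:", v_irep_star(*r1), v_irep_star(*r2))
```

Under the old metric R1 scores 4000/6000 and R2 scores 3999/6000, so R1 is preferred even though a third of the examples it covers are negative. Under the new metric R1 scores about 0.33 and R2 about 0.998, which matches the intuition that R2 is the better rule.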

Stopping Condition • the 50% heuristic often stops too soon with moderate-sized example sets • it is sensitive to the 'small disjunct problem' • solution: MDL (Minimum Description Length) heuristic • after each rule is added, compute the total description length of the rule set and the misclassifications (DL = C + E) • if DL is more than d bits larger than the smallest length obtained so far, stop (min(DL) + d < DLcurrent) • d = 64 in Cohen's implementation
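The stopping test itself is a single comparison. The sketch below assumes that the description length after each added rule has already been computed (how C and E are encoded is not covered here); the function name `should_stop` and the list-based history are choices made for the example.

```python
from typing import Sequence


def should_stop(dl_history: Sequence[float], d: float = 64.0) -> bool:
    """Return True if the current description length exceeds the smallest
    one seen so far by more than d bits (min(DL) + d < DL_current).

    dl_history holds the total description length after each added rule,
    most recent last, and must not be empty.
    """
    current = dl_history[-1]
    smallest = min(dl_history)
    return smallest + d < current


if __name__ == "__main__":
    history = []
    for dl in [500.0, 450.0, 480.0, 515.0]:  # made-up description lengths
        history.append(dl)
        print(dl, "stop" if should_stop(history) else "continue")
```

With the made-up values above, learning continues at 480 bits (only 30 bits above the minimum of 450) and stops at 515 bits (65 bits above it).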

IREP* • IREP* is IREP improved by the new rule-value metric and the new stopping condition • won-lost-tied record: 28-8-1 against IREP • 16-21-0 against C4.5rules • error ratio 1.06 (IREP: 1.13) excluding mushroom, 1.04 (IREP: 1.52) including mushroom

Rule Optimization • post-prunes the rules produced by IREP* • the rules are considered in turn • for each rule Ri, two alternatives are constructed: • Ri': a new rule grown from scratch • Ri'': a rule based on Ri • the final rule is chosen among Ri, Ri' and Ri'' according to MDL

RIPPER • RIPPER stands for Repeated Incremental Pruning to Produce Error Reduction • step 1: IREP* is used to obtain a rule set • step 2: rule optimization takes place • step 3: IREP* is used to cover the remaining positive examples

RIPPERk • apply steps 2 and 3 of RIPPER (rule optimization and covering the remaining positive examples) k times

RIPPER Performance • 28-7-2 against IREP*

Error Rates • RIPPER is clearly competitive

Efficiency of RIPPERk • the modifications do not change the complexity

Reasons for Efficiency • find a model with IREP* and then improve it • efficient first model of the right size • optimization takes linear time • C4.5 has an expensive optimization process • its initial model is too large • RIPPER is especially efficient on large noisy datasets

Conclusions • IREP is an efficient rule learner for large noisy datasets but performs worse than C4.5 • IREP is improved to IREP* • IREP* is improved to RIPPER • RIPPER iterated k times is RIPPERk • RIPPERk is more efficient and performs better than C4.5

References • William W. Cohen [1995]: Fast Effective Rule Induction • J. Fürnkranz & G. Widmer [1994]: Incremental Reduced Error Pruning • William W. Cohen [1993]: Efficient Pruning Methods