Game Playing


Game Playing Chapter 5

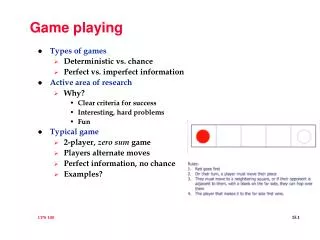

Game playing • Search applied to a problem against an adversary • some actions are not under the control of the problem-solver • there is an opponent (hostile agent) • Since it is a search problem, we must specify states & operations/actions • initial state = current board; operators = legal moves; goal state = game over; utility function = value for the outcome of the game • usually, (board) games have well-defined rules & the entire state is accessible
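The four ingredients listed above (initial state, operators, goal test, utility) can be made concrete for tic-tac-toe. This is a minimal sketch, not taken from the slides: the board encoding (a 9-tuple of "X", "O", or " ") and every function name are assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple

# Assumed encoding: 9 cells in row-major order, each "X", "O", or " ".
Board = Tuple[str, ...]

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),   # rows
             (0, 3, 6), (1, 4, 7), (2, 5, 8),   # columns
             (0, 4, 8), (2, 4, 6)]              # diagonals


def initial_state() -> Board:
    """Initial state = the current (here: empty) board."""
    return (" ",) * 9


def to_move(board: Board) -> str:
    """X moves first, so X is to move whenever the piece counts are equal."""
    return "X" if board.count("X") == board.count("O") else "O"


def legal_moves(board: Board) -> List[int]:
    """Operators = legal moves: the indices of the empty cells."""
    return [i for i, cell in enumerate(board) if cell == " "]


def apply_move(board: Board, move: int) -> Board:
    """Return the board that results when the player to move plays `move`."""
    return board[:move] + (to_move(board),) + board[move + 1:]


def winner(board: Board) -> Optional[str]:
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def is_terminal(board: Board) -> bool:
    """Goal state = game over: someone has won or the board is full."""
    return winner(board) is not None or " " not in board


def utility(board: Board) -> int:
    """Value of the outcome from X's point of view: +1 win, -1 loss, 0 draw."""
    w = winner(board)
    return 1 if w == "X" else -1 if w == "O" else 0
```

Because the entire state is accessible, each function needs only the board itself; nothing is hidden from either player.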

Basic idea • Consider all possible moves for yourself • Consider all possible moves for your opponent • Continue this process until a point is reached where we know the outcome of the game • From this point, propagate the best move back • choose best move for yourself at every turn • assume your opponent will make the optimal move on their turn
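For a game small enough to search to the end, the procedure above can be written directly. The sketch below uses a simple take-away game that is not in the slides (a pile of stones, each player removes 1 to 3, whoever takes the last stone wins); the game and the names `game_value` and `best_move` are assumptions picked only because the whole tree fits in memory.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def game_value(stones: int, my_turn: bool) -> int:
    """Return +1 if I win with optimal play from this position, -1 if I lose."""
    if stones == 0:
        # The outcome is known: whoever moved last took the final stone and won.
        return -1 if my_turn else 1
    outcomes = [game_value(stones - take, not my_turn)
                for take in (1, 2, 3) if take <= stones]
    # I choose my best outcome; I assume my opponent chooses my worst one.
    return max(outcomes) if my_turn else min(outcomes)


def best_move(stones: int) -> int:
    """Propagate the values back and pick the move with the best outcome."""
    moves = [take for take in (1, 2, 3) if take <= stones]
    return max(moves, key=lambda take: game_value(stones - take, False))


print(game_value(5, True))  # 1: the player to move can force a win from 5
print(best_move(5))         # 1: take one stone, leaving the opponent with 4
```

The cache is only a convenience so repeated positions are not re-searched; the max/min propagation is the part that matches the slide.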

Examples • Tic-tac-toe • Connect Four • Checkers

Problem • For interesting games, it is simply not computationally possible to look at all possible moves • in chess, there are on average 35 choices per turn • on average, there are about 50 moves per player • thus, the number of possibilities to consider is roughly 35^100
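To get a feel for the size of that number, the arithmetic can be checked in a few lines. The variable names are illustrative; the figures (35 choices, 50 moves per player) are the ones quoted above.

```python
import math

branching_factor = 35   # average number of choices per turn
plies = 2 * 50          # about 50 moves for each of the two players

positions = branching_factor ** plies
exponent = math.floor(math.log10(positions))

print(f"35^100 is about 10^{exponent}")  # 35^100 is about 10^154
```

Even at a billion positions per second, a count on the order of 10^154 is far out of reach, which is why the search has to be cut off early.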

Solution • Given that we can only look ahead k moves and that we can’t see all the way to the end of the game, we need a heuristic function that substitutes for looking to the end of the game • this is usually called a static board evaluator (SBE) • a perfect static board evaluator would tell us for which moves we would win, lose or draw • possible for tic-tac-toe, but not for chess

Creating an SBE approximation • Typically, made up of rules of thumb • for example, in most chess books each piece is given a value • pawn = 1; rook = 5; queen = 9; etc. • further, there are other important characteristics of a position • e.g., center control • we put all of these factors into one function, potentially weighting each aspect differently, to determine the value of a position • board_value = w1 * material_balance + w2 * center_control + … [the coefficients w1, w2, … might change as the game goes on]
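A weighted-sum evaluator of this kind might look like the sketch below. Several things here are assumptions, not from the slides: the board is a dict from square name to piece letter (uppercase for my pieces, lowercase for the opponent's), knight and bishop are given the conventional value 3, center control is crudely approximated by who occupies the four central squares, and the default weights are arbitrary.

```python
from typing import Dict

# pawn = 1, rook = 5, queen = 9 come from the text; n = 3 and b = 3 are assumed.
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9, "k": 0}
CENTER_SQUARES = ("d4", "d5", "e4", "e5")


def material_balance(board: Dict[str, str]) -> int:
    """Sum of my piece values minus the opponent's piece values."""
    total = 0
    for piece in board.values():
        value = PIECE_VALUES[piece.lower()]
        total += value if piece.isupper() else -value
    return total


def center_control(board: Dict[str, str]) -> int:
    """Crude proxy: central squares I occupy minus those the opponent occupies."""
    total = 0
    for square in CENTER_SQUARES:
        piece = board.get(square)
        if piece is not None:
            total += 1 if piece.isupper() else -1
    return total


def board_value(board: Dict[str, str],
                w_material: float = 1.0,
                w_center: float = 0.5) -> float:
    """Weighted sum of features; the weights are hypothetical tuning knobs."""
    return (w_material * material_balance(board)
            + w_center * center_control(board))


position = {"e4": "P", "d1": "Q", "a8": "r", "d5": "p"}
print(board_value(position))  # 4.0: material 1 + 9 - 5 - 1, center +1 - 1
```

Passing the weights as parameters is one way to let the coefficients change as the game goes on, for example by using different values in the opening and the endgame.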

Compromise • If we could search to the end of the game, then choosing a move would be relatively easy • just use minimax • Or, if we had a perfect scoring function (SBE), we wouldn’t have to do any search (just choose best move from current state -- one step look ahead) • Since neither is feasible for interesting games, we combine the two ideas

Basic idea • Build the game tree as deep as possible given the time constraints • apply an approximate SBE to the leaves • propagate scores back up to the root & use this information to choose a move • example

Score percolation: MINIMAX • When it is my turn, I will choose the move that maximizes the (approximate) SBE score • When it is my opponent’s turn, they will choose the move that minimizes the SBE • because we are dealing with competitive games, what is good for me is bad for my opponent & what is bad for me is good for my opponent • assume the opponent plays optimally [worst-case assumption]

MINIMAX algorithm • Start at the leaves of the tree and apply the SBE • If it is my turn, choose the maximum SBE score for each sub-tree • If it is my opponent’s turn, choose the minimum score for each sub-tree • The scores on the leaves are how good the board appears from that point • Example
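As a small worked example of this percolation, the sketch below runs minimax over an explicit tree. The representation is an assumption made for brevity: a leaf is a number (an SBE score already computed for that board) and an interior node is a list of its children; the particular scores are made up.

```python
from typing import List, Union

# A leaf is an SBE score; an interior node is a list of child sub-trees.
Tree = Union[float, List["Tree"]]


def minimax(node: Tree, maximizing: bool) -> float:
    """Percolate leaf scores up: max on my turn, min on my opponent's turn."""
    if not isinstance(node, list):
        return node  # leaf: how good the board appears from this point
    scores = [minimax(child, not maximizing) for child in node]
    return max(scores) if maximizing else min(scores)


# Two plies: I choose among three moves, then my opponent replies.
tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]

move_scores = [minimax(child, maximizing=False) for child in tree]
print(move_scores)                      # [3, 2, 2]
print(minimax(tree, maximizing=True))   # 3, so the first move is chosen
```

The opponent would hold the three moves to 3, 2 and 2 respectively, so the first move is the best available under the worst-case assumption.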

Alpha-beta pruning • While minimax is an effective algorithm, it can be inefficient • one reason for this is that it does unnecessary work • it evaluates sub-trees where the value of the sub-tree is irrelevant • alpha-beta pruning gets the same answer as minimax but it eliminates some useless work • example • simply think: would the result matter if this node’s score were +infinity or -infinity?

Cases of alpha-beta pruning • Min level (alpha-cutoff) • can stop expanding a min node’s sub-tree as soon as a value less than or equal to the maximizer’s best-so-far (alpha) is found • this is because you (max) will take the better-scoring route you have already found [example] • Max level (beta-cutoff) • can stop expanding a max node’s sub-tree as soon as a value greater than or equal to the minimizer’s best-so-far (beta) is found • this is because the opponent will force you to take the lower-scoring route [example]

Alpha-beta algorithm • Maximizer’s moves have an alpha value • it is the current lower bound on the node’s score (i.e., max can do at least this well) • if alpha >= beta of parent, then stop since the opponent won’t allow us to take this route • Minimizer’s moves have a beta value • it is the current upper bound on the node’s score (i.e., min can hold the score to at most this) • if beta <= alpha of parent, then stop since we (max) won’t choose this
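The two cutoff rules can be sketched over an explicit tree as follows. This uses the common formulation in which both bounds are passed down the recursion, so "beta of parent" is simply the `beta` argument a max node receives (and likewise for alpha); the nested-list tree, the made-up scores, and the `visited` list used to record which leaves get evaluated are assumptions for illustration.

```python
import math
from typing import List, Optional, Union

Tree = Union[float, List["Tree"]]


def alphabeta(node: Tree, maximizing: bool,
              alpha: float = -math.inf, beta: float = math.inf,
              visited: Optional[List[float]] = None) -> float:
    """Minimax value of `node`, skipping sub-trees that cannot change it."""
    if not isinstance(node, list):
        if visited is not None:
            visited.append(node)  # record each leaf that is actually scored
        return node
    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, False, alpha, beta, visited))
            alpha = max(alpha, best)   # max can do at least this well
            if alpha >= beta:          # beta-cutoff: min won't allow this route
                break
        return best
    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, True, alpha, beta, visited))
        beta = min(beta, best)         # min can hold the score to at most this
        if beta <= alpha:              # alpha-cutoff: max won't choose this
            break
    return best


tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]
seen: List[float] = []
print(alphabeta(tree, True, visited=seen))  # 3, the same answer as minimax
print(seen)                                 # [3, 12, 8, 2, 14, 5, 2]
```

In the second sub-tree the first leaf (2) is already no better than the 3 found earlier, so the leaves 4 and 6 are never evaluated.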

Use • We project ahead k moves, but we then make only one move (the best one) • After our opponent moves, we project ahead k moves again, so we are possibly repeating some work • However, since most of the work is at the leaves anyway, the amount of work we redo isn’t significant (think of iterative deepening)

Alpha-beta performance • Best-case: can search to twice the depth during a fixed amount of time [O(b^(d/2)) vs. O(b^d)] • Worst-case: no savings • alpha-beta pruning & minimax always return the same answer • the difference is the amount of work they do • effectiveness depends on the order in which successors are examined • want to examine the best first • Graph of savings
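The effect of successor ordering can be seen on a toy tree by counting how many leaves get evaluated. This is a sketch under assumptions: the nested-list tree format, the made-up scores, and the helper name `alphabeta_count` are not from the slides, and a tree this small only hints at the asymptotic savings.

```python
import math
from typing import List, Tuple, Union

Tree = Union[float, List["Tree"]]


def alphabeta_count(node: Tree, maximizing: bool,
                    alpha: float = -math.inf,
                    beta: float = math.inf) -> Tuple[float, int]:
    """Return (score, number of leaves evaluated) under alpha-beta pruning."""
    if not isinstance(node, list):
        return node, 1
    best = -math.inf if maximizing else math.inf
    leaves = 0
    for child in node:
        score, n = alphabeta_count(child, not maximizing, alpha, beta)
        leaves += n
        if maximizing:
            best = max(best, score)
            alpha = max(alpha, best)
        else:
            best = min(best, score)
            beta = min(beta, best)
        if alpha >= beta:  # remaining siblings cannot affect the result
            break
    return best, leaves


# The same nine leaf scores, examined best-first and worst-first.
best_first = [[3, 8, 12], [2, 4, 6], [2, 5, 14]]
worst_first = [[6, 4, 2], [14, 5, 2], [12, 8, 3]]

print(alphabeta_count(best_first, True))   # (3, 5)
print(alphabeta_count(worst_first, True))  # (3, 9)
```

Both orderings return the same score; only the amount of work differs. With the best move examined first, five of the nine leaves suffice, while the worst ordering evaluates all nine, which is what plain minimax would do.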

Refinements • Waiting for quiescence • avoids the horizon effect • disaster is lurking just beyond our search depth • on the nth move (the maximum depth I can see) I take your rook, but on the (n+1)th move (a depth to which I don’t look) you checkmate me • solution • when predicted values are changing frequently, search deeper in that part of the tree (quiescence search)
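The rook-then-checkmate scenario can be reproduced with a deliberately simplified sketch. Everything here is an assumption made for illustration: positions are `Node` objects carrying a static score and a hand-set `quiet` flag, and the search simply refuses to stop at the depth limit while a position is not quiet. Real quiescence searches decide quietness from the position itself (for example, pending captures) and usually extend only along such moves.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    static_score: float              # SBE value of this position
    quiet: bool = True               # False if values are about to swing
    children: List["Node"] = field(default_factory=list)


def search(node: Node, depth: int, maximizing: bool) -> float:
    """Depth-limited minimax that keeps going while a position is not quiet."""
    if not node.children:
        return node.static_score
    if depth <= 0 and node.quiet:
        return node.static_score     # safe to trust the SBE here
    scores = [search(child, depth - 1, not maximizing)
              for child in node.children]
    return max(scores) if maximizing else min(scores)


def make_tree(capture_is_quiet: bool) -> Node:
    """Root with two moves: grab a rook (then get mated) or play safe."""
    take_rook = Node(5, quiet=capture_is_quiet, children=[Node(-1000)])
    safe_move = Node(1, children=[Node(0.5)])
    return Node(0, children=[take_rook, safe_move])


# Treating every position as quiet: the rook capture looks best at depth 1.
print(search(make_tree(capture_is_quiet=True), 1, True))   # 5
# Flagging the capture as not quiet: the search sees the checkmate behind it.
print(search(make_tree(capture_is_quiet=False), 1, True))  # 1
```

With the same nominal depth, the second search looks one ply further only where the position is unstable, and prefers the safe move.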

Secondary search • Find the best move by looking to depth d • Look k steps beyond this best move to see if it still looks good • No? Look further at second best move, etc. • in general, do a deeper search at parts of the tree that look “interesting” • Picture

Book moves • Build a database of opening moves, end games, tough examples, etc. • If the current state is in the database, use the knowledge in the database to determine the quality of a state • If it’s not in the database, fall back to alpha-beta search

AI & games • Initially felt to be great AI testbed • It turned out, however, that brute-force search is better than a lot of knowledge engineering • scaling up by dumbing down • perhaps then intelligence doesn’t have to be human-like • more high-speed hardware issues than AI issues • however, still good test-beds for learning