ptp



ptp Soo-Bae Kim 4/09/02 isdl@snu

What is peer to peer? • Communication without a central server • Advantages • No single point of failure • Easy data sharing • Disadvantages • No service quality guarantee • Increased network traffic

Outline • What is Chord? • Chord protocol • Simulation and experimental results • Conclusion

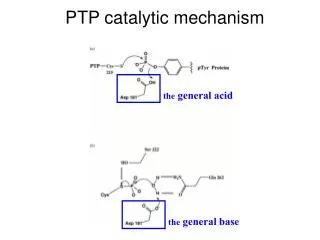

What is Chord? • Provides fast distributed computation of a hash function that maps keys to the nodes responsible for them. • Uses a variant of consistent hashing • Improves scalability: each node needs routing information about only a few other nodes. • When new nodes join the system, only a small fraction of keys move to a different location • Simplicity, provable correctness and provable performance

Related work • DNS (Domain Name System) • Maps host names to IP addresses • Freenet peer-to-peer storage system • Like Chord, it is decentralized and adapts automatically when hosts join and leave. • Provides a degree of anonymity • Ohaha system

Related work • Globe system • Information about an object is stored in a particular leaf domain, and pointer caches provide search shortcuts. • Distributed data location protocol by Plaxton et al. • Queries never travel further in network distance than the node where the key is stored • OceanStore

Related work • CAN (content-addressable network) • Uses a d-dimensional Cartesian space to implement a distributed hash table that maps keys onto values.

Chord’s merits • Load balance • Decentralization • Scalability • Availability • Flexible naming

Examples of Chord applications • Cooperative mirroring • Time-shared storage • Distributed indexes • Large-scale combinatorial search

Base Chord protocol • Specifies how to find the location of keys. • Uses consistent hashing, which has several good properties. • When a new node joins an N-node system • only an O(1/N) fraction of keys are moved • Chord requires O(log^2 N) messages to update routing information. • In an N-node system, each node maintains information about only O(log N) other nodes, and a lookup requires O(log N) messages

Consistent hashing • The consistent hash function assigns each node and key an m-bit identifier using a base hash function • Key k is assigned to the first node (the successor node) whose identifier is equal to or follows k in the identifier space. • When a node n joins, certain keys previously assigned to n’s successor become assigned to n.
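
The assignment rule on this slide can be sketched in a few lines of Python. This is only an illustration: the choice of SHA-1 as the base hash, the identifier width `M`, and the helper names `ident`, `successor` and `assign` are assumptions, not taken from the slides.

```python
import hashlib
from bisect import bisect_left

M = 16  # identifier width in bits (assumed; kept small for readability)

def ident(name, m=M):
    """Map a node name or key to an m-bit identifier (SHA-1 is an assumed base hash)."""
    digest = hashlib.sha1(name.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % (2 ** m)

def successor(node_ids, k):
    """First node whose identifier is equal to or follows k on the ring."""
    ring = sorted(node_ids)
    i = bisect_left(ring, k)
    return ring[i % len(ring)]  # wrap around past the largest identifier

def assign(node_ids, key_ids):
    """Map every key identifier to the node responsible for it."""
    return {k: successor(node_ids, k) for k in key_ids}

# Small ring with 3-bit identifiers: nodes 0, 1, 3 and keys 1, 2, 6.
before = assign([0, 1, 3], [1, 2, 6])
# Node 6 joins: only the key that node 6 now covers changes owner.
after = assign([0, 1, 3, 6], [1, 2, 6])
moved = [k for k in before if before[k] != after[k]]
```

In this toy ring, key 6 is the only key that moves when node 6 joins; it was previously held by node 0, which is node 6's successor.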

Scalable key location • Resolving lookups with successor pointers alone is inefficient, because it may require traversing all N nodes to find the appropriate mapping, so additional routing information is needed.
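
One way to picture the additional routing information is a per-node finger table, where entry i of node n points at successor(n + 2^i). The sketch below builds such tables for a small static ring and routes a lookup through them. The ring contents, the identifier width `M`, and all function names are illustrative assumptions; joins, failures and stabilization are deliberately left out.

```python
from bisect import bisect_left

M = 6  # identifier bits, so the ring has 2**M positions (assumed)
RING = [1, 8, 14, 21, 32, 38, 42, 48, 51, 56]  # sorted node identifiers (example data)

def successor(ring, k):
    """First node at or after identifier k, wrapping around the ring."""
    i = bisect_left(ring, k % 2 ** M)
    return ring[i % len(ring)]

def in_open(x, a, b):
    """True if x lies strictly between a and b going clockwise."""
    if a < b:
        return a < x < b
    return x > a or x < b

def in_half_open(x, a, b):
    """True if x lies in the clockwise interval (a, b]."""
    if a < b:
        return a < x <= b
    return x > a or x <= b

def build_fingers(ring):
    """finger[i] of node n is successor(n + 2**i)."""
    return {n: [successor(ring, n + 2 ** i) for i in range(M)] for n in ring}

def lookup(fingers, start, key):
    """Return (node responsible for key, list of nodes visited)."""
    n, path = start, [start]
    while not in_half_open(key, n, fingers[n][0]):
        # Jump to the closest finger that still precedes the key.
        for f in reversed(fingers[n]):
            if in_open(f, n, key):
                n = f
                break
        else:
            break  # no closer finger known; stop at the current node
        path.append(n)
    return fingers[n][0], path

fingers = build_fingers(RING)
owner, path = lookup(fingers, 8, 54)
```

Here a lookup for key 54 starting at node 8 visits nodes 8, 42 and 51 and resolves to node 56, rather than walking the ring one successor at a time.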

Node join • When nodes join (or leave), Chord preserves two invariants • Each node’s successor is correctly maintained. • For every key k, node successor(k) is responsible for k. • Chord performs three tasks to preserve the invariants.

Concurrent operations and failures • Correctness and performance goals are treated separately. • A stabilization protocol keeps nodes’ successor pointers up to date. • Stabilization preserves reachability of existing nodes.

Failures and replication • When a node n fails, nodes whose finger tables include n must find n’s successor. • The key step in failure recovery is maintaining correct successor pointers. • Each node keeps a successor list for this purpose • Even if a request is sent toward the failed node before stabilization completes, the lookup can proceed by another path. • The successor list mechanism also helps higher-layer software replicate data.
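
A minimal sketch of the successor-list idea, assuming each node remembers its next r nodes on the ring and skips entries it believes have failed. The list length `r`, the `failed` set and the function names are hypothetical and only serve the illustration.

```python
RING = [1, 8, 14, 21, 32]  # sorted node identifiers (example data)

def successor_list(ring, n, r):
    """The r nodes that follow n clockwise on the ring."""
    i = ring.index(n)
    return [ring[(i + j) % len(ring)] for j in range(1, r + 1)]

def next_live(ring, n, r, failed):
    """First entry of n's successor list that is not known to have failed."""
    for s in successor_list(ring, n, r):
        if s not in failed:
            return s
    return None  # every remembered successor has failed

fallback = next_live(RING, 8, 3, failed={14})
```

With r = 3, node 8 remembers nodes 14, 21 and 32, so when node 14 fails it can still forward to node 21. The same list tells higher-layer software which nodes are natural places to keep replicas.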

Simulation and experimental results • Recursive Chord protocol • Each intermediate node forwards a request to the next node until it reaches the successor. • Load balance

Path length • Path length: the number of nodes traversed during a lookup operation.

Path length • The results show that the path length is about (1/2) log2 N • Since the distance is random, we expect half of the log N bits to be one.
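
The bit-counting argument can be checked numerically. The sketch below assumes an idealized ring in which every one bit in the clockwise distance to the target costs exactly one finger hop; `mean_one_bits` is a hypothetical helper name.

```python
def mean_one_bits(bits):
    """Average number of one bits over all distances representable in `bits` bits."""
    total = sum(bin(d).count("1") for d in range(2 ** bits))
    return total / 2 ** bits

# With log2 N = 10 (N = 1024), the average is half of the 10 bits.
average_hops = mean_one_bits(10)
```

For 10-bit distances the average is 5 one bits, which matches the (1/2) log2 N estimate under this idealization.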

Simultaneous node failures • A randomly selected fraction p of the nodes fail. • The lookup failure rate is about p. Since this is just the fraction of keys expected to be lost due to the failure of the responsible nodes, we conclude that there is no significant lookup failure in Chord.

Lookup during stabilization • A lookup during stabilization may fail for two reasons. • The node responsible for the key may have failed. • Some nodes’ finger tables and predecessor pointers may be inconsistent.

Experimental results • Measured latency • Lookup latency grows slowly with the total number of nodes.

Conclusion • Many distributed peer-to-peer applications need to determine the node that stores a data item. The Chord protocol solves this problem in a decentralized manner. • Chord scales well with the number of nodes. • It recovers from large numbers of simultaneous node failures and joins. • It answers most lookups correctly even during stabilization.

Scalable content-addressable network • Applications of CAN • CAN design • Design improvements • Experimental results

Application of CAN? • CAN provides a scalable indexing mechanism • Most peer-to-peer designs are not scalable. • Napster: a user queries a central server: not completely decentralized ==> expensive and vulnerable as the system scales • Gnutella: floods requests ==> not scalable

Application of CAN? • For storage management systems, CAN can support efficient insertion and retrieval of content. • OceanStore, Farsite, Publius • DNS

Proposed CAN • Composed of many individual nodes. • Each node stores a chunk (zone) of the entire hash table • A node also holds information about a small number of adjacent zones • Completely distributed, scalable and fault-tolerant

CAN design • Routing in CAN: each node forwards using its neighbors • CAN construction
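
Neighbor-based routing can be sketched as greedy forwarding toward the destination point. The example below makes strong simplifying assumptions that are not in the slides: zones are equal-sized cells of a `side` x `side` grid on a torus, each node is named by its cell, and neighbors differ by one step in exactly one dimension.

```python
import math

def torus_delta(a, b, side):
    """Shortest distance between two coordinates on a ring of length `side`."""
    d = abs(a - b)
    return min(d, side - d)

def distance(p, q, side):
    """Cartesian distance between two cells on the torus."""
    return math.sqrt(sum(torus_delta(a, b, side) ** 2 for a, b in zip(p, q)))

def neighbors(cell, side):
    """Cells adjacent to `cell` along each dimension (with wrap-around)."""
    out = []
    for dim in range(len(cell)):
        for step in (-1, 1):
            n = list(cell)
            n[dim] = (n[dim] + step) % side
            out.append(tuple(n))
    return out

def route(src, dst, side):
    """Greedily forward to the neighbor closest to the destination."""
    path, cur = [src], src
    while cur != dst:
        cur = min(neighbors(cur, side), key=lambda n: distance(n, dst, side))
        path.append(cur)
    return path

path = route((0, 0), (3, 2), side=4)
hops = len(path) - 1
```

Each node only needs to know its immediate neighbors' coordinates; no node holds a global view of the space.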

Node departure, recovery and CAN maintenance • When a node leaves a CAN or one of its neighbors has died: • If the zone can be merged with the zone of one of the neighbors, it is handed over to that neighbor. • If not, the zone is handed to the neighbor whose current zone is smallest. • A takeover timer proportional to zone volume is used.

Node departure, recovery and CAN maintenance • Under normal conditions, a node sends periodic update messages to each of its neighbors. • The prolonged absence of an update message from a neighbor signals its failure.

Design improvements • Multi-dimensional coordinate spaces • Realities • Better CAN routing metrics • Overloading coordinate zones • Multiple hash functions • Topologically-sensitive construction • More uniform partitioning • Caching and replication

Multi-dimensional coordinate spaces • Increasing the number of dimensions reduces the routing path length and hence the path latency • Path length = O(d·n^(1/d)) hops • Improves routing fault tolerance, because each node has more candidate next hops
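
To get a feel for how dimensionality affects path length, the sketch below evaluates the usual CAN estimate for n equal zones in d dimensions: an average of (d/4)·n^(1/d) hops, with 2d neighbors per node. These formulas are stated here as assumptions, and the function names are hypothetical.

```python
def avg_path_length(n, d):
    """Estimated average routing path length in hops for n equal zones, d dimensions."""
    return (d / 4) * n ** (1 / d)

def neighbor_count(d):
    """Neighbors per node when every zone has one neighbor on each side per dimension."""
    return 2 * d

# Same number of nodes, increasing dimensionality.
table = [(d, round(avg_path_length(4096, d), 2), neighbor_count(d)) for d in (2, 3, 4, 6)]
```

For 4096 nodes the estimate drops from about 32 hops at d = 2 to about 6 hops at d = 6, while the per-node neighbor state grows only from 4 to 12 entries.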

Realities: multiple coordinate spaces • Each node is assigned a different zone in each coordinate space (reality). • The contents of the hash table are replicated on every reality. • Improves routing fault tolerance, data availability and path length

Multiple dimensions vs. multiple realities • Both result in shorter path lengths, but at the cost of higher per-node neighbor state and maintenance traffic • Increasing dimensions gives better performance • But consider the other benefits of multiple realities

Better CAN routing metrics • Use a metric that better reflects the underlying IP topology: network-level round-trip time (RTT) in addition to Cartesian distance. • Avoids unnecessarily long hops. • RTT-weighted routing aims to reduce the latency of individual hops. • Per-hop latency = overall path latency / path length
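
One plausible reading of RTT-weighted routing is to forward to the neighbor with the best ratio of progress toward the destination to measured RTT. The sketch below assumes that rule, a flat (non-wrapping) coordinate space, and made-up neighbor data; `pick_next_hop` is a hypothetical name.

```python
import math

def pick_next_hop(current, dest, neighbors):
    """neighbors maps a name to (coordinates, rtt_ms); returns the chosen name or None."""
    here = math.dist(current, dest)
    best, best_ratio = None, 0.0
    for name, (coords, rtt_ms) in neighbors.items():
        progress = here - math.dist(coords, dest)
        if progress <= 0:
            continue  # this neighbor does not bring the message closer
        ratio = progress / rtt_ms
        if ratio > best_ratio:
            best, best_ratio = name, ratio
    return best

neighbors = {
    "A": ((2.0, 0.0), 100.0),  # more progress, but a slow link
    "B": ((1.0, 0.0), 20.0),   # less progress, much faster link
}
choice = pick_next_hop((0.0, 0.0), (4.0, 0.0), neighbors)
```

Plain greedy routing would pick A because it is closer to the destination; the RTT-weighted rule picks B because it makes more progress per millisecond.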

Overloading coordinate zones • Allow multiple nodes to share the same zone (peers: nodes that share the same zone). • MAXPEER: the maximum number of peers per zone • Each node maintains a list of its peers and neighbors. • When node A joins, an existing node B checks whether its zone has fewer than MAXPEER peers.

Overloading coordinate zones • If fewer, node A joins B’s zone. • If not, the zone is split in half. • Advantages • Reduced path length, path latency and per-hop latency • Improved fault tolerance
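
The join rule for overloaded zones can be sketched as follows. The value of `MAXPEER` and the way peers are divided between the two halves after a split are assumptions made for the example; the slides only say that a full zone is split in half.

```python
MAXPEER = 3  # assumed limit on peers per zone

def join(zone_peers, new_node, max_peer=MAXPEER):
    """Return the list of zones (each a list of peers) after new_node joins."""
    if len(zone_peers) < max_peer:
        # Room left: the new node simply becomes another peer in the zone.
        return [zone_peers + [new_node]]
    # Zone is full: split it in half and divide the peers between the halves
    # (an even split by list position is an assumption of this sketch).
    members = zone_peers + [new_node]
    half = len(members) // 2
    return [members[:half], members[half:]]

shared = join(["B"], "A")
split = join(["B", "C", "D"], "A")
```

In the first call node A shares B's zone; in the second the zone already holds MAXPEER peers, so it is split and the four nodes end up two per half.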

Multiple hash functions • Use k different hash functions to map a single key onto k points. • Reduces average query latency, but increases the size of the database and the query traffic by a factor of k.
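
A minimal sketch of mapping one key onto k points, assuming the k hash functions are derived by salting SHA-1 with the function index and that points live in a d-dimensional unit space. Both choices, and the function names, are assumptions for illustration.

```python
import hashlib
import math

def key_to_points(key, k, d=2):
    """Map a key onto k points in the d-dimensional unit space [0, 1)^d."""
    points = []
    for i in range(k):
        coords = []
        for dim in range(d):
            # Salting with (i, dim) stands in for k independent hash functions.
            h = hashlib.sha1(f"{i}:{dim}:{key}".encode("utf-8")).digest()
            coords.append(int.from_bytes(h[:8], "big") / 2 ** 64)
        points.append(tuple(coords))
    return points

def closest_point(points, me):
    """The replica point nearest to the querying node's own coordinates."""
    return min(points, key=lambda p: math.dist(p, me))

points = key_to_points("song.mp3", k=3)
target = closest_point(points, me=(0.5, 0.5))
```

A query can then be sent only toward the nearest of the k points, which is where the latency saving comes from, while each stored item occupies k locations.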

Topologically-sensitive construction of the CAN overlay network • Nodes are placed in the CAN based on their relative distance from a set of landmarks. • Latency stretch: the ratio of the latency on the CAN network to the average latency on the IP network.

More uniform partitioning • Achieves load balancing of zone volume. • Not sufficient for true load balancing, because some (key, value) pairs will be more popular than others: hot spots • V = Vt / n, where Vt is the volume of the entire coordinate space and n is the number of nodes

Caching and replication techniques for “hot spot” management • Goal: make popular data keys widely available. • Caching: a node first checks its own cache for the requested data key. • Replication: an overloaded node replicates the data key at each of its neighboring nodes. • Cached and replicated keys should have an associated time-to-live field and eventually expire from the cache.
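
The time-to-live behaviour can be sketched with a small cache class. The class name `TTLCache`, the explicit `now` argument (used instead of a real clock so the example is deterministic) and the sample values are all assumptions of this sketch.

```python
class TTLCache:
    """Cache whose entries expire `ttl` time units after they are stored."""

    def __init__(self, ttl):
        self.ttl = ttl
        self._entries = {}  # key -> (value, expiry time)

    def put(self, key, value, now):
        self._entries[key] = (value, now + self.ttl)

    def get(self, key, now):
        """Return the cached value, or None if missing or expired."""
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires = entry
        if now >= expires:
            del self._entries[key]  # expired entries are dropped on access
            return None
        return value

cache = TTLCache(ttl=30)
cache.put("hot-key", "value-from-owner", now=0)
fresh = cache.get("hot-key", now=10)   # served from the cache
stale = cache.get("hot-key", now=45)   # expired, must be fetched again
```

A node would consult such a cache before forwarding a request, so repeated requests for a popular key are answered without reaching the node that owns it.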

Design review • Metric • path length • neighbor-state • latency • volume • routing fault tolerance • hash table availability

Design review • Parameters • Dimensionality of the virtual coordinate space: d • Number of realities: r • Number of peer nodes per zone: p • Number of hash functions: k • Use of the RTT-weighted routing metric • Use of uniform partitioning

Discussion • Two key ideas: scalable routing and indexing • Open problems • Resistance to denial-of-service attacks • Extension of the CAN algorithm to handle mutable content, and the design of search techniques

An investigation of Geographic Mapping Techniques for Internet Hosts