Main area: User Interface Design for Small Mobile Communication Devices Contextual: Human Interaction with Auto

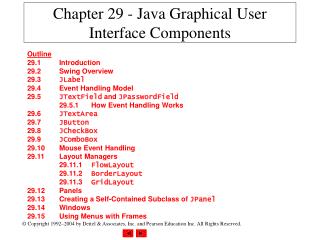

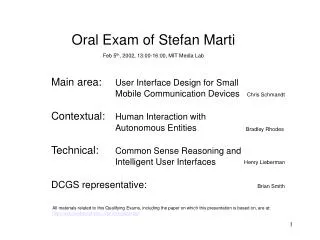

Oral Exam of Stefan Marti Feb 5th, 2002, 13:00-16:00, MIT Media Lab Main area: User Interface Design for Small Mobile Communication Devices Contextual: Human Interaction with Autonomous Entities Technical: Common Sense Reasoning and Intelligent User Interfaces

Oral Exam of Stefan Marti Feb 5th, 2002, 13:00-16:00, MIT Media Lab Main area: User Interface Design for Small Mobile Communication Devices Contextual: Human Interaction with Autonomous Entities Technical: Common Sense Reasoning and Intelligent User Interfaces DCGS representative: Chris Schmandt Bradley Rhodes Henry Lieberman Brian Smith All materials related to these Qualifying Exams, including the paper on which this presentation is based, are at: http://web.media.mit.edu/~stefanm/generals/

User Interface Design of Mobile Devices and Social Impact of Mobile Communication: How do they interact?

Structure of the talk Two parts: First part: theoretical foundations Related work My approach Second part: suggestions, results Relations between social phenomena and user interface design

Motivation: Why is this interesting at all? Why should we care? • Mobile devices are ubiquitous, perhaps not in the States, but certainly in Europe and Asia. • Mobile communication has changed, or will change, our lives. Most of us profit from it and wouldn’t miss it. • User interfaces of mobile devices often sport the latest technology and have a fashionable design. But did the designers also keep in mind how their interfaces might impact our social lives?

What is social impact? Related work • Social impact in a mobile computing setting (Dryer et al. 1999) • Classification of social context situations (Rowson 2000)

Social Impact in Mobile Computing Dryer et al. 1999 • Their perspective: Social computing in mobile computing systems • Social computing: “interplay between person’s social behavior and their interactions with computing technologies” • Mobile computing systems: devices that are designed to be used in the presence of other persons • Depending on the design of such systems, they may either promote or inhibit social relationships • Possible relationships • interpersonal relationship among co-located persons • human-machine relationship (social behavior directed toward a machine) • machine mediated human-human relationship • relationship with a community • Lab study on influences of pervasive computer design on responses to social partners • Theoretical model, consisting of four components:

Social Impact in Mobile Computing, cont. The four components of the model and their factors:
• System design (e.g., UI design). Factors: users believe that the device can be used easily; the device resembles a familiar device; users can share the input with non-users; users can share the output with non-users; the device appears useful in the current context.
• Human behavior (what users usually do). Factors: the device makes the user appear awkward; interferes with natural social behaviors; distracts non-users from their social interaction; alters the distribution of interpersonal control between partners; distracts the user from social interaction.
• Social attributions (how we explain for ourselves why others behave in a certain way: traits, roles, group memberships). Factors: is the partner agreeable or not; is the partner extro- or introverted; is the partner a member of the same group.
• Interaction outcome (consequences of interaction, both cognitive and affective). Factors: was the interaction successful; are future similar interactions desired; did the user like the device; did the user like the partner; quantity and quality of work produced in a social exchange.

Social Impact in Mobile Computing, cont. • Empirical study to explore these relationships: manipulate system design factors and assess their effect on social attributions, human behaviors, and interaction outcomes • Method: present participants photos of persons with different mobile computing devices • Conditions: array of devices with different form factors: HMD, PDA, wearable (belt-worn sub-notebook), laptop, no device • Dependent variables: Questionnaires to assess the effects, looking for significant correlations among factors • Results:

Social Impact in Mobile Computing, cont. [Figure: the four-component model (system design, human behavior, social attributions, interaction outcome) with its factor lists, repeated with five relationships labeled “neg”.]

More social context situations • Dryer et al. looked at one situation: probably a work setting, involving two people working with mobile computing infrastructure. This is a very specific social context situation. • Much larger variety of social context situations. How to classify them? • Rowson (2000) suggests a 2-dimensional space with dimensions Role and Relationship. • Pragmatic, but useful.

Social context situations: “Scenario Space” (Rowson 2000)

| Relationship \ Role | School | Recreation | Family | Work | Spiritual |
| --- | --- | --- | --- | --- | --- |
| Community | Chat, friend finder | Baseball team fan | Parent-Teacher Association | Birds of feather | Church group |
| Formal Team | Group project | Soccer team | Finances | Meetings | Study |
| Casual Team | Note passer | Mall encounter | Shopping | Hallway chat | Hospice |
| Individual | Homework | Movies | Health management | Organizer | Prayer |

• Examples: • What kind of scenario is located in a work setting and in a casual team? • What kind of scenario is located in a school setting and as an individual?
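The two example questions on this slide amount to a lookup in the Role × Relationship grid. A minimal sketch follows, assuming the cell placement reconstructed from the slide text; the names `ROLES`, `SCENARIO_SPACE`, and `lookup_scenario` are hypothetical and not part of Rowson's work.

```python
# Hypothetical encoding of the "Scenario Space" grid.
# Assumption: cell placement is reconstructed from the flattened slide text.
ROLES = ["School", "Recreation", "Family", "Work", "Spiritual"]

SCENARIO_SPACE = {
    "Community": ["Chat, friend finder", "Baseball team fan",
                  "Parent-Teacher Association", "Birds of feather",
                  "Church group"],
    "Formal Team": ["Group project", "Soccer team", "Finances",
                    "Meetings", "Study"],
    "Casual Team": ["Note passer", "Mall encounter", "Shopping",
                    "Hallway chat", "Hospice"],
    "Individual": ["Homework", "Movies", "Health management",
                   "Organizer", "Prayer"],
}


def lookup_scenario(relationship: str, role: str) -> str:
    """Return the example scenario for one (relationship, role) cell."""
    return SCENARIO_SPACE[relationship][ROLES.index(role)]


if __name__ == "__main__":
    # The two questions asked on the slide.
    print(lookup_scenario("Casual Team", "Work"))
    print(lookup_scenario("Individual", "School"))
```

Under this reconstruction, the work/casual-team cell is the hallway chat and the school/individual cell is homework.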

How does this help us? • Dryer et al.: Mobile computing research suggests that user interface design has social impact on the interaction outcome, mainly via social attributions; the design of a system can either promote or inhibit social relationships • Rowson: It seems useful to classify social context situations in a 2-dimensional space, with dimensions role and relationship

Back to the main question How does the user interface design of mobile devices influence the social impact of mobile communication? • My strategy to answer • Define social impact = the influence on social relationships • Look at the different kinds of social relationships that are relevant in a mobile communication setting • Find social phenomena specific to those relationships. I call them Statements. • Make suggestions for UI design that enable these social phenomena, or at least do not get in their way! Of course there are many other possible influences on social relationships: personality of involved people, nature of task, culture, etc.

Class A: Social impact on the relationship between person and machine (medium) [Diagram elements: Person 1, Interface, Machine/Medium, Gap of time and/or space, Person 2, Co-located person]

Class B: Social impact on the relationship between person and co-located people [Diagram elements: Person 1, Interface, Machine/Medium, Gap of time and/or space, Person 2, Co-located person]

Class C: Social impact on the relationship between person and mediated people [Diagram elements: Person 1, Interface, Machine/Medium, Gap of time and/or space, Person 2, Co-located person]

Basic assumption • Each communication consists of two elements: • Initiation (alert) • Act of communication • More specifically • Unsuccessful initiations happen less and less: graceful degradation, awareness applications • Blurred distinction between alerts and acts of communication e.g., caller ID, Nomadic Radio • Communication has neither clear beginning nor clear end e.g., awareness communication modes (more about that later)

Relationship between human and machine (medium, device, etc.) Class A • In human-computer relationships, we sometimes mimic human-human relationships. Only minimal cues are necessary to trigger such behavior. These are: use of language, human-sounding speech, social role, remembering multiple prior inputs • Computers (or machines, devices) as social actors • User satisfaction with UI not determined by effectiveness and efficiency, but by affective reactions e.g., Nass et al. 1993 e.g., Shneiderman 1998

Statement 1: The more human-like the interaction, the better are the user’s attitudinal responses. Class A • User interface design suggestions; they are not orthogonal dimensions: • Interfaces that support common forms of human expression, also called Natural Interfaces, e.g., speech, pen, gesture • Recognition based user interfaces (instead of buttons and sliders) • Multimodal interfaces: natural human communication is multimodal; also good for cross-checks, since recognition based interfaces are error prone • Interfaces that allow the user and the device to select the most appropriate modality depending on context • Architectures that allow for mixed-initiative interfaces (e.g., LookOut) • Interfaces that enable human-level communication: instead of controlling the machine, controlling the task domain Abowd et al. 2000 Myers et al. 2000 Suhm et al. 1999 Oviatt et al. 2000 Ruuska et al. 2001 Walker et al. 1998 Horvitz 1999 Nielsen 1993

Relationship between human and machine (cont.) Class A • Humans probably like interacting with intelligent beings. Social intelligence probably makes us feel comfortable. • “Human social intelligence” is how we deal with relationships. • “Artificial social intelligence” is discussed in framework of SIA(R)s • "Social Intelligence Hypothesis:" primate intelligence originally evolved to solve social problems, and only later was extended to problems outside the social domain (math, abstract thinking, logic) • SIA(R)s have human-like social intelligence to address the emotional and inter-personal dimensions of social interaction. • Mechanisms that contribute to Social Intelligence: Embodiment, empathy (scripts plus memory), autobiographic agency (dynamically reconstructing its individual history), narrative agency (telling stories about itself and others) Dautenhahn 2000 Breazeal 2001 Dautenhahn 1998

Statement 2: The more “social intelligence” a device has, the more positive the social impact. Class A • Many user interface design suggestions, here are just two: • Interfaces with reduced need for explicit human-computer interaction, based on the machine's awareness of the social situation, the environment, and the goals of the user. Or in short: context aware UI. • Interfaces that are “invisible,” both physically and mentally. Can mean: not controlled directly by the user, but also by the machine. This is a consequence of the function of the machine: Its role will not be to obey orders literally, but to interpret user actions and do what it deems appropriate. Dey et al. 2001 Weiser 1991 Lieberman et al. 2000 Nielsen 1993

Relationship between human and co-located people (surroundings) Class B • Each act of telecommunication disrupts the interaction with co-located people. In mobile communication, however, interruption is part of the design.

Statement 3: The less telecommunication, the better for the interaction with co-located persons. Class B The less we telecommunicate, the more we can attend to co-located people, and the more time we spend with them. • Just a single, wide-focus user interface design suggestion: • Interfaces that filter in a context aware manner and therefore minimize the amount of telecommunication. The more the device (agent) knows about my social and physical environment, the fewer unnecessary distractions (more later) • But…

Statement 4: Find balance between useful interruptions and attention for co-located persons. Class B • Interfaces that allow communication in parallel to the ongoing co-located interactions, which in turn enable multiple activities concurrently (mobile communication happens in many different contexts). Examples: Simple speakerphone, Nomadic Radio • Interfaces that support multiple levels of “intrusiveness," enabling background awareness applications. Examples: Audio Aura. Audio Aura: serendipitous information via background auditory cues; uses “sonic ecologies,” embedding the cues into a running, low-level soundtrack so that the user is not startled by a sudden sound; different “worlds” like music, natural sound effects (ocean). Adaptive background music selection system: each user can map events to songs, so personal alerts can be delivered through the music being played in the public background Sawhney et al. 2001 Mynatt et al., 1998

Class B • Interfaces that present information at different levels of the periphery of human attention. Examples: Reminder Bracelet, and many systems in the domain of ambient media and ambient alerting. • Minimal Attention User Interfaces (MAUI). The idea is to transfer mobile computing interaction tasks to interaction modes that take less of the user’s attention from their current activity. It is about shifting the human-computer interaction to unused channels or senses. Limited divided attention and limited focus of attention are only indirectly relevant in our context: they are primarily psychological phenomena and influence social relationships only if co-located persons and the communication device are both seeking attention at the same time. The real issue is what effect the user’s choice of focus of attention has on her social relationships. This is based on the assumption that the user interface gives the user the freedom to shift attention, and does not just override the user’s conscious choice of focus. Abowd et al. 2000 Hansson et al. 2000 Pascoe et al. 2000

Statement 5: The less intrusive the alert and the act of communication, the more socially accepted. Class B • Interfaces that can adapt to the situation and allow for mixed-mode communication. Example: Quiet Calls. Important problem to solve is how to map communication modes adequately, e.g., Quiet Calls uses a Talk-As-Motion metaphor: “engage,” “disengage,” “listen” • Ramping interfaces, including scalable alerting. Example: Nomadic Radio Nelson et al. 2001 Rhodes 2000 Sawhney et al. 2001

Statement 6: The more public the preceding alert, the more socially accepted the following act of communication. Class B • Suggestion: design space of notification cues for mobile devices with two dimensions: subtlety and publicity. • Public and subtle cues are visible to co-located persons, and can therefore avoid unexplained activity (e.g., user suddenly leaves a meeting). Hansson et al. 2001 [Figure: design space with axes subtle/intrusive and public/private, showing Reminder Bracelet, tactile cues, Remembrance Agent, and auditory cues] Hansson et al. 2000 • Interfaces that support and encourage public but subtle alerts. Example: Reminder Bracelet

Statement 7: The more obvious the act of communication, the more socially accepted. Class B • This statement is about the act of communication (the previous was about the alert) • The “talking alone” phenomenon: Soon communication devices will be so small that co-located people can’t see them, so a user will appear to talk to herself. That is strange, and socially not acceptable. Fukumoto et al. 1999 • Interfaces that support private communication without concealing the act of communication from the public. Example: Whisper, a wearable voice interface that is used by inserting a fingertip into the ear canal. This Grasping Posture avoids the talking alone phenomenon.

Statement 8: A mobile device that can be used by a single user as well as by a group of any size will more likely get socially accepted by co-located persons. Class B In other words: A device whose user interface can adapt to the group size of the social setting (from individual to community) will be a better device. Rowson 2001 • Interfaces that can adapt to a particular user group size, from an individual to a group. This extends its usability, spanning more social context situations. Example: TinyProjector for mobile devices; projection size is scalable and can adapt from a group of a few, using a table as a projection surface, up to large groups of hundreds of people, using a wall of a building as a projection screen

Mediated human-human relationships Class C • “The Medium is the Message”: How a message is perceived is defined partially by the transmitting medium. How about “The Interface is the Message”? • Early theories of effects of a medium on the message and on the evaluation of the communicating parties: • Efficiency of the interaction process: having different numbers of channels, and being able to transmit different kinds of nonverbal cues. • Media differ in the possible amount of nonverbal communication McLuhan

Class C Social Presence and Immediacy • Better heuristic to classify communication media and their social impact: Social Presence (SP) • SP is a subjective quality of a medium; a single dimension that represents a cognitive synthesis of several factors: • capacity to transmit information about facial expression • direction of looking • posture • tone of voice • non-verbal cues, etc. • These factors affect the level of presence, the extent to which a medium is perceived as sociable Short et al. 1976

Class C • SP theory says that the medium has a significant influence on both the evaluation of the act of communication, and the evaluation of the communication partner (interpersonal evaluation and attraction), which means: high social impact. • The nonverbally richer media—the ones with higher Social Presence—lead to better evaluations than the nonverbally poorer media: the transmitted nonverbal cues tend to increase the positivity of interpersonal evaluation. • Immediacy: Nonverbally richer media are perceived as more immediate, which means that more immediate media lead to better evaluations and positive attitudes. Williams 1975 Chapanis et al. 1972 Mehrabian 1971

Statement 9: The higher the Social Presence and Immediacy of a medium, the better the attitudinal responses to partner and medium. Class C [Figure axis: immediacy of medium, increasing from e-mail to phone to videophone to face-to-face] • User interfaces that support as many channels as possible, and that can transmit non-verbal cues. This is probably simplistic.

That might be true with generic tasks. But what if the task requires the partners to disclose themselves? Class C • Hypothesis 1: • If the task requires the partners to disclose themselves extensively, their preferences shift and might get reversed: they prefer media that are lower in immediacy. • This might be explained with a drive to maintain the optimum intimacy equilibrium in a given relationship. Compensatory behaviors: personal distance, amount of eye contact, smiling, etc. • Example: If a person’s distant cousin dies, she would rather write to the parents (low immediacy medium) than stop by (high immediacy medium), because stopping by might be too embarrassing (since she doesn't know them at all). Argyle et al. 1965

Class C [Figure: positive attitude towards partner and medium plotted against immediacy of medium (e-mail, phone, videophone, face-to-face), with one curve for tasks that require high intimacy and one for tasks that require only low intimacy between partners]

That might be true if the partners don’t know each other well. What if they do? Class C • Hypothesis 2: • If the task requires the partners to behave in an intimate way and the partners know each other well, the preferences might shift back again, making higher immediacy media preferred. • Example: If a person’s father dies, she will choose the medium with the highest immediacy (which is face to face) to communicate with her mother.

Class C [Figure labels: well acquainted with partner; unknown partner; amount of intimacy task requires from partners; immediacy of medium (e-mail, phone, videophone, face-to-face)]

Statement 10: The user’s attitudinal responses depend on how well the partners know each other, and on whether the communication task requires them to disclose themselves extensively. Class C • Interfaces that are aware of the existing relationships of the communication parties and adapt, suggesting communication modes that support the right level of immediacy and social presence. Example: Agent that is not only aware of all communication history, but also keeps track of the most important communication partners of the user and current interaction themes, perhaps applying commonsense knowledge to log files to “fill in the blanks” with natural language understanding • Interfaces that are aware of the task the communication partners want to solve, either by inferring it from the communication history, or by looking at the communication context
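Statement 9 and Hypotheses 1 and 2 together suggest a simple decision rule for an interface that proposes a communication mode. The following is only a sketch under strong assumptions: both inputs are reduced to booleans and the rule picks the extreme ends of the immediacy scale, whereas the slides describe tendencies, not thresholds. The function name `suggest_medium` is hypothetical.

```python
# Media ordered from lowest to highest immediacy, as on the Statement 9 slide.
MEDIA_BY_IMMEDIACY = ["e-mail", "phone", "videophone", "face-to-face"]


def suggest_medium(high_intimacy_task: bool, well_acquainted: bool) -> str:
    """Suggest a medium from the two factors named in Statement 10.

    Assumption: binary inputs and an all-or-nothing choice; a real
    interface would need graded estimates of both factors.
    """
    if high_intimacy_task and not well_acquainted:
        # Hypothesis 1: extensive self-disclosure between partners who do
        # not know each other well shifts preference to low immediacy.
        return MEDIA_BY_IMMEDIACY[0]
    # Statement 9 (generic task) and Hypothesis 2 (well-acquainted partners):
    # higher immediacy is preferred.
    return MEDIA_BY_IMMEDIACY[-1]


if __name__ == "__main__":
    print(suggest_medium(high_intimacy_task=True, well_acquainted=False))
    print(suggest_medium(high_intimacy_task=True, well_acquainted=True))
```

The two calls mirror the slide examples: writing to a distant cousin's parents versus meeting one's mother face to face.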

Statement 11: The more the user is aware of the social context of the partner before and during the communication, the better. Class C Milewski et al., 2000 Tang et al., 2001 Isaacs et al., 2002 • Interfaces that let the user preview the social context of the communication partner. This could include interfaces that give the user an idea where the communication partner is, or how open and/or available she is to communication attempts. Examples: Live Address Book, ConNexus and Awarenex, Hubbub • Interfaces that allow the user to be aware of the social context of the communication partner. This refers to interfaces that enable the participants to understand each other’s current social context during the act of communication.

Special Case: Awareness communication Class C • Person 1 does not communicate directly with person 2, but with an outer layer of person 2 • “Outer layer”: personal agent that acts on behalf of person 2 • Example agents: • Agent anticipates arrival time during traveling and “radiates” this info to trusted users • Electrical Elves/Friday: multi-agent teams for scheduling and rescheduling events Tambe et al. 2001

Statement 12: Receiving information from the “outer layer” of a person about her current context simplifies awareness between the partners, and has positive social impact. Class C (special) • Interfaces that are open for and actively request information from the context layer of communication partners. Such information is most likely to be displayed in the periphery of human attention. Examples for UI design: Reminder Bracelet, LumiTouch, ComTouch, Personal Ambient Display • Related to interfaces of class A relationships: interaction happens between a person and a machine, e.g., a personal software agent. Therefore, some design suggestions of this class are relevant: • UI should allow the user to select the most appropriate modality depending on the physical context • UI has to adapt to the user’s current social context: ramping interfaces Hansson et al., 2000 Chang et al., 2001 Chang, 2001 Wisneski, 1999

Statement 13: Mobile communication happens continuously, everywhere and anytime, and therefore is used in many different social context situations. Mobile communication The user interface has to adapt to this variety of social context situations. • Interfaces with small form factors. This is a direct consequence of the everywhere-anytime paradigm of mobile communication. The smaller the device and its interface, the more likely they will get used. Wearability as major theme: devices that will be attached to the body, “wrist-top” and “arm-top” metaphors. • Distributed interfaces that are not only part of the mobile device, but also of our environment. This includes a modular approach for user interfaces that dynamically connect to the available communication devices and channels • Interfaces with varying input and output capabilities. Example: wearable keyboards like FingeRing. • Interfaces that allow for continuous interactions. Important for ubiquitous computing, but also relevant for the always-on metaphor of mobile computing: systems that continue to operate in the background without any knowledge of on-going activity. Ruuska et al. 2001 Weiser 1991 Fukumoto et al. 1997 Abowd et al. 2000

Conclusions • Social impact = the influence on social relationships • 3 classes of relevant social relationships in the mobile communication setting • 13 statements: social phenomena, specific to a class of relationships • 28 design suggestions: how to design a UI for mobile devices in order to support the statements, or not to violate the social phenomena described in the statements, or simply to make the social impact of mobile communication positive

Thanks! :-) All materials related to my Qualifying Exams, including the paper on which this presentation is based, are at: http://web.media.mit.edu/~stefanm/generals/

The following slides show larger pictures and more descriptions of some of the prototypes that were mentioned in the presentation

The Reminder Bracelet is an experiment in the search for complementary ways of displaying notification cues. It is a bracelet, worn on the wrist and connected to a user’s PDA. The LEDs embedded in the Reminder Bracelet act as a display for conveying notifications. The reason for using light was to allow for more subtle, less attention-demanding cues, and also to make the notifications public to a certain degree. The Reminder Bracelet is placed on the wrist, a location that generally rests in the periphery of the user’s attention, and also a familiar place for an informational device. When a notification occurs, it is first perceived in the periphery of the user’s vision and then it might move into the center of attention. In an effort to reduce the user interaction and to convey notifications in a consistent and easily interpreted manner, the Reminder Bracelet always notifies its user 15 minutes ahead of scheduled events in the PDA. Reminder Bracelet Hansson et al. 2000
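The fixed 15-minute lead time is the only timing rule the description gives. A minimal sketch of that rule follows; the function names are hypothetical, and the assumption that the cue stays active until the event starts is ours, not stated for the prototype.

```python
from datetime import datetime, timedelta

# Lead time stated in the Reminder Bracelet description.
LEAD_TIME = timedelta(minutes=15)


def notification_time(event_start: datetime) -> datetime:
    """Time at which the bracelet would start notifying for an event."""
    return event_start - LEAD_TIME


def cue_active(now: datetime, event_start: datetime) -> bool:
    """Assumption: the cue is shown from the notification time until the
    event starts. The source does not say how long the LEDs stay lit."""
    return notification_time(event_start) <= now < event_start


if __name__ == "__main__":
    exam = datetime(2002, 2, 5, 13, 0)
    print(notification_time(exam))
    print(cue_active(datetime(2002, 2, 5, 12, 50), exam))
```

A fixed offset keeps the cue easy to interpret: whenever the LEDs light up, the user knows how far away the event is without reading anything.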

Grasping posture • Outside noise shut out • Users hear themselves better (don’t raise voice) because ear covered Whisper Fukumoto et al. 1999

Whisper’s Talking Alone Phenomenon Fukumoto et al. 1999 • Talking alone: today’s earphone-microphone units are large enough to be visible, so the surrounding people can easily notice their presence. • However, it is clear that almost invisible “ear plug” style devices, integrating telephone and PDA functionality, will be feasible sometime soon. Such devices can be easily overlooked by co-located people, and it will appear to these people as if the user is “talking to herself.” • The phenomenon of “talking alone” might look very strange, and is certainly socially not acceptable. Fukumoto et al. even hypothesize that the stigma attached to “talking alone” has hindered the spread of the wearable voice interface. Therefore, the social aspects are the important issues that must be addressed when designing and implementing wearable voice interfaces. • The “talking alone” phenomenon does not occur if the user seems to hold a tiny telephone handset, even if the grasped object is too small to be seen directly. Basically, this effect can be achieved by just mimicking the “grasping posture.” • Whisper, a wearable voice interface that is used by inserting a fingertip into the ear canal, would satisfy the social need not to conceal the act of communication.

Nomadic Radio’s Soundbeam by Nortel™ Sawhney et al. 2001 Nomadic Radio explores the space of possibly parallel communication in the auditory area. The system, a wearable computing platform for managing voice and text based messaging in a nomadic environment, employs a shoulder worn device with directed speakers that make cues audible only to the user (without the use of socially distracting headphones). This allows for a natural mix of real ambient audio with the user specific local audio. To reduce the number of interruptions, the system’s notification is adaptive and context sensitive, depending on whether the user is engaged in a conversation, her recent responses to prior messages, and the importance of the message derived from content filtering with Clues.
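The three inputs named above (conversation state, recent responses, message importance) can be combined into a scalable alert level. The sketch below is purely illustrative: the level names, weights, and scoring formula are assumptions made for this example and do not reproduce Nomadic Radio's actual model.

```python
# Hypothetical alert levels, from least to most intrusive.
LEVELS = ["silent", "ambient cue", "spoken summary", "full message"]


def notification_level(importance: float,
                       in_conversation: bool,
                       recent_response_rate: float) -> str:
    """Pick an alert level from the three context inputs.

    importance and recent_response_rate are expected in [0, 1].
    The weights 0.25 and 0.5 are illustrative assumptions.
    """
    score = importance
    # A user who responded to recent messages tolerates more intrusion.
    score += 0.25 * recent_response_rate
    # An ongoing conversation with co-located people lowers the level.
    if in_conversation:
        score -= 0.5
    score = min(max(score, 0.0), 1.0)
    index = min(int(score * len(LEVELS)), len(LEVELS) - 1)
    return LEVELS[index]


if __name__ == "__main__":
    print(notification_level(0.5, in_conversation=True,
                             recent_response_rate=0.0))
    print(notification_level(0.5, in_conversation=False,
                             recent_response_rate=0.0))
```

The same message is delivered less intrusively when the user is in a conversation, which is the ramping behavior Statement 5 asks for.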