Context Sensitive Languages and Linear Bounded Automata

Introduction. Context-sensitive languages/grammars; linear bounded automata (LBA); equivalence of CSL and LBA; complexity of CSLs / variants; closure properties of CSLs; decidability of CSLs.


1. Context Sensitive Languages and Linear Bounded Automata Benjamin Mayne

CPSC 627

3. Context Sensitive Grammars G = (V, Σ, R, S)

V is the set of variables

Σ is the set of terminals

R is the set of rules of the form

αAβ → αγβ

(A goes to γ in the context of α and β)

where A ∈ V, α, β ∈ (V ∪ Σ)*, and

γ ∈ (V ∪ Σ)+

plus the rule S → ε if S is not on the right side of any rule.

4. Continued |αAβ| ≤ |αγβ|

CSGs are called noncontracting grammars because no rule decreases the length of the string being generated.

For example:

S ⇒ aSBc ⇒ aaSBcBc ⇒ aaabcBcBc ⇒* aaabBcBcc ⇒* aaabbBccc ⇒ aaabbbccc

(⇒* marks places where two rule applications are combined into one arrow.)
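The derivation above compresses a few steps. As a minimal sketch, assuming each grammar symbol is a single character and using the rules of the example grammar (S → aSBc, S → abc, cB → Bc, bB → bb), the fully expanded derivation can be checked one rule application at a time. The names `RULES`, `successors` and `is_valid_derivation` are illustrative, not from the slides.

```python
# Rules of the example grammar, written as (left-hand side, right-hand side).
RULES = [("S", "aSBc"), ("S", "abc"), ("cB", "Bc"), ("bB", "bb")]

def successors(form, rules=RULES):
    """Return every string reachable from `form` by one rule application."""
    result = set()
    for lhs, rhs in rules:
        start = form.find(lhs)
        while start != -1:
            result.add(form[:start] + rhs + form[start + len(lhs):])
            start = form.find(lhs, start + 1)
    return result

def is_valid_derivation(forms, rules=RULES):
    """Check that each form follows from the previous one in a single step."""
    return all(nxt in successors(cur, rules) for cur, nxt in zip(forms, forms[1:]))

# The slide's derivation with the skipped intermediate forms written out.
DERIVATION = [
    "S", "aSBc", "aaSBcBc", "aaabcBcBc", "aaabBccBc",
    "aaabBcBcc", "aaabbcBcc", "aaabbBccc", "aaabbbccc",
]

lengths = [len(form) for form in DERIVATION]
print(is_valid_derivation(DERIVATION))  # every step uses exactly one rule
print(lengths == sorted(lengths))       # lengths never decrease
```

The second check illustrates the noncontracting property: the length of the sentential form never goes down along the derivation.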

5. CSL example Consider the following CSG

S → aSBc

S → abc

cB → Bc

bB → bb

The language generated is L(G) = {a^n b^n c^n | n ≥ 1}

6.

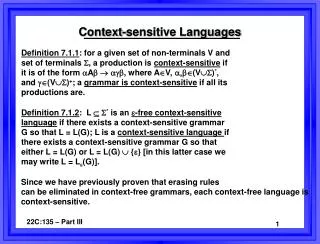

7. Chomsky Hierarchy

8. Context-sensitive languages Clearly, context-sensitive rules give a grammar more power than context-free grammars. A context-sensitive grammar can use the surrounding characters to decide to do different things with a variable, instead of always having to do the same thing every time.

All productions in context-sensitive grammars are non-decreasing or non-contracting; that is, they never result in the length of the intermediate string being reduced.

9. Linear Bounded Automata A Turing machine that has the length of its tape limited to the length of the input string is called a linear-bounded automaton (LBA).

A linear bounded automaton is a 7-tuple nondeterministic Turing machine M = (Q, Σ, Γ, δ, q0, qaccept, qreject) except that:

1. There are two extra tape symbols < and >, which are not elements of Γ.

2. The TM begins in the configuration (q0<x>), with its tape head scanning the symbol < in cell 0. The > symbol is in the cell immediately to the right of the input string x.

3. The TM cannot replace < or > with anything else, nor move the tape head left of < or right of >.

11. L = {a^n b^n c^n : n ≥ 0}

Q = {s,t,u,v,w} Σ = {a,b,c}

Γ = {a,b,c,x} q0 = s

δ =

{((s, <), (t, <, R)), ((t, >), (t, >, L )),

((t, x), (t, x, R)), ((t, a), (u, x, R)),

((u, a), (u, a, R)), ((u, x), (u, x, R)),

((u, b), (v, x, R)), ((v, b), (v, b, R)),

((v, x), (v, x, R)), ((v, c), (w, x, L)),

((w, c), (w, c, L)), ((w, b), (w, b, L)),

((w, a), (w, a, L)), ((w, x), (w, x, L)),

((w, <), (t, <, R))}

12. The intuition behind the previous example is that on each pass through the input string, we match one a, one b and one c and replace each of them with an x until there are no a's, b's or c's left.

Each of the states can be explained as follows:

State t looks for the leftmost a, changes this to an x, and moves into state u. If no symbol from the input alphabet can be found, then the input string is accepted.

State u moves right past any a's or x's until it finds a b. It changes this b to an x, and moves into state v.

State v moves right past any b's or x's until it finds a c. It changes this c to an x, and moves into state w.

State w moves left past any a's, b's, c's or x's until it reaches the start boundary, and moves into state t.
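The state descriptions above can be tried out with a small simulator. This is a sketch under stated assumptions, not the presenter's code: the transition table is copied from the slide, a missing transition is treated as rejection, and state t reading > is treated as acceptance (following the prose description of state t rather than the table entry for (t, >)). The names `DELTA` and `run_lba` are illustrative.

```python
# Transition table from the slide: (state, symbol) -> (new state, written symbol, move).
# +1 moves the head right, -1 moves it left.
DELTA = {
    ("s", "<"): ("t", "<", +1),
    ("t", "x"): ("t", "x", +1), ("t", "a"): ("u", "x", +1),
    ("u", "a"): ("u", "a", +1), ("u", "x"): ("u", "x", +1),
    ("u", "b"): ("v", "x", +1), ("v", "b"): ("v", "b", +1),
    ("v", "x"): ("v", "x", +1), ("v", "c"): ("w", "x", -1),
    ("w", "c"): ("w", "c", -1), ("w", "b"): ("w", "b", -1),
    ("w", "a"): ("w", "a", -1), ("w", "x"): ("w", "x", -1),
    ("w", "<"): ("t", "<", +1),
}

def run_lba(word, max_steps=10_000):
    """Run the machine on `word`; the tape is exactly <word> and never grows."""
    tape = ["<", *word, ">"]
    state, head = "s", 0
    for _ in range(max_steps):
        symbol = tape[head]
        # Assumption: t reading ">" means nothing is left to match, so accept.
        if (state, symbol) == ("t", ">"):
            return True
        if (state, symbol) not in DELTA:
            return False  # no applicable transition: reject
        state, tape[head], move = DELTA[(state, symbol)]
        head += move
    raise RuntimeError("step limit exceeded")

for w in ["", "abc", "aabbcc", "aabbc", "abcc", "ab"]:
    print(repr(w), run_lba(w))
```

Note that the table as transcribed matches one a, one b and one c per pass but never checks that the input has the shape a*b*c*, so this sketch also accepts a string such as abcabc. A complete machine would verify the ordering first.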

13. A language is accepted by an LBA iff it is generated by a CSG.

Just like equivalence between CFG and PDA

Given a string x and a CSG G, you can intuitively see that an LBA can start with S, nondeterministically choose a derivation from S, and check whether it produces the input string x. Because CSGs are noncontracting, the LBA only needs to consider sentential forms of length ≤ |x|: once a sentential form is longer than |x|, it can never shrink back to the size of |x|. CSG = LBA
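The length bound is what makes the search finite. The following sketch replaces the LBA's nondeterministic guessing with a deterministic breadth-first search over sentential forms, discarding any form longer than the input. It assumes single-character grammar symbols and ignores the special rule S → ε; `csg_generates` and `EXAMPLE_RULES` are illustrative names.

```python
from collections import deque

# The example grammar for {a^n b^n c^n | n >= 1}.
EXAMPLE_RULES = [("S", "aSBc"), ("S", "abc"), ("cB", "Bc"), ("bB", "bb")]

def csg_generates(rules, start, word):
    """Decide whether a noncontracting grammar derives `word` from `start`."""
    seen = {start}
    queue = deque([start])
    while queue:
        form = queue.popleft()
        if form == word:
            return True
        for lhs, rhs in rules:
            pos = form.find(lhs)
            while pos != -1:
                nxt = form[:pos] + rhs + form[pos + len(lhs):]
                # Forms longer than the input can never shrink back: prune them.
                if len(nxt) <= len(word) and nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
                pos = form.find(lhs, pos + 1)
    return False

print(csg_generates(EXAMPLE_RULES, "S", "aabbcc"))
print(csg_generates(EXAMPLE_RULES, "S", "aabbc"))
```

The search terminates because there are only finitely many strings of length at most |x| over a finite alphabet, and each one is visited at most once.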

14. Complexity of CSL/Variants Since the context-sensitive languages are exactly the languages recognized by LBAs, they are exactly ∪c NSPACE(cn), that is, NSPACE(O(n)).

Membership can be decided by a nondeterministic TM using cn space, for some constant c.

In complexity theory, this class is thought to lie outside of NP. Recall that:

P ⊆ NP ⊆ NPSPACE

The degree of complexity of context-sensitive languages is too high for practical applications.

15.

On the other hand, the context-free languages (CFL) are not powerful enough to completely describe all the syntactical aspects of a programming language like PASCAL, since some of them are inherently context dependent.

So, there are classes of languages that are strictly in between CFL and CSL.

One can make CFGs more powerful or restrict the power of CSGs.

16. Growing context-sensitive languages The start symbol occurs only in the left-hand side of a rule

All rules are of the form that either the left-hand side consists of the start symbol or the right-hand side is strictly longer than the left-hand side

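The uniform membership problem (grammar given as part of the input) is NP-complete; for a fixed growing context-sensitive grammar, membership can be decided in polynomial time.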
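The two rule-shape conditions above are easy to check mechanically. A minimal sketch, assuming single-character grammar symbols and rules given as (left-hand side, right-hand side) pairs; `is_growing` and both sample grammars are hypothetical illustrations.

```python
def is_growing(rules, start="S"):
    """Check the two conditions for a growing context-sensitive grammar."""
    for lhs, rhs in rules:
        # The start symbol may not appear on any right-hand side.
        if start in rhs:
            return False
        # Every rule either rewrites the start symbol alone or strictly grows.
        if lhs != start and len(rhs) <= len(lhs):
            return False
    return True

# A toy grammar that satisfies both conditions.
toy = [("S", "AB"), ("A", "aa"), ("B", "bb")]
# The a^n b^n c^n grammar fails: S is on a right-hand side and cB -> Bc does not grow.
anbncn = [("S", "aSBc"), ("S", "abc"), ("cB", "Bc"), ("bB", "bb")]

print(is_growing(toy))
print(is_growing(anbncn))
```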

17. Closure Properties

Closed under:

Union, Concatenation, *

Closed under Intersection

But what about:

Complementation

18. Closed under Complementation? Up until 1988, context-sensitive languages were not known to be closed under complementation.

19. Complementation (continued) Show That NSPACE(n) = co-NSPACE(n)

This means that all problems in NSPACE(n) are in co-NSPACE(n) and vice versa which means NSPACE(n) is closed under complementation.

It immediately follows that context-sensitive languages are closed under complementation.

20. Decidability A_LBA = {<M,w> | M is an LBA and M accepts w}

Unlike A_TM, A_LBA is decidable.

Proof:

The ID of an LBA (like a TM) consists of the current tape contents (wi), the current state (q), and the current head position. (w1q0w2w3w4)

For a Turing machine, there are infinitely many IDs.

However, for an LBA, there are only finitely many. Precisely, there are n·|Q|·|Γ|^n possible IDs, where n is the length of the input string: |Γ|^n is the number of possible tape strings, |Q| is the number of possible states, and n is the number of head positions.

21. Recall that computation of a Turing Machine was defined as a chain of IDs

ID0 ⊢ ID1 ⊢ ··· ⊢ IDk, where ID0 is an initial configuration

If an ID appears twice, then the machine is in a loop.

On input <M,w>, where M is an LBA and w is an input word,

1. Simulate machine M for at most n·|Q|·|Γ|^n steps of computation.

2. If M accepted, accept. If M rejected, reject. Otherwise, M must be in a loop; reject.
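The two-step procedure above can be sketched as a step-bounded simulation. Several simplifications are assumptions here, not part of the slides: the machine is deterministic, the input is nonempty, the boundary markers are omitted so that the head has exactly n positions, and a move off either end leaves the head where it is. The toy machine and the names `id_bound` and `decide_lba` are hypothetical.

```python
def id_bound(n, num_states, num_tape_symbols):
    """Number of distinct IDs: head positions * states * tape contents."""
    return n * num_states * num_tape_symbols ** n

def decide_lba(delta, start, accept, reject, word, gamma):
    """Simulate for at most id_bound steps; running out of steps means a loop."""
    n = len(word)
    states = {start, accept, reject} | {q for q, _ in delta}
    states |= {q for q, _, _ in delta.values()}
    tape, state, head = list(word), start, 0
    for _ in range(id_bound(n, len(states), len(gamma))):
        if state == accept:
            return True
        if state == reject or (state, tape[head]) not in delta:
            return False
        state, tape[head], move = delta[(state, tape[head])]
        head = min(max(head + move, 0), n - 1)  # stay inside the n cells
    # Either the last step reached accept, or some ID repeated: reject the loop.
    return state == accept

# Toy machine: scan right over a's, accept on the first b, loop at the right end otherwise.
toy_delta = {("q", "a"): ("q", "a", +1), ("q", "b"): ("acc", "b", 0)}

print(id_bound(3, 3, 2))
print(decide_lba(toy_delta, "q", "acc", "rej", "aab", {"a", "b"}))
print(decide_lba(toy_delta, "q", "acc", "rej", "aaa", {"a", "b"}))
```

On "aaa" the toy machine would run forever at the last cell, but the bound of 3·3·2^3 = 72 steps cuts the simulation off, and the decider rejects. For a nondeterministic LBA the same counting argument applies to a search over all reachable IDs rather than a single run.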

22. Decidability (continued) Theorem:

A_CSG = {<G,w> | G is a CSG that generates w} is decidable.

Theorem: Every context-sensitive language is decidable

Like context-free languages

23. Theorem: E_LBA = {<M> | M is an LBA and L(M) = ∅}

is undecidable

(This differs from context-free languages)

24. Sources Brainerd, Walter S. and Lawrence Landweber. Theory of Computation. New York: John Wiley & Sons, 1974.

Immerman, Neil. "Nondeterministic Space is Closed Under Complementation." Yale University, http://ieeexplore.ieee.org/iel2/209/274/00005270.pdf?isNumber=274&prod=IEEE%20CNF&arnumber=5270&arSt=112&ared=115&arAuthor=Immerman%2C+N.%3B

25. Questions?