HPC clusters in Research Computing

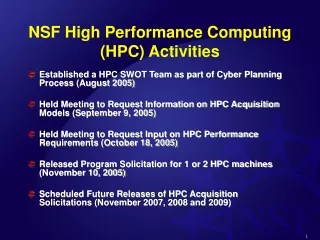

HPC clusters in Research Computing. HPC (cluster) Services offered by Research Computing to the Partners Research Community. Dennis Gurgul, Jan 25th 2007. The Services: Linux-based computing clusters for large-scale scientific computation or data analysis



Presentation Transcript

HPC clusters in Research Computing: HPC (cluster) Services offered by Research Computing to the Partners Research Community. Dennis Gurgul, Jan 25th 2007. Research Computing

The Services • Linux-based computing clusters for large-scale scientific computation or data analysis • Offer computing power to the Partners research community and collaborators • About 150 accounts, of which 20-30 are constantly active • An average of 300-500 job submissions per day • User support: • Support for non-standard applications: open-source software, in-house developed applications, etc. • User guidance for system access and job submission, troubleshooting, performance analysis • Application/system integration with HPC • Beyond our cluster, general HPC support, e.g. proteomics and DNA sequencing data processing. Partners Research Computing

Brief History -- Intro: how did we get started • helix.mgh.harvard.edu (GCG) >> newmbcrr.dfci.harvard.edu • helix.mgh.harvard.edu email >> Partners.org • “Let's build a cluster & see what happens” (Jon Martinson, 2002)

Brief History -- Intro Research Computing Infrastructure • Brent Richter • Dennis Gurgul • Steve Roylance • Jerry Xu • Aaron Zschau HPCGG, RPDR, I2B2, *NIX, CLUSTERS

Brief History -- Intro Agenda: • Intro • Resources • Detailed Technical Description (Hardware/OS/etc) • Apps / Users • Success Stories • On-going Changes & Future Plans • How to Get an Account

Computational Resources • hpres.mgh.harvard.edu: 61 nodes, LSF, 8 TB temp storage, AMD Opteron • rescluster2.mgh.harvard.edu: 25 nodes, SGE, 0.5 TB temp storage, Intel 32-bit (oldest; Matlab) • rescluster.mgh.harvard.edu: 15 nodes, LSF, 0.8 TB temp storage, AMD Opteron

System Descriptions: hpres • Deployed 4th Q 2004 • HP XC4000 cluster management package (de-branded RHEL), which includes LSF • Two HP ProLiant DL585 login nodes w/ 2 AMD Opteron, 4 GB RAM, two mirrored 72 GB SCSI • One HP ProLiant DL585 head node • 61 HP ProLiant DL145 compute nodes w/ 2 AMD Opteron, 4 GB RAM, 160 GB ATA • HP MSA1500 SAN w/ 42 × 300 GB SCSI disks as 8 .8 TB LUNs, ADG, 3 hot spares, over fibre HBA to the login nodes • NFS over Gigabit Ethernet via HP ProCurve switches.

HPRES Architecture [diagram; labels: GB connection, PHS network, root switches]. The major computing power provided by our group.

System Descriptions: rescluster2 • Deployed in 4th Q 2003 • Rocks with included SGE on CentOS • Dell PowerEdge 2650 head/login node w/ 2 Intel 32-bit, 4 GB RAM, 0.5 TB RAID5 SCSI • 25 Dell PowerEdge 1750 compute nodes w/ 1 Intel 32-bit, 2 GB RAM, 72 GB SCSI • NFS over Gigabit Ethernet via Dell PowerConnect switches • Matlab • Biopara • CHARMM

System Descriptions: rescluster • Time deployed: 1st quarter 2006 • Computing resources: • 1 head node: Dell PowerEdge 2850, 2 Intel Xeon 3.0 GHz, memory: 4 GB • 15 compute nodes: HP ProLiant DL145, dual AMD Opteron 64/32-bit processors, 1.0 GHz, memory: 4 GB • Storage: 0.8 TB RAID5, SCSI • Network: Gigabit Ethernet • File system: NFS over Gigabit Ethernet via Dell PowerConnect switches • Rocks Clusters with LSF
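The per-node figures on the three system-description slides can be rolled up into aggregate compute capacity. The short Python sketch below does that arithmetic. It assumes that "2 AMD/Opteron" and "dual AMD Opteron" mean two processors per compute node, and it counts compute nodes only (head and login nodes are left out); the dictionary layout and function names are illustrative, not part of any Research Computing tool.

```python
# Compute-node figures copied from the system-description slides.
# Assumption: "2 AMD/Opteron" / "dual AMD Opteron" = 2 processors per node.
# Head and login nodes are deliberately left out.
CLUSTERS = {
    "hpres": {"nodes": 61, "cpus_per_node": 2, "ram_gb_per_node": 4},
    "rescluster2": {"nodes": 25, "cpus_per_node": 1, "ram_gb_per_node": 2},
    "rescluster": {"nodes": 15, "cpus_per_node": 2, "ram_gb_per_node": 4},
}


def capacity(spec):
    """Return (processors, RAM in GB) for one cluster's compute nodes."""
    return (spec["nodes"] * spec["cpus_per_node"],
            spec["nodes"] * spec["ram_gb_per_node"])


def totals(clusters):
    """Sum processors and RAM over all clusters."""
    cpus = sum(capacity(spec)[0] for spec in clusters.values())
    ram = sum(capacity(spec)[1] for spec in clusters.values())
    return cpus, ram


if __name__ == "__main__":
    for name, spec in CLUSTERS.items():
        cpus, ram = capacity(spec)
        print(f"{name}: {cpus} processors, {ram} GB RAM")
    cpus, ram = totals(CLUSTERS)
    print(f"total: {cpus} processors, {ram} GB RAM")
```

Under those assumptions, hpres contributes 122 processors and 244 GB, rescluster2 25 processors and 50 GB, and rescluster 30 processors and 60 GB, for a total of 177 compute-node processors and 354 GB of RAM. Core counts would be higher if any of these were dual-core parts, which the slides do not state.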

Funding • rescluster funded & used primarily by the Steele Lab at MGH; plans to expand the hardware and open it to the community • rescluster2 funded primarily by the Biostatistics Lab at MGH; used primarily for Matlab • hpres funded by a partnership with HP

System-Level Apps • System • Extra Math Libraries • Basic Compilers

Primary Apps / Key Users • I2B2, the RPDR analysis • Next cluster: parallel file system, HP EVA, gateway

General Apps / Users: must be shared; maintained only by the system administrators • Localized public databases (> 500 GB) • Sequence alignment (accepts jobs from both batch and web)

General Apps / Users • Protein-related Applications • Language, Statistical Applications and Others

General Apps / Users • Other Applications • Databases • MySQL (inside cluster) • Postgres (inside cluster) • MS SQL Server (outside cluster, reachable through the PHS network) • Oracle (outside cluster, reachable through the PHS network)

Achievements and User Feedback Department of Biological Chemistry and Molecular Pharmacology, HMS. Derti A, Roth FP, Church GM, Wu CT. "Mammalian ultraconserved elements are strongly depleted among segmental duplications and copy number variants." Nature Genetics. 2006 Oct;38(10):1216-20. Quote: “The Partners cluster allowed my colleagues and me to find that ultraconserved sequences in the human genome (basically no mutations in hundreds of millions of years) are significantly depleted within duplicated sequences … Without question, this work would not have been possible without the Partners cluster.”

Achievements and User Feedback Children’s Hospital, Boston. Atul Butte and Isaac Kohane. "Creation and implications of a phenome-genome network." Nature Biotechnology. 2006 Jan;24(1):55-62. http://www.nature.com/nbt/journal/v24/n1/pdf/nbt1150.pdf Quote: “You all were awesome. Nice when complex technology works without problems…. At long last, this paper finally JUST was published… I have had nothing but absolute success in using your cluster.”

Achievements and User Feedback Steele Lab, HMS & MGH • Selected submitted papers: • M. M. Dupin, I. Halliday, C. M. Care and L. Munn, "Simple Hybrid method for the simulation of red blood cells", submitted November 2006. • Selected paper in preparation: • M. M. Dupin et al., "New efficiency oriented, hybrid approach for modeling deformable particles in three dimension", in preparation 2007. • Grants awarded: NIH R01 HL64240 (Munn; research) Quote: “Dennis Gurgul has been amazing in providing very high standard technical support, both on the hardware side and software side. … The results and papers we have (and forthcoming) would not have been possible without the Partners cluster help.”

Achievements and User Feedback Children's Hospital Informatics Program at the Harvard-MIT Division of Health Sciences and Technology. Carter SL, Eklund AC, Kohane IS, Harris LN, Szallasi Z. "A signature of chromosomal instability inferred from gene expression profiles predicts clinical outcome in multiple human cancers." Nature Genetics. 2006 Sep;38(9):1043. Quote: “ALL of the data hosting and computational analysis for this paper was done on hpres!! thanks guys!”

Achievements and User Feedback Department of Biostatistics, Harvard School of Public Health (selected publications): Hoffmann T, Lange C. "P2BAT: a massive parallel implementation of PBAT for genome-wide association studies in R." Bioinformatics. 2006 Dec 15;22(24):3103-5. Laird NM, Lange C. "Family-based designs in the age of large-scale gene-association studies." Nature Reviews Genetics. 2006 May;7(5):385-94. "Genomic screening and replication using the same data set in family-based association testing." Nature Genetics 37, 683-691 (2005). Quote: “We managed to get 3 papers published in Nature and one in Science based on the cluster computations. We also won a big grant.”

Achievements and User Feedback MGH, Center for Cancer Research. Ben Wittner. Quote: “We use the cluster to do bootstrap-based statistical analysis…. that would not be possible without a cluster. We also use ..CellProfiler, a program that analyzes images produced by high-throughput microscopy. This too would not be possible without a cluster.”

Achievements and User Feedback Quote: “It is extremely helpful in providing access to a Linux system on which I can run my molecular modeling programs, which demand rapid processor execution. Having this available has made many runs possible which I just could not do before.” John Penniston, MD, Protein Folding, Harvard Medical School. Kenneth Auerbach, Sr. Project Manager, Neurology (BWH). Quote: “Enabled me to do parallel processing of large numbers of candidate small molecules … and prospective binding sites in proteins. Without the cluster these calculations would have taken weeks to perform.” Many other encouraging comments…

Future and On-going Changes Expanding Computing Resources--hardware: • Rescluster • Add 15-20 nodes • Change head node • Add storage • Make available for general Partners usage • Rescluster2 • Reconfigure (new hardware, etc.) • Make it a Matlab / Biopara / SAS server • Use the Matlab scheduler • Use as boat anchor

Future and On-going Changes Expanding Computing Resources--hardware: • Upgrading existing HPRES • Upgrade/migrate the storage system • Windows cluster? • Coming XC3000 Class-c Blades (64 nodes) • Dual Core Woodcrest / 8 GB • Cluster Gateway FS • EVA storage (34 TB raw) • One initial user at first

The Coming RESCLU Architecture: hoped to overcome the I/O bottleneck • One initial user at first (RPDR analysis) • Will be open for general usage at a later date

On-going Changes and Future Plans Expanding Computing Resources--software: • Upgrading management tools • Commercial compilers • Commercial statistics software (Matlab toolboxes, SAS, SPSS, etc.; licenses?)

On-going Changes and Future Plans • Expanding services through interaction • Better understand and support our potential user base, and increase our ability to adapt to the fast-changing research environment • New personnel bridging IT with research • User guidance, troubleshooting, performance evaluation, benchmarking of scientific jobs • Prevent power users from doing “naughty things” and unintentionally abusing the cluster • Get involved at an early stage of the research (apply for funding together?) • Heterogeneous hardware vs. management tasks • LDAP-enabled “single sign-on” for different facilities • Share a file server among clusters • Improve our ability to support other HPC needs, e.g. proteomics and genetics research involving large-scale data processing

Future and On-going Changes • Expanding services through the web • Provide system-specific, application-specific user guidance with job script templates and explanations http://www.partners.org/rescomputing/hpc_cluster • Extend users’ ability to submit/monitor jobs • Provide a discussion platform for users • Reach more users in the Partners network via web services • Help integrate applications with HPC
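As an illustration of what a job script template might contain, here is a minimal Python sketch that renders a batch script for either scheduler in use: LSF (hpres, rescluster) or SGE (rescluster2). The directives are standard `#BSUB` and `#$` options; the function name `render_job_script`, the output-file naming scheme and any queue name passed in are assumptions for illustration, not the templates published on the Research Computing site.

```python
import shlex


def render_job_script(scheduler, job_name, argv, queue=None):
    """Return a bash batch script that runs argv under LSF or SGE.

    Hypothetical helper: file names and queue handling are illustrative.
    """
    lines = ["#!/bin/bash"]
    if scheduler == "lsf":
        lines += [
            f"#BSUB -J {job_name}",          # job name
            f"#BSUB -o {job_name}.%J.out",   # %J expands to the LSF job ID
            f"#BSUB -e {job_name}.%J.err",
        ]
        if queue:
            lines.append(f"#BSUB -q {queue}")
    elif scheduler == "sge":
        lines += [
            "#$ -S /bin/bash",               # shell used to run the job
            f"#$ -N {job_name}",             # job name
            "#$ -cwd",                       # run in the submission directory
            f"#$ -o {job_name}.out",
            f"#$ -e {job_name}.err",
        ]
        if queue:
            lines.append(f"#$ -q {queue}")
    else:
        raise ValueError(f"unknown scheduler: {scheduler!r}")
    # Quote each argument so paths with spaces survive the shell.
    lines.append(shlex.join(argv))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # "my_analysis" and "data set.txt" are placeholders.
    print(render_job_script("lsf", "demo", ["my_analysis", "data set.txt"]))
```

A script rendered this way would be submitted with `bsub < job.sh` on the LSF clusters or `qsub job.sh` on rescluster2. Taking the command as an argument list and quoting it with `shlex.join` keeps file names containing spaces intact.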

Easier job submission and broader reach through an HPC Web Service http://www.partners.org/rescomputing/hpc_cluster/hpctools.htm • Any user with a valid Partners email address can submit jobs and retrieve results from the website http://www.partners.org/rescomputing/hpc_cluster/webportallogin.aspx • Easier job monitoring: users can log in and monitor job status online from the web portal

Possible HPC and Web Service: a web portal that exposes applications as web services, automatically wrapping the execution of your application in scheduler scripts and presenting the results via the web
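A portal that wraps applications in scheduler scripts also has to submit the generated script and keep the job ID so users can check on it later. The Python sketch below shows one way to do that, assuming the usual acknowledgement lines printed by LSF `bsub` ("Job <ID> is submitted to queue <...>.") and SGE `qsub` ("Your job ID (...) has been submitted"). The function names are hypothetical, and a real portal would also need authentication and fuller error handling, which the sketch omits.

```python
import re
import subprocess

# Acknowledgement patterns printed by each scheduler's submit command.
_JOB_ID_PATTERNS = {
    "lsf": re.compile(r"Job <(\d+)> is submitted"),
    "sge": re.compile(r"Your job(?:-array)? (\d+)"),
}


def submit_command(scheduler, script_path):
    """Return (argv, stdin_path) used to submit script_path."""
    if scheduler == "lsf":
        return ["bsub"], script_path         # bsub reads the job script on stdin
    if scheduler == "sge":
        return ["qsub", script_path], None   # qsub takes the script as an argument
    raise ValueError(f"unknown scheduler: {scheduler!r}")


def parse_job_id(scheduler, output):
    """Extract the numeric job ID from the submit command's output."""
    pattern = _JOB_ID_PATTERNS.get(scheduler)
    if pattern is None:
        raise ValueError(f"unknown scheduler: {scheduler!r}")
    match = pattern.search(output)
    if match is None:
        raise RuntimeError(f"no job ID found in: {output!r}")
    return int(match.group(1))


def submit(scheduler, script_path):
    """Submit script_path and return its job ID (needs bsub or qsub on PATH)."""
    argv, stdin_path = submit_command(scheduler, script_path)
    if stdin_path is None:
        result = subprocess.run(argv, capture_output=True, text=True, check=True)
    else:
        with open(stdin_path) as stdin:
            result = subprocess.run(argv, stdin=stdin, capture_output=True,
                                    text=True, check=True)
    return parse_job_id(scheduler, result.stdout)
```

The returned ID is what such a portal could later pass to `bjobs` (LSF) or `qstat` (SGE) to show the job's status on the monitoring page.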

Getting an Account • Available to researchers in the greater Partners community (MGH, BWH, DFCI, Harvard, MIT, etc.) http://www.partners.org/rescomputing/hpc_cluster/account_registration.aspx

The good news: Computers allow us to work 100% faster. The bad news: They generate 300% more work. THANK YOU!