Experience-Based Chess Play

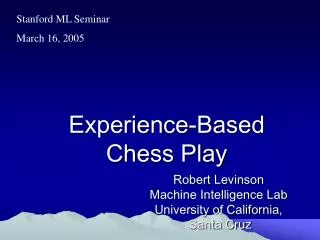

Stanford ML Seminar March 16, 2005 Experience-Based Chess Play Robert Levinson Machine Intelligence Lab University of California, Santa Cruz Outline Review of State-of-the-art in Games Review of Computer Chess Method Blindspots and Unsolved Issues ================== 4. Morph

Experience-Based Chess Play

E N D

Presentation Transcript

Stanford ML Seminar March 16, 2005 Experience-Based Chess Play Robert Levinson Machine Intelligence Lab University of California, Santa Cruz

Outline • Review of State-of-the-art in Games • Review of Computer Chess Method • Blindspots and Unsolved Issues ================== 4. Morph 4a. Philosophy and Results 4b. Patterns/Evaluation 4c. Learning * Td-learning * Neural Nets * Genetic Algorithms

Why Chess? • Human/computer approaches very different • Most studied game • Well-known internationally and by public • Cognitive studies available • Accurate, well-defined rating system ! • Complex and Non-Uniform • John McCarthy, Alan Turing, Claude Shannon, Herb Simon and Ken Thompson and…. • Game theoretic value unknown • Active Research Community/Journal

Kasparov vs. Deep Blue • 1. Deep Blue can examine and evaluate up to 200,000,000 chess positions per second • Garry Kasparov can examine and evaluate up to three chess positions per second • 2. Deep Blue has a small amount of chess knowledge and an enormous amount of calculation ability. • Garry Kasparov has a large amount of chess knowledge and a somewhat smaller amount of calculation ability. • 3. Garry Kasparov uses his tremendous sense of feeling and intuition to play world champion-calibre chess. • Deep Blue is a machine that is incapable of feeling or intuition. • 4. Deep Blue has benefitted from the guidance of five IBM research scientists and one international grandmaster. • Garry Kasparov is guided by his coach Yuri Dokhoian and by his own driving passion to play the finest chess in the world. • 5. Garry Kasparov is able to learn and adapt very quickly from his own successes and mistakes. • Deep Blue, as it stands today, is not a "learning system." It is therefore not capable of utilizing artificial intelligence to either learn from its opponent or "think" about the current position of the chessboard. • 6. Deep Blue can never forget, be distracted or feel intimidated by external forces (such as Kasparov's infamous "stare"). • Garry Kasparov is an intense competitor, but he is still susceptible to human frailties such as fatigue, boredom and loss of concentration.

Recent Man vs. Machine Matches • Garry Kasparov versus Deep Junior, January 26 - February 7, 2003 in New York City, USA. Result: 3 - 3 draw. • Evgeny Bareev versus Hiarcs-X, January 28 - 31 , 2003 in Maastricht, Netherlands. Result: 2 - 2 draw. • Vladimir Kramnik versus Deep Fritz, October 2 - 22, 2002 in Manama, Bahrain. Result: 4 - 4 draw.

Minimax Example terminal nodes: values calculated from some evaluation function Max Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

Max other nodes: values calculated via minimax algorithm Min Max 4 7 6 2 6 3 4 5 1 2 5 4 1 2 6 3 4 3 Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

Max Min 7 6 5 5 6 4 Max 4 7 6 2 6 3 4 5 1 2 5 4 1 2 6 3 4 3 Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

Max 5 3 4 Min 7 6 5 5 6 4 Max 4 7 6 2 6 3 4 5 1 2 5 4 1 2 6 3 4 3 Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

Actual move made by Max 5 Max Max makes first move down the left hand side of the tree, in the expectation that countermove by Min is now predicted. After Min makes move, tree will need to be regenerated and the minimax procedure re-applied to new tree Possible later moves 5 3 4 Min 7 6 5 5 6 4 Max 4 7 6 2 6 3 4 5 1 2 5 4 1 2 6 3 4 3 Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

5 Max 5 3 4 Min 7 6 5 5 6 4 Max 4 7 6 2 6 3 4 5 1 2 5 4 1 2 6 3 4 3 Min 4 7 9 6 9 8 8 5 6 7 5 2 3 2 5 4 9 3

Computer chess • Let's say you start with a chess board set up for the start of a game. Each player has 16 pieces. Let's say that white starts. White has 20 possible moves: • The white player can move any pawn forward one or two positions. • The white player can move either knight in two different ways. • The white player chooses one of those 20 moves and makes it. For the black player, the options are the same: 20 possible moves. So black chooses a move. • Now white can move again. This next move depends on the first move that white chose to make, but there are about 20 or so moves white can make given the current board position, and then black has 20 or so moves it can make, and so on.

Chess complexity There are 20 possible moves for white. There are 20 * 20 = 400 possible moves for black, depending on what white does. Then there are 400 * 20 = 8,000 for white. Then there are 8,000 * 20 = 160,000 for black, and so on. If you were to fully develop the entire tree for all possible chess moves, the total number of board positions is about 1,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000, or 10120. There have only been 1026 nanoseconds since the Big Bang. There are thought to be only 1075 atoms in the entire universe.

How computer chess works No computer is ever going to calculate the entire tree. What a chess computer tries to do is generate the board-position tree five or 10 or 20 moves into the future. Assuming that there are about 20 possible moves for any board position, a five-level tree contains 3,200,000 board positions. A 10-level tree contains about 10,000,000,000,000 (10 trillion) positions. The depth of the tree that a computer can calculate is controlled by the speed of the computer playing the game.

Is computer chess intelligent? The minimax algorithm alternates between the maximums and minimums as it moves up the tree. This process is completely mechanical and involves no insight. It is simply a brute force calculation that applies an evaluation function to all possible board positions in a tree of a certain depth. What is interesting is that this sort of technique works pretty well. On a fast-enough computer, the algorithm can look far enough ahead to play a very good game. If you add in learning techniques that modify the evaluation function based on past games, the machine can even improve over time. The key thing to keep in mind, however, is that this is nothing like human thought. But does it have to be?

Leaf nodes examined Search Depth

Hmmm. What to do? • Black is to move:

…Ra5! • White to move:

What is strategy? • the art of devising or employing plans or stratagems toward a goal where favorable.

Science Would you say a traditional chess program's chess strength is based mainly on a firm axiomatic theory about the strengths and balances of competing differential semiotic trajectory units in a multiagent hypergeoemetric topological manifold-like zero-sum dynamic environment or simply because it is an accurate short-term calculator??!

Morph Philosophy Reinforcement Learning Mathematical View of Chess Don’t Cheat!!!

#define DOUBLED_PAWN_PENALTY 10 #define ISOLATED_PAWN_PENALTY 20 #define BACKWARDS_PAWN_PENALTY 8 #define PASSED_PAWN_BONUS 20 #define ROOK_SEMI_OPEN_FILE_BONUS 10 #define ROOK_OPEN_FILE_BONUS 15 #define ROOK_ON_SEVENTH_BONUS 20 /* the values of the pieces */ int piece_value[6] = 100,300,350,500,900,0 Cheating!

Goal: ELO 3000+ 2800+ World Champion 2600+ GM 2400+ IM 2200+ FM (> 99 percent) • Expert 1600 Median (> 50 percent) 1543 Morph 1000 Novice (beginning tournament player) 555 Random

Milestones • MorphI: 1995. Graph Matching Draws GnuChess every 10 games. 800 rating • Morph II: 1998. Plays any game, compiles neural net based on First Order Logic rules. Optimal Tic-Tac-Toe, NIM. • Morph IV : 2003. Neural Neighborhoods + Improved Eval Reaches 1036. • Summer 2004: 1450 (improved implementation) • Today : 1558 (neural net +pattern variety + genetic alg.) • Next: genetically selected patterns and nets.

Total Information = Diversity + Symmetry • Diversity corresponds to Comp Sci “Complexity” = resources required. • Diversity can often only be resolved with Combinatorial Search

Exploit Symmetry !! “Invariant with respect to transformation.” “Shared information between objects or systems or their representations.” AB+AC = A(B+C).

Symmetry Synonyms • similarity • commonality • structure • mutual information • relationship • pattern • redundancy

Novices Experts

Levels of Learning Levels: 0. None – Brute Force It 1. Empirically –Statistical Understanding - Inductive 1a. Supervise b. Reward/Punish + TD Learning c. Imitate 2. Analytically – Mathematical - Deductive But Must Be Efficient!

Chess and Stock Trading Patterns and their interpretation • Technical Analysis: Forecasting market activity based on the study of price charts, trends and other technical data. • Fundamental Analysis: Forecasting based on analysis of economic and geopolitical data. • A Trading plan is formulated and executed only after a thorough and systematic Technical and Fundamental analysis. • All trading plans incorporate Risk Management procedures.

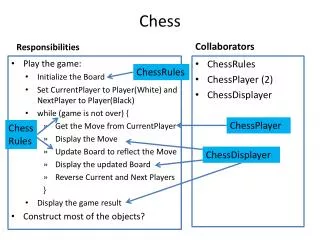

THE MORPH ARCHITECTURE Pattern-based! CHESS GAMES NEW POSITION INTUITIVELY BEST MOVE COMPILED MEMORY Pattern Processor & Matcher 4-6 PLY Search WEIGHTS ADB

MORPH Positions Chess Patterns Weights 4-Ply Search • Reply

Morph patterns • graph patterns • both nodes, edge labeled • direct attack, indirect attack, discovered attack • material patterns • Piece/square tables • (later : graph patterns realized as neural networks)

The game of Chess – example • White has over 30 possible moves • If black’s turn – can capture pawn at c3 and check (also fork)

Pattern weight formulation of search knowledge • weights • real number within the reinforcement value range, e.g {0,1} • expected value of reinforcement given that the current state satisfied the pattern • pws : <p1, .7> • the states that have p1 as a feature are more likely to lead to a win than a loss • advantage • low-level of granularity and uniformity

Evaluation Scheme 64 Neighborhood Values are Computed and Then Combined

Morph - evaluation function KEY: Use product rather than sum!! WHY? Makes risk adverse……..

Morph - evaluation asproduct x1 x2 x1*x2 .5 .5 0.25 .2 .8 0.16 .2 .5 0.10 .8 .5 0.40 .8 .8 0.64 .2 .2 0.04 .1 .8 0.08 .2 .9 0.18 1 .8 0.80 0 .2 0.00

Learning Ingredients • Graph Patterns • Neural Networks • Temporal Difference Learning • Simulated Annealing • Representation Change • Genetic Algorithms