Artificial Intelligence 14. Inductive Logic Programming



Artificial Intelligence 14. Inductive Logic Programming Course V231 Department of Computing Imperial College, London © Simon Colton

Inductive Logic Programming • Representation scheme used • Logic Programs • Need to • Recap logic programs • Specify the learning problem • Specify the operators • Worry about search considerations • Also • Go through a session with Progol • Look at applications

Remember Logic Programs? • Subset of first order logic • All sentences are Horn clauses • Implications where a conjunction of literals (body) • Imply a single goal literal (head) • Single facts can also be Horn clauses • With no body • A logic program consists of: • A set of Horn clauses • ILP theory and practice is highly formal • Best way to progress and to show progress

Horn Clauses and Entailment • Writing Horn clauses: • h(X,Y) ← b1(X,Y) ∧ b2(X) ∧ ... ∧ bn(X,Y,Z) • Also replace conjunctions with a capital letter • h(X,Y) ← b1, B • Assume lower case letters are single literals • Entailment: • When one logic program, L1, can be proved using another logic program, L2 • We write: L2 ⊨ L1 • Note that if L2 ⊭ L1 • This does not mean that L2 entails that L1 is false

Logic Programs in ILP • Start with background information, • As a logic program labelled B • Also start with a set of positive examples of the concept required to learn • Represented as a logic program labelled E+ • And a set of negative examples of the concept required to learn • Represented as a logic program labelled E- • ILP system will learn a hypothesis • Which is also a logic program, labelled H

Explaining Examples • A hypothesis H explains example e • If logic program e is entailed by H • So, we prove e is true • Example • H: class(A, fish) :- has_gills(A) • B: has_gills(trout) • Positive example: class(trout, fish) • Entailed by H ∧ B taken together • Note that negative examples can also be entailed • By the hypothesis and background taken together
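The fish example above can be checked mechanically. The following is a minimal sketch, assuming a toy representation that is not part of the course material: atoms are tuples, the string "A" is the only variable, and `entails` is a hypothetical helper that forward-chains the hypothesis clauses over the background facts. The fact about the dog is added only to show a failing case.

```python
# Toy entailment check for single-variable Horn clauses over ground facts.
# Assumed representation: an atom is a tuple (predicate, arg1, ...), and the
# string "A" stands for the clause's only variable.

def substitute(atom, value):
    """Replace the variable "A" in an atom with a constant."""
    return tuple(value if term == "A" else term for term in atom)

def entails(rules, facts, example):
    """Forward-chain the rules over the facts until nothing new is derived."""
    known = set(facts)
    constants = {term for atom in known for term in atom[1:]}
    changed = True
    while changed:
        changed = False
        for head, body in rules:
            for c in constants:
                if all(substitute(b, c) in known for b in body):
                    new_fact = substitute(head, c)
                    if new_fact not in known:
                        known.add(new_fact)
                        changed = True
    return example in known

# H: class(A, fish) :- has_gills(A)
H = [(("class", "A", "fish"), [("has_gills", "A")])]
# B: has_gills(trout), plus one extra fact (an assumption) about a dog
B = {("has_gills", "trout"), ("has_legs", "dog", 4)}

print(entails(H, B, ("class", "trout", "fish")))  # True
print(entails(H, B, ("class", "dog", "fish")))    # False
```

Real ILP systems use resolution-based theorem proving rather than this exhaustive grounding, which only works here because there is a single variable and a handful of constants.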

Prior Conditions on the Problem • Problem must be satisfiable: • Prior satisfiability: ∀e ∈ E- (B ⊭ e) • So, the background does not entail any negative example (if it did, no hypothesis could rectify this) • This does not mean that B entails that e is false • Problem must not already be solved: • Prior necessity: ∃e ∈ E+ (B ⊭ e) • If all the positive examples were entailed by the background, then we could take H = B.

Posterior Conditions on Hypothesis • Taken with B, H should entail all positives • Posterior sufficiency: ∀e ∈ E+ (B ∧ H ⊨ e) • Taken with B, H should entail no negatives • Posterior satisfiability: ∀e ∈ E- (B ∧ H ⊭ e) • If the hypothesis meets these two conditions • It will have perfectly solved the problem • Summary: • All positives can be derived from B ∧ H • But no negatives can be derived from B ∧ H

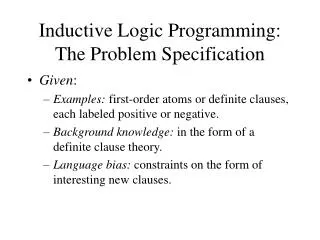

Problem Specification • Given logic programs E+, E-, B • Which meet the prior satisfiability and necessity conditions • Learn a logic program H • Such that B ∧ H meets the posterior satisfiability and sufficiency conditions
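The four conditions in the problem specification can be written as simple checks. This is a sketch under stated assumptions, not how any ILP system implements them: the background is a hypothetical dictionary from animal to attribute names, a hypothesis is a list of (class label, required attributes) pairs, and `covers` and `check_conditions` are illustrative names. Because this toy background contains no class facts, B alone entails no example.

```python
def covers(hypothesis, background, example):
    """True if B together with H derives the example class(animal, label)."""
    animal, label = example
    facts = background.get(animal, set())
    return any(lbl == label and body <= facts for lbl, body in hypothesis)

def check_conditions(background, positives, negatives, hypothesis):
    """Evaluate the prior and posterior conditions for a learning problem."""
    no_h = []  # B on its own, with no hypothesis clauses
    return {
        "prior_satisfiability": all(not covers(no_h, background, e) for e in negatives),
        "prior_necessity": any(not covers(no_h, background, e) for e in positives),
        "posterior_sufficiency": all(covers(hypothesis, background, e) for e in positives),
        "posterior_satisfiability": all(not covers(hypothesis, background, e) for e in negatives),
    }

# Hypothetical data in the spirit of the animals example.
background = {
    "trout": {"has_gills", "has_eggs"},
    "dog": {"has_milk", "homeothermic"},
}
positives = [("trout", "fish"), ("dog", "mammal")]
negatives = [("trout", "mammal"), ("dog", "fish")]
hypothesis = [("fish", {"has_gills"}), ("mammal", {"has_milk"})]

report = check_conditions(background, positives, negatives, hypothesis)
print(report)  # all four conditions hold for this hypothesis
```

A hypothesis with an empty body, such as `[("fish", set())]`, would explain the negative `("dog", "fish")` and so fail posterior satisfiability.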

Moving in Logic Program Space • Can use rules of inference to find new LPs • Deductive rules of inference • Modus ponens, resolution, etc. • Map from the general to the specific • i.e., from L1 to L2 such that L1 ⊨ L2 • Look today at inductive rules of inference • Will invert the resolution rule • Four ways to do this • Map from the specific to the general • i.e., from L1 to L2 such that L2 ⊨ L1 • Inductive inference rules are not sound

Inverting Deductive Rules • A man alternates between two hats, one each day • Whenever he wears hat X, he gets a pain; hat Y is OK • Knows that a hat with a pin in it causes pain • Infers that his hat has a pin in it • Looks and finds that hat X does have a pin in it • Uses Modus Ponens to prove that • His pain is caused by a pin in hat X • Original inference (pin in hat X) was unsound • Could be many reasons for the pain in his head • Was induced so that Modus Ponens could be used

Inverting Resolution 1. Absorption • Rule of inference written the same way as for deductive rules • Input above the line, and the inference below the line • Remember that q is a single literal • And that A, B are conjunctions of literals • Can prove that the original clauses • Follow from the hypothesised clause by resolution

Proving Given Clauses • Exercise: translate into CNF • And convince yourselves • Use the V diagram, • because we don’t want to write it as a rule of deduction • Say that Absorption is a V-operator
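In the usual propositional notation, absorption can be written as below. This is a reconstruction in the standard form found in the ILP literature, so the exact layout is an assumption; as on the slide, p and q are single literals, and A and B are conjunctions of literals.

```latex
\[
\textbf{Absorption:}\qquad
\frac{q \leftarrow A \qquad p \leftarrow A, B}
     {q \leftarrow A \qquad p \leftarrow q, B}
\]
```

Resolving the hypothesised clause p ← q, B with q ← A on the literal q gives back p ← A, B, which is why the given clauses follow from the clauses below the line.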

Inverting Resolution 2. Identification • Rule of inference: • Resolution Proof:

Inverting Resolution 3. Intra-Construction • Rule of inference: • Resolution Proof:
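Identification and intra-construction can be written in the same propositional notation. These are the standard forms from the ILP literature, given here as a reconstruction rather than the slides' own diagrams; p and q are single literals, and A, B, C are conjunctions of literals.

```latex
\[
\textbf{Identification:}\qquad
\frac{p \leftarrow A, B \qquad p \leftarrow A, q}
     {q \leftarrow B \qquad p \leftarrow A, q}
\]
\[
\textbf{Intra-construction:}\qquad
\frac{p \leftarrow A, B \qquad p \leftarrow A, C}
     {q \leftarrow B \qquad p \leftarrow A, q \qquad q \leftarrow C}
\]
```

For identification, resolving p ← A, q with q ← B recovers p ← A, B. For intra-construction, resolving p ← A, q with q ← B and with q ← C recovers both given clauses, and q is a symbol that did not appear in the input.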

Predicate Invention • Say that Intra-construction is a W-operator • This has introduced the new symbol q • q is a predicate which is resolved away • In the resolution proof • ILP systems using intra-construction • Perform predicate invention • Toy example: • When learning the insertion sort algorithm • ILP system (Progol) invents concept of list insertion

Inverting Resolution 4. Inter-Construction • Rule of inference: • Resolution Proof: • Predicate invention again
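Inter-construction in the same propositional notation is sketched below. This is the standard form from the ILP literature, not necessarily the slide's exact layout, and the name r for the invented predicate is an assumption.

```latex
\[
\textbf{Inter-construction:}\qquad
\frac{p \leftarrow A, B \qquad q \leftarrow A, C}
     {p \leftarrow r, B \qquad r \leftarrow A \qquad q \leftarrow r, C}
\]
```

Resolving p ← r, B with r ← A recovers p ← A, B, and resolving q ← r, C with r ← A recovers q ← A, C. The new literal r names the part of the body that the two given clauses share.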

Generic Search Strategy • Assume this kind of search: • A set of current hypotheses, QH, is maintained • At each search step, a hypothesis H is chosen from QH • H is expanded using inference rules • Which adds more current hypotheses to QH • Search stops when a termination condition is met by a hypothesis • Some (of many) questions: • Initialisation, choice of H, termination, how to expand…
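The loop described above can be sketched as follows. Everything concrete here is an assumption made for illustration: hypotheses are sets of attribute names standing in for clause bodies, QH is a first-in, first-out queue, expansion drops one body literal (a generalisation step), and the search stops at the first hypothesis that explains all positives and no negatives.

```python
from collections import deque

def generic_search(initial, expand, is_solution, max_steps=1000):
    """Maintain a queue QH of hypotheses; choose one, test it, expand it."""
    qh = deque(initial)            # QH: the current hypotheses
    seen = set(initial)
    for _ in range(max_steps):
        if not qh:
            return None
        h = qh.popleft()           # choice of H: oldest first (an assumption)
        if is_solution(h):         # termination condition
            return h
        for new_h in expand(h):    # expansion via an inference rule
            if new_h not in seen:
                seen.add(new_h)
                qh.append(new_h)
    return None

# Hypothetical data: attribute names are made up for this example.
animals = {
    "lizard": {"has_scales", "has_legs_4", "lives_on_land"},
    "snake": {"has_scales", "lives_on_land"},
    "dog": {"has_legs_4", "lives_on_land"},
}
positives, negatives = ["lizard", "snake"], ["dog"]

def explains(h, animal):
    """A body explains an animal if every literal in it holds for the animal."""
    return h <= animals[animal]

def is_solution(h):
    return (all(explains(h, p) for p in positives)
            and not any(explains(h, n) for n in negatives))

def drop_one_literal(h):
    """Generalise by removing one body literal at a time."""
    return [h - {lit} for lit in h]

start = frozenset(animals["lizard"])  # most specific hypothesis for one positive
found = generic_search([start], drop_one_literal, is_solution)
print(sorted(found))  # ['has_scales', 'lives_on_land']
```

Swapping the queue discipline, the expansion function, or the termination test gives the different search strategies that the questions at the end of the slide point to.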

Search (Extra Logical) Considerations: Generality and Speciality • There is a great deal of variation in • Search strategies between ILP programs • Definition of generality/speciality • A hypothesis G is more general than hypothesis S iff G ⊨ S. S is said to be more specific than G • A deductive rule of inference maps a conjunction of clauses G onto a conjunction of clauses S, such that G ⊨ S. • These are specialisation rules (Modus Ponens, resolution…) • An inductive rule of inference maps a conjunction of clauses S onto a conjunction of clauses G, such that G ⊨ S. • These are generalisation rules (absorption, identification…)

Search Direction • ILP systems differ in their overall search strategy • From Specific to General • Start with the most specific hypothesis • Which explains a small number (possibly 1) of positives • Keep generalising to explain more positive examples • Using generalisation rules (inductive) such as inverse resolution • Are careful not to allow any negatives to be explained • From General to Specific • Start with the empty clause as hypothesis • Which explains everything • Keep specialising to exclude more and more negative examples • Using specialisation rules (deductive) such as resolution • Are careful to make sure all positives are still explained

Pruning • Remember that: • A set of current hypotheses, QH, is maintained • And each hypothesis explains a set of positive/negative examples • If G is more general than S • Then G will explain at least as many (>=) examples as S • When searching from specific to general • Can prune any hypothesis which explains a negative • Because further generalisation will not rectify this situation • When searching from general to specific • Can prune any hypothesis which doesn’t explain all positives • Because further specialisation will not rectify this situation
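The two pruning rules can be sketched as a filter over QH. The representation is an assumption for illustration: a hypothesis is a set of attribute names standing in for a clause body, so a body with fewer literals is more general, and the animals and attribute names are hypothetical.

```python
# Hypothetical data: a body explains an animal when all its literals hold.
animals = {
    "lizard": {"has_scales", "has_legs_4", "lives_on_land"},
    "snake": {"has_scales", "lives_on_land"},
    "dog": {"has_legs_4", "lives_on_land"},
}
positives, negatives = ["lizard", "snake"], ["dog"]

def prune(hypotheses, direction):
    """Drop hypotheses that can no longer lead to a solution."""
    kept = []
    for h in hypotheses:
        explains_negative = any(h <= animals[n] for n in negatives)
        misses_positive = any(not (h <= animals[p]) for p in positives)
        if direction == "specific_to_general" and explains_negative:
            continue  # generalising only adds coverage; the negative stays explained
        if direction == "general_to_specific" and misses_positive:
            continue  # specialising only removes coverage; the positive stays missed
        kept.append(h)
    return kept

qh = [
    frozenset({"has_scales"}),
    frozenset({"lives_on_land"}),
    frozenset({"has_scales", "has_legs_4"}),
]

# {"lives_on_land"} explains the dog, so it is pruned when generalising.
print([sorted(h) for h in prune(qh, "specific_to_general")])
# {"has_scales", "has_legs_4"} misses the snake, so it is pruned when specialising.
print([sorted(h) for h in prune(qh, "general_to_specific")])
```

Both rules rely on the monotonicity stated above: a more general hypothesis explains at least the examples that a more specific one does.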

Ordering • There will be many current hypotheses in QH to choose from. • Which is chosen first? • ILP systems use a probability distribution • Which assigns a value P(H | B ∧ E) to each H • A Bayesian measure is defined, based on • The number of positive/negative examples explained • When this is equal, ILP systems use • A sophisticated Occam’s Razor • Defined by Algorithmic Complexity theory or something similar
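As a rough illustration of ordering, the sketch below ranks hypotheses by examples explained and breaks ties in favour of shorter clauses. This is a deliberately simple stand-in and not the Bayesian measure or the complexity-based Occam's Razor mentioned above; the scoring function, the data, and the attribute names are all assumptions.

```python
# Hypothetical data: a body explains an animal when all its literals hold.
animals = {
    "lizard": {"has_scales", "has_legs_4", "lives_on_land"},
    "snake": {"has_scales", "lives_on_land"},
    "dog": {"has_legs_4", "lives_on_land"},
}
positives, negatives = ["lizard", "snake"], ["dog"]

def score(h):
    """Higher is better: net examples explained first, then fewer body literals."""
    pos = sum(h <= animals[p] for p in positives)
    neg = sum(h <= animals[n] for n in negatives)
    return (pos - neg, -len(h))

candidates = [
    frozenset({"has_scales", "lives_on_land"}),
    frozenset({"lives_on_land"}),
    frozenset({"has_scales"}),
]

best = max(candidates, key=score)
print(sorted(best))  # ['has_scales']
```

Here the first and third candidates explain the same examples, so the shorter body wins the tie, which is the Occam's Razor idea in its crudest form.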

Language Restrictions • Another way to reduce the search • Specify what format clauses in hypotheses are allowed to have • One possibility • Restrict the number of existential variables allowed • Another possibility • Be explicit about the nature of arguments in literals • Which arguments in body literals are • Instantiated (ground) terms • Variables given in the head literal • New variables • See Progol’s mode declarations

Example Session with Progol • Animals dataset • Learning task: learn rules which classify animals into fish, mammal, reptile, bird • Rules based on attributes of the animals • Physical attributes: number of legs, covering (fur, feathers, etc.) • Other attributes: produce milk, lay eggs, etc. • 16 animals are supplied • 7 attributes are supplied

Input file: mode declarations • Mode declarations given at the top of the file • These are language restrictions • Declaration about the head of hypothesis clauses: • :- modeh(1,class(+animal,#class)) • Means the hypothesis head will be given an animal variable and will return a ground instantiation of class • Declaration about the body clauses: • :- modeb(1,has_legs(+animal,#nat)) • Means that it is OK to use the has_legs predicate in the body • And that it will take the animal variable supplied in the head and return an instantiated natural number

Input file: type information • Next comes information about types of object • Each ground term (constant) must be typed • animal(dog), animal(dolphin), … etc. • class(mammal), class(fish), … etc. • covering(hair), covering(none), … etc. • habitat(land), habitat(air), … etc.

Input file: background concepts • Next comes the logic program B, containing these predicates: • has_covering/2, has_legs/2, has_milk/1, • homeothermic/1, habitat/2, has_eggs/1, has_gills/1 • E.g., • has_covering(dog, hair), has_milk(platypus), • has_legs(penguin, 2), homeothermic(dog), • habitat(eagle, air), habitat(eagle, land), • has_eggs(eagle), has_gills(trout), etc.

Input file: Examples • Finally, E+ and E- are supplied • Positives: • class(lizard, reptile) • class(trout, fish) • class(bat, mammal), etc. • Negatives: • :- class(trout, mammal) • :- class(herring, mammal) • :- class(platypus, reptile), etc.

Output file: generalisations • We see Progol starting with the most specific hypothesis for the case when the animal is a reptile • Starts with the lizard example and finds the most specific clause: • class(A, reptile) :- has_covering(A,scales), has_legs(A,4), has_eggs(A), habitat(A, land) • Then finds 12 generalisations of this • Examples • class(A, reptile) :- has_covering(A, scales). • class(A, reptile) :- has_eggs(A), has_legs(A, 4). • Then chooses the best one: • class(A, reptile) :- has_covering(A, scales), has_legs(A, 4). • This process is repeated for fish, mammal and bird
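The step from one example to a most specific clause, and then to its generalisations, can be imitated with a short sketch. This is a simplification under stated assumptions, not Progol's procedure, which is driven by the mode declarations: the lizard facts are read off the most specific clause above, `most_specific_body` is a hypothetical helper, and candidate generalisations are simply the non-empty proper subsets of the body, so their number need not match what Progol reports.

```python
from itertools import combinations

# Background facts about the lizard, taken from the most specific clause above,
# plus one unrelated fact to show that other animals are ignored.
background = [
    ("has_covering", "lizard", "scales"),
    ("has_legs", "lizard", 4),
    ("has_eggs", "lizard"),
    ("habitat", "lizard", "land"),
    ("has_gills", "trout"),
]

def most_specific_body(animal, facts):
    """Collect every fact about the animal, replacing the animal with variable A."""
    return [(f[0], "A") + f[2:] for f in facts if f[1] == animal]

def show(literal):
    """Render a literal tuple in Prolog-like syntax."""
    return f"{literal[0]}({', '.join(str(t) for t in literal[1:])})"

body = most_specific_body("lizard", background)
print("class(A, reptile) :- " + ", ".join(show(lit) for lit in body) + ".")

# Naive generalisations: drop one or more body literals, keeping at least one.
generalisations = [subset
                   for k in range(1, len(body))
                   for subset in combinations(body, k)]
print(len(generalisations))  # 14 candidate bodies from this naive enumeration
```

Each candidate would then be scored against the positive and negative examples to choose the best clause for the class.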

Output file: Final Hypothesis • class(A, reptile) :- has_covering(A,scales), has_legs(A,4). • class(A, mammal) :- homeothermic(A), has_milk(A). • class(A, fish) :- has_legs(A,0), has_eggs(A). • class(A, reptile) :- has_covering(A,scales), habitat(A, land). • class(A, bird) :- has_covering(A,feathers). • Gets 100% predictive accuracy on training set

Some Applications of ILP (See notes for details) • Finite Element Mesh Design • Predictive Toxicology • Protein Structure Prediction • Generating Program Invariants