Sample Selection Bias – Covariate Shift: Problems, Solutions, and Applications

Sample Selection Bias – Covariate Shift: Problems, Solutions, and Applications. Wei Fan, IBM T.J.Watson Research Masashi Sugiyama, Tokyo Institute of Technology Updated PPT is available: http//www.weifan.info/tutorial.htm. Overview of Sample Selection Bias Problem. A Toy Example.

Sample Selection Bias – Covariate Shift: Problems, Solutions, and Applications

E N D

Presentation Transcript

Sample Selection Bias – Covariate Shift: Problems, Solutions, and Applications Wei Fan, IBM T.J.Watson Research Masashi Sugiyama, Tokyo Institute of Technology Updated PPT is available: http//www.weifan.info/tutorial.htm

A Toy Example Two classes: red and green red: f2>f1 green: f2<=f1

Unbiased and Biased Samples Not so-biased sampling Biased sampling

Unbiased 96.9% Unbiased 97.1% Unbiased 96.405% Biased 95.9% Biased 92.7% Biased 92.1% Effect on Learning • Some techniques are more sensitive to bias than others. • One important question: • How to reduce the effect of sample selection bias?

Normally, banks only have data of their own customers • “Late payment, default” models are computed using their own data • New customers may not completely follow the same distribution. Ubiquitous • Loan Approval • Drug screening • Weather forecasting • Ad Campaign • Fraud Detection • User Profiling • Biomedical Informatics • Intrusion Detection • Insurance • etc

Face Recognition • Sample selection bias: • Training samples are taken inside research lab, where there are a few women. • Test samples: in real-world, men-women ratio is almost 50-50. The Yale Face Database B

Brain-Computer Interface (BCI) • Control computers by EEG signals: • Input: EEG signals • Output: Left or Right Figure provided by Fraunhofer FIRST, Berlin, Germany

Training • Imagine left/right-hand movement following the letter on the screen Movie provided by Fraunhofer FIRST, Berlin, Germany

Testing: Playing Games • “Brain-Pong” Movie provided by Fraunhofer FIRST, Berlin, Germany

Non-Stationarity in EEG Features • Different mental conditions (attention, sleepiness etc.) between training and test phases may change the EEG signals. Bandpower differences between training and test phases Features extracted from brain activity during training and test phases Figures provided by Fraunhofer FIRST, Berlin, Germany

Robot Controlby Reinforcement Learning • Let the robot learn how to autonomously move without explicit supervision. Khepera Robot

Rewards Robot moves autonomously = goes forward without hitting wall • Give robot rewards: • Go forward: Positive reward • Hit wall: Negative reward • Goal: Learn the control policy that maximizes future rewards

Example • After learning:

Policy Iteration and Covariate Shift • Policy iteration: • Updating the policy correspond to changing the input distributions! Evaluate control policy Improve control policy

Bias as Distribution • Think of “sampling an example (x,y) into the training data” as an event denoted by random variable s • s=1: example (x,y) is sampled into the training data • s=0: example (x,y) is not sampled. • Think of bias as a conditional probability of “s=1” dependent on x and y • P(s=1|x,y) : the probability for (x,y) to be sampled into the training data, conditional on the example’s feature vector x and class label y.

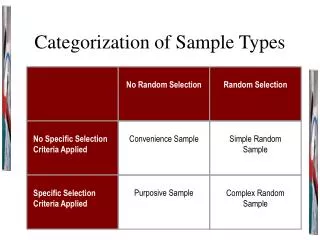

Categorization(Zadrozy’04, Fan et al’05, Fan and Davidson’07) • No Sample Selection Bias • P(s=1|x,y) = P(s=1) • Feature Bias/Covariate Shift • P(s=1|x,y) = P(s=1|x) • Class Bias • P(s=1|x,y) = P(s=1|y) • Complete Bias • No more reduction

Bias for a Training Set • How P(s=1|x,y) is computed • Practically, for a given training set D • P(s=1|x,y) = 1: if (x,y) is sampled into D • P(s=1|x,y) = 0: otherwise • Alternatively, consider D of the size can be sampled “exhaustively” from the universe of examples.

Realistic Datasets are biased? • Most datasets are biased. • Unlikely to sample each and every feature vector. • For most problems, it is at least feature bias. • P(s=1|x,y) = P(s=1|x)

Effect on Learning • Learning algorithms estimate the “true conditional probability” • True probability P(y|x), such as P(fraud|x)? • Estimated probabilty P(y|x,M): M is the model built. • Conditional probability in the biased data. • P(y|x,s=1) • Key Issue: • P(y|x,s=1) = P(y|x) ?

Heckman’s Two-Step Approach • Estimate one’s donation amount if one does donate. • Accurate estimate cannot be obtained by a regression using only data from donors. • First Step: Probit model to estimate probability to donate: • Second Step: regression model to estimate donation: • Expected error • Gaussian assumption

Covariate Shift or Feature Bias • However, no chance for generalization if training and test samples have nothing in common. • Covariate shift: • Input distribution changes • Functional relation remains unchanged

Example of Covariate Shift (Weak) extrapolation: Predict output values outside training region Training samples Test samples

Covariate Shift Adaptation • To illustrate the effect of covariate shift, let’s focus on linear extrapolation Training samples Test samples True function Learned function

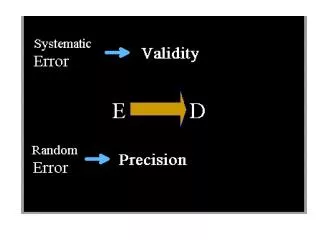

Generalization Error= Bias + Variance : expectation over noise

Model Specification • Model is said to be correctly specified if • In practice, our model may not be correct. • Therefore, we need a theory for misspecified models!

If model is correct: OLS minimizes bias asymptotically If model is misspecified: OLS does not minimize bias even asymptotically. We want to reduce bias! Ordinary Least-Squares (OLS)

Law of Large Numbers • Sample average converges to the population mean: • We want to estimate the expectation overtest input points only using training input points .

Key Trick:Importance-Weighted Average • Importance: Ratio of test and training input densities • Importance-weighted average: (cf. importance sampling)

Even for misspedified models, IWLS minimizes bias asymptotically. We need to estimate importance in practice. Importance-Weighted LS (Shimodaira, JSPI2000) :Assumed strictly positive

Use of Unlabeled Samples: Importance Estimation • Assumption: We have training inputs and test inputs . • Naïve approach: Estimate and separately, and take the ratio of the density estimates • This does not work well since density estimation is hard in high dimensions.

Vapnik’s Principle When solving a problem, more difficult problems shouldn’t be solved. • Directly estimating the ratio is easier than estimating the densities! (e.g., support vector machines) Knowing densities Knowing ratio

Modeling Importance Function • Use a linear importance model: • Test density is approximated by • Idea: Learn so that well approximates .

Kullback-Leibler Divergence (constant) (relevant)

Learning Importance Function • Thus • Since is density, (objective function) (constraint)

KLIEP (Kullback-LeiblerImportance Estimation Procedure) (Sugiyama et al., NIPS2007) • Convexity: unique global solution is available • Sparse solution: prediction is fast!

Experiments: Setup • Input distributions: standard Gaussian with • Training: mean (0,0,…,0) • Test: mean (1,0,…,0) • Kernel density estimation (KDE): • Separately estimate training and test input densities. • Gaussian kernel width is chosen by likelihood cross-validation. • KLIEP • Gaussian kernel width is chosen by likelihood cross-validation

Experimental Results • KDE:Error increases as dim grows • KLIEP: Error remains small for large dim KDE Normalized MSE KLIEP dim

Ensemble Methods (Fan and Davidson’07) Averaging of estimated class probabilities weighted by posterior Posterior weighting Integration Over Model Space Class Probability Removes model uncertainty by averaging

How to Use Them • Estimate “joint probability” P(x,y) instead of just conditional probability, i.e., • P(x,y) = P(y|x)P(x) • Makes no difference use 1 model, but Multiple models

Examples of How This Works • P1(+|x) = 0.8 and P2(+|x) = 0.4 • P1(-|x) = 0.2 and P2(-|x) = 0.6 • model averaging, • P(+|x) = (0.8 + 0.4) / 2 = 0.6 • P(-|x) = (0.2 + 0.6)/2 = 0.4 • Prediction will be –

But if there are two P(x) models, with probability 0.05 and 0.4 • Then • P(+,x) = 0.05 * 0.8 + 0.4 * 0.4 = 0.2 • P(-,x) = 0.05 * 0.2 + 0.4 * 0.6 = 0.25 • Recall with model averaging: • P(+|x) = 0.6 and P(-|x)=0.4 • Prediction is + • But, now the prediction will be – instead of + • Key Idea: • Unlabeled examples can be used as “weights” to re-weight the models.

Structure Discovery (Ren et al’08) Structural Discovery Original Dataset Structural Re-balancing Corrected Dataset

Active Learning • Quality of learned functions depends on training input location . • Goal: optimize training input location Good input location Poor input location Target Learned

Challenges • Generalization error is unknown and needs to be estimated. • In experiment design, we do not have training output valuesyet. • Thus we cannot use, e.g., cross-validationwhich requires . • Only training input positions can be used in generalization error estimation!

Agnostic Setup • The model is not correctin practice. • Then OLS is not consistent. • Standard “experiment design” method does not work! (Fedorov 1972; Cohn et al., JAIR1996)

Bias Reduction byImportance-Weighted LS (IWLS) (Wiens JSPI2001; Kanamori & Shimodaira JSPI2003; Sugiyama JMLR2006) • The use of IWLS mitigates the problem of in consistency under agnostic setup. • Importance is known in active learning setup since is designed by us! Importance