Association Graphs

340 likes | 640 Views

Association Graphs. Selim Mimaroglu University of Massachusetts Boston. This Presentation is Built On:. A Graph-Based Approach for Discovering Various Types of Association Rules ( MAIN) by Show-Jane Yen and A.L.P. Chen at IEEE Transactions on Data and Knowledge Engineering 2001

Association Graphs

E N D

Presentation Transcript

Association Graphs Selim Mimaroglu University of Massachusetts Boston

This Presentation is Built On: • A Graph-Based Approach for Discovering Various Types of Association Rules (MAIN)by Show-Jane Yen and A.L.P. Chen at IEEE Transactions on Data and Knowledge Engineering 2001 • Mining Multiple-Level Association Rules from Large Databases by Jiawei Han and Yongjian Fu at VLDB ’95 • Mining Multilevel Association Rules from Transaction Databases section 6.3 ISBN 1558604898 • Mining Generalized Association Rules by R. Srikant, and R. Agrawal at VLDB ’95 • Introduction to Data Mining by Tan, Steinbach, Kumar ISBN:0321321367

Organization • Primitive Association Rules • Definition • Association Graphs for finding large (frequent) item sets • Multiple-Level Association Rules • Definition • Why it’s important? • Association Graphs for finding large item sets • Interest Measure • Generalized Association Rules • Definition • Association Graphs for finding large item sets

Association Rules • Discovering patterns from a large database (generally data warehouse) is computationally expensive • To find all the rules that satisfy minsupport and minconf • X → Y • Transactions in the database contain the items in X tend also contain the items in Y.

Definitions • Let I={i1, i2, …, id} be set of all items in a market basked data • Let T={t1, t2, ..tN} be the set of all transactions • A collection of items is termed itemset

Definitions Let X and Y be two disjoint itemsets • Support Count of X • Support of X → Y • Confidence of X → Y

Taxonomy (or Concept Hierarchy) • This is created by the domain expert, e.g. store manager. • There may be more than one taxonomy for the same items • is-a relation • “Folgers Coffee Classic Roast Singles - 19 ct”(barcode 7890045) is-a “Regular Ground Coffee” • “Regular Ground Coffee” is-a “Coffee” • “Coffee” is-a “Beverage” • “Beverage” is-a “General Grocery Item”

Taxonomy Example ……………………………………………………

Primitive Association Rules • Deals with the lowest level items of the taxonomy (most widely used) • Very well studied • Algorithms using Apriori principle • FP-Growth Algorithms • Steps • Large (frequent) itemset generation • Rule Generation

Frequent Itemset Generation (Apriori) Found to be Infrequent Pruned supersets

Many database scans for checking if the candidate itemsets are qualified (i.e. ≥ minsupport ) Reducing the database scans FP-Growth: 2 scans Association Graphs: 1 scan ? Motivation

Mining Primitive Association Rules Create Bit Vectors Number the Attributes A:1 D:4 B:2 E:5 C:3

This is a column store (as opposed to a row store) Read Efficient Row stores are write efficient For an item or itemset a 64 bit processor can count the support count of 64 rows in one instruction only Logical AND, OR minsupport = 50% (2 transactions) Is item 1 (column A) frequent ? Yes Bit Vectors (Column Store) Is the itemset {1, 3} frequent? Yes =

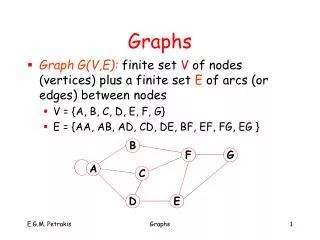

Association Graph Construction Property 1:The support for the itemset {i1, i2, …, ik} is the number of 1s in BVi1∧ BVi2 … ∧ BVik, where the notation “∧” is a logical AND operator ACG: Association Graph Construction:For every two large items i and j ( i < j), if the number of 1s in BVi∧ BVj achieves the user-specified minimum support, a directed edge from item i to item j is created (The Association Graph is a Directed Acyclic Graph. Proven in Appendix A). Also, itemset (i, j) is a large 2 - itemset

Example 1: 1 5 2 4 3

Lemma 1: If an itemset is not a large itemset, then any itemset which contains this itemset cannot be a large itemset (Proof: Apriori principle) Lemma 2: For a large itemset (i1, i2, …, ik), if there is no directed edge from ik to an item v, then itemset (i1, i2, …, ik, v) cannot be a large itemset Proof of Lemma 2: If there is not an edge from ik to v then (ik, v) is not a large itemset. If (ik, v) is not a large itemset then none of the supersets of (ik, v) can be a large itemset (e.g. (i1, i2, …, ik, v) isn’t a large itemset )

1 5 2 3 Finding All Large Itemsets by Using an Association Graph • Suppose (i1, i2, …, ik) is a large k-itemset. If there is no directed edge from ik to v then the itemset need not be extended into k+1-itemset because (i1, i2, …, ik, v) must not be a large itemset according to Lemma 2 • If there is a directed edge from ik to u, then the itemset (i1, i2, …, ik) is extended to k+1-itemset (i1, i2, …, ik, u). The itemset (i1, i2, …, ik, u) is a large k+1 itemset if the number of 1s in BVi1∧ BVi2 … ∧ BVik ∧ BViu achieves the minsupport Fig 1: Association Graph

Large 2-itemsets: (1, 3) (2, 3) (2, 5) (3,5) minsupport : 50% (2 rows) Example 2: Finding all large itemsets by using the Association Graph of Example1 1 5 2 3 Fig 1: Association Graph

(1, 3, 5) (2, 3, 5) Large 3-itemset Generation = = 1 5 2 3

1 5 2 3 Is it really 1 (one) scan? • Creating an Association Graph may be computationally expensive • This really is not 1 scan only. • Bit Vectors make reading extremely efficient. • This is almost as good as it gets Fig 2: An itemset lattice (at level 2) Fig 1: An Association Graph

Why Multilevel Association Rules? • Support at the lower concept levels is low • You can miss the association rules at higher levels (bigger picture) • Support: • Uniform support for all levels • Reduced minimum support at lower levels • Level by level independent • Other approaches

Replace items/concepts at level k with the concepts at level k-1 Apply an Association Rules Algorithm at the items at level k-1 Mining Multiple level Association Rules computer desktop laptop IBM Dell Sony Toshiba

For level 4 Generate Association Graphs on this table Upgrade the items at Table 1 to level 3 (by looking at the taxonomy tree) Example 3 for Multilevel Associations Table 2: Market basket data (at level 3) Table 1: Market basket data (at lowest level taxonomy: level 4)

Redundant Multilevel Rules desktop computer → b/w printer [support=8%, confidence = 70%] (R1) IBM desktop computer → b/w printer [support=2%, confidence=72%] (R2) • A rule is interesting if: • it doesn’t have a parent rule • it can’t be deduced from its parent rule Suppose that one quarter of all “desktop computers” are “IBM desktop computers”, so we expect following • Confidence of R2 shall be around 70% • Support of R2 shall be around 8% * ¼ = 2% R2 is redundant (uninteresting) because it does not convey any additional information All redundant rules shall be pruned

Mining Generalized Association Rules all computer software printer computer accessory desktop laptop educational financial management color b/w wrist pad mouse IBM Dell Sony Toshiba Microsoft … … HP … Sony … Ergoway Logitech

Rule Generation • To generate generalized association patterns one can add all ancestors of each item and then apply the basic algorithm • {IBM desktop computer, Sony b/w printer} • {IBM desktop computer, desktop computer, computer, Sony b/w printer, b/w printer, printer} • This way works, but inefficient • If {IBM desktop computer} is a large itemset then • {IBM desktop computer, desktop computer}, {IBM desktop computer, computer} and {desktop computer, computer} are large itemsets but redundant

Definition • A generalized association rule is an implication of the form X→Y, where X⊂I, Y ⊂I, X∩Y=∅, and no item in Y is an ancestor of any item in X. • X →ancestor(X) is trivially true with 100% confidence and hence redundant

Support for an item and its ancestor Lemma 3: The support for an itemset X that contains an item xi and its ancestor yi will be the same as the support for the itemset X- yi Proof of Lemma 3: X={x1, ..., xi, yi,…, xn} The support for the itemset X is: BV x1∧ … ∧ BV xi∧ BV yi …∧ BV xn Note that: BV xi = BV xi∧ BV yi The support of X becomes as the support of X- yi BV x1∧ … ∧ BV xi∧ …∧ BV xn

Number the items using the Post Order Numbering method (PON) Lemma 4:For every two items i and j (i<j), item v is an ancestor of item i, but not an ancestor of item j , then v<j Post Order Numbering An example of concept hierarchy

Support for an ancestor • Lemma 5: Suppose items i1, i2, …, and im are all specific descendants of the generalized item in. The bit vector BV in associated with item in is BV i1∨BV i2 ∨ …… ∨BV im and the number of 1s in this bit vector is the support for item in where ∨ stands for logical OR operation. • Lemma 6: If an itemset X is large itemset, then any itemset generated by replacing an item in itemset X with its ancestor is also a large itemset

Creating the Generalized Association Graph • Lemma 7: if (the number of 1s in BVi∧BVj)≥ minsupport, then for each ancestor u of item i and for each ancestor v of item j, (the number of 1s in BVu∧BVj)≥minsupport and (the number of 1s in BVi∧BVv)≥minsupport From Lemma 7, if an edge from item i to item j is created, the edges from item i to the ancestors of item j, which are not ancestors of item i, are also created From Lemma 4, the ancestors of item i, which are not ancestors of item j, are all less than j. Hence if an edge from item i to item j is created, the edges from the ancestors of item i, which are not ancestors of j, to item j are also created (A. Graph Construction Pr.)

Example of Generalized Association Rule with minsupport 40% (2 transactions) The generalized Association Graph

Finally Theorem 1: Any itemset generated by traversing the Generalized Association Graph (GAG) will not contain both an item and its ancestor Proof of Theorem 1: • Basis of Induction: In GAG there will be no edge between an item and its ancestor. Therefore all 2-itemsets are free of ancestors • Inductive Hypothesis: We assume that any large k-itemset (i1, i2, …, ik) does not contain both an item and its ancestor • Inductive Step: Large k-itemset (i1, i2, …, ik) is extended to k+1-itemset (i1, i2, …, ik,, w). Suppose v1, v2, …, vk-1 are ancestors of i1, i2, …, ik-1 respectively, but none are ancestors of item ik. Because items are numbered by PON method, ik> vj (1≤ j ≤ k-1). Hence, there are no edges from item ik to the ancestors of items i1, i2, …, ik. So, item w cannot be an ancestor of item i1, i2, …, ik