Uintah Runtime System Tutorial

600 likes | 882 Views

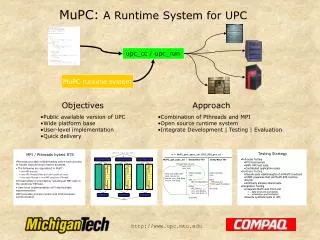


www.uintah.utah.edu Uintah Runtime System Tutorial • Outline of Runtime System • How Uintah achieves parallelism • Uintah memory management • Adaptive mesh refinement and load balancing • Executing tasks: Task Graph and Scheduler • Heterogeneous architectures: GPUs & Intel MIC. Alan Humphrey, Justin Luitjens*, Dav de St. Germain and Martin Berzins (*NVIDIA, here as an ex-Uintah researcher/developer). DOE ASCI (97-10), NSF, DOE NETL+NNSA, ARL, NSF, INCITE, XSEDE

Uintah Runtime System: How Uintah Runs Tasks • Memory Manager: Uintah Data Warehouse (DW) • Variable dictionary (hashed map from: Variable Name, Patch ID, Material ID keys to memory) • Provide interfaces for tasks to • Allocate variables • Put variables into DW • Get variables from DW • Automatic Scrubbing (garbage collection) • Checkpointing & Restart (data archiver) • Task Manager: Uintah schedulers • Decides when and where to run tasks • Decides when to process MPI
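The variable dictionary and scrubbing described on this slide can be pictured with a minimal Python sketch. This is an illustration only, not Uintah's implementation: the class name `DataWarehouse`, the `readers` argument, and the reader-count scrubbing rule are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class DataWarehouse:
    """Toy variable dictionary keyed by (variable name, patch ID, material ID)."""

    _store: dict = field(default_factory=dict)
    _readers_left: dict = field(default_factory=dict)

    def put(self, name, patch, material, data, readers=1):
        # 'readers' (hypothetical) is the number of tasks expected to get() this variable.
        key = (name, patch, material)
        self._store[key] = data
        self._readers_left[key] = readers

    def get(self, name, patch, material):
        key = (name, patch, material)
        data = self._store[key]
        self._readers_left[key] -= 1
        if self._readers_left[key] == 0:
            # Scrub: the last expected reader has consumed the variable.
            del self._store[key]
            del self._readers_left[key]
        return data

    def __contains__(self, key):
        return key in self._store


dw = DataWarehouse()
dw.put("pressure", patch=3, material=0, data=[1.0, 2.0], readers=2)
first = dw.get("pressure", 3, 0)
still_there = ("pressure", 3, 0) in dw   # one reader is still outstanding
second = dw.get("pressure", 3, 0)
scrubbed = ("pressure", 3, 0) not in dw  # removed after the last read
```

Keying on the full (name, patch, material) triple is what lets a task ask for a variable without knowing where or when it was produced.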

Uintah Architecture [Figure: architecture diagram. Labels: XML, Task Graph Compilation, Run Time (each timestep), Runtime System, Calculate Residuals, Solve Equations, Parallel I/O, ViSUS, PIDX, VisIt.]

How Does Uintah Work • Patch-based domain decomposition • Distribute patches to processors • Adaptive mesh refinement • Forecast load balancing • Task-graph specification • Contains no processor or domain information • Each task declares the variables it requires and computes, not which tasks run before or after it • Compiled by the scheduler

Uintah Parallelism (Data) • Structured grid (flows) + particle system (solids) • Patch-based domain decomposition • Adaptive mesh refinement • Dynamic load balancing [1] • Profiling + forecasting model • Parallel space-filling curves • Data migration. [1] J. Luitjens, M. Berzins. “Improving the Performance of Uintah: A Large-Scale Adaptive Meshing Computational Framework,” IPDPS'10.

Uintah Parallelism (Task) • Abstract specification of user codes as tasks • Uintah task: serial code, without MPI or threads, on a generic patch • Requires variable(s), with ghost cells • Computes variable(s) • Call-back function • Separation of user code and parallelism • Framework automatically generates MPI messages • Easy to switch or combine multiple algorithms [Figure: inputs, callback functions, outputs]
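To illustrate how an execution order can follow from the requires/computes declarations alone, here is a minimal Python sketch. The task and variable names are hypothetical, and the standard-library `graphlib` module (Python 3.9+) stands in for the scheduler's graph compilation; Uintah's actual compilation also handles patches, ghost cells and MPI.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical tasks: each lists only the variables it requires and computes.
tasks = {
    "interpolate": {"requires": ["particle_mass"], "computes": ["grid_mass"]},
    "solve": {"requires": ["grid_mass"], "computes": ["grid_velocity"]},
    "advect": {"requires": ["grid_velocity", "particle_mass"],
               "computes": ["particle_position"]},
}


def compile_task_graph(tasks):
    """Map each task to the set of tasks that compute a variable it requires."""
    producer = {var: name for name, t in tasks.items() for var in t["computes"]}
    return {
        name: {producer[v] for v in t["requires"] if v in producer}
        for name, t in tasks.items()
    }


graph = compile_task_graph(tasks)
order = list(TopologicalSorter(graph).static_order())
```

No task names another task; the edges come entirely from matching a required variable to the task that computes it. Variables with no producer in the dictionary (here `particle_mass`) are treated as already available.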

OnDemandDataWarehouse • Directory-based hash map • A single version of each variable (v) for in-order execution • Variable versioning (v1, v2, v3) for out-of-order execution [Figure: variable versions; labels C, R, M]

Uintah: Parallelism & Scaling • Patch-based domain decomposition • Asynchronous task-based paradigm • Task: serial code on a generic “patch” • Task specifies desired halo region • Clear separation of user code from parallelism/runtime • Uintah infrastructure provides: automatic MPI message generation, load balancing, particle relocation, checkpointing & restart • Existing components scale from 5K to 500K cores without changing any user code [Figure: strong scaling on ALCF Mira and OLCF Titan for a fluid-structure interaction problem using the MPMICE algorithm with AMR]

Uintah: Task-Graph Approach • Task Graph: Directed Acyclic Graph (DAG) • Task – basic unit of work • C++ method with computation (user written callback) • Asynchronous, dynamic, out of order execution of tasks - key idea • Overlap communication & computation • Allows Uintah to be generalized to support accelerators • GPU extension is realized without massive, sweeping code changes • Infrastructure handles device API details • Provides convenient GPU APIs • User writes only GPU kernels for appropriate CPU tasks

Why Does Dynamic Execution of Directed Acyclic Graphs Work So Well? • Eliminates spurious synchronization points • Multiple task graphs across multicore (+GPU) nodes: parallel slackness • Overlaps communication with computation by executing tasks as they become available, which avoids waiting (out-of-order execution) • Load balances complex workloads by having a sufficiently rich mix of tasks per multicore node that load balancing is done per node (not per core) • Data flows specify the order of execution without synchronization [Figure: timelines comparing the bulk synchronous approach with DAG-based dynamic scheduling, showing the time saved]

Scalable AMR Regridding Algorithm: Berger-Rigoutsos • Recursively split patches based on histograms • Histogram creation requires all-gathers: O(Processors) • Complex and does not parallelize well • Irregular patch sets • Two versions: version 2 uses fewer cores in forming the histogram

AMR regridding algorithm (Berger-Rigoutsos) (1) Tag cells where refinement is needed. (2) Create a box to enclose the tagged cells. (3) Split the box along its long direction based on a histogram of tagged cells; this step is repeated at least log(B) times, where B is the number of patches. (4) Fit new boxes to each split box and repeat the steps as needed. [Figure: tagged points in an initial box, with the number of points in each column; the box is split in two and the process is repeated on the two new boxes.]
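A single pass of steps (2) to (4) can be sketched in Python as follows. This is a simplified, serial illustration in 2D: the cut is placed at the interior histogram bin with the fewest tagged cells, which is an assumption made for brevity and not the full Berger-Rigoutsos cut selection. The function names and the sample tags are hypothetical.

```python
def bounding_box(cells):
    """Smallest inclusive box (x0, y0, x1, y1) enclosing the given cells."""
    xs = [x for x, _ in cells]
    ys = [y for _, y in cells]
    return (min(xs), min(ys), max(xs), max(ys))


def split_once(tagged):
    """Histogram the tags along the long direction and split the box once."""
    x0, y0, x1, y1 = bounding_box(tagged)
    axis = 0 if (x1 - x0) >= (y1 - y0) else 1  # long direction of the box
    lo, hi = (x0, x1) if axis == 0 else (y0, y1)
    hist = [0] * (hi - lo + 1)
    for cell in tagged:
        hist[cell[axis] - lo] += 1
    if len(hist) < 3:
        return hist, [bounding_box(tagged)]  # too thin to split
    # Simplified rule (assumption): cut at the emptiest interior bin.
    cut = lo + min(range(1, len(hist) - 1), key=lambda i: hist[i])
    left = [c for c in tagged if c[axis] < cut]
    right = [c for c in tagged if c[axis] >= cut]
    # Step (4): fit a new box to each half.
    return hist, [bounding_box(left), bounding_box(right)]


tagged = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 0), (5, 1), (6, 0), (6, 1)]
hist, boxes = split_once(tagged)
```

In the global version of the algorithm, building `hist` is the step that needs contributions from every processor, which is where the all-gather cost comes from.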

Berger-Rigoutsos Communication Costs • Every processor has to pass its part of the histogram to every other processor so that the partitioning decision can be made • This is done using the MPI_Allgather function; the cost of an allgather on P processors (cores) is log(P) • Version 1: (2B-1) log(P) messages • Version 2: only certain cores take part [Gunney et al.]: 2P - log(P) - 2 messages • Alternative: use Berger-Rigoutsos locally on each processor
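As a worked example of the two message-count formulas, the sketch below evaluates them for one hypothetical configuration. The slide does not state the base of the logarithm; base 2 is assumed here, and the values B = 1000 and P = 1024 are chosen only for illustration.

```python
import math


def messages_v1(B, P):
    """Version 1: (2B - 1) log(P) messages, with log taken base 2 (assumption)."""
    return (2 * B - 1) * math.log2(P)


def messages_v2(P):
    """Version 2: 2P - log(P) - 2 messages, with log taken base 2 (assumption)."""
    return 2 * P - math.log2(P) - 2


v1 = messages_v1(B=1000, P=1024)  # (2*1000 - 1) * 10
v2 = messages_v2(P=1024)          # 2*1024 - 10 - 2
```

Version 1 grows with the number of patches B, while version 2 depends only on the core count P.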

Scalable AMR Regridding Algorithm: Tiled Algorithm • Tiles that contain flags become patches • Simple and easy to parallelize • Semi-regular patch sets that can be exploited (example: neighbor finding) • Each core processes a subset of the refinement flags in parallel, which helps produce the global patch set [Analysis to appear in Concurrency]
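The tiled rule is simple enough to sketch in a few lines of Python. This is a minimal 2D illustration under assumed names (`tiled_regrid`, `merge_per_core`) and an assumed fixed tile size; the per-core loop stands in for work that would run in parallel.

```python
def tiled_regrid(flags, tile):
    """Return the lower corners of every tile that contains at least one flag."""
    return sorted({(x // tile * tile, y // tile * tile) for x, y in flags})


def merge_per_core(flag_subsets, tile):
    """Each core handles its own subset of flags; the union is the global patch set."""
    patches = set()
    for subset in flag_subsets:  # stands in for independent per-core work
        patches.update(tiled_regrid(subset, tile))
    return sorted(patches)


flags = [(1, 1), (2, 3), (9, 1), (10, 10)]
patches = tiled_regrid(flags, tile=4)
merged = merge_per_core([flags[:2], flags[2:]], tile=4)
```

Because each flag maps to its tile independently of every other flag, no histogram or global decision is needed, only a final union of the per-core results.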

Example: Mesh Refinement Around a Circular Front [Figure panels: Global Berger-Rigoutsos, Local Berger-Rigoutsos, Tiled Algorithm] More patches are generated by the tiled algorithm, but there is no need to split them to load balance

Theoretical Models of Refinement Algorithms • $C$ = number of mesh cells in the domain • $F$ = number of refinement flags in the domain • $B$ = number of mesh patches in the domain • $B_c$ = number of coarse mesh patches in the domain • $P$ = number of processing cores • $M$ = number of messages • $T_{\mathrm{GBRv1}} = c_1 (F/P) \log(B) + c_2 M(\mathrm{GBRv1})$ • $T_{\mathrm{LBR}} = c_4 (F/P) \log(B/B_c)$ • $T_{\mathrm{Tiled}} = c_5 \, C/P$ • $T_{\mathrm{Split}} = c_6 \, B/P + c_7 \log(P)$, the time to split large patches

Strong Scaling of AMR Algorithms • GBR is Global Berger-Rigoutsos • Strong scaling: the problem size is fixed, so execution time should decrease as the number of cores increases • $T_{\mathrm{GBRv1}} = c_1 (F/P) \log(B) + c_2 M(\mathrm{GBRv1})$ • $T_{\mathrm{Tiled}} = c_5 \, C/P$ • LBR = GBR on a compute node [Figure: dots are data and lines are models; regions labeled “No strong scaling” and “Strong scaling”]

Performance Improvements • Improve algorithmic complexity: identify and eliminate O(Processors) and O(Patches) costs via TAU, hand profiling and memory usage analysis • Improve task graph execution: out-of-order execution of the task graph • Improve load balance: cost estimation algorithms based on data assimilation; load balancing algorithms based on patches and a new fast space-filling curve algorithm • Hybrid model using message passing between nodes and asynchronous threaded execution of tasks on cores [Figure: partial ICE task graph]

Load Balancing Weight Estimation • Need to assign the same workload to each processor • Algorithmic cost models, based on the discretization method: vary according to simulation and machine; require accurate information from the user • Time series analysis, used to forecast the execution time on each patch: automatically adjusts to the simulation and architecture with no user interaction • Simple exponential smoothing: $E_{r,t+1} = \alpha O_{r,t} + (1-\alpha) E_{r,t} = \alpha (O_{r,t} - E_{r,t}) + E_{r,t}$, where $E_{r,t}$ is the estimated time, $O_{r,t}$ the observed time, $\alpha$ the decay rate, and $O_{r,t} - E_{r,t}$ the error in the last prediction
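The exponential smoothing update on this slide can be written as a short Python sketch. The function name `forecast`, the initial estimate and the sample timings are assumptions for illustration; only the update rule itself comes from the slide.

```python
def forecast(observed, alpha, initial):
    """Apply E[t+1] = E[t] + alpha * (O[t] - E[t]) over a series of observed times."""
    estimate = initial
    history = []
    for o in observed:
        # The correction is proportional to the error in the last prediction.
        estimate = estimate + alpha * (o - estimate)
        history.append(estimate)
    return history


# Hypothetical per-patch timings: steady at 10, then the cost doubles.
estimates = forecast([10.0, 10.0, 20.0], alpha=0.5, initial=10.0)
```

A larger decay rate `alpha` makes the forecast follow recent observations more closely; a smaller one smooths out noise but reacts more slowly when a patch's cost changes.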

[Figure: comparison between the Forecast Cost Model (FCM) and the Algorithmic Cost Model for a particles + fluid code, full simulation]

Uintah Task Scheduler (De-centralized) • One MPI Process per Multicore node (MPI + Pthreads) • All threads access task queues and process their own MPI • Tasks for one patch may run on different cores • Shared data warehouse and task queues per multicore node • Lock-free data warehouse enables fast mem access to all cores
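The idea of several threads on one node pulling ready tasks from a shared queue can be sketched in Python. This is a toy illustration only: it uses a lock and the standard `queue` and `threading` modules, has no MPI, and assumes the dependency graph is acyclic. The function name `run_dag` and the example graph are hypothetical, and Uintah's own scheduler is described on these slides as using lock-free structures instead.

```python
import queue
import threading


def run_dag(deps, work, n_threads=4):
    """Run work(task) for every task, starting each once its dependencies finish."""
    if not deps:
        return []
    remaining = {t: len(d) for t, d in deps.items()}
    dependents = {t: [] for t in deps}
    for t, d in deps.items():
        for parent in d:
            dependents[parent].append(t)
    ready = queue.Queue()  # shared by all worker threads
    for t, n in remaining.items():
        if n == 0:
            ready.put(t)
    lock = threading.Lock()
    finished = []

    def worker():
        while True:
            task = ready.get()
            if task is None:  # sentinel: all tasks are done
                return
            work(task)
            with lock:
                finished.append(task)
                for child in dependents[task]:
                    remaining[child] -= 1
                    if remaining[child] == 0:
                        ready.put(child)  # child became ready
                if len(finished) == len(deps):
                    for _ in range(n_threads):
                        ready.put(None)

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return finished


deps = {"a": [], "b": ["a"], "c": ["a"], "d": ["b", "c"]}
finished = run_dag(deps, work=lambda task: None)
```

Tasks `b` and `c` may complete in either order, which is the out-of-order freedom the scheduler exploits; only the dependency constraints are fixed.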

Uintah Runtime System [Figure: runtime data flow on one node. Data management: Data Warehouse (one per node), accessed by tasks through get and put. Execution layer (runs on multiple cores): each thread selects a task from the task graph and posts its MPI receives (MPI_Irecv, internal task queue, comm records); checks the records to find ready tasks (MPI_Test, external task queue); selects and executes a task; posts the task's MPI sends (MPI_Isend). Shared objects (one per node). Network.]

New Hybrid Model Memory Savings: Ghost Cells • MPI: raw data 49152 doubles, MPI buffer 28416 doubles, total 75K doubles • Thread/MPI: raw data 31360 doubles, MPI buffer 10624 doubles, total 40K doubles • Example on Kraken, 12 cores/node, 98K cores: 11% of memory needed [Figure: local patch and ghost cells]
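The slide does not say how its figures were obtained. One configuration that reproduces them exactly is sketched below in Python, and every parameter in it is an assumption: 12 patches of 12^3 cells per node arranged 2 x 2 x 3, 2 layers of ghost cells, one double per cell, and an MPI buffer equal in size to the ghost region.

```python
def doubles_per_node(patch, ghost, layout):
    """Count doubles for one variable on one node under the assumptions above."""
    n_patches = layout[0] * layout[1] * layout[2]
    interior = n_patches * patch ** 3
    # MPI-only: every patch carries its own ghost layers.
    mpi_raw = n_patches * (patch + 2 * ghost) ** 3
    # Thread/MPI: patches on a node share memory, so ghost layers are only
    # needed around the outside of the whole block of patches.
    hybrid_raw = 1
    for n in layout:
        hybrid_raw *= n * patch + 2 * ghost
    return {
        "mpi_raw": mpi_raw,
        "mpi_buffer": mpi_raw - interior,
        "hybrid_raw": hybrid_raw,
        "hybrid_buffer": hybrid_raw - interior,
    }


counts = doubles_per_node(patch=12, ghost=2, layout=(2, 2, 3))
```

Under these assumptions the saving comes entirely from ghost cells on faces between patches that live on the same node, which no longer need separate storage or MPI buffers.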

Memory Savings • Global meta-data copies • 60 bytes, or 7.5 doubles, per patch • One copy per core (MPI) vs. one copy per node (Thread/MPI) • MPI library buffer overhead • Results: AMRICE simulation of the transport of two fluids with a prescribed initial velocity of Mach two: 435 million cells, strong scaling runs on Kraken

Lock-Free Data Structures • Global scalability depends on the details of the nodal run-time system • The change from Jaguar to Titan (more and faster cores, faster communications) broke our runtime system, which had previously worked fine with locks • Using the atomic instruction set • Variable reference counting: fetch_and_add, fetch_and_sub, compare_and_swap; both read and write simultaneously • Data warehouse: redesigned variable container • Update: compare_and_swap • Reduce: test_and_set

Scalability Improvement • Original dynamic MPI-only scheduler: out of memory on 98K cores, severe load imbalance • De-centralized MPI/thread hybrid scheduler (with lock-free data warehouse) and new threaded runtime system: achieved much better CPU scalability

Schedule Global Sync Task • Synchronization task • Update global variable, e.g. MPI_Allreduce • Call third-party library, e.g. PETSc, hypre • Out-of-order issues • Deadlock • Load imbalance • Task phases • One global task per phase • Global task runs last • Out-of-order execution within a phase [Figure: global tasks R1 and R2 executed in different orders on different processes, illustrating deadlock and load imbalance]

Multi-threaded Task Graph Execution • 1) Static: predetermined order • Tasks are synchronized • Higher waiting times • 2) Dynamic: execute when ready • Tasks are asynchronous • Lower waiting times • 3) Dynamic multi-threaded • Task-level parallelism • Memory saving • Decreases communication and load imbalance • Multicore friendly • Support GPU tasks (future) [Figure: task dependency and execution order]

Scalability is at least partially achieved by not executing tasks in order, e.g. for AMR fluid-structure interaction. [Figure: the straight line represents the given order of tasks; a green X shows when a task is actually executed. Above the line means late execution, below the line means early execution.] There are more “late” tasks than “early” ones. For example, tasks given in the order 1 2 3 4 5 may execute in the order 1 4 2 3 5.
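The early/late classification can be made concrete with a small Python sketch. It assumes the two sequences on the slide are the given order (1 2 3 4 5) followed by the actual execution order (1 4 2 3 5); the function name `classify` is hypothetical.

```python
def classify(given, executed):
    """Label each task by comparing its given position with its executed position."""
    slot = {task: i for i, task in enumerate(executed)}
    labels = {}
    for i, task in enumerate(given):
        if slot[task] < i:
            labels[task] = "early"
        elif slot[task] > i:
            labels[task] = "late"
        else:
            labels[task] = "on time"
    return labels


labels = classify(given=[1, 2, 3, 4, 5], executed=[1, 4, 2, 3, 5])
n_early = sum(1 for v in labels.values() if v == "early")
n_late = sum(1 for v in labels.values() if v == "late")
```

Moving one task (task 4) forward pushes two others (tasks 2 and 3) back by a slot each, which is why late tasks outnumber early ones in this example.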

Uintah Runtime System: GPUs

When extending a general computational framework with over 800K lines of code to GPUs, where to start? Uintah's asynchronous task-based approach makes this surprisingly manageable.

NVIDIA Fermi Outline • Host memory to device memory is at most 8 GB/second, BUT … • Device memory to cores is 144 GB/second, with less than half of this being achieved on memory-bound applications (BLAS level 1, 2) • 40 GFlops

Hiding PCIe Latency • NVIDIA CUDA asynchronous API • Asynchronous functions provide: • Memcopies asynchronous with the CPU • Concurrent execution of a memcopy with kernel(s) • Stream: a sequence of operations that execute in order on the GPU • Operations from different streams can be interleaved [Figure: timelines of data transfer and kernel execution for normal and page-locked memory]

GPU Task and Data Management • Framework manages data movement between host and device • Use CUDA asynchronous API • Automatically generate CUDA streams for task dependencies • Concurrently execute kernels and memory copies • Preload data before the task kernel executes • Multi-GPU support [Figure: existing host memory is pinned with cudaHostRegister(); hostRequires is copied to devRequires with cudaMemcpyAsync(H2D); the call-back is executed on the device (kernel run) and the computation produces devComputes; an automatic D2H copy with cudaMemcpyAsync(D2H) places the result back on the host in hostComputes (page-locked buffer); the pinned host memory is then freed]

Multistage Task Queue Architecture • Overlap computation with PCIe transfers and MPI communication • Uintah can “pre-load” GPU task data • Scheduler queries the task graph for a task's data requirements • Migrate data dependencies to the GPU and backfill until ready

GPU DataWarehouse • Automatic, on-demand variable movement to and from the device • Implemented interfaces for both CPU and GPU tasks [Figure: on the host, CPU tasks call dw.get() and dw.put() on a hash map, alongside MPI buffers; on the device, the GPU task uses a flat array; async H2D and async D2H copies connect host and device]

Performance and Scaling Comparisons • Uintah strong scaling results when using: • Multi-threaded MPI • Multi-threaded MPI w/ GPU • GPU-enabled RMCRT • All-to-all communication • 100 rays per cell, $128^3$ cells • Need to port multi-level CPU tasks to GPU • Cray XK7, DOE Titan • Thread/MPI: N=16 AMD Opteron CPU cores • Thread/MPI+GPU: N=16 AMD Opteron CPU cores + 1 NVIDIA K20 GPU

Uintah Runtime System: Xeon Phi (Intel MIC)

Uintah on Stampede: Host-only Model • Incompressible turbulent flow • Intel MPI issues beyond 2048 cores (seg faults) • MVAPICH2 required for larger core counts • Using hypre with a conjugate gradient solver, preconditioned with geometric multigrid • Red-black Gauss-Seidel relaxation on each patch

Uintah on Stampede: Native Model • Compile with -mmic (cross compiling) • Need to build all third-party libraries required by Uintah* • libxml2 • zlib • Uintah ran natively on Xeon Phi within 1 day • Single Xeon Phi card. *During early access to TACC Stampede

Uintah on Stampede: Offload Model • Use compiler directives (#pragma) • Offload target: #pragma offload target(mic:0) • OpenMP: #pragma omp parallel • Find copy in/out variables from the task graph • Functions called on the MIC must be defined with __attribute__((target(mic))) • Hard for Uintah to use offload mode • Requires rewriting highly templated C++ methods in simple C/C++ so they can be called on the Xeon Phi • Less effort than the GPU port, but still significant work for complex code such as Uintah

Uintah on Stampede: Symmetric Model • Xeon Phi directly calls MPI • Use Pthreads on both host CPU and Xeon Phi: • 1 MPI process on host: 16 threads • 1 MPI process on MIC: up to 120 threads • Currently only Intel MPI supported: mpiexec.hydra -n 8 ./sus -nthreads 16 : -n 8 ./sus.mic -nthreads 120 • Challenges: different floating point accuracy on host and co-processor • Result consistency • MPI message mismatch issue: control-related FP operations

Example: Symmetric Model FP Issue • p = 0.421874999999999944488848768742172978818416595458984375 • c = 0.0026041666666666665221063770019327421323396265506744384765625 • b = p/c • Host-only model: Rank0 (CPU) and Rank1 (CPU) both compute b = 162, so MPI is OK • Symmetric model: Rank0 (CPU) computes b = 162 but Rank1 (MIC) computes b = 161.99999999999999, giving an MPI size mismatch • Control-related FP operations must use a consistent accuracy model [Figure: cells 161 162 163 164 on each rank]
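The sensitivity in this example can be reproduced on an ordinary IEEE double-precision host with a short Python sketch. Two things are assumptions here: that `b` is truncated to an integer to size a message, and that the co-processor result is modeled as the largest double below 162 (the slide prints it as 161.99999999999999). The code does not run anything on a Xeon Phi.

```python
import math

p = 0.421874999999999944488848768742172978818416595458984375
c = 0.0026041666666666665221063770019327421323396265506744384765625

# Correctly rounded IEEE double division, as on the host CPU.
b_host = p / c

# Stand-in for a slightly less accurate division: the double just below 162.
# Doubles in [128, 256) are spaced 2**-45 apart, so this subtraction is exact.
b_lower = 162.0 - 2.0 ** -45

# If b sizes a message (assumption), truncation turns a one-ulp difference
# into a difference of one whole element.
size_host = math.floor(b_host)
size_lower = math.floor(b_lower)
```

A rank that computes 162 elements and a rank that computes 161 will post sends and receives of different sizes, which is the mismatch the slide reports.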

Scaling Results on Xeon Phi • Multiple MIC cards (symmetric model) • Xeon Phi card: 60 threads per MPI process, 2 MPI processes • Host CPU: 16 threads per MPI process, 1 MPI process • Issue: load imbalance, because the host and Xeon Phi profile differently

Summary • DAG abstraction is important for achieving scaling, and is a powerful abstraction for solving challenging engineering problems • Clear applicability to new architectures: accelerators and co-processors • Provides a convenient separation of problem structure from data and communication: application code vs. runtime • The layered approach is very important for not needing to change application code; it shields the application developer from the complexities of parallel programming • Scalability still requires engineering of the runtime system, and remains a challenge even with the DAG approach, which does work amazingly well • GPU development ongoing • The approach used here shows promise for very large core and GPU counts, but using these architectures is an exciting challenge • DSL approach very important for the future • Waiting for access to in-socket MICs (Knights Landing)